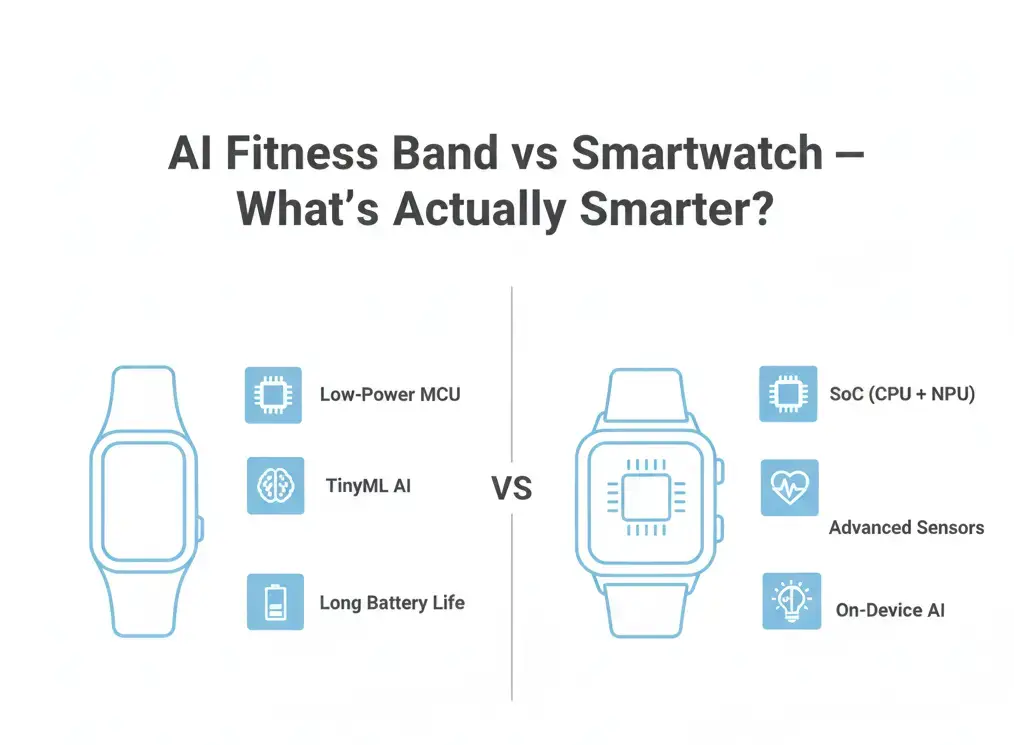

In the AI Fitness Bands vs Smartwatches comparison, smartwatches are generally more capable of executing complex on-device AI tasks thanks to more powerful System-in-Packages (SiPs), dedicated NPUs, larger memory pools, and richer sensor arrays. Fitness bands prioritize ultra-low power consumption and extended battery life, typically running simplified AI models locally while offloading heavier analytics to a connected smartphone. The difference in “smartness” is therefore rooted in hardware architecture, power budgets, and real-time processing capability, not just software frameworks.

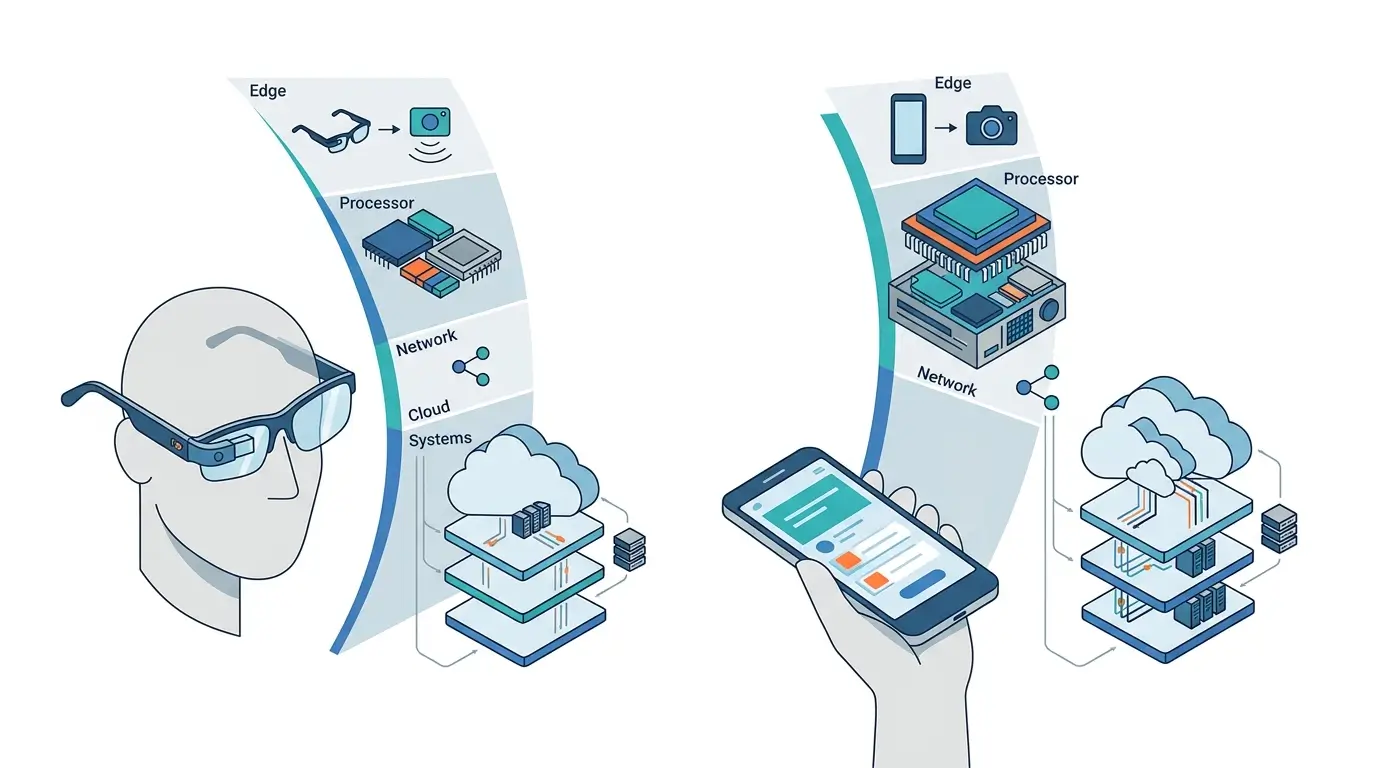

Artificial intelligence has become central to modern wearables. From sleep stage estimation to fall detection and voice commands, these devices increasingly rely on on-device AI inference rather than cloud-only processing.

However, compact form factors impose strict constraints on battery capacity, thermal dissipation, and memory bandwidth. Understanding how AI fitness bands and smartwatches differ at the hardware level, rather than just in their feature lists, is essential for determining what “smarter” actually means.

In wearable AI, intelligence is defined by:

- Power efficiency within thermal limits

- Silicon capability (CPU, DSP, NPU)

- Memory bandwidth and capacity

- Sensor fusion complexity

This architectural lens is essential when evaluating AI Fitness Bands vs Smartwatches, as differences in silicon design and power budgets directly shape real-world AI capability.

AI Fitness Bands vs Smartwatches: Hardware Context

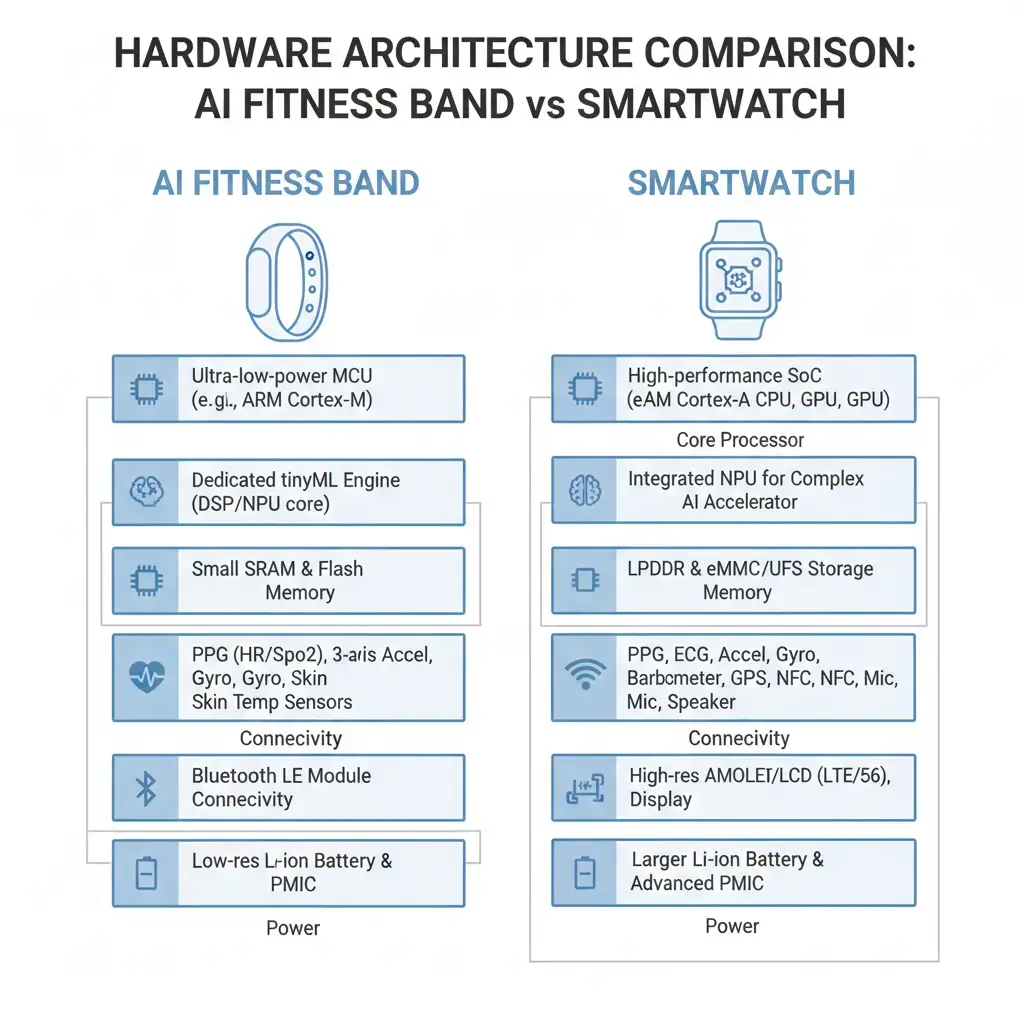

Before analyzing AI frameworks and runtimes, it is important to understand the hardware baseline in AI Fitness Bands vs Smartwatches. Fitness bands typically use ultra-low-power microcontrollers or DSP-class processors optimized for endurance. Smartwatches integrate more advanced System-in-Packages that may include multi-core CPUs, GPUs, and increasingly dedicated Neural Processing Units (NPUs).

This architectural difference directly influences model complexity, inference frequency, sensor fusion depth, and sustained AI performance within battery and thermal constraints.

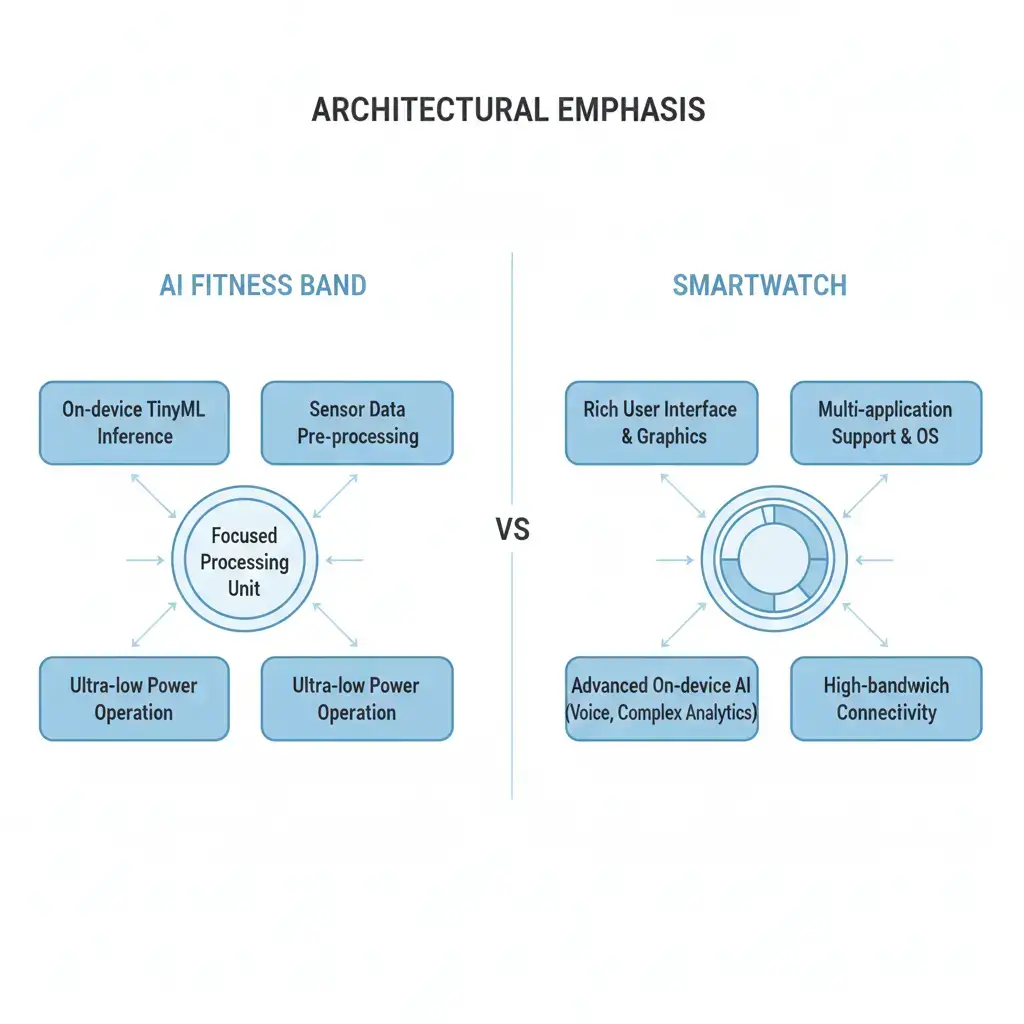

AI Fitness Bands vs Smartwatches: Architectural Breakdown

In the realm of wearables, several AI frameworks and standards dictate how machine learning models are executed on device hardware. These frameworks act as the bridge between a trained AI model and the specialized silicon designed to run it efficiently.

Core ML is Apple’s framework for integrating machine learning models into its ecosystem. It functions as both a compiler and a runtime. Models trained in popular frameworks like TensorFlow or PyTorch are converted, using Apple’s Core ML Tools, into an optimized .mlmodel format. This format is tailored for Apple’s proprietary silicon, including the Neural Engine found in the S-series System-in-Packages (SiPs) used in Apple Watch. This tight coupling ensures optimized performance and power efficiency within the Apple ecosystem.

NNAPI (Neural Networks API) is Android’s standard API for accelerating on-device machine learning inference. Unlike Core ML, NNAPI is primarily an abstract API layer. It allows Android applications to request hardware-accelerated inference without needing to know the specifics of the underlying chip. Chip vendors (e.g., Qualcomm, Samsung, Google) then provide their own optimized drivers and backends that translate NNAPI calls into instructions for their dedicated AI accelerators, such as DSPs (Digital Signal Processors) or NPUs (Neural Processing Units), subject to their specific hardware capabilities. This vendor-agnostic approach promotes broader hardware compatibility.

ONNX (Open Neural Network Exchange) is an open standard format for representing machine learning models. It is not a compiler or runtime itself, but rather a universal interchange format. Models trained in various frameworks can be converted to ONNX, allowing them to be deployed across different hardware platforms and runtimes that support the ONNX standard. This promotes model portability and reduces vendor lock-in.

AI Fitness Bands vs Smartwatches: Hardware Architecture Differences

| Feature | Fitness Bands | Smartwatches |
| --- | --- | --- |
| Typical Processor | Low-power MCU or DSP | Full SoC/SiP with NPU |
| RAM | Limited (KB–MB class) | 1–2 GB typical |
| Dedicated NPU | Rare or minimal | Common in modern models |
| Sensor Array | Basic motion + heart rate | ECG, SpO2, temperature, and advanced motion |
| AI Model Complexity | Lightweight classification | Multi-sensor fusion, real-time inference |
| Battery Capacity | ~150–250 mAh | ~300–500 mAh |
| Cloud Dependency | Higher | Lower |

The wearable AI comparison clearly shows that smartwatches operate at a higher computational tier, while fitness bands are optimized for endurance and efficiency.

Performance Comparison

Performance in wearable AI is not solely about peak computational speed, but rather sustained, power-efficient execution within the device’s thermal and battery envelopes.

Core ML, due to its tight integration with Apple’s Neural Engine, frequently achieves optimized performance and energy efficiency within the Apple ecosystem. The compiler can perform deep optimizations, such as quantization (reducing model precision from 32-bit floating point to 8-bit integer) and graph transformations, directly leveraging the Neural Engine’s architecture for specific workloads. This results in lower inference latency for real-time tasks like fall detection or voice processing, which is crucial for immediate user feedback and safety features.
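To make the quantization step concrete, here is a minimal sketch of per-tensor affine quantization from 32-bit floats to 8-bit integers. It is not Core ML's actual implementation; the function names `quantize_int8` and `dequantize` and the sample weights are assumptions chosen for illustration.

```python
def quantize_int8(values):
    """Affine (asymmetric) per-tensor quantization of floats to int8.

    Illustrative only: real toolchains add calibration, per-channel
    scales, and other refinements.
    """
    lo, hi = min(values), max(values)
    # Widen the range so that 0.0 is always exactly representable.
    lo, hi = min(lo, 0.0), max(hi, 0.0)
    # 255 steps span the int8 range [-128, 127]; guard against a zero range.
    scale = (hi - lo) / 255.0 or 1.0
    zero_point = round(-128 - lo / scale)
    quantized = [
        max(-128, min(127, round(v / scale) + zero_point)) for v in values
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Map int8 codes back to approximate float values."""
    return [(q - zero_point) * scale for q in quantized]


# Hypothetical weights, not taken from any real model.
weights = [-0.42, 0.0, 0.13, 0.91]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)
```

Each weight now occupies one byte instead of four, at the cost of a small rounding error per value. Whether that error is acceptable depends on the model and has to be checked against accuracy on real data.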

NNAPI’s performance is highly contingent on the quality of the hardware vendor’s implementation and the specific workload. While it provides an abstraction layer, the actual execution speed and power efficiency can vary significantly between different Android smartwatch chipsets (e.g., Qualcomm Snapdragon W5+ Gen 1 vs. Samsung Exynos W930), even for similar models. A well-optimized vendor backend can approach the efficiency of tightly coupled solutions, but a less optimized one can lead to higher latency and increased power consumption, negatively impacting user experience and battery life.

ONNX, as a format, doesn’t dictate performance directly, but its interoperability allows developers to deploy models on various runtimes, potentially choosing the most performant option for a given hardware. Developers can consult the ONNX Runtime documentation for details on deploying models across diverse platforms.

In practical terms, fitness bands typically run lightweight models for activity classification and basic sleep staging. Smartwatches, with more robust hardware, support advanced real-time AI tasks such as ECG classification, gesture recognition, and voice command processing. This is where the AI Fitness Bands vs Smartwatches gap becomes measurable, especially under sustained AI workloads.

Processing Power and NPU Capabilities

Power efficiency is paramount in wearables with limited battery capacities (typically 150–500 mAh). Dedicated AI accelerators such as NPUs significantly reduce energy per inference compared to general-purpose CPUs. This allows smartwatches to execute complex models while maintaining acceptable battery life.
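A rough back-of-the-envelope calculation shows why energy per inference matters at these battery sizes. The sketch below assumes a nominal cell voltage of 3.8 V and uses purely hypothetical per-inference energy figures (5 mJ on a CPU, 0.5 mJ on an NPU); none of these numbers are measurements from a real device.

```python
def battery_energy_joules(capacity_mah, nominal_voltage=3.8):
    """Convert battery capacity to energy: mAh -> Ah -> Wh -> J."""
    return capacity_mah / 1000.0 * nominal_voltage * 3600.0


def daily_battery_share(energy_per_inference_mj, inferences_per_day, capacity_mah):
    """Fraction of a full charge spent on inference alone in one day."""
    used_joules = energy_per_inference_mj / 1000.0 * inferences_per_day
    return used_joules / battery_energy_joules(capacity_mah)


# One inference per second, all day, on a 300 mAh battery.
per_day = 24 * 60 * 60
cpu_share = daily_battery_share(5.0, per_day, 300)  # hypothetical CPU cost
npu_share = daily_battery_share(0.5, per_day, 300)  # hypothetical NPU cost
print(f"CPU: {cpu_share:.1%} of battery per day, NPU: {npu_share:.1%}")
```

Under these assumed figures the CPU path spends roughly a tenth of the battery per day on inference alone, while the NPU path spends about a hundredth. The ratio is only as good as the assumed energy costs, which vary widely by chip and model.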

Power & Thermal Behavior

Fitness bands typically stay within tight power and thermal limits by:

- Running low-frequency inference cycles

- Using lightweight models

- Offloading heavier analytics to smartphones

For example, running a continuous activity classification model on a general-purpose CPU can drain the battery quickly and generate noticeable heat. Running the same model on a dedicated NPU via Core ML or NNAPI can cut the energy per inference by a large factor, with the exact saving depending on model complexity and usage patterns. This efficiency is part of what makes on-device AI practical on power-constrained wearables. Lower power draw also means less heat, which helps avoid thermal throttling, the mechanism by which a device dynamically reduces performance to stay within safe operating temperatures. Throttling leads to inconsistent AI performance and a degraded user experience, particularly during sustained background monitoring tasks such as continuous heart rate variability (HRV) analysis or sleep stage detection, where consistent data processing is vital. Efficient hardware designs and optimized runtimes are therefore essential to maintain consistent AI performance without overheating the device.
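The throttling behavior described above can be sketched with a toy simulation. This assumes a first-order (single thermal resistance and time constant) model and a simple governor that halves inference power while the temperature is at or above a limit. Every parameter value is hypothetical, and real devices use more elaborate thermal management.

```python
def peak_temperature(steps, throttle, full_power_w=0.5, r_th_c_per_w=40.0,
                     tau_s=60.0, ambient_c=25.0, limit_c=40.0, dt_s=1.0):
    """Simulate a first-order thermal model and return the peak temperature.

    All default values are illustrative assumptions, not device data.
    """
    temp = ambient_c
    peak = temp
    for _ in range(steps):
        # Bang-bang governor: halve inference power while over the limit.
        over_limit = throttle and temp >= limit_c
        power = full_power_w * (0.5 if over_limit else 1.0)
        # Temperature the device would settle at if this power were held.
        steady_state = ambient_c + power * r_th_c_per_w
        # Explicit Euler step toward that steady-state temperature.
        temp += dt_s * (steady_state - temp) / tau_s
        peak = max(peak, temp)
    return peak


unthrottled = peak_temperature(600, throttle=False)
throttled = peak_temperature(600, throttle=True)
print(f"peak without throttling: {unthrottled:.1f} C, with: {throttled:.1f} C")
```

In this toy model the throttled run hovers near the limit instead of climbing toward the unthrottled steady state. The price is that inference runs at reduced power for part of the time, which is the inconsistency in AI performance that the text refers to.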

Memory & Bandwidth Handling

Wearables operate under stringent memory and bandwidth constraints. A typical smartwatch has 1–2 GB of RAM and limited internal storage, which constrains larger AI models. Deep neural networks in particular can have many parameters and need substantial memory for their weights, biases, and intermediate activations. Efficient memory management is crucial to minimize data movement, which directly consumes power and bandwidth and contributes to system-level bottlenecks.

Fitness bands operate with limited RAM, requiring aggressive:

- Model quantization

- Pruning

- Reduced sampling rates
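The effect of these three techniques on a memory budget can be estimated with simple arithmetic. The sketch below is an assumption-laden illustration: the 200,000-parameter model, the 256 KiB budget, and the helper names are hypothetical, and the pruning estimate ignores the index overhead that sparse storage formats add.

```python
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weights_bytes(num_params, dtype="float32", sparsity=0.0):
    """Weight storage after optional pruning (sparse-index overhead ignored)."""
    kept = round(num_params * (1.0 - sparsity))
    return kept * BYTES_PER_PARAM[dtype]


def window_bytes(sample_rate_hz, channels, seconds, bytes_per_sample=2):
    """Buffer size for one window of raw sensor samples."""
    return sample_rate_hz * channels * seconds * bytes_per_sample


def fits(num_params, dtype, ram_budget_bytes, extra_bytes=0, sparsity=0.0):
    """True if weights plus other buffers fit in the given RAM budget."""
    total = weights_bytes(num_params, dtype, sparsity) + extra_bytes
    return total <= ram_budget_bytes


budget = 256 * 1024                   # hypothetical 256 KiB of usable RAM
buffer_50hz = window_bytes(50, 3, 10)  # 3-axis accelerometer, 10 s window
buffer_25hz = window_bytes(25, 3, 10)  # same window at half the sampling rate

print(fits(200_000, "float32", budget, buffer_50hz))  # float32 weights
print(fits(200_000, "int8", budget, buffer_50hz))     # quantized weights
print(weights_bytes(200_000, "int8", sparsity=0.5))   # quantized and pruned
```

With these assumed numbers the float32 model does not fit the budget while the int8 version does, and halving the sampling rate halves the sensor buffer.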

Smartwatches, by contrast, can support higher-resolution sensor streams and more complex inference pipelines.

Efficient data movement between sensors, memory, and accelerators is critical. In AI Fitness Bands vs Smartwatches, memory architecture and bandwidth often become the hidden constraint that limits model complexity before raw compute ceilings are reached.

Real-World Deployment: AI Fitness Bands vs Smartwatches: What’s Actually Smarter?

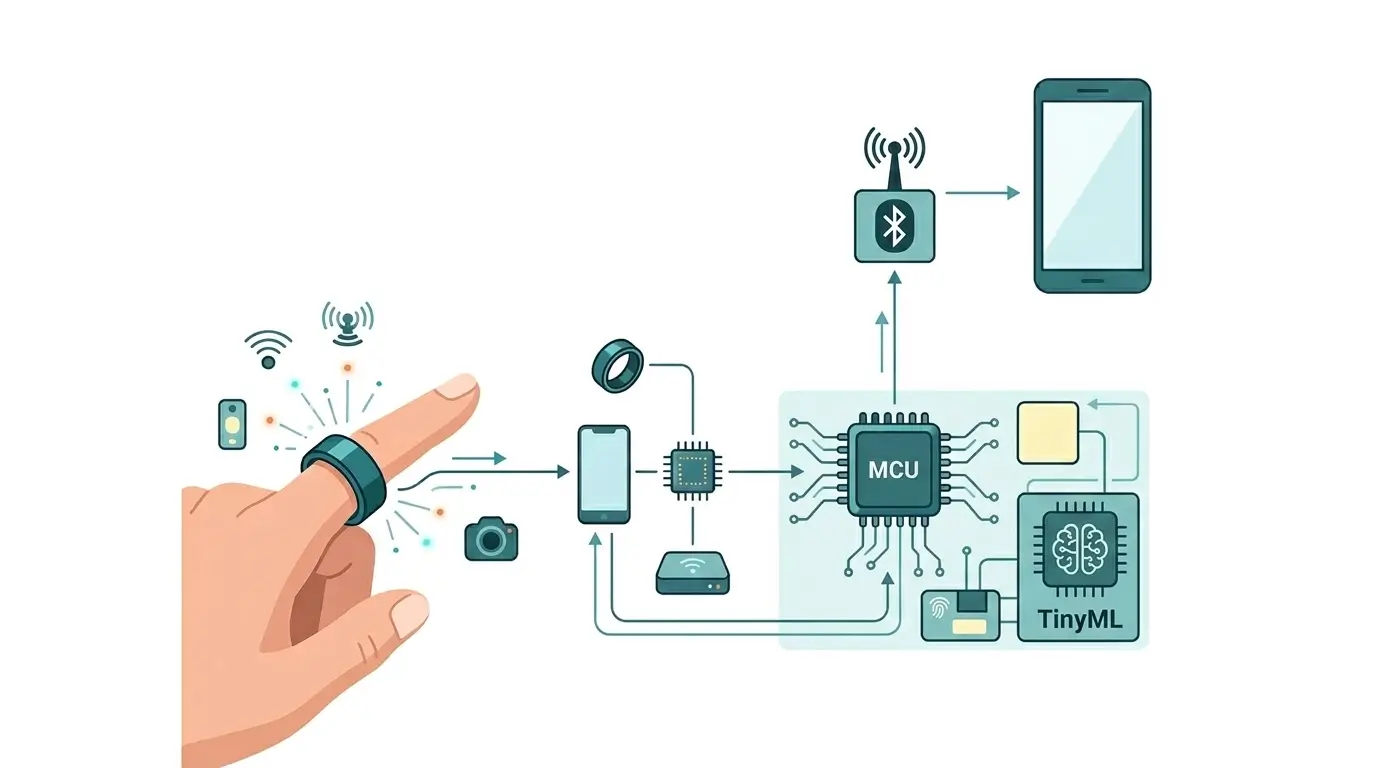

The deployment of AI in fitness bands versus smartwatches clearly delineates their differing capabilities and target use cases, primarily driven by hardware resources and power budgets. Fitness bands, characterized by simpler sensor arrays and a focus on extended battery life, typically deploy lightweight AI models for basic activity classification (walking, running), step counting, and rudimentary sleep phase detection. These models are often compact enough to execute on low-power microcontrollers or DSPs, sometimes offloading more computationally intensive analysis to a connected smartphone to preserve device energy. For instance, a basic band might use a simple neural network to classify motion sensor data into activity types, consuming minimal power.
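As a minimal sketch of what lightweight on-band activity classification can look like, the example below extracts two features from a window of accelerometer samples and assigns the nearest class. A nearest-centroid rule stands in here for the small neural network mentioned above, and the centroid values are hypothetical rather than taken from any real device or dataset.

```python
import math

# Hypothetical (mean, standard deviation) of acceleration magnitude, in g.
CENTROIDS = {
    "still": (1.0, 0.02),
    "walking": (1.1, 0.25),
    "running": (1.4, 0.8),
}


def features(window):
    """Mean and standard deviation of acceleration magnitude over a window.

    `window` is a list of (x, y, z) accelerometer samples in g.
    """
    mags = [math.sqrt(x * x + y * y + z * z) for x, y, z in window]
    mean = sum(mags) / len(mags)
    variance = sum((m - mean) ** 2 for m in mags) / len(mags)
    return mean, math.sqrt(variance)


def classify(window):
    """Return the label whose centroid is closest to the window's features."""
    point = features(window)
    return min(CENTROIDS, key=lambda label: math.dist(point, CENTROIDS[label]))


resting = [(0.0, 0.0, 1.0)] * 50
print(classify(resting))
```

The whole classifier needs only a handful of multiplications per sample and a few bytes of state, which is the kind of footprint that suits a low-power microcontroller.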

Smartwatches, conversely, leverage more advanced sensor suites (ECG, SpO2, skin temperature, advanced motion sensors) and more powerful SiPs featuring dedicated Neural Engines or NPUs. This enables them to execute significantly more complex AI models directly on the device, within their thermal and power envelopes, showcasing the advanced capabilities of AI in smartwatches. Examples include:

- Advanced Health Metrics: Real-time ECG analysis for atrial fibrillation detection, continuous blood oxygen monitoring, and sophisticated sleep stage analysis.

- On-device Voice Assistants: Processing voice commands locally for faster responses and enhanced privacy.

- Complex Gesture Recognition: Detecting specific hand movements for device interaction.

- Fall Detection: Using accelerometer and gyroscope data to identify falls and trigger emergency services.

- Personalized Coaching: Analyzing activity and biometric data to provide tailored fitness recommendations.
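The fall detection item in the list above can be illustrated with a simple heuristic: look for a sharp impact followed by a period of near-stillness. This is a teaching sketch only. It uses accelerometer magnitude alone (no gyroscope), the thresholds are hypothetical, and it is not how any shipping smartwatch implements the feature.

```python
def detect_fall(magnitudes_g, impact_g=2.5, still_tol_g=0.15, still_samples=20):
    """Return the index of an impact followed by stillness, or None.

    `magnitudes_g` is a sequence of acceleration magnitudes in g.
    All threshold defaults are illustrative assumptions.
    """
    for i, magnitude in enumerate(magnitudes_g):
        if magnitude < impact_g:
            continue  # not an impact-sized spike
        after = magnitudes_g[i + 1 : i + 1 + still_samples]
        # Require a full window of readings close to 1 g (device at rest).
        if len(after) == still_samples and all(
            abs(a - 1.0) <= still_tol_g for a in after
        ):
            return i
    return None


fall_like = [1.0] * 10 + [3.2] + [1.0] * 25
run_like = [0.6, 2.8] * 30
print(detect_fall(fall_like), detect_fall(run_like))
```

Requiring stillness after the spike is what separates a fall-like pattern from vigorous exercise in this sketch. A production system would typically rely on a trained model over richer sensor data to keep false alarms low.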

Apple Watch Series 8/Ultra, with its S8 SiP and integrated Neural Engine, uses Core ML for many of these tasks. Android smartwatches like the Samsung Galaxy Watch 6 (Exynos W930 with NPU) or devices with Qualcomm Snapdragon W5+ Gen 1 utilize NNAPI to access their respective AI accelerators for similar advanced functionalities. The ability to perform these complex inferences on-device, with low latency and high reliability, is what fundamentally differentiates the “smartness” of a smartwatch from that of a basic fitness band, although results vary with the specific model and workload.

Which Design Is More Efficient

Assessing which design exhibits “greater efficiency” is highly dependent on the specific application requirements, target performance metrics, and ecosystem constraints. For optimized performance and energy efficiency within a vertically integrated ecosystem, a tightly coupled design like Apple’s Core ML on its Neural Engine demonstrates high efficiency. This vertical integration facilitates deep hardware-software co-optimization, leading to minimized power consumption for a given AI workload and predictable performance characteristics, subject to thermal and power budgets. This architectural synergy contributes to the Apple Watch’s ability to deliver complex AI features while maintaining competitive battery life.

For the broader Android ecosystem, NNAPI provides a flexible, vendor-agnostic abstraction layer. Its operational efficiency is contingent on the underlying hardware vendor’s implementation and the specific AI model being executed. A well-optimized NPU/DSP with robust NNAPI drivers can achieve excellent efficiency for certain workloads, but performance and power consumption can vary significantly across different devices and chipsets. This design prioritizes compatibility and choice, enabling various manufacturers to innovate on their AI hardware implementations. ONNX, as a model interchange format, contributes to efficiency indirectly by enabling developers to select the most optimized runtime for their target hardware, thereby fostering broader model portability.

Ultimately, for the most demanding, real-time, and continuous AI tasks on a wearable, the tightly integrated approach often yields the highest sustained efficiency. However, the abstracted approach offers greater flexibility and broader device support, making it a viable and often efficient solution across a diverse range of Android wearables, depending on the specific application’s requirements.

Key Takeaways

- On-device AI is crucial for wearables: It enables enhanced privacy, reduced latency, and minimized cloud dependency.

- Frameworks bridge models and hardware: Core ML (Apple), NNAPI (Android), and ONNX (model format) are key architectural components.

- Hardware coupling dictates efficiency: Tightly coupled systems (Core ML on Apple Silicon) deliver optimized performance and power efficiency within their respective ecosystems.

- Abstracted APIs offer flexibility: NNAPI provides broad compatibility but relies on vendor-specific hardware optimizations, leading to varied performance across devices.

- Power and thermal constraints are paramount: Dedicated AI accelerators (Neural Engines, NPUs, DSPs) are essential for reducing power draw and heat generation, enabling sustained AI workloads within device limits.

- Smartwatches lead in complex AI: Their more robust hardware supports advanced on-device inference for health monitoring, voice assistants, and safety features, making them generally more capable in AI than fitness bands, though actual results depend on the specific model and workload.

Final Verdict: AI Fitness Bands vs Smartwatches

When comparing AI Fitness Bands vs Smartwatches from an architectural perspective, the difference is not software — it is silicon capability and power envelope design.