Table of Contents

Edge TPU vs Mobile NPU: Edge TPUs are specialized ASIC accelerators built around systolic arrays and optimized for extremely efficient INT8 convolutional neural network inference in continuous edge deployments. Mobile NPUs, in contrast, are programmable AI accelerators integrated into mobile SoCs that support diverse workloads, data types (INT8, FP16), and dynamic applications such as photography, voice processing, and on-device AI. The key difference is specialization versus flexibility: Edge TPUs maximize inference efficiency, while Mobile NPUs prioritize adaptability within mobile power and thermal limits.

Deploying AI on edge devices and mobile platforms introduces a major engineering challenge: executing neural network inference with real-time responsiveness while staying within strict power and thermal limits. To achieve this, modern systems rely on dedicated AI accelerators designed to handle inference workloads far more efficiently than general-purpose CPUs or GPUs.

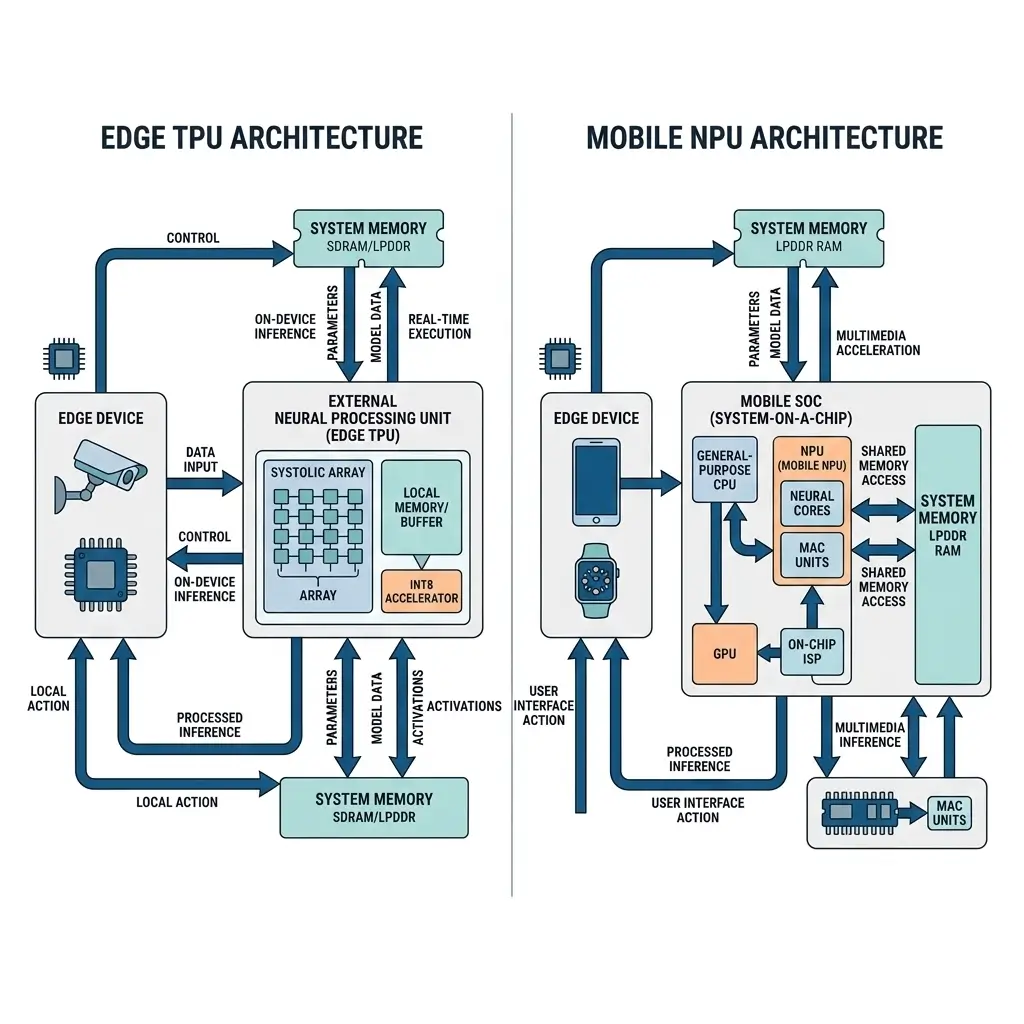

In the Edge TPU vs Mobile NPU comparison, these accelerators represent two distinct architectural approaches. Edge TPUs are specialized ASICs optimized for highly efficient, low-latency inference on edge devices, while Mobile NPUs are programmable accelerators integrated into mobile SoCs that support a wider range of AI workloads.

This analysis explores the compute architecture, silicon constraints, runtime behavior, and system bottlenecks of both technologies to understand how they perform in real-world deployments and why choosing between Edge TPU vs Mobile NPU depends heavily on workload characteristics and deployment requirements.

Edge TPU vs Mobile NPU: What Each Architecture Is Designed For

Edge TPU: The Edge TPU is a dedicated, fixed-function accelerator designed to offload highly specific AI inference tasks from a host CPU. Its primary role is to execute quantized (INT8) convolutional neural networks (CNNs) with high energy efficiency and predictable low latency. It is not a general-purpose processor but a specialized coprocessor, enabling continuous AI capabilities in resource-constrained edge devices where power budget and consistent inference latency are paramount. It excels at repetitive, well-defined tasks like object detection, classification, and pose estimation, ensuring reliable offline execution even in remote industrial settings.

Mobile NPU: Mobile NPUs are integrated accelerators inside modern mobile SoCs. As explained in NPU in smartphones, these processors handle AI workloads such as image processing, voice recognition, and on-device machine learning. Its purpose is to provide broad AI applicability across a diverse, dynamic, and often bursty set of user-facing applications. Unlike the Edge TPU, it must adapt to evolving model architectures (CNNs, RNNs, Transformers), various data types (INT8, FP16), and varying computational demands from applications such as computational photography, voice processing, and on-device generative AI. It acts as an opportunistic orchestrator, leveraging available compute resources across the SoC while adhering to the strict thermal and power envelopes of the mobile platform, managing shared memory bandwidth. This dynamic resource allocation directly impacts battery life, as the NPU intelligently scales its operation based on immediate application needs.

Edge TPU vs Mobile NPU Architecture Comparison

The Edge TPU vs Mobile NPU architecture comparison highlights the trade-off between fixed-function efficiency and programmable AI acceleration. The core architectural divergence between Edge TPUs and Mobile NPUs stems from their fundamental design goals: hyper-specialization for efficiency versus broad programmability for flexibility.

| Feature | Edge TPU (e.g., Coral Edge TPU) | Mobile NPU (e.g., Qualcomm Hexagon, Apple Neural Engine) |

|---|---|---|

| Core Architecture | Systolic array ASIC | Heterogeneous, programmable vector DSPs, MMUs, specialized units |

| Primary Data Type | INT8 (fixed-point 8-bit integer) | Mixed precision: INT8, FP16 (half-precision float), sometimes FP32 |

| Programmability | Fixed-function; operations mapped deterministically | Highly programmable; supports diverse neural network operators |

| Control Logic | Minimal, streamlined for specific operations | Complex, supports dynamic scheduling and diverse instruction sets |

| Integration | Discrete accelerator (USB, PCIe, M.2); can be integrated | Integrated into SoC; shared power/thermal domains |

| Memory Access | Software-managed on-chip scratchpad memory | Integrated into SoC’s shared memory hierarchy (DRAM, caches) |

| Workload Focus | Continuous, predictable, low-latency CNN inference | Dynamic, bursty, varied AI workloads (CNNs, RNNs, Transformers) |

The Edge TPU’s systolic array is a hardwired grid of multiply-accumulate (MAC) units, optimized for matrix multiplications inherent in CNNs. Data streams through the array, minimizing data movement and maximizing MAC utilization. This fixed-functionality can help reduce the overhead of instruction fetching, decoding, and general-purpose control logic, leading to high operational efficiency for its target workloads.

Mobile NPUs, conversely, employ a more flexible architecture, often built around vector DSPs (Digital Signal Processors) with specialized instruction sets for neural network operations, coupled with dedicated Matrix Multiplication Units (MMUs). This heterogeneity allows them to handle a wider range of operations, including convolutions, activations, pooling, and complex tensor manipulations found in various model types. Their programmability, while introducing some overhead, is crucial for adapting to evolving AI models and frameworks.

Edge TPU vs Mobile NPU Architecture Comparison

In the Edge TPU vs Mobile NPU performance comparison, workload specialization plays a major role in determining real-world efficiency. Comparing raw “TOPS” (Tera Operations Per Second) between these architectures can be misleading due to their differing operational contexts and precision.

The Edge TPU achieves high inferences per watt for its target INT8 CNN workloads. Its fixed-function systolic array ensures near 100% MAC utilization for optimized operations, within its power limits, resulting in highly predictable, low-latency execution. For example, a 4 TOPS Edge TPU can sustain its performance consistently for its specific tasks, given model optimization. However, this efficiency comes at the cost of architectural rigidity. Any operation not natively supported (e.g., complex attention mechanisms in Transformers, non-INT8 data types, novel activation functions) either incurs a significant performance penalty by falling back to the host CPU or is simply incompatible, requiring costly model re-engineering.

Mobile NPUs typically boast higher peak TOPS numbers, often in the tens or even hundreds of TOPS, depending on SoC generation. However, these are frequently burst-mode metrics and rarely sustained over extended periods due to the shared power and thermal budget within the SoC. The NPU must dynamically throttle its frequency and voltage (Dynamic Voltage and Frequency Scaling – DVFS) or offload tasks to prevent overheating. This leads to a highly variable performance profile under sustained load, making guaranteed low-latency, continuous inference challenging, especially for memory-intensive models. While more adaptable to diverse models and data types, the overhead of flexibility, complex scheduling, and shared resource contention means they generally exhibit lower peak efficiency (inferences/watt) for hyper-specialized tasks compared to an Edge TPU, particularly with suboptimal memory access. This dynamic behavior directly impacts the sustained performance of complex, multi-tasking AI applications on mobile devices Sustained Ai Performance Vs Peak Tops.

Power Efficiency and Thermal Constraints in Edge TPU vs Mobile NPU

Power efficiency is another key factor in the Edge TPU vs Mobile NPU design trade-off. Many edge accelerators rely heavily on INT8 vs FP16 vs INT4 inference precision trade-offs to balance performance, accuracy, and power efficiency.

Edge TPU: Designed for high power efficiency, the Edge TPU operates within a very tight thermal design power (TDP) envelope, typically in the single-digit watt range for sustained operation. Its fixed-function ASIC design minimizes dynamic power consumption by eliminating unnecessary control logic and optimizing data flow. This allows for fanless, battery-powered, or continuously operating embedded systems where AI inference is a primary, dedicated function, within its TDP. The predictable execution profile means power consumption is relatively stable during active inference, making thermal management straightforward.

Mobile NPU: Integrated into a larger SoC, the Mobile NPU shares a common power and thermal budget with the CPU, GPU, and other accelerators. This necessitates sophisticated dynamic power management and thermal throttling. The NPU’s frequency and voltage are constantly adjusted based on workload, temperature, and overall SoC power limits, impacting sustained performance. While capable of high-performance bursts, sustained high utilization is often limited by thermal constraints, leading to performance degradation over time. The runtime’s primary responsibility shifts from pure compute maximization to dynamic resource arbitration and thermal management, prioritizing overall user experience and device stability.

Memory & Bandwidth Handling

Edge TPU: The Edge TPU often relies on software-managed on-chip scratchpad memory rather than a traditional cache hierarchy, minimizing external DRAM access. This design choice maximizes bandwidth and minimizes power consumption by eliminating the overhead of cache coherence protocols and tag lookups. However, it shifts the burden of memory orchestration to the compiler and runtime. Sophisticated graph partitioning and data staging algorithms are required to ensure optimal data flow, prevent stalls, and efficiently manage data movement between the host system’s DRAM and the Edge TPU’s on-chip memory, mitigating interconnect bottlenecks. Poorly optimized memory management can negate the hardware’s efficiency.

Mobile NPU: Mobile NPUs are tightly integrated into the SoC’s high-bandwidth memory hierarchy, sharing access to the system DRAM and various levels of cache with the CPU, GPU, and ISP, via a shared interconnect fabric. While this provides flexibility, it also introduces memory contention. The NPU competes for shared DRAM bandwidth and cache resources, especially for memory-intensive models or when multiple demanding applications are active, potentially hitting bandwidth ceilings. This contention can introduce unpredictable latency spikes and reduce effective throughput, particularly under high system load. Advanced memory controllers with Quality of Service (QoS) mechanisms are employed to mitigate these issues, but it remains a fundamental limitation of shared resource architectures, directly impacting the latency behavior of real-time AI applications.

Software Ecosystem & Tooling

Edge TPU: The software ecosystem for Edge TPUs is relatively constrained, reflecting its fixed-function nature. It typically involves a TensorFlow Lite-based toolchain that requires models to be quantized to INT8 and compiled specifically for the Edge TPU’s instruction set. The compiler performs graph partitioning, operator mapping, and memory allocation for the on-chip scratchpad. While straightforward for supported operations, the rigidity means developers must adhere to strict model architectures and quantization pipelines. This limits flexibility for novel AI research but simplifies deployment for well-defined tasks, provided models adhere to the supported operator set. This strict compilation process can impact offline execution, as models must be pre-optimized and cannot dynamically adapt to new operators without recompilation.

Mobile NPU: Mobile NPUs operate within a more complex and heterogeneous software ecosystem. They typically rely on vendor-specific SDKs and abstraction layers (e.g., Qualcomm’s AI Engine Direct, Apple’s Core ML, MediaTek’s NeuroPilot). These SDKs provide APIs and compilers that translate popular AI frameworks (TensorFlow, PyTorch, ONNX) into optimized, vendor-specific instructions for the NPU. This flexibility comes with an abstraction overhead and potential vendor lock-in. For instance, developers can leverage the Qualcomm AI Engine SDK documentation to optimize models for Hexagon NPUs. The runtime scheduler is highly sophisticated, responsible for dynamic resource allocation, task prioritization, and managing data flow across the diverse compute units within the SoC, often balancing multiple concurrent AI workloads and their memory demands.

Real-World Edge AI Deployment Scenarios

Edge TPU:

- Ideal for: Dedicated, continuous, power-sensitive, real-time inference in resource-constrained edge devices.

- Examples: Industrial machine vision (defect detection on a production line), smart sensors (continuous anomaly detection), IoT devices (local voice command processing), security cameras (on-device object detection).

- Deployment Characteristics: Predictable workloads, minimal power budget, consistent latency requirements, often deployed as a discrete module. The architectural rigidity means the AI model must be carefully designed and optimized for the Edge TPU from the outset, often requiring specific quantization.

Mobile NPU:

- Ideal for: User-facing applications requiring broad AI capabilities within a mobile ecosystem, where flexibility and adaptability to diverse workloads are key.

- Examples: Computational photography (image enhancement, semantic segmentation), voice processing (transcription, virtual assistants), on-device generative AI (text-to-image, style transfer), augmented reality (object tracking, scene understanding).

- Deployment Characteristics: Dynamic and bursty workloads, shared power/thermal envelope, emphasis on user experience, tightly integrated into the SoC. The runtime must efficiently manage opportunistic resource use across the SoC to deliver a smooth experience, balancing performance with power and thermal constraints.

Edge TPU vs Mobile NPU: Which Architecture Is More Efficient?

The concept of “efficiency” is context-dependent.

- Edge TPU is more efficient for its specific, narrow workload. For continuous, low-latency INT8 CNN inference, its fixed-function systolic array delivers high inferences per watt. The silicon is purpose-built to execute these operations with minimal overhead, making it a highly efficient choice when the workload perfectly aligns with its capabilities and power budget.

- Mobile NPU is more efficient for broad, dynamic workloads within its power/thermal envelope. While not achieving the same peak inferences/watt for a single, specialized task as an Edge TPU, its heterogeneous and programmable architecture allows it to efficiently handle a much wider range of AI models, data types, and application demands, within the overall SoC power envelope. Its efficiency lies in its versatility and ability to opportunistically leverage shared SoC resources to deliver a consistent user experience across diverse AI-powered features.

In essence, the Edge TPU optimizes for peak efficiency in a specific niche, while the Mobile NPU optimizes for broad applicability and overall system efficiency within the constraints of a general-purpose mobile SoC.

Edge TPU vs Mobile NPU: Key Technical Takeaways

Ultimately, choosing between Edge TPU vs Mobile NPU depends on workload specialization, power constraints, and deployment environment.

- Specialization vs. Generality: Edge TPUs are hyper-specialized ASICs for INT8 CNNs, prioritizing high power efficiency and predictable latency. Mobile NPUs are heterogeneous, programmable accelerators designed for broad AI workload flexibility within an SoC.

- Architectural Trade-offs: Edge TPUs leverage fixed-function systolic arrays for efficiency but suffer from rigidity. Mobile NPUs use flexible vector DSPs and MMUs for adaptability but incur overhead from programmability and shared resource management.

- Runtime Implications: Edge TPU runtimes are simpler, focused on deterministic execution and memory staging. Mobile NPU runtimes are complex, managing dynamic resource allocation, thermal throttling, and contention across a heterogeneous SoC, often balancing multiple concurrent AI workloads.

- Deployment Context: Edge TPUs excel in dedicated, continuous, power-sensitive edge applications. Mobile NPUs are crucial for diverse, user-facing AI features in mobile devices, balancing performance with overall system constraints.

- Efficiency Definition: True efficiency is defined by the workload. An Edge TPU is more efficient for its specific tasks, within its power envelope, while a Mobile NPU is more efficient for the vast and varied demands of a modern mobile computing platform, considering shared resources.