Summary

AI Model Loading Time on Devices is the process of transferring AI models from storage or network into memory before execution. It significantly impacts device performance, latency, and battery life. This often-overlooked phase involves CPU processing, memory usage, and data transfer, leading to hidden power consumption. Optimizing AI model loading is essential for improving responsiveness, efficiency, and overall user experience in edge AI systems.

Table of Contents

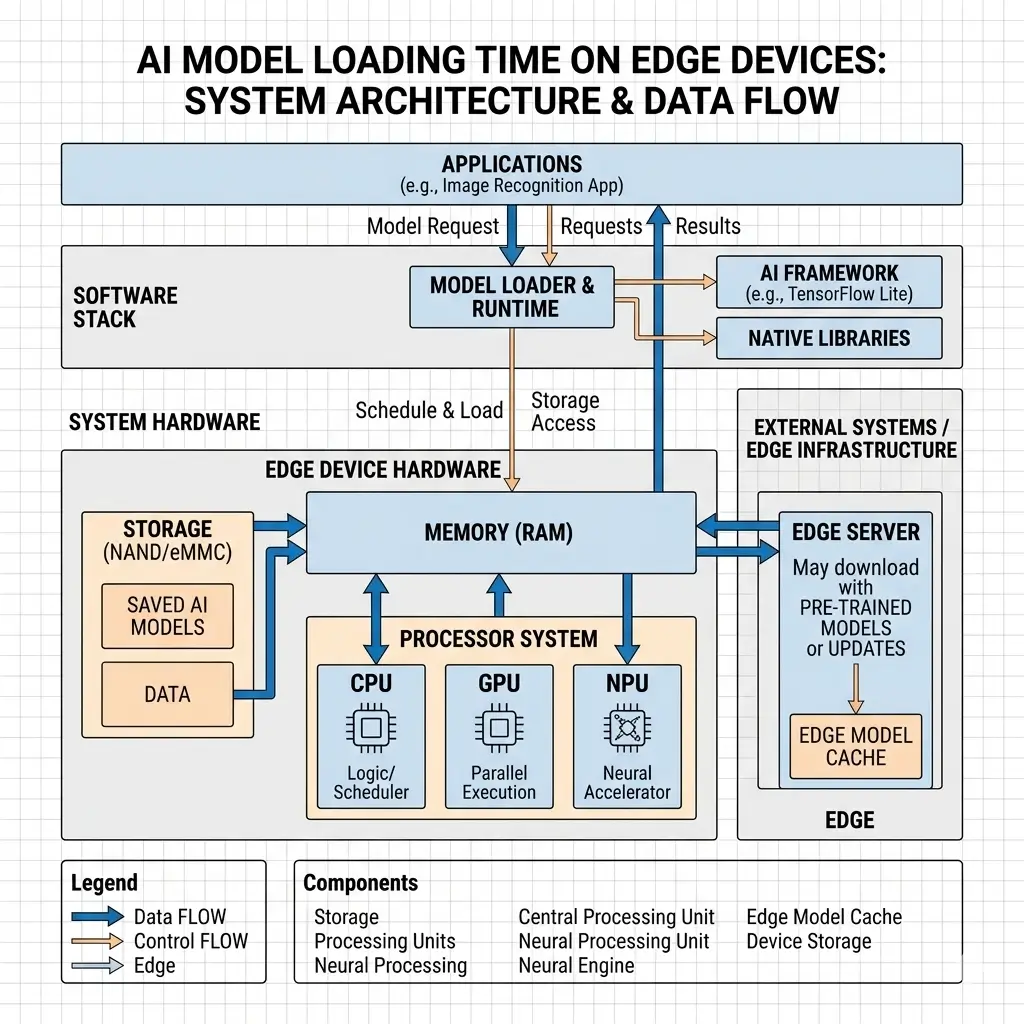

AI Model Loading Time on Devices refers to the duration and associated energy expenditure required to transfer a pre-trained AI model from persistent storage or a network source into active memory and prepare it for execution on a device’s inference engine. This transient phase, often overlooked in power budgets, involves significant CPU activity for parsing, decompression, and memory management, alongside data transfer across various system buses, leading to substantial cumulative power consumption before any inference begins. Optimizing this loading process is critical for overall device power efficiency and responsiveness, especially for frequently updated or dynamically loaded models, within the device’s power envelope.

AI is becoming everywhere—from smartphones to wearables and edge devices. Most optimization efforts focus on improving inference performance and power efficiency. However, one critical and often overlooked factor is AI Model Loading Time on Devices.

This phase occurs when a model moves from storage into memory and becomes ready for execution. Although it happens before inference, it introduces significant hidden latency and power consumption. In many cases, this stage can quietly impact performance more than expected.

Optimizing AI Model Loading Time on Devices is essential for building fast, responsive, and power-efficient edge AI systems. It directly affects user experience, startup time, and overall battery life.

What Is AI Model Loading Time on Devices?

AI Model Loading Time on Devices encompasses the entire sequence of operations from initiating a request for an AI model to its readiness for inference execution on the target hardware accelerator (e.g., NPU, DSP, GPU). This is not a single atomic event but a multi-stage process involving data retrieval, parsing, transformation, and memory allocation.

From a power efficiency perspective, it represents a period of elevated system activity across multiple components—NVM, DRAM, CPU, network interface, and potentially the accelerator itself—before the primary AI workload even commences. This cumulative power draw during loading can be substantial, especially for large or frequently updated models, and directly impacts the overall energy budget of the device, subject to its thermal and power envelopes.

How AI Model Loading Time on Devices Works

The process of AI Model Loading Time on Devices typically involves several sequential and often interleaved steps, each contributing to the overall power consumption and latency:

- Model Retrieval: The model data, often in a compressed or quantized format, is retrieved from its source. This can be local Non-Volatile Memory (NVM) such as eMMC or UFS, constrained by memory bandwidth ceilings, or a remote server via a network interface (Wi-Fi or cellular modem), subject to available network bandwidth. * Power Implication: NVM access incurs power for the controller and flash memory. Network retrieval activates power-hungry radios (modems, Wi-Fi), which consume CPU cycles for network stack processing, TLS handshakes, and decryption. This is a significant transient power spike, directly impacting battery life.

- Parsing and Validation: The retrieved model file (e.g., TFLite, ONNX, OpenVINO IR) is parsed by the AI framework’s runtime. This involves reading the model’s graph structure, operator definitions, tensor shapes, and weights. Basic validation checks are performed. * Power Implication: This stage is primarily CPU-bound, keeping the processor active and increasing power consumption. Additionally, general-purpose operating systems and AI frameworks introduce overhead, which can reduce the efficiency of on-device AI compared to cloud-based AI systems.

- Decompression and Dequantization: If the model was stored in a compressed format (e.g., gzip, custom compression) or a lower precision (e.g., INT8, INT4), it must be decompressed and potentially dequantized to a format suitable for the target accelerator. * Power Implication: This is a compute-intensive task, typically executed on the CPU or a general-purpose DSP. The absence of dedicated hardware accelerators for universal decompression/dequantization shifts this power burden to general-purpose compute units, which are less power-efficient for these specific operations, prolonging their active state and increasing overall energy consumption.

- Memory Allocation and Data Transfer: The model’s weights and intermediate tensor buffers are allocated in DRAM. The processed model data is then transferred from system memory to the accelerator’s dedicated memory (if present) or made accessible via a Unified Memory Architecture (UMA). * Power Implication: DRAM access and memory controller activity consume power. Data transfers across system buses (e.g., AXI, PCIe) also incur energy costs, with performance characterized by sustained vs. peak throughput. In UMA systems, while explicit copies are reduced, contention for shared DRAM bandwidth can prolong the active state of the memory controller and DRAM, potentially consuming more cumulative power than a faster, dedicated transfer to high-bandwidth VRAM.

- Accelerator Preparation (Runtime Compilation/JIT): The AI framework translates the model graph into a sequence of operations optimized for the specific target hardware accelerator. This might involve Just-In-Time (JIT) compilation or loading pre-compiled kernels. * Power Implication: This is another CPU-intensive task, potentially involving the accelerator’s control plane, keeping both active and consuming power. This phase directly impacts the sustained performance of subsequent inference tasks by determining how efficiently the accelerator can operate.

AI Model Loading Time on Devices Architecture Explained

The architecture influencing AI Model Loading Time on Devices is a complex interplay of hardware and software components, each contributing to power consumption and performance.

| Component Category | Key Elements | Role in Model Loading | Power Efficiency Considerations |

|---|---|---|---|

| Storage Subsystem | NVM (UFS, eMMC), NVM Controller | Stores compressed/quantized model data. | NVM access power, controller efficiency, sustained read bandwidth, and memory bandwidth ceilings. |

| Network Subsystem | Wi-Fi/Cellular Modem, RF Front-End | Retrieves models from remote servers. | High transient power consumption of radios, CPU overhead for network stack/TLS, subject to network conditions, and available bandwidth. |

| Main Processor (CPU) | General-purpose cores | Orchestrates loading, parsing, decompression, dequantization, memory management, JIT compilation. | Dominant power consumer during loading due to sustained activity and lack of specialized hardware for these tasks, within its power envelope. |

| System Memory (DRAM) | LPDDRx modules, Memory Controller | Stores model weights, intermediate buffers, and framework runtime. | DRAM refresh power, memory controller activity, bandwidth contention, and memory bandwidth ceilings. |

| AI Accelerator (NPU/DSP/GPU) | Inference engines, dedicated memory (optional) | Target for model execution; may assist with some loading tasks (e.g., pre-fetching). | Primarily optimized for inference power; the loading phase often relies on CPU/DRAM. |

| Operating System (OS) | Kernel, Scheduler, Memory Manager | Manages resources, schedules tasks, handles I/O. | General-purpose scheduling can prolong loading, increasing cumulative power. |

| AI Framework Runtime | TFLite, ONNX Runtime, PyTorch Mobile | Interprets model format, manages execution graph, and interfaces with hardware. | Abstraction overhead, dynamic compilation, and memory management efficiency. |

The prevailing design paradigm prioritizes inference-time power efficiency for NPUs/DSPs, often at the expense of optimizing the data pathways and CPU overheads for the transient loading phase. This asymmetry means that while the accelerator is highly efficient for its core task, the system as a whole can be inefficient during model setup due to prolonged CPU activity and unoptimized data movement. Tools like the Intel OpenVINO toolkit documentation provide insights into optimizing model deployment and execution across various hardware.

Performance Characteristics of AI Model Loading Time on Devices

The performance characteristics of AI Model Loading Time on Devices are primarily defined by latency, throughput, and critically, the cumulative energy consumed per load.

- Latency: The total time from initiation to readiness for inference. This is directly impacted by network speed, NVM read speeds, CPU processing power for parsing and decompression, and bus bandwidth. High latency directly leads to a poor user experience, such as app startup delays and slow feature activation, which is especially critical for AI-powered smart rings and wearable AI devices.

- Throughput: The rate at which model data can be processed and moved through the system. This is often limited by the slowest link in the chain—be it network bandwidth, NVM read speed, or CPU decompression capability.

- Energy per Load: The total electrical energy (Joules) consumed by the device during the entire loading process. This is the product of instantaneous power draw and the duration of the loading phase.

Key Performance Tradeoffs from a Power Efficiency Lens:

| Tradeoff Dimension | Description | Power Efficiency Impact |

|---|---|---|

| Inference Power vs. Loading Power | Designs optimize for sustained, low-power inference, often treating loading as a less frequent, “setup” task. | Optimizing for inference often overlooks the cumulative power cost of inefficient loading. A short, power-intensive load might be better than a long, moderately intensive load if it allows components to idle sooner, improving overall battery impact. |

| Storage/Network Savings vs. Compute Burden | Quantization and compression reduce model size, saving NVM capacity and network bandwidth/data costs. | This shifts the power burden to the CPU/DSP for on-device decompression/dequantization, which can be less power-efficient than if dedicated hardware were available or if the model were loaded in native precision. |

| Abstraction Overhead vs. Flexibility | General-purpose OS and AI frameworks provide broad compatibility but introduce CPU overhead for parsing, scheduling, and memory management. | Increased CPU activity during loading, consuming more power than a highly specialized, bare-metal loader, leading to higher thermal output. |

| Network Benefits vs. Local Power/Latency | Network-based loading allows for immediate updates and reduces local storage requirements. | Incurs significantly higher transient power consumption (modem/Wi-Fi, CPU for network stack/TLS) and latency compared to local loading, creating “phantom” battery drain spikes. |

Real-World Applications of AI Model Loading Time on Devices

The impact of AI Model Loading Time on Devices is pervasive across various domains:

- Mobile Devices (Smartphones, Tablets): App startup times for AI-powered features (e.g., advanced camera filters, on-device language models, real-time translation). Frequent model updates or dynamic loading of specialized models (e.g., for specific photo effects) can lead to noticeable delays and battery drain spikes. Frameworks like

TensorFlow Lite documentationguide on optimizing on-device AI performance and model loading efficiency. - Edge AI Devices (IoT, Smart Home): Devices like smart speakers, security cameras, or industrial sensors often download new or updated models over the air. Prolonged loading times can delay critical responses (e.g., object detection alerts) and significantly impact battery-powered devices, affecting their offline execution impact.

- Automotive Infotainment/ADAS: Loading large perception models for Advanced Driver-Assistance Systems or personalized infotainment features must be fast and reliable. Slow loading can delay safety-critical functions or degrade user experience, directly impacting latency behavior.

- Wearables: Extremely power-constrained devices where every millijoule counts. Loading even small models efficiently is crucial for maintaining multi-day battery life and responsiveness for features like activity tracking or voice commands.

Limitations of AI Model Loading Time on Devices in Edge AI Systems

Several inherent limitations contribute to the challenges of optimizing AI Model Loading Time on Devices:

- Asymmetric Hardware Optimization: Compute units (NPUs, DSPs) are highly optimized for low-power inference, but the data pathways, memory controllers, and CPU overheads for loading are often less optimized for power. This architectural imbalance means the system is not uniformly efficient across the entire AI lifecycle, leading to suboptimal battery impact during setup.

- Lack of Dedicated Decompression/Dequantization Hardware: There is a general absence of universal, dedicated hardware blocks for efficient, low-power decompression of various model formats or on-the-fly dequantization. This forces these compute-intensive tasks onto general-purpose CPUs or DSPs, which are less power-efficient for these specific operations, increasing thermal stability challenges.

- Software Stack Complexity and Abstraction Overhead: The reliance on general-purpose operating systems and AI frameworks (e.g., TFLite, ONNX Runtime) for broad compatibility across diverse hardware introduces multiple layers of abstraction. These layers incur CPU overhead for parsing, scheduling, memory management, and dynamic compilation, keeping the CPU active longer and consuming more power during the loading phase, which can degrade sustained performance.

- Memory Bandwidth Contention: In Unified Memory Architectures, while data copies are reduced, the shared DRAM bus can become a bottleneck during large model transfers, leading to contention between the CPU and accelerator. This prolongs the active state of the memory controller and DRAM, increasing cumulative power consumption.

- Network Variability and Power Cost: For network-first strategies, the variability of network conditions (latency, bandwidth) and the high transient power consumption of cellular modems or Wi-Fi radios are significant limitations that are largely outside the control of the on-device AI stack, directly affecting latency behavior.

Why AI Model Loading Time on Devices Matters for Performance and Battery Life

Optimizing AI Model Loading Time on Devices matters profoundly for several critical reasons:

- Device Power Efficiency and Battery Life: Inefficient loading leads to significant, often overlooked, cumulative power consumption. Prolonged CPU activity, network radio usage, and memory transfers drain battery life, particularly for devices with frequent model updates or dynamic AI features. This “phantom” drain can be a major source of user dissatisfaction.

- User Experience and Responsiveness: Slow model loading directly translates to application startup delays, feature activation lag, and overall system unresponsiveness. In critical applications like ADAS or real-time communication, this can have severe consequences for latency behavior.

- Thermal Management: The cumulative power consumption during loading generates heat. Prolonged loading phases can trigger thermal throttling before any actual inference begins, degrading subsequent inference performance and impacting device longevity, thus affecting thermal stability.

- System Design and Resource Allocation: Understanding loading power costs allows architects to make informed decisions about model delivery strategies (local vs. network), hardware acceleration for specific loading tasks, and overall system power budgeting. It highlights the need for a holistic power analysis beyond just inference.

- Scalability and Deployment: As AI models grow in complexity and are deployed on an increasing diversity of edge devices, efficient loading becomes a bottleneck for widespread adoption and seamless updates, impacting sustained performance.

Key Takeaways on AI Model Loading Time on Devices

- AI Model Loading Time on Devices is a critical, often underestimated, phase for device power consumption and performance.

- The current design paradigm prioritizes inference efficiency, leading to architectural asymmetries where loading pathways are less optimized for power.

- Network-based loading, while offering flexibility, incurs significant transient power spikes from radios and CPU activity.

- Model compression and quantization save storage/network power but shift the computational and power burden to the CPU/DSP for on-device decompression/dequantization.

- General-purpose OS and AI frameworks introduce CPU overhead during loading due to abstraction layers and dynamic processing.

- Optimizing loading is essential for improving battery life, device responsiveness, and overall system thermal management in modern AI systems.

FAQ: AI Model Loading Time on Devices

What affects AI Model Loading Time on Devices the most?

AI Model Loading Time on Devices is mainly affected by storage speed, CPU performance, and model size.

Why is AI Model Loading Time on Devices important?

AI Model Loading Time on Devices directly impacts latency, battery life, and user experience.