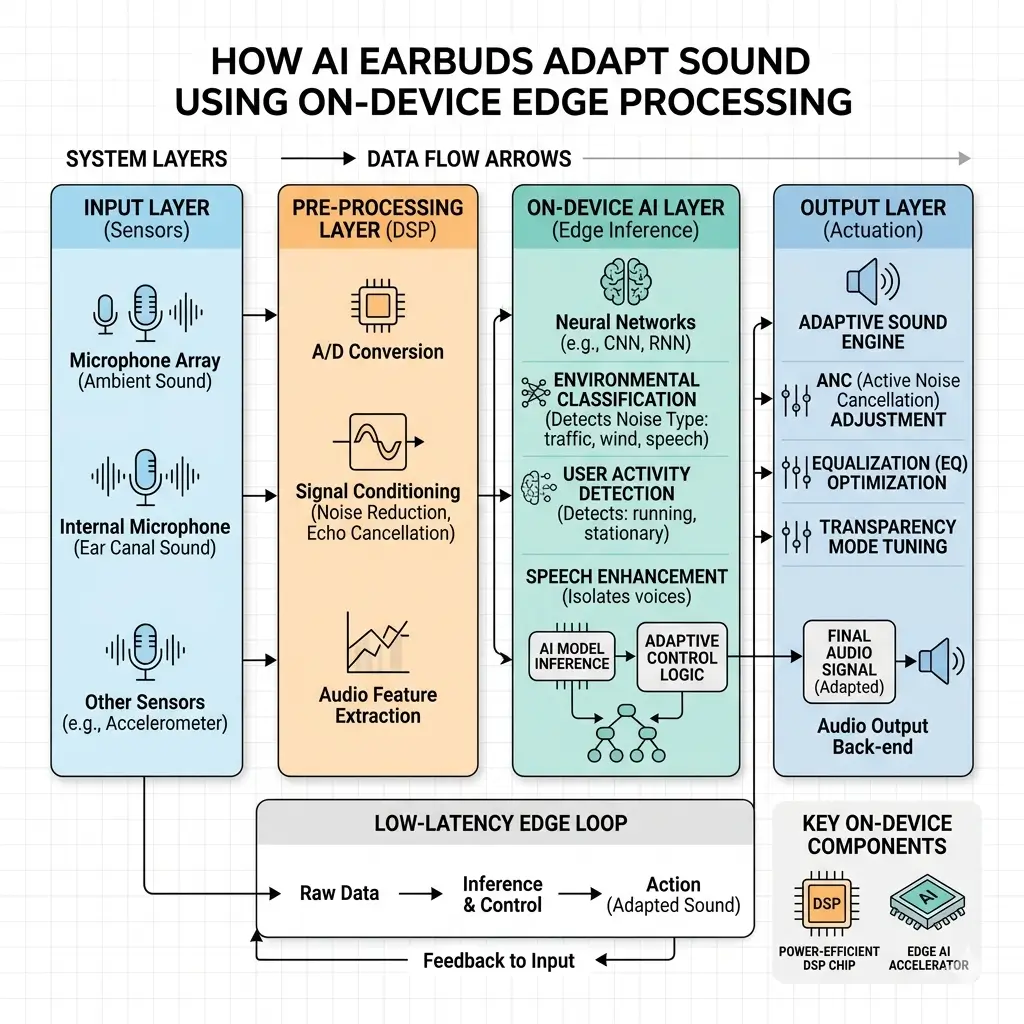

AI earbuds adapt sound using on-device AI processing that runs machine learning models directly inside the earbud. Specialized System-on-Chip (SoC) architectures combine Digital Signal Processors (DSPs) and Neural Processing Units (NPUs) to analyze ambient noise, optimize audio output, and enable features like adaptive noise cancellation in real time. Because processing happens locally, latency is reduced, battery efficiency improves, and user audio data stays private.

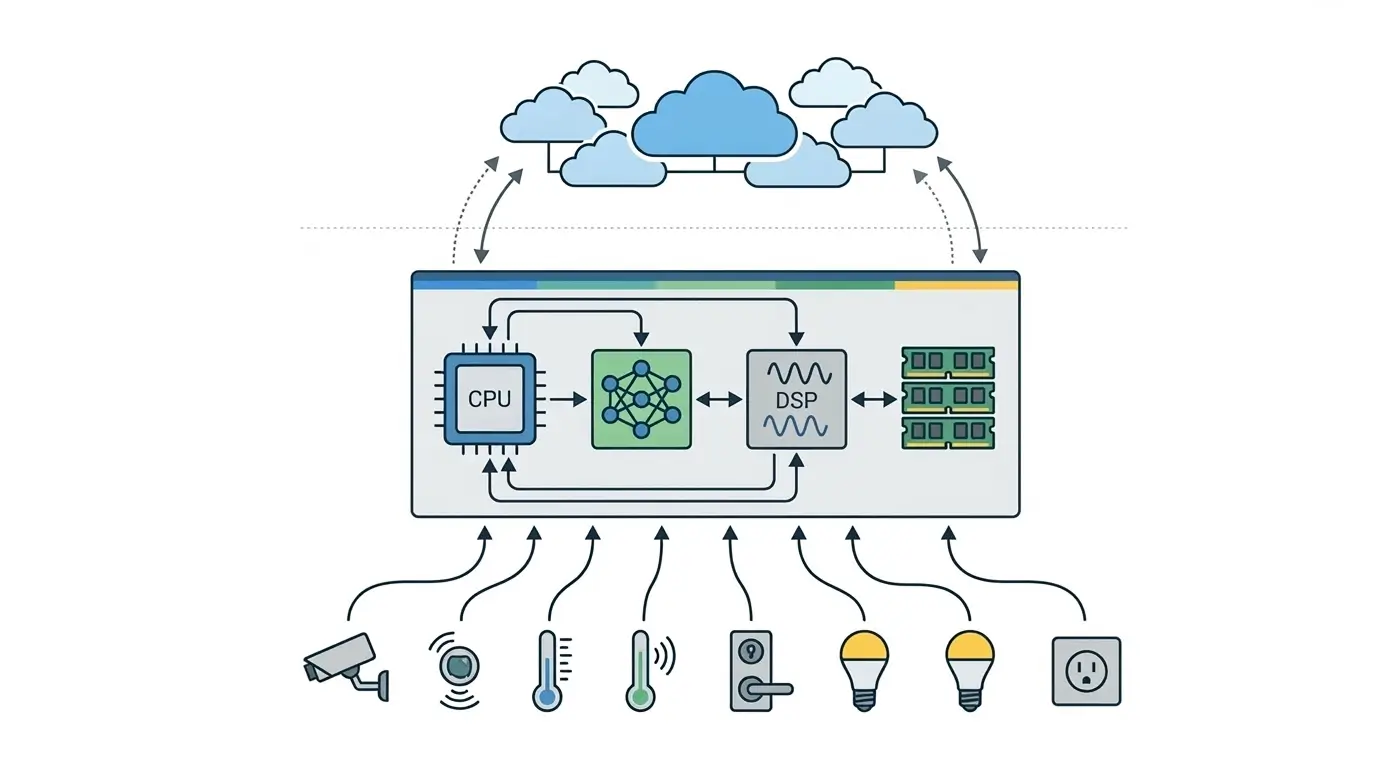

Bringing sophisticated artificial intelligence to ultra-compact, power-constrained devices like earbuds is a formidable engineering challenge. The problem is executing complex machine learning (ML) models for real-time audio adaptation, such as active noise cancellation or personalized spatial audio, without compromising latency, battery life, or user privacy. This calls for a hardware-first design philosophy, in which silicon architecture and runtime scheduling are optimized together so that advanced AI runs directly at the edge, as in other edge AI architectures for embedded devices. Understanding this design explains how modern earbuds deliver real-time noise cancellation and personalized audio.

How AI Earbuds Adapt Sound Using On-Device AI

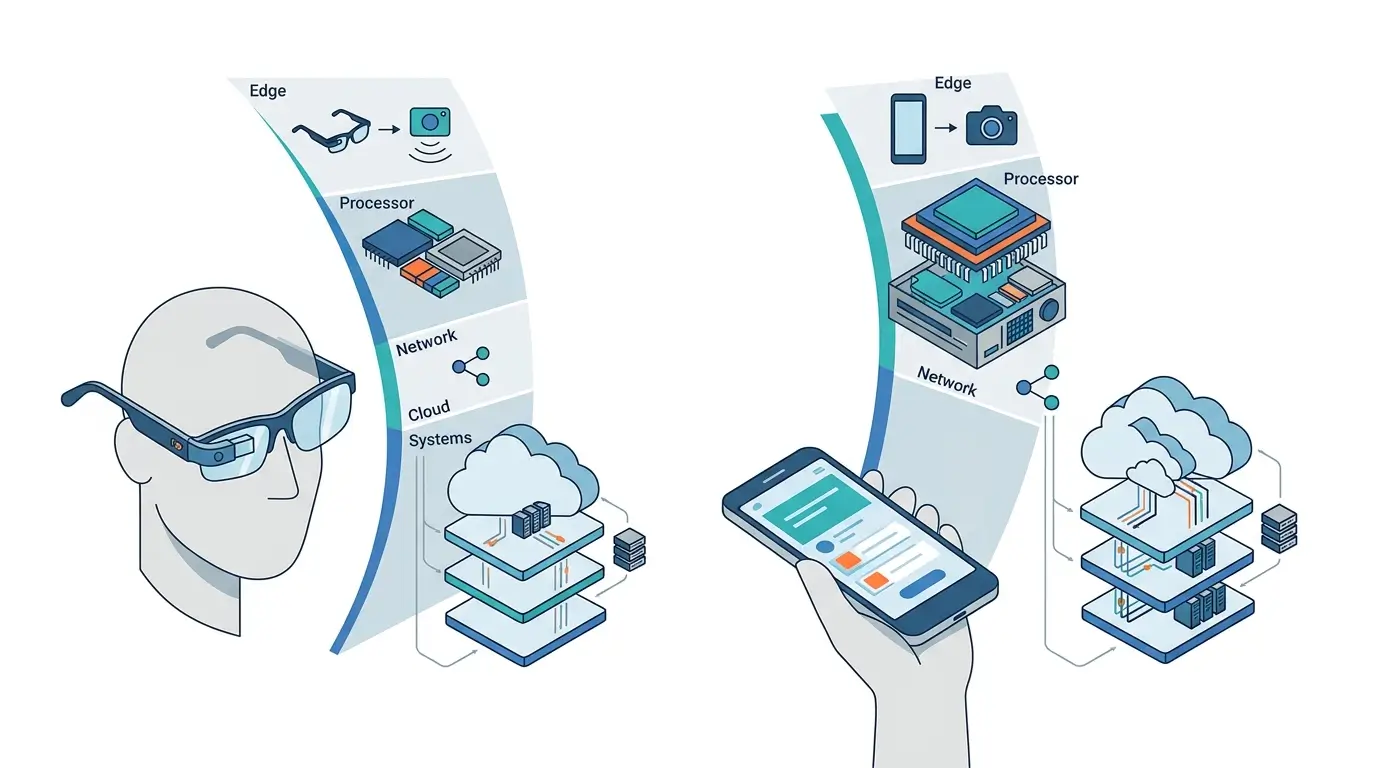

On-device sound adaptation in AI earbuds is the ability of the earbud’s internal processor to analyze, interpret, and modify audio signals locally, without relying on external compute resources such as smartphones or cloud infrastructure. This is the on-device side of the on-device AI versus cloud AI distinction: processing decisions are executed on the device itself, which shortens response times.

This direct, in-situ processing is critical for features demanding millisecond-level responsiveness, where any round-trip latency to an external processor would degrade the user experience.

Core functionalities include:

- real-time environmental sound classification

- adaptive noise cancellation (ANC)

- dynamic equalization

- spatial audio rendering with head tracking

- advanced voice enhancement

The design is fundamentally driven by the need for immediate action on audio streams, making locality of compute a non-negotiable requirement for sustained performance.

How It Works

The operational principle relies on a continuous low-latency inference pipeline executed entirely within the earbud’s System-on-Chip (SoC). Microphones capture ambient sound and the user’s voice, which are then converted into digital signals by an Analog-to-Digital Converter (ADC). These raw audio streams are passed into specialized processing blocks inside the chip.
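
The capture-and-digitize step can be sketched in a few lines of Python. This is a minimal model, assuming an idealized 16-bit ADC, a 16 kHz sample rate, and 10 ms frames; none of these values come from a specific earbud, and `adc_to_pcm16` and `frame_stream` are hypothetical helper names.

```python
import math

SAMPLE_RATE_HZ = 16_000  # assumed sample rate, not taken from any product
FRAME_SIZE = 160         # assumed 10 ms frame at 16 kHz

def adc_to_pcm16(samples):
    """Idealized 16-bit ADC: clip each sample to [-1.0, 1.0] and scale to the int16 range."""
    return [round(max(-1.0, min(1.0, s)) * 32767) for s in samples]

def frame_stream(pcm, frame_size=FRAME_SIZE):
    """Split a PCM stream into non-overlapping frames, dropping any partial tail."""
    n_frames = len(pcm) // frame_size
    return [pcm[i * frame_size:(i + 1) * frame_size] for i in range(n_frames)]

# One second of a 440 Hz tone at half scale.
tone = [0.5 * math.sin(2 * math.pi * 440 * n / SAMPLE_RATE_HZ) for n in range(SAMPLE_RATE_HZ)]
frames = frame_stream(adc_to_pcm16(tone))
print(len(frames), len(frames[0]))  # 100 160
```

Working on fixed-size frames gives each downstream DSP and NPU stage a predictable block of data and a predictable per-frame deadline.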

A Digital Signal Processor (DSP) typically performs the first stage of processing, including filtering, beamforming, and basic equalization. This stage prepares the audio data for more advanced machine-learning inference.
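
Beamforming, one of the DSP tasks named above, can be illustrated with its simplest form, a two-microphone delay-and-sum beamformer. The sketch assumes both channels have the same length and that the target (for example, the user's mouth) reaches the second microphone a known whole number of samples later; real designs estimate fractional delays and usually adapt their weights.

```python
def delay_and_sum(mic_a, mic_b, delay_samples):
    """Two-microphone delay-and-sum beamformer with an integer-sample delay.

    Assumes the target sound reaches mic_a first and mic_b `delay_samples`
    samples later. Advancing mic_b by that delay lines the target up in both
    channels so it adds coherently; sound arriving from other directions
    stays misaligned and is partly averaged away.
    """
    if delay_samples < 0:
        raise ValueError("delay_samples must be non-negative")
    n = len(mic_a) - delay_samples
    return [0.5 * (mic_a[i] + mic_b[i + delay_samples]) for i in range(n)]

target = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
mic_a = target
mic_b = [0.0, 0.0] + target[:-2]       # same sound, arriving 2 samples later
print(delay_and_sum(mic_a, mic_b, 2))  # [1.0, 2.0, 3.0, 4.0]
```

The aligned target comes through at unit gain, which is the property the NPU stage relies on when it receives a cleaner voice signal to classify.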

Next, a dedicated Neural Processing Unit (NPU) executes optimized and often quantized machine-learning models. These models can classify environmental noise, detect speech, or track head orientation for spatial audio. The same principle underlies private on-device voice assistants, where voice processing and recognition occur directly on the device instead of on cloud servers.
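
To show what "quantized" means at the arithmetic level, the sketch below evaluates a fully connected layer with 8-bit integer weights and activations, accumulating products in an integer accumulator and converting back to a real value only once per output. It assumes symmetric per-tensor quantization with hand-picked scales; production toolchains derive scales from calibration data, and the function names are hypothetical.

```python
def quantize_int8(values, scale):
    """Symmetric per-tensor quantization: q = clamp(round(x / scale), -127, 127)."""
    return [max(-127, min(127, round(v / scale))) for v in values]

def int8_dense(x_q, w_q, bias_acc, x_scale, w_scale):
    """Fully connected layer built from integer multiply-accumulates.

    x_q:      quantized input vector
    w_q:      quantized weights, one row per output neuron
    bias_acc: integer biases already expressed at scale x_scale * w_scale
    Products are summed in an integer accumulator (hardware typically uses
    32 bits; Python ints are unbounded) and dequantized once per output.
    """
    outputs = []
    for row, bias in zip(w_q, bias_acc):
        acc = bias
        for xi, wi in zip(x_q, row):
            acc += xi * wi  # one multiply-accumulate (MAC)
        outputs.append(acc * x_scale * w_scale)
    return outputs

X_SCALE = W_SCALE = 0.01  # hand-picked scales for this example
x_q = quantize_int8([0.5, -0.25], X_SCALE)  # [50, -25]
w_q = [quantize_int8(r, W_SCALE) for r in [[1.0, 0.5], [-0.2, 0.4]]]
y = int8_dense(x_q, w_q, [0, 0], X_SCALE, W_SCALE)
print([round(v, 6) for v in y])  # [0.375, -0.2]
```

Here the integer result matches the floating-point layer (0.5 * 1.0 - 0.25 * 0.5 = 0.375) because the chosen values are exactly representable at these scales; in general quantization introduces a small rounding error.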

The NPU architecture is optimized for efficient matrix multiplications and convolution operations, allowing it to perform a large number of Multiply-Accumulate (MAC) operations per watt. This efficiency is essential for real-time audio processing while maintaining battery life.
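
A back-of-the-envelope budget shows why MAC/W matters. The sketch counts the MACs of a hypothetical small classifier (40 input features, two hidden layers of 128 units, 8 output classes) run every 10 ms, then converts the MAC rate into average compute power. The energy-per-MAC figures (1 pJ for an NPU, 100 pJ for a general-purpose core) are illustrative placeholders, not measurements, and memory-access energy is ignored.

```python
def dense_macs(layer_sizes):
    """MACs for one forward pass through a stack of fully connected layers (biases ignored)."""
    return sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

def average_power_w(macs_per_inference, inferences_per_s, joules_per_mac):
    """Average compute power = MAC rate times energy per MAC."""
    return macs_per_inference * inferences_per_s * joules_per_mac

macs = dense_macs([40, 128, 128, 8])         # 5120 + 16384 + 1024 = 22528 MACs
rate = macs * 100                            # one inference every 10 ms: 2,252,800 MAC/s
npu_w = average_power_w(macs, 100, 1e-12)    # placeholder: 1 pJ per MAC
cpu_w = average_power_w(macs, 100, 100e-12)  # placeholder: 100 pJ per MAC
print(f"NPU ~{npu_w * 1e6:.2f} uW, CPU ~{cpu_w * 1e6:.2f} uW")
```

The absolute numbers depend entirely on the placeholder energies; the point is that compute power scales linearly with energy per MAC, so an efficiency gap between accelerator and CPU carries straight through to battery drain for the same model.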

The results of these AI inferences trigger immediate adjustments in the audio pipeline. For example, the system can generate anti-phase signals for active noise cancellation (ANC), dynamically modify equalization curves, or update spatial audio rendering based on head movements.
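
One way to turn a classifier decision into parameter changes is a lookup table of per-environment targets plus smoothing, so a change in the detected environment fades in over several frames instead of switching abruptly. The preset names and values, and the choice of one-pole smoothing, are assumptions for illustration and are not taken from any product.

```python
# Hypothetical per-environment targets: ANC strength (0..1) and a bass EQ gain (dB).
PRESETS = {
    "quiet":  {"anc_strength": 0.2, "bass_gain_db": 0.0},
    "speech": {"anc_strength": 0.5, "bass_gain_db": -1.0},
    "engine": {"anc_strength": 1.0, "bass_gain_db": 2.0},
}

def update_params(current, predicted_class, alpha=0.1):
    """Move each parameter a fraction `alpha` toward the preset for the predicted class.

    This is one-pole (exponential) smoothing, applied once per classifier update,
    so parameters glide toward the new target rather than jumping to it.
    """
    target = PRESETS[predicted_class]
    return {k: current[k] + alpha * (target[k] - current[k]) for k in current}

params = dict(PRESETS["quiet"])
for _ in range(3):  # classifier reports "engine" for three consecutive frames
    params = update_params(params, "engine")
print(params)
```

A larger `alpha` reacts faster but makes transitions more audible; a smaller one is smoother but lags behind a genuinely changing environment.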

A lightweight Microcontroller Unit (MCU) coordinates the entire system by scheduling tasks, managing power states, and controlling data flow between the DSP and NPU. This coordination ensures that all processing stages meet strict real-time deadlines within the limited power budget of a wearable device.
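
The article does not specify which scheduling policy earbud firmware uses. As one illustration of checking real-time deadlines, the sketch applies the classic Liu and Layland utilization bound for rate-monotonic scheduling on a single core to a hypothetical periodic task set (worst-case execution time and period, in milliseconds). The test is sufficient but not necessary: a task set that fails it may still be schedulable.

```python
def rm_schedulable(tasks):
    """Liu & Layland sufficient test for rate-monotonic scheduling on one core.

    tasks: list of (wcet_ms, period_ms) pairs, with each deadline equal to its period.
    Returns True if total utilization <= n * (2**(1/n) - 1). False means the
    test cannot prove schedulability, not that a deadline will be missed.
    """
    if not tasks:
        return True
    n = len(tasks)
    utilization = sum(wcet / period for wcet, period in tasks)
    return utilization <= n * (2 ** (1 / n) - 1)

# Hypothetical MCU task sets: (worst-case execution time, period), both in ms.
light = [(2, 10), (3, 20), (1, 50)]  # U = 0.37; bound for n = 3 is about 0.78
heavy = [(5, 10), (6, 20), (5, 50)]  # U = 0.90; exceeds the bound
print(rm_schedulable(light), rm_schedulable(heavy))  # True False
```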

Together, this combination of specialized hardware and optimized runtime scheduling enables seamless, responsive sound adaptation directly inside AI-powered earbuds.

Architecture Overview

The core of an AI earbud’s on-device adaptation capability is a highly integrated System-in-Package (SiP) or System-on-Chip (SoC) designed for ultra-low power and a minimal form factor. Like heterogeneous AI compute platforms such as the Qualcomm AI Engine, it pairs general-purpose control with dedicated signal-processing and neural accelerators, consolidating multiple functional blocks into a single die or package:

- Microcontroller Unit (MCU): The central control unit, managing system-level tasks, power management, peripheral interfaces, and orchestrating the data flow between other components. It handles the overall runtime scheduling of tasks across the specialized accelerators.

- Digital Signal Processor (DSP): Optimized for traditional audio processing algorithms. It performs tasks like audio filtering, equalization, and sample rate conversion, and often acts as a pre-processor for the NPU, preparing raw audio data for ML inference.

- Neural Processing Unit (NPU): A specialized hardware accelerator designed for efficient execution of machine learning inference, particularly neural networks. It provides high MAC/W efficiency for tasks such as environmental sound classification, voice activity detection, and noise reduction, offloading these compute-intensive operations from the MCU or DSP.

- Power Management Unit (PMU): Manages power delivery, voltage regulation, and fine-grained power gating to individual blocks, crucial for extending battery life in a continuous operation scenario.

- Radio Module (Bluetooth/BLE): Handles wireless communication with host devices for audio streaming, control signals, and Over-The-Air (OTA) firmware/model updates.

- Memory Subsystem: Primarily relies on on-chip SRAM for low-latency data access and minimal off-chip LP-DDR (Low-Power Double Data Rate) for larger model weights or temporary buffers, a direct consequence of space and power constraints.

This integrated design prioritizes space and power efficiency over raw computational flexibility, ensuring that the necessary compute for real-time ML is available within the earbud’s diminutive physical and power envelope.

| Component Type | Primary Function | Key Advantage in Earbuds |
|---|---|---|
| MCU | System control, task scheduling, power management | Orchestration, low-power idle states |
| DSP | Traditional audio processing, pre-ML processing | High efficiency for fixed-function audio algorithms |
| NPU | ML inference (neural networks) | High MAC/W efficiency for AI tasks, low latency |
| PMU | Power regulation, power gating | Maximizes battery life, fine-grained power control |
| On-chip SRAM | Fast data access, temporary buffers | Ultra-low power, minimal latency, compact footprint |
| Minimal LP-DDR | Larger model storage, context buffers | Higher capacity than SRAM, but still power-constrained |
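
The memory constraint can be made concrete with a quick fit check: do a model's weights, plus working buffers, fit in on-chip SRAM? The parameter count, the 512 KiB SRAM size, and the 128 KiB reservation below are hypothetical; the point is how the storage format changes the answer.

```python
import math

BYTES_PER_PARAM = {"fp32": 4.0, "int8": 1.0, "int4": 0.5}

def weight_bytes(num_params, dtype):
    """Storage for the weights alone (INT4 packs two parameters per byte)."""
    return math.ceil(num_params * BYTES_PER_PARAM[dtype])

def fits_in_sram(num_params, dtype, sram_bytes, reserved_bytes):
    """True if the weights plus reserved buffers (audio, activations) fit in SRAM."""
    return weight_bytes(num_params, dtype) + reserved_bytes <= sram_bytes

KIB = 1024
SRAM = 512 * KIB      # hypothetical on-chip SRAM size
RESERVED = 128 * KIB  # hypothetical space for audio buffers and activations
PARAMS = 300_000      # hypothetical model size

for dtype in ("fp32", "int8", "int4"):
    # fp32: 1200000 bytes, does not fit; int8 and int4 fit.
    print(dtype, weight_bytes(PARAMS, dtype), fits_in_sram(PARAMS, dtype, SRAM, RESERVED))
```

In this example only the quantized versions fit on-chip; the FP32 weights would have to spill into off-chip LP-DDR, costing both latency and power.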

Performance Characteristics

The performance of on-device AI in earbuds is defined by a critical balance of latency, power efficiency, and computational throughput, all within severe physical and thermal constraints.

- Real-time Latency: For features like ANC or spatial audio, the adaptive control loop must complete within milliseconds (e.g., <20 ms to retune ANC parameters, <10 ms for head-tracked spatial audio), while the anti-noise signal path itself must be far faster, typically well under a millisecond, which is why it usually runs in dedicated low-latency filter hardware. Meeting these budgets requires dedicated hardware accelerators (DSPs, NPUs) and highly optimized runtime scheduling that minimizes pipeline stalls and context-switching overhead, directly affecting how responsive the user experience feels.

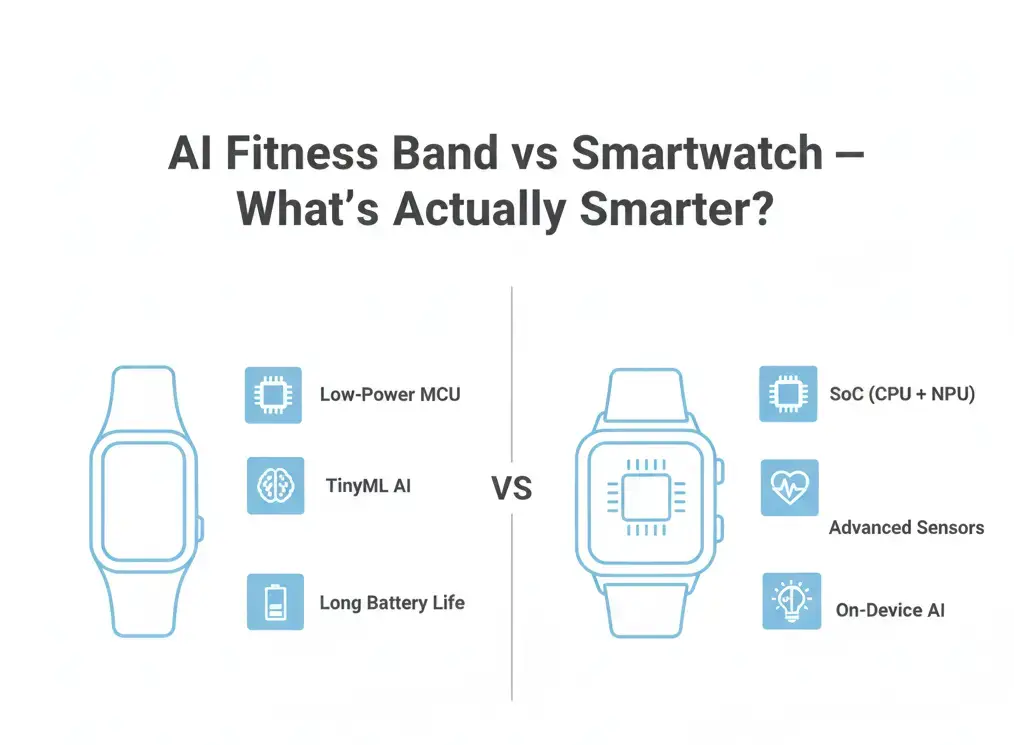

- Power Efficiency (MAC/W): Continuous ML inference demands exceptional power efficiency, which is why embedded processors increasingly rely on specialized accelerators for edge AI. NPUs are designed to deliver orders of magnitude more Multiply-Accumulate operations per watt than general-purpose CPUs, which is crucial for sustaining complex AI tasks within an earbud’s limited battery life (typically 4-8 hours of continuous use).

- Computational Throughput (TOPS): While not reaching datacenter-level TOPS, the NPU must provide sufficient throughput for concurrent ML models (e.g., environmental classification, voice isolation, spatial rendering). This is often achieved through highly parallelized architectures operating on quantized (INT8 or INT4) models.

- Memory Bandwidth and Capacity: The reliance on on-chip SRAM and minimal off-chip LP-DDR imposes strict limits. Models must be compact, and the buffers holding temporal context for ML tasks are often limited to tens or hundreds of kilobytes, which restricts algorithms that need longer historical data.

- Thermal Management: The ultra-compact form factor offers minimal surface area for heat dissipation. Sustained, high-intensity ML inference generates heat, which can lead to thermal throttling. The system’s runtime scheduler must dynamically adjust NPU clock frequencies or even temporarily offload tasks to lower-power DSPs/MCUs to prevent overheating, potentially impacting peak performance during prolonged heavy usage.

- Model Sophistication: Due to compute, memory, and power constraints, ML models are heavily optimized through pruning, quantization, and architectural simplification. This often means a “good enough” ceiling for model accuracy and complexity, trading off against the ability to run larger, more nuanced models.
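
The throttling behavior described under Thermal Management can be sketched as a small clock-level governor with hysteresis: step the NPU clock down when the chip is hot and step it back up only once it is clearly cooler, so the clock does not oscillate around a single threshold. The clock levels and temperature thresholds are hypothetical, and hysteresis is an assumed design choice rather than something the article specifies.

```python
# Hypothetical NPU clock levels and thermal thresholds; illustrative values only.
CLOCK_LEVELS_MHZ = [100, 200, 400]
THROTTLE_ABOVE_C = 42.0
RECOVER_BELOW_C = 38.0

def next_clock_level(level, temp_c):
    """Return the next index into CLOCK_LEVELS_MHZ for the current temperature.

    Steps down one level when hotter than THROTTLE_ABOVE_C, steps up one level
    when cooler than RECOVER_BELOW_C, and holds in between (the hysteresis band).
    """
    if temp_c > THROTTLE_ABOVE_C and level > 0:
        return level - 1
    if temp_c < RECOVER_BELOW_C and level < len(CLOCK_LEVELS_MHZ) - 1:
        return level + 1
    return level

level = len(CLOCK_LEVELS_MHZ) - 1  # start at full speed
trace = []
for temp in [43.0, 43.5, 40.0, 37.0, 37.0]:  # simulated temperature readings
    level = next_clock_level(level, temp)
    trace.append(CLOCK_LEVELS_MHZ[level])
print(trace)  # [200, 100, 100, 200, 400]
```

The 40 °C reading falls inside the hysteresis band, so the clock holds at its throttled level instead of bouncing straight back up; that held period is the "inconsistent peak performance" users can notice during prolonged heavy use.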

Real-World Applications

On-device AI enables a suite of advanced audio features that improve the user experience by providing real-time, context-aware sound adaptation.

- Adaptive Noise Cancellation (ANC): Microphones continuously sample ambient sound. An on-device ML model classifies the noise (e.g., engine rumble, human speech, wind), and the DSP generates an anti-phase sound wave in real time to cancel it. The “adaptive” aspect comes from the ML model’s ability to adjust cancellation parameters dynamically as the environment changes, improving user comfort.

- Spatial Audio with Dynamic Head Tracking: Utilizing on-board inertial measurement units (IMUs) and ML models, the earbud tracks the user’s head movements. The NPU then re-renders the spatial audio scene in real-time, ensuring that sound sources remain fixed in space relative to the user’s perceived environment, rather than their head. This requires extremely low-latency sensor fusion and audio processing for an immersive experience.

- Voice Enhancement and Isolation: During calls, ML models analyze microphone input to differentiate the user’s voice from background noise. The NPU can then actively suppress ambient sounds while enhancing the clarity and presence of the user’s speech, even in challenging acoustic environments, significantly improving call quality.

- Personalized Audio Profiles: Over time, ML models can adapt to user preferences, hearing characteristics, and common listening environments. This data, processed locally for privacy, can inform dynamic equalization or soundstage adjustments to deliver a personalized audio experience.

- Environmental Awareness Modes: Features like “Transparency Mode” use ML to selectively amplify certain external sounds (e.g., human voices, traffic alerts) while maintaining a degree of noise cancellation for others, letting users stay aware of their surroundings without removing their earbuds, which improves safety and convenience.
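
The "adaptive" part of ANC is classically built on adaptive filters. The sketch below is a plain least-mean-squares (LMS) noise canceller, swapped in as the simplest illustration: it is not the ML classifier described above, and it omits the speaker-to-ear secondary path that practical ANC compensates for with the filtered-x LMS (FxLMS) variant. The synthetic two-tap noise path and the step size `mu` are assumptions chosen for the demo.

```python
import random

def lms_cancel(primary, reference, num_taps=4, mu=0.01):
    """Least-mean-squares adaptive noise canceller.

    primary:   signal containing the noise to remove (e.g. an inner mic)
    reference: signal correlated with that noise (e.g. an outer mic)
    Returns (residual, weights), where residual[n] = primary[n] - y[n] and
    y[n] is the filter's current estimate of the noise in the primary signal.
    """
    weights = [0.0] * num_taps
    history = [0.0] * num_taps  # most recent reference sample first
    residual = []
    for d, x in zip(primary, reference):
        history = [x] + history[:-1]
        y = sum(w * h for w, h in zip(weights, history))
        e = d - y
        # LMS update: step each weight along the instantaneous gradient estimate e * x.
        weights = [w + 2 * mu * e * h for w, h in zip(weights, history)]
        residual.append(e)
    return residual, weights

# Demo: white reference noise reaches the primary mic through a
# hypothetical acoustic path 0.8*x[n] - 0.3*x[n-1].
random.seed(0)
ref = [random.uniform(-1.0, 1.0) for _ in range(5000)]
prim = [0.8 * ref[n] - 0.3 * (ref[n - 1] if n > 0 else 0.0) for n in range(len(ref))]
res, w = lms_cancel(prim, ref)
print([round(v, 3) for v in w])  # close to [0.8, -0.3, 0.0, 0.0]
```

Because the filter keeps updating, it tracks a noise path that drifts over time; in the architecture the article describes, an ML classifier would sit above such a loop, choosing parameters like the step size or filter preset for the detected environment.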

These applications directly benefit from the on-device approach, ensuring consistent, private, and highly responsive performance regardless of network connectivity or the capabilities of a paired host device.

Limitations

Despite its advantages, the on-device AI paradigm in earbuds faces inherent limitations stemming directly from hardware constraints and runtime scheduling challenges.

- Model Sophistication Ceiling: The restricted memory (on-chip SRAM, minimal LP-DDR) and NPU compute capacity impose a “good enough” ceiling on ML model complexity. Highly sophisticated models requiring large parameter counts or extensive temporal context cannot be deployed, limiting the depth of environmental understanding or the nuance of adaptive features. This can lead to less precise adaptations compared to cloud-based AI.

- Architectural Lock-in: Dedicated NPUs, while power-efficient, are optimized for specific ML operations (e.g., convolutions, matrix multiplications). This specialization can lead to architectural lock-in, making it challenging to efficiently execute future ML paradigms (e.g., novel sparse networks, very large transformer models) that may have different computational patterns without significant hardware redesigns.

- Limited Contextual Awareness: The scarcity of on-chip SRAM restricts the amount of historical audio or sensor data that can be buffered. This limits the “memory” of ML models, potentially hindering performance in tasks requiring long-term temporal context for smoother, more predictive adaptations.

- Thermal Throttling: The minuscule earbud chassis provides minimal thermal dissipation. Continuous, high-intensity ML inference, even on efficient NPUs, generates heat. The runtime scheduler must implement aggressive thermal throttling, reducing NPU clock speeds or even temporarily disabling certain features to prevent overheating, which can lead to inconsistent peak performance.

- OTA Update Constraints: The limited flash memory capacity (typically 8-64 MB) and the relatively low bandwidth of Bluetooth Low Energy (BLE) restrict the size and frequency of Over-The-Air (OTA) firmware and ML model updates. This slows down the pace of feature improvement and the deployment of major model overhauls.

- Development Complexity: Optimizing ML models for these highly constrained, specialized hardware architectures requires significant effort in model pruning, quantization, and custom kernel development. The tooling and software stacks for these embedded NPUs are often less mature than for general-purpose compute, increasing development time and complexity.
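
The contextual-awareness limit is easy to quantify, because raw PCM audio consumes memory at sample rate times bytes per sample times channels. The sketch converts a buffer size into seconds of audio; the 64 KiB and 256 KiB buffer sizes and the audio formats are illustrative assumptions within the "tens or hundreds of kilobytes" range the article gives for context buffers.

```python
def buffer_seconds(buffer_bytes, sample_rate_hz, bytes_per_sample, channels=1):
    """Seconds of raw PCM audio that fit in a buffer of the given size."""
    bytes_per_second = sample_rate_hz * bytes_per_sample * channels
    return buffer_bytes / bytes_per_second

KIB = 1024
# 16 kHz, 16-bit mono audio: 32,000 bytes per second.
print(buffer_seconds(64 * KIB, 16_000, 2))   # 2.048
print(buffer_seconds(256 * KIB, 16_000, 2))  # 8.192
# 48 kHz, 16-bit stereo audio: 192,000 bytes per second.
print(round(buffer_seconds(256 * KIB, 48_000, 2, channels=2), 3))  # 1.365
```

Even a generous 256 KiB buffer holds only a few seconds of raw audio, which is why long-horizon context is hard to keep on-chip.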

Why It Matters

The shift to on-device AI in earbuds is a critical engineering evolution that fundamentally redefines the user experience and the capabilities of personal audio devices. The architecture described here lets earbuds adapt sound through responsive local processing instead of relying on cloud inference.

Firstly, it ensures optimized real-time performance. For features like Adaptive Noise Cancellation or spatial audio, the millisecond-level latency achieved through local processing is non-negotiable for user comfort and immersion. Any reliance on external compute would introduce perceptible delays, rendering these features ineffective or even disorienting.

Secondly, it addresses the paramount concern of power efficiency. By leveraging specialized DSPs and NPUs, the system can execute complex ML inference with significantly higher MAC/W efficiency than general-purpose processors. This is a critical path to delivering continuous AI functionality within the meager power budget of an earbud battery, enabling all-day adaptive audio.

Thirdly, privacy and security are inherently enhanced. Processing sensitive audio streams (user voice, ambient environment) locally mitigates the risks associated with transmitting raw data to the cloud. This builds user trust and simplifies compliance with increasingly stringent data privacy regulations, positioning privacy as a core feature rather than an afterthought.

Finally, on-device AI provides strong robustness and autonomy. Features function reliably regardless of network connectivity or the processing power of a paired host device. This provides a consistent, premium user experience across diverse environments and usage scenarios, making the earbuds truly intelligent and independent audio companions. This engineering approach is not merely an optimization; it is an enabler for the next generation of personalized, context-aware audio experiences.

Key Takeaways

- Latency-Driven Design: On-device processing is mandated by the need for millisecond-level responsiveness in real-time audio adaptation features like ANC and spatial audio.

- Specialized Hardware for Efficiency: AI earbuds rely on highly integrated SoCs/SiPs with dedicated DSPs and NPUs to achieve exceptional power efficiency (MAC/W) for continuous ML inference within severe power budgets.

- Privacy and Autonomy: Local processing of sensitive audio data enhances user privacy and ensures robust feature operation independent of network connectivity or host device capabilities.

- Hardware Constraints Dictate ML: Memory limitations (on-chip SRAM, minimal LP-DDR) and NPU compute capacity necessitate highly compact, quantized ML models, imposing a “good enough” ceiling on model sophistication.

- Runtime Scheduling is Critical: The system’s MCU orchestrates tasks across specialized accelerators, managing data flow and power states to meet real-time deadlines and mitigate thermal throttling.

- Tradeoffs are Inherent: The design involves compromises between model complexity, sustained performance (due to thermal limits), and architectural flexibility for future ML paradigms.

Frequently Asked Questions

How do AI earbuds adapt sound using on-device AI?

AI earbuds adapt sound by running machine learning models directly on the earbud’s System-on-Chip (SoC). The device analyzes ambient noise and user voice through microphones, then dynamically adjusts audio processing such as adaptive noise cancellation, equalization, and spatial audio in real time.

Why do AI earbuds process audio on-device instead of in the cloud?

On-device AI allows sound processing to happen instantly without sending audio data to external servers. This reduces latency, improves privacy, and ensures features like noise cancellation and voice enhancement work even without an internet connection.

What hardware enables AI processing inside earbuds?

AI earbuds rely on specialized processors such as Digital Signal Processors (DSPs) and Neural Processing Units (NPUs) integrated within a System-on-Chip. These accelerators efficiently run machine learning models for tasks like environmental sound detection, voice isolation, and adaptive audio processing.