
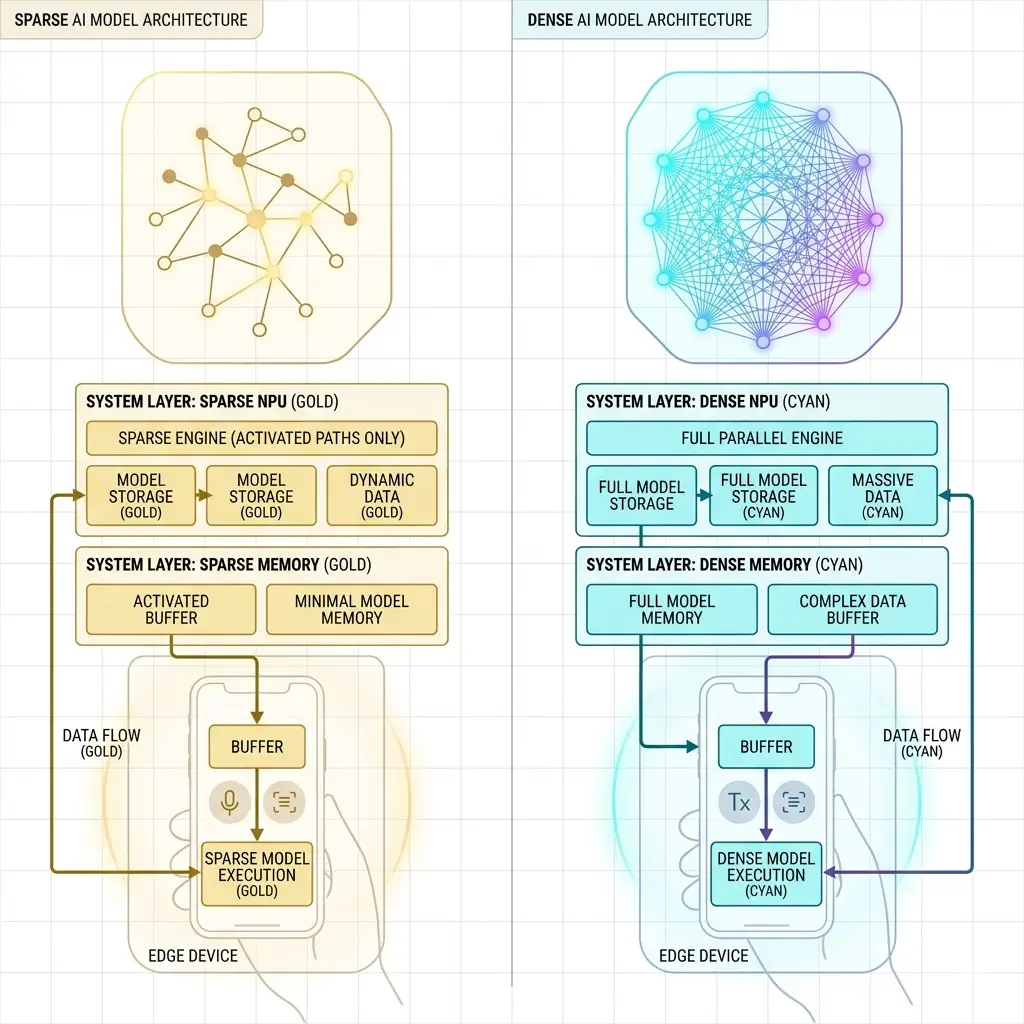

Sparse and dense AI models differ in how they use computation. Dense models process every parameter, delivering predictable, high throughput. Sparse models skip zero or low-value parameters, reducing memory usage and power consumption, which can make them a strong fit for edge AI systems when the hardware and software support sparsity.

In the landscape of on-device AI, the imperative to deploy increasingly complex neural networks within stringent power, memory, and thermal envelopes presents a formidable engineering challenge. As models scale, the sheer volume of parameters and the associated computational demands quickly exceed silicon constraints, particularly within the memory subsystem. This necessitates a critical architectural decision: whether to employ dense models, which process all data uniformly, or sparse models, which selectively engage compute resources based on data significance. Understanding the deep engineering implications of each approach is paramount for designing efficient, deployable AI accelerators.

What Are Dense and Sparse AI Models

Dense AI Models operate on the principle of uniform computation. Every parameter within a tensor, regardless of its numerical value, is typically involved in a multiply-accumulate (MAC) operation. This paradigm is well-suited for the highly parallel, synchronous MAC arrays and vector processing units that form the bedrock of modern AI accelerators. Data is generally structured as contiguous tensors, allowing for predictable memory access patterns and simplified dataflow management.

The core design philosophy prioritizes deterministic throughput and ease of hardware implementation by leveraging decades of optimization for matrix operations, even if this entails processing numerically insignificant values. This approach ensures consistent performance, but can lead to higher power consumption and thermal output, potentially limiting sustained performance on smaller devices.

Sparse AI Models, conversely, adopt an information-centric processing approach. They exploit the inherent redundancy in many neural networks, where a significant portion of weights or activations can be zero or near-zero without substantial impact on model accuracy—often achieved through techniques like neural network pruning. By identifying and skipping these non-salient values, sparse models aim to reduce the total number of MAC operations, the active memory footprint, and dynamic power consumption. This architectural pivot from brute-force computation towards dynamic resource engagement is a direct response to the memory wall (exceeding on-chip SRAM, high DRAM access) and the power wall (excessive energy consumption for redundant computations) encountered when scaling dense models on resource-constrained edge devices.
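As a rough illustration of the pruning idea above, the following sketch zeroes the smallest-magnitude weights of a tiny matrix and counts how many MAC operations remain if zeros are skipped. The function names (`magnitude_prune`, `count_macs`), the example weights, and the 50% sparsity target are all assumptions made for illustration; real pruning pipelines usually retrain or fine-tune after pruning to recover accuracy.

```python
def magnitude_prune(weights, sparsity):
    """Return a copy of `weights` with the smallest-magnitude fraction zeroed."""
    rows, cols = len(weights), len(weights[0])
    # Rank every (row, col) position by the absolute value of its weight.
    order = sorted(
        ((r, c) for r in range(rows) for c in range(cols)),
        key=lambda rc: abs(weights[rc[0]][rc[1]]),
    )
    pruned = [row[:] for row in weights]
    for r, c in order[: int(rows * cols * sparsity)]:
        pruned[r][c] = 0.0
    return pruned


def count_macs(weights, skip_zeros):
    """Count multiply-accumulates for one matrix-vector product."""
    return sum(1 for row in weights for w in row if not (skip_zeros and w == 0.0))


# Hypothetical 3x4 weight matrix with several near-zero entries.
W = [
    [0.90, -0.02, 0.00, 0.45],
    [0.01, -0.70, 0.03, 0.00],
    [-0.05, 0.04, 0.60, -0.80],
]

W_pruned = magnitude_prune(W, sparsity=0.5)
dense_macs = count_macs(W_pruned, skip_zeros=False)   # dense hardware does all of them
sparse_macs = count_macs(W_pruned, skip_zeros=True)   # sparse hardware skips zeros
print(f"dense MACs: {dense_macs}, sparse MACs: {sparse_macs}")
```

The MAC count only captures the compute side; the index and control overhead needed to actually skip those zeros is a separate cost.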

Sparse vs Dense AI Models: Key Differences

The differences between sparse and dense AI models span computation, memory access, and hardware efficiency.

| Feature | Dense AI Models | Sparse AI Models |
|---|---|---|
| Computational Paradigm | Brute-force, uniform computation | Information-centric, dynamic computation |
| Data Processing | All parameters active, fixed-size tensors | Only salient (non-zero) parameters are active |
| Hardware Alignment | Highly parallel, synchronous MAC arrays, vector units | Specialized control logic, index processing, scatter/gather units |
| Memory Access Pattern | Predictable, strong spatial/temporal locality | Irregular, non-uniform, index-driven |
| Core Challenge | Inherent inefficiency from processing redundant data | “Irregularity Tax” (control overhead, index bandwidth) |

Performance vs Efficiency Trade-offs

| Metric | Dense AI Models | Sparse AI Models |
|---|---|---|
| Throughput (MACs/cycle) | Predictable, high under full load | Potentially higher effective MACs/cycle (useful work) |
| Hardware Utilization | High for MAC arrays, predictable | Variable, potentially lower for MAC arrays due to irregular dataflow |
| Latency Predictability | High, consistent | Variable, dependent on the sparsity pattern and overhead |
| Efficiency Metric | GFLOPS (raw compute) | GOPS (useful operations), operations per Joule |
| Bottleneck | Memory bandwidth, thermal limits | Control overhead, index bandwidth, and random memory access |

Power & Thermal Behavior

Dense models exhibit a predictable and often high power consumption profile. Their uniform computation leads to sustained high utilization of MAC arrays and memory bandwidth, translating into a consistent thermal load. While this allows for straightforward power budgeting, larger models often push devices toward their thermal design power (TDP) limits. The device may then throttle, dynamically reducing the NPU’s clock frequency or voltage and degrading sustained performance over time. The hidden cost of the dense approach is the energy expended on processing numerically insignificant values.

Sparse models, conversely, aim for significantly lower average dynamic power consumption by skipping redundant computations. Power usage becomes more dynamic and potentially bursty, fluctuating based on the sparsity pattern of inputs and model weights.

This requires more sophisticated, fine-grained power gating and clock management at the architectural level to fully realize efficiency gains. These optimizations are especially important in modern AI hardware, where NPUs, GPUs, and CPUs each handle inference workloads differently.

While the overall energy footprint is reduced, the specialized control logic (index processing and address generation) required for sparse operations still consumes both static and dynamic power, contributing to the “irregularity tax.” Efficient sparse processing shifts the focus from peak throughput under full load to intelligent resource usage based on data significance within strict power constraints.

Memory & Bandwidth Handling

The memory subsystem is the primary battleground for AI model efficiency. Dense models, with their contiguous tensor structures, benefit from strong spatial and temporal locality. This simplifies memory controller design, allows for efficient prefetching, and maximizes cache hit rates, leading to predictable and high effective bandwidth utilization for sequential accesses, as illustrated by the NVIDIA GPU memory hierarchy. However, as model sizes grow, the sheer volume of parameters often exceeds the capacity of fast on-chip SRAM, forcing frequent, power-intensive, and latency-prone accesses to slower off-chip DRAM. This constant demand for off-chip data is the memory wall that dense models frequently hit, with performance capped by memory bandwidth rather than by compute.
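The capacity side of the memory wall comes down to simple arithmetic, sketched below. The parameter count and the 8 MiB SRAM size are hypothetical placeholders, not figures for any particular chip, and the calculation covers weights only (activations and intermediate buffers would need space too).

```python
def footprint_bytes(n_params, bits_per_param):
    """Storage needed for a dense set of weights, in bytes."""
    return n_params * bits_per_param // 8


N_PARAMS = 5_000_000           # hypothetical model size
SRAM_BYTES = 8 * 1024 * 1024   # hypothetical 8 MiB of on-chip SRAM

fits = {}
for name, bits in [("FP32", 32), ("FP16", 16), ("INT8", 8)]:
    size = footprint_bytes(N_PARAMS, bits)
    fits[name] = size <= SRAM_BYTES
    print(f"{name}: {size / 2**20:.1f} MiB, fits on-chip: {fits[name]}")
```

Any precision whose footprint exceeds the on-chip budget implies repeated DRAM traffic for weights on every inference.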

Sparse models directly confront the memory wall by reducing the active memory footprint. By storing only non-zero values, they significantly decrease total data volume. However, this comes with an “irregularity tax” on the memory subsystem.

Storing sparse data requires additional memory for indices (e.g., Compressed Sparse Row/Column formats), which can consume a non-trivial portion of the saved space. More critically, sparse memory access patterns are inherently irregular and non-uniform. This leads to lower cache hit rates, increased demand for random access, and a higher burden on memory controllers and address generation units.
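To make the index overhead concrete, here is a minimal sketch of Compressed Sparse Row (CSR) storage together with a byte-count comparison against dense storage. The value and index widths (1-byte INT8 values, 2-byte column indices, 4-byte row pointers) and the 1024x1024 layer size are assumptions chosen for illustration; actual hardware formats vary.

```python
def to_csr(dense):
    """Convert a dense 2-D list into CSR arrays (values, col_idx, row_ptr)."""
    values, col_idx, row_ptr = [], [], [0]
    for row in dense:
        for c, v in enumerate(row):
            if v != 0:
                values.append(v)
                col_idx.append(c)
        row_ptr.append(len(values))  # marks where the next row starts
    return values, col_idx, row_ptr


def dense_bytes(rows, cols, value_bytes):
    return rows * cols * value_bytes


def csr_bytes(rows, nnz, value_bytes, col_index_bytes, row_ptr_bytes):
    # One value plus one column index per non-zero, plus rows + 1 row pointers.
    return nnz * (value_bytes + col_index_bytes) + (rows + 1) * row_ptr_bytes


matrix = [
    [0.9, 0.0, 0.0, 0.45],
    [0.0, -0.7, 0.0, 0.0],
    [-0.05, 0.0, 0.6, -0.8],
]
values, col_idx, row_ptr = to_csr(matrix)
print(values, col_idx, row_ptr)

# Hypothetical 1024x1024 INT8 layer at several sparsity levels.
ROWS = COLS = 1024
dense_size = dense_bytes(ROWS, COLS, value_bytes=1)
csr_sizes = {}
for sparsity in (0.5, 0.75, 0.9):
    nnz = round(ROWS * COLS * (1.0 - sparsity))
    csr_sizes[sparsity] = csr_bytes(ROWS, nnz, 1, 2, 4)
    print(f"sparsity {sparsity:.2f}: CSR {csr_sizes[sparsity]} B vs dense {dense_size} B")
```

Under these assumed widths, each non-zero costs three bytes instead of one, so CSR only becomes smaller than dense storage above roughly two-thirds sparsity. Narrower indices or block-based formats would shift that break-even point.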

Specialized hardware, such as scatter/gather units and dedicated index processors, is required to efficiently translate logical sparse operations into physical memory accesses. This matters most for on-device inference, which, unlike cloud AI, is heavily constrained by local memory bandwidth and latency.
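The gather pattern that such hardware accelerates can be seen in a plain software version of a CSR matrix-vector product. This is a sketch only: the function name `csr_matvec` and the example data are illustrative, and a real accelerator would perform the indexed loads in dedicated units rather than in a scalar loop.

```python
def csr_matvec(values, col_idx, row_ptr, x):
    """Compute y = A @ x where A is stored in CSR form."""
    y = []
    for r in range(len(row_ptr) - 1):
        acc = 0.0
        for k in range(row_ptr[r], row_ptr[r + 1]):
            # Gather: the address into x comes from the index stream, so
            # consecutive iterations may touch non-adjacent locations.
            acc += values[k] * x[col_idx[k]]
        y.append(acc)
    return y


# CSR form of a 3x4 matrix with six non-zeros (illustrative data).
values = [0.9, 0.45, -0.7, -0.05, 0.6, -0.8]
col_idx = [0, 3, 1, 0, 2, 3]
row_ptr = [0, 2, 3, 6]
x = [1.0, 2.0, 3.0, 4.0]

y = csr_matvec(values, col_idx, row_ptr, x)
print(y)
```

The sequence of reads into `x` follows `col_idx` (0, 3, 1, 0, 2, 3) rather than a linear stride, which is the kind of access a prefetcher tuned for dense tensors handles poorly.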

The challenge is ensuring that the bandwidth saved from reduced data volume is not offset by the overhead of managing irregular index and data fetches. Otherwise, effective DRAM utilization may remain low despite reduced data movement. Interconnect limitations can also become a bottleneck, particularly in multi-core or multi-chip designs.

Software Ecosystem & Tooling

The software ecosystems for dense and sparse models differ significantly in complexity and maturity. For dense models, compilers and runtimes (e.g., TVM, XLA, ONNX Runtime) are highly optimized for matrix operations, vectorization, and parallel execution on compute units such as GPUs, CPUs, and NPUs. Dataflow is predictable, allowing for efficient graph optimization, memory allocation, and runtime scheduling. The toolchains are relatively straightforward, abstracting away much of the underlying hardware complexity for developers.

For sparse AI models, the software ecosystem is significantly more complex and still evolving. Efficient sparse execution requires specialized compilers and runtime schedulers that can:

- Identify and exploit sparsity: This involves static analysis of model weights and dynamic analysis of activations.

- Manage sparse data structures: Converting dense tensors to sparse formats (e.g., CSR, CSC, COO) and back, often requiring dynamic reordering.

- Generate efficient sparse code: Targeting specialized hardware units (index processors, scatter/gather) and optimizing for irregular memory access patterns.

- Handle dynamic sparsity: Adapting execution based on varying sparsity levels during inference.

This complexity extends to the entire stack, from model training (e.g., pruning techniques) to deployment, demanding a tighter co-design between software and hardware to effectively manage the “irregularity tax” and realize the promised benefits. This can impact the ease of offline execution and model updates on deployed devices.
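One way a runtime might handle dynamic activation sparsity is to measure it per input and choose a kernel accordingly, as in the sketch below. The 0.7 threshold, the function names, and the two toy kernels are assumptions for illustration; a production scheduler would base the decision on profiled hardware costs rather than a fixed constant.

```python
def relu(xs):
    return [max(0.0, v) for v in xs]


def activation_sparsity(xs):
    """Fraction of activations that are exactly zero."""
    return sum(1 for v in xs if v == 0.0) / len(xs)


def dense_dot(w_row, xs):
    return sum(w * v for w, v in zip(w_row, xs))


def sparse_dot(w_row, xs):
    # Build the list of non-zero positions, then visit only those.
    nonzero = [i for i, v in enumerate(xs) if v != 0.0]
    return sum(w_row[i] * xs[i] for i in nonzero)


def dispatch_dot(w_row, xs, threshold=0.7):
    """Pick the sparse path only when enough activations are zero."""
    if activation_sparsity(xs) >= threshold:
        return sparse_dot(w_row, xs), "sparse"
    return dense_dot(w_row, xs), "dense"


w_row = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
activations = relu([-1.0, 2.0, -3.0, -0.5, 0.25, -2.0, -4.0, -1.0])

result, kernel = dispatch_dot(w_row, activations)
print(f"kernel: {kernel}, result: {result}")
```

Both paths return the same value; the dispatch only changes how much work is done to reach it.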

Real-World Deployment

In real-world deployment scenarios, the choice between dense and sparse models hinges on the specific constraints and performance requirements of the target device.

Dense models offer a simpler integration path due to their predictable performance and mature toolchains. They are often preferred where:

- Deterministic throughput is paramount: Applications requiring consistent, low-latency responses, especially for sustained workloads.

- Hardware resources are relatively abundant: Larger edge devices or cloud deployments where power/memory constraints are less severe or can be mitigated by aggressive quantization (e.g., INT8) without resorting to structural sparsity.

- A simpler hardware/software stack is desired: Reducing development and maintenance overhead.

However, for larger models on highly constrained edge devices (e.g., IoT sensors, microcontrollers), dense models quickly hit the memory wall and power wall. Thermal throttling and excessive off-chip memory accesses then degrade sustained performance.

Sparse models are critical for deployment on resource-constrained edge devices where memory and power bottlenecks are severe. They are employed when:

- Extreme memory footprint reduction is necessary: Enabling larger models to fit within limited on-chip SRAM.

- Aggressive power reduction is required: Extending battery life for always-on applications within a tight power envelope, typically a few watts sustained on mobile devices.

- The “irregularity tax” can be effectively managed: This requires specialized hardware support (dedicated sparse compute units, intelligent memory controllers) and a sophisticated software stack to avoid negating the benefits.

Deployment success for sparse models is highly dependent on the efficiency of the entire system, from the sparsity-aware model architecture to the specialized silicon and the optimizing compiler. Without these, a general-purpose dense NPU will struggle to process sparse data efficiently, leading to underutilization of its MAC arrays and potentially higher power consumption due to control overhead.

Which Design Is More Efficient

Neither approach is inherently “more efficient” in all contexts; efficiency depends on the target architecture, workload, and system constraints.

Dense models are highly efficient in terms of raw MAC utilization on hardware designed for uniform matrix operations. Their efficiency shines when the memory subsystem can continuously feed the compute units without becoming a bottleneck, and when the power/thermal envelope can sustain high, predictable loads. They are efficient in exploiting parallelism for predictable throughput. However, they are inherently inefficient in terms of useful computation per Joule when processing redundant, near-zero values.

Sparse models offer superior efficiency in terms of useful computation per Joule and active memory footprint by eliminating redundant operations and data movement. They are designed to be efficient in information-centric processing. However, this efficiency comes at the cost of increased architectural complexity and the “irregularity tax.” If the overheads associated with managing sparse data (index storage, irregular memory access, control logic) outweigh the benefits of skipping zero operations, then sparse models can paradoxically be less efficient than a well-optimized dense model, especially on general-purpose dense accelerators. This inefficiency can show up as higher or less predictable latency for critical inference tasks.
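The break-even argument above can be captured in a toy cost model. The per-operation costs below are arbitrary relative units invented for illustration, not measurements of any hardware, and the model assumes every non-zero pays a fixed extra overhead for index handling and irregular access.

```python
def dense_cost(n_weights, e_mac, e_fetch):
    """Every weight is fetched and multiplied."""
    return n_weights * (e_mac + e_fetch)


def sparse_cost(n_weights, sparsity, e_mac, e_fetch, e_overhead):
    """Only non-zeros are processed, but each pays an irregularity overhead."""
    nnz = round(n_weights * (1.0 - sparsity))
    return nnz * (e_mac + e_fetch + e_overhead)


def break_even_sparsity(e_mac, e_fetch, e_overhead):
    """Sparsity above which the sparse path costs less than the dense one."""
    return e_overhead / (e_mac + e_fetch + e_overhead)


# Hypothetical relative costs (arbitrary units).
E_MAC, E_FETCH, E_OVERHEAD = 1.0, 2.0, 1.0
N = 1000

threshold = break_even_sparsity(E_MAC, E_FETCH, E_OVERHEAD)
baseline = dense_cost(N, E_MAC, E_FETCH)
low = sparse_cost(N, 0.2, E_MAC, E_FETCH, E_OVERHEAD)
high = sparse_cost(N, 0.5, E_MAC, E_FETCH, E_OVERHEAD)
print(f"break-even sparsity: {threshold:.2f}")
print(f"dense: {baseline}, sparse@20%: {low}, sparse@50%: {high}")
```

With these placeholder costs, a model that is only 20% sparse costs more on the sparse path than on the dense one. A larger per-non-zero overhead pushes the break-even sparsity higher.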

Ultimately, the most efficient design often involves a hybrid approach, leveraging quantization for dense models to reduce bit-width, and applying structured or unstructured sparsity where the architectural support exists to manage the irregularity overhead effectively.
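A hybrid of this kind might look like the sketch below, which applies an N:M structured sparsity pattern (keep the 2 largest-magnitude values in every group of 4) and then symmetric INT8 quantization. The 2:4 pattern, the per-row scale, and the function names are assumptions chosen for illustration; the article does not prescribe a specific scheme.

```python
def prune_n_of_m(row, n=2, m=4):
    """Keep the n largest-magnitude values in each group of m, zero the rest."""
    out = []
    for start in range(0, len(row), m):
        group = row[start:start + m]
        keep = sorted(range(len(group)), key=lambda i: abs(group[i]), reverse=True)[:n]
        out.extend(v if i in keep else 0.0 for i, v in enumerate(group))
    return out


def quantize_int8(row):
    """Symmetric quantization: q = round(w / scale), clamped to [-127, 127]."""
    max_abs = max(abs(v) for v in row)
    scale = max_abs / 127 if max_abs else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in row]
    return q, scale


# Hypothetical weight row of eight values (two groups of four).
weights = [0.9, -0.02, 0.1, 0.5, 0.01, -0.7, 0.03, 0.2]

pruned = prune_n_of_m(weights)
q, scale = quantize_int8(pruned)
dequantized = [v * scale for v in q]
print(pruned)
print(q, scale)
```

Because the pattern is regular, the position of each kept value within its group can be encoded in a couple of bits, which keeps index overhead far lower than a general unstructured format.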

Key Takeaways

- Memory Subsystem is King: The primary driver for choosing sparse over dense models is the memory wall; sparsity aims to reduce the active memory footprint and off-chip DRAM accesses.

- Trade-offs are Inherent: Dense models offer architectural simplicity and predictable throughput at the cost of processing redundant data. Sparse models offer superior efficiency but incur an “irregularity tax” in control logic and memory access complexity.

- Hardware-Software Co-design is Critical: Realizing the benefits of sparse models demands specialized silicon (index processors, scatter/gather units) and a sophisticated software stack to manage irregular dataflow and memory access patterns.

- Deployment Context Dictates Choice: Dense models suit predictable, less constrained environments. Sparse models are essential for extreme power and memory-constrained edge devices, provided the system can effectively mitigate the overheads of irregularity.

- Efficiency is Contextual: “Efficiency” must be defined by the specific system constraints (e.g., operations per second, operations per Joule, memory footprint) rather than raw computational throughput alone.

- Ultimately, the choice between sparse and dense AI models depends on system constraints, workload requirements, and efficiency goals.