Edge AI is powering everything from smartphone cameras to self-driving cars—but running AI models on small, battery-powered devices is extremely challenging. These devices must process data quickly while staying within strict power and heat limits.

So how do they manage to run complex AI models efficiently? The answer lies in a key design choice: using integer arithmetic instead of floating point. In this article, we’ll break down why edge AI chips use INT8, how it works, and what it means for real-world performance and efficiency.

In simple terms, integer arithmetic uses smaller and simpler numbers. Smaller numbers are easier for hardware to process, which means faster computation and lower energy usage. That’s why edge devices like smartphones, cameras, and IoT systems rely on INT8 instead of complex floating-point calculations.

The goal is a simple, practical explanation of how modern AI runs on everyday devices.

What Is Integer Arithmetic in Edge AI Chips?

Integer arithmetic refers to performing calculations using fixed-size whole numbers (like 8-bit integers) instead of floating-point numbers. Unlike FP32, which supports a very wide range of values, INT8 can represent only 256 distinct values (-128 to 127 when signed). Working within this small range lets hardware perform operations faster while consuming less power. As a result, edge devices can run AI models longer with less heat and less battery drain.

How Edge AI Chips Use Integer Arithmetic in AI Processing

Before running AI models on edge devices, complex floating-point numbers are converted into simpler integer values. This process is called quantization.

Simple Idea

Think of it like compressing large, detailed numbers into smaller ones that are easier and faster for hardware to process.

How it works

- Floating-point values (FP32) → converted to integers (INT8)

- Large value range → mapped into a smaller fixed range

Two key components are used:

- Zero Point (Z): Ensures zero is represented correctly

- Scaling Factor (S): Controls how values are compressed
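One common way to pick these two values is from the smallest and largest numbers observed in a tensor. The sketch below assumes an asymmetric (affine) scheme over the signed INT8 range of -128 to 127. The function names and the sample range of -1.0 to 3.0 are illustrative and do not come from any particular framework.

```python
QMIN, QMAX = -128, 127  # signed INT8 range


def choose_qparams(x_min, x_max):
    """Return (scale S, zero point Z) for an affine INT8 mapping."""
    # Widen the range to include 0.0 so that zero is exactly representable.
    x_min = min(x_min, 0.0)
    x_max = max(x_max, 0.0)
    if x_max == x_min:
        return 1.0, 0  # degenerate case: every value is zero
    scale = (x_max - x_min) / (QMAX - QMIN)
    zero_point = round(QMIN - x_min / scale)
    zero_point = max(QMIN, min(QMAX, zero_point))
    return scale, zero_point


def quantize(x, scale, zero_point):
    """Map a real value to INT8: round(x / S) + Z, clamped to the range."""
    q = round(x / scale) + zero_point
    return max(QMIN, min(QMAX, q))


def dequantize(q, scale, zero_point):
    """Map an INT8 value back to an approximate real value: S * (q - Z)."""
    return scale * (q - zero_point)


# Hypothetical observed range for one tensor.
scale, zero_point = choose_qparams(-1.0, 3.0)
print("S =", scale, "Z =", zero_point)
print("0.0 maps to", quantize(0.0, scale, zero_point))
```

Because the range is widened to include 0.0, real zero lands exactly on Z and dequantizes back to exactly 0.0, which is what "represented correctly" means here.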

Example:

Imagine converting a value like:

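0.75 → becomes → 95 (in INT8), assuming for illustration a scale of S = 1/127 and a zero point of Z = 0: 0.75 / S ≈ 95.25, which rounds to 95. This makes calculations faster because the chip now works with 8-bit integers instead of 32-bit floating-point values.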
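The conversion above can be reproduced in a few lines. This is a minimal sketch that assumes a symmetric scheme with S = 1/127 and Z = 0 (the article does not state which parameters it used, so these are assumptions chosen because they yield 95). The helper names are illustrative.

```python
def quantize(x, scale, zero_point=0):
    """Real value -> INT8: round(x / S) + Z, clamped to [-128, 127]."""
    q = round(x / scale) + zero_point
    return max(-128, min(127, q))


def dequantize(q, scale, zero_point=0):
    """INT8 -> approximate real value: S * (q - Z)."""
    return scale * (q - zero_point)


scale = 1.0 / 127  # assumed: maps roughly [-1.0, 1.0] onto [-127, 127]

q = quantize(0.75, scale)
x_hat = dequantize(q, scale)

print("quantized:", q)
print("recovered:", round(x_hat, 4))
```

The recovered value is close to 0.75 but not identical. That small gap is the rounding error quantization introduces.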

What happens during processing?

- AI operations use INT8 math (fast + efficient)

- Intermediate sums are accumulated in higher precision (like INT32) to avoid overflow

- Outputs are rescaled back to INT8 before being passed to the next layer
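The steps above can be sketched as an INT8 dot product with an INT32 accumulator. This is a simplified model in plain Python, not how any specific NPU implements it: zero points are assumed to be 0, the scales are assumed values, and the function names are illustrative.

```python
INT8_MIN, INT8_MAX = -128, 127
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1


def int8_dot(a, w):
    """Multiply-accumulate two INT8 vectors into one INT32 accumulator."""
    assert len(a) == len(w)
    acc = 0
    for ai, wi in zip(a, w):
        assert INT8_MIN <= ai <= INT8_MAX and INT8_MIN <= wi <= INT8_MAX
        acc += ai * wi  # a single product can need up to 16 bits
        # Python ints never overflow, so check the INT32 bound explicitly.
        assert INT32_MIN <= acc <= INT32_MAX
    return acc


def requantize(acc, in_scale, w_scale, out_scale):
    """Rescale an INT32 accumulator to an INT8 output (zero points = 0)."""
    real_value = acc * in_scale * w_scale
    q = round(real_value / out_scale)
    return max(INT8_MIN, min(INT8_MAX, q))


activations = [95, -40, 127, 12]  # already-quantized inputs
weights = [64, 100, -127, 8]      # already-quantized weights

acc = int8_dot(activations, weights)
out = requantize(acc, in_scale=1 / 127, w_scale=1 / 127, out_scale=2 / 127)

print("INT32 accumulator:", acc)
print("INT8 output:", out)
```

The accumulator here is far outside the INT8 range, which is why the running sum needs a wider type before it is scaled back down for the next layer.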

The TensorFlow Lite documentation explains how integer quantization enables efficient AI inference on edge devices.

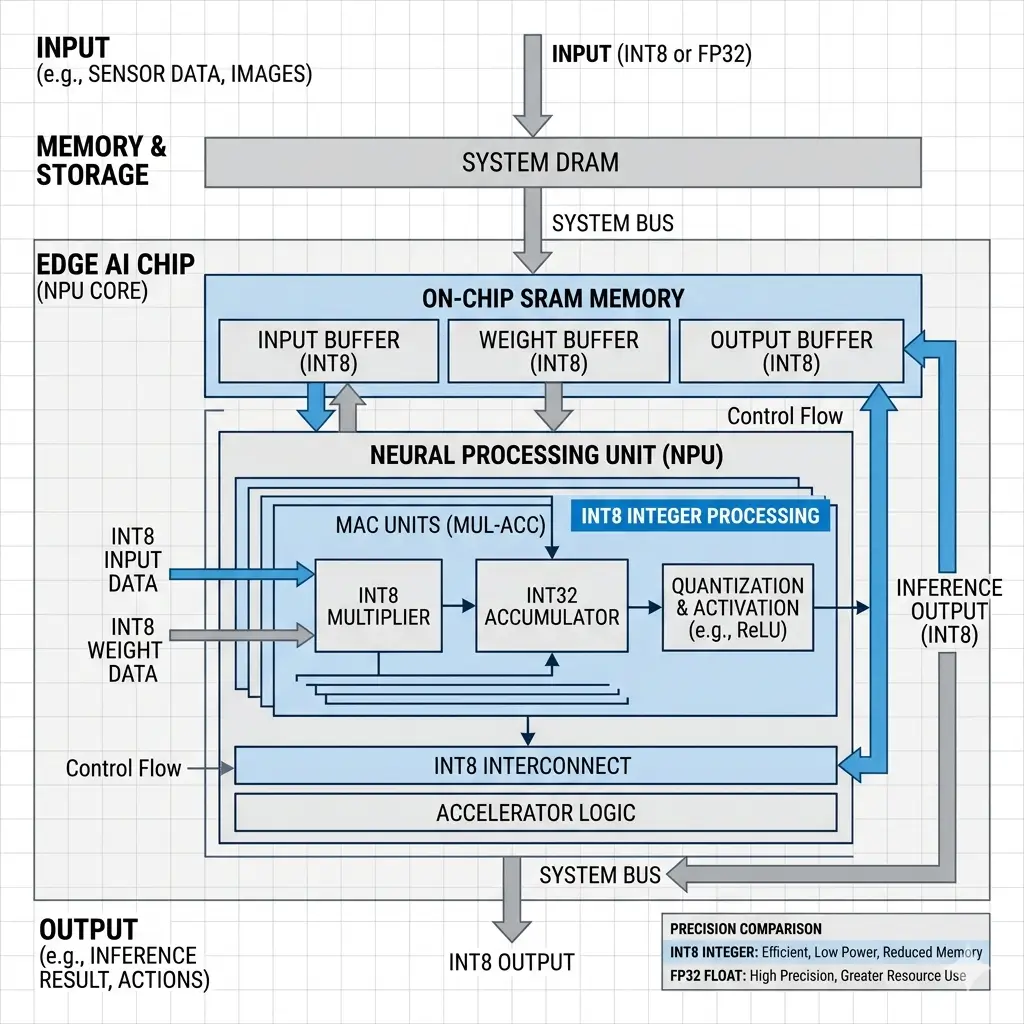

Architecture of Edge AI Chips Using Integer Arithmetic

The preference for integer arithmetic fundamentally shapes the compute architecture of edge AI chips, leading to specialized designs known as Neural Processing Units (NPUs) or AI accelerators. Runtimes such as ONNX Runtime support optimized INT8 inference across different edge AI hardware platforms.

- Simplified Arithmetic Logic Units (ALUs): Integer ALUs are significantly less complex than Floating-Point Units (FPUs). An FP32 FPU requires complex logic for exponent alignment, mantissa multiplication, and normalization, leading to larger silicon area, higher transistor counts, deeper pipelines, and more clock cycles per operation. Integer ALUs, especially for INT8, are simpler, shallower, and can execute operations in fewer cycles, often in a single cycle for MACs.

- High-Density MAC Arrays: NPUs typically feature large arrays of parallel integer MAC units. These arrays are optimized for the specific data flow of neural networks, allowing thousands of INT8 MAC operations to be executed concurrently. The simplicity of integer MACs enables a much higher density of these units within a given silicon area compared to FP32 MACs.

- Reduced Data Path Widths: With INT8 data, the internal data paths, registers, and memory interfaces can be 8-bit wide, compared to 32-bit for FP32. This reduction in width directly translates to smaller interconnects, lower power consumption for data movement, and reduced silicon area, mitigating interconnect limitations.

- Optimized On-Chip Memory: Edge AI chips rely heavily on fast, on-chip SRAM to store weights and activations, minimizing costly and power-intensive accesses to slower off-chip DRAM. INT8 data is 4x smaller than FP32, allowing significantly more data to be stored in the same amount of SRAM. This maximizes on-chip memory utilization, reduces memory stalls, and ensures the compute units are continuously fed, thereby boosting overall system efficiency and throughput.

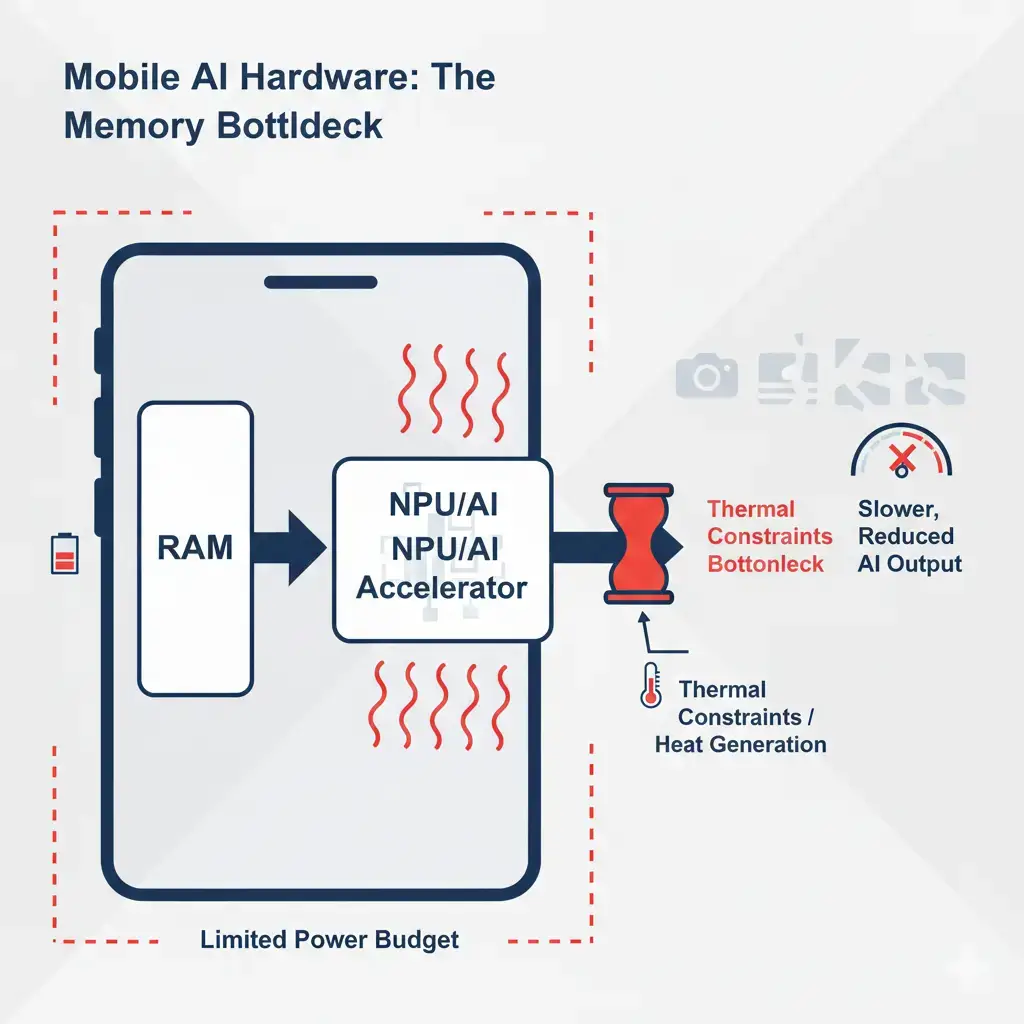

- Lower Thermal Footprint: The reduced complexity and power consumption of integer operations generate substantially less heat. This simplifies thermal management, allowing devices to operate at peak performance for extended periods without hitting thermal throttling limits, which is critical for sustained inference in passively cooled or compact enclosures.
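The on-chip memory point in the list above comes down to simple arithmetic. The sketch below uses hypothetical figures (a 2 MiB SRAM and a 1.5-million-parameter model) purely for illustration, and it ignores the small extra storage needed for scales, zero points, and activations.

```python
BYTES_PER_VALUE = {"FP32": 4, "INT8": 1}


def values_that_fit(sram_bytes, dtype):
    """How many values of the given type fit in the SRAM budget."""
    return sram_bytes // BYTES_PER_VALUE[dtype]


def model_size_bytes(num_params, dtype):
    """Storage needed for the weights alone."""
    return num_params * BYTES_PER_VALUE[dtype]


sram = 2 * 1024 * 1024  # hypothetical 2 MiB of on-chip SRAM
params = 1_500_000      # hypothetical model size

for dtype in ("FP32", "INT8"):
    size = model_size_bytes(params, dtype)
    print(dtype, "weights:", size, "bytes, fits in SRAM:", size <= sram)
```

With these assumed numbers the FP32 weights spill into off-chip DRAM while the INT8 weights stay entirely on chip.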

Performance Benefits When Edge AI Chips Use Integer Arithmetic

The architectural choices driven by integer arithmetic yield profound performance benefits critical for edge deployments:

| Characteristic | Integer Arithmetic (e.g., INT8) | Floating-Point Arithmetic (e.g., FP32) |
|---|---|---|
| Power Efficiency | Extremely low energy per operation (e.g., <1 pJ/op for MAC) | High energy per operation (e.g., 5-20 pJ/op for MAC) |
| Inference Latency | Low, predictable (fewer cycles per operation, shallower pipelines) | Higher, less predictable (more cycles, deeper pipelines) |
| Throughput (TOPS) | High and sustained (less heat, so less thermal throttling) | Peak throughput often limited by thermal throttling |
| Silicon Area | Significantly smaller ALUs and data paths | Larger, more complex FPUs and wider data paths |
| Memory Bandwidth | Reduced requirements (4x smaller data) | Higher requirements |
| On-Chip Memory | Maximized utilization (more weights/activations in SRAM) | Limited utilization (fewer weights/activations in SRAM) |

- Extremely Low Power Consumption: Integer operations consume significantly less power per operation (pJ/op) than floating-point operations. This enables continuous AI capabilities and extends battery life for mobile and IoT devices within their power envelopes.

- Predictable, Low Latency: Simpler integer ALUs lead to shallower pipelines and fewer clock cycles per operation. This translates directly to lower and more predictable inference latency, crucial for real-time applications where immediate responses are paramount. Advanced optimization techniques for integer inference are also covered in the NVIDIA TensorRT optimization guide, which highlights real-world performance improvements.

- Sustained High Throughput: Lower power consumption means less heat generation, allowing the NPU to sustain its peak computational throughput (TOPS/MACs per second) for extended periods without thermal throttling. This ensures consistent performance for continuous workloads, which matters because AI features are a common reason smartphones heat up.

- Maximized On-Chip Memory Utilization: The 4x reduction in data size for INT8 compared to FP32 allows more model weights and activations to reside in fast, on-chip SRAM. This minimizes costly off-chip DRAM accesses, reducing memory stalls and ensuring compute units are continuously fed, thereby maximizing their utilization and overall system efficiency.
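To put the power figures in perspective, the sketch below estimates compute energy per inference from a per-MAC energy cost. It reuses the illustrative values from the table (1 pJ as an upper bound for INT8, 5 to 20 pJ for FP32) and assumes a hypothetical model that needs 300 million MACs per inference. It counts arithmetic only and leaves out memory traffic, which often dominates in practice.

```python
def mac_energy_mj(num_macs, pj_per_mac):
    """Energy in millijoules for num_macs operations (1 mJ = 1e9 pJ)."""
    return num_macs * pj_per_mac / 1e9


macs = 300_000_000  # hypothetical MACs per inference

int8_mj = mac_energy_mj(macs, 1.0)        # table upper bound for INT8
fp32_low_mj = mac_energy_mj(macs, 5.0)    # table lower bound for FP32
fp32_high_mj = mac_energy_mj(macs, 20.0)  # table upper bound for FP32

print(f"INT8: up to {int8_mj:.2f} mJ per inference")
print(f"FP32: {fp32_low_mj:.2f} to {fp32_high_mj:.2f} mJ per inference")
```

Under these assumptions the INT8 path needs at most about 0.3 mJ per inference, against 1.5 to 6 mJ for FP32. The gap compounds quickly when a device runs inference many times per second.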

Real-World Applications

The advantages of integer arithmetic are enabling a broader range of edge AI applications:

- Autonomous Navigation Systems: In vehicles, real-time object detection, lane keeping, and pedestrian recognition require immediate, low-latency inference. INT8 NPUs provide the necessary speed and power efficiency to process sensor data continuously without draining the vehicle’s power budget or overheating.

- Continuous Voice Assistants (Wake Word Detection): Devices like smart speakers or smartphones can continuously listen for wake words with minimal power draw. The NPU remains in a low-power state, executing INT8 inference, and only activates higher-power components upon detecting the trigger phrase.

- Industrial IoT and Predictive Maintenance: Sensors on factory floors or remote equipment perform continuous anomaly detection or condition monitoring. INT8 inference allows these battery-powered devices to run AI models for extended periods, identifying potential failures before they occur, without requiring frequent recharging or large power supplies.

- Smart Cameras and Video Analytics: For continuous video stream analysis (e.g., person detection, facial recognition, gesture recognition), INT8 inference enables high frame rates and sustained processing on-device, reducing the need to send all video data to the cloud, thereby saving bandwidth and improving privacy. This highlights a core advantage of on-device AI over cloud AI.

Limitations of Using Integer Arithmetic in Edge AI

While integer arithmetic (INT8) makes edge AI fast and efficient, it also comes with a few trade-offs that can affect real-world usage.

- 🎯 Slight accuracy loss: Because INT8 uses lower precision, some AI features—like health tracking or image recognition—may be a bit less accurate in certain situations.

- ⚡ Occasional performance issues: On devices without dedicated AI hardware, extra processing steps can sometimes cause small delays or less smooth performance.

- 🔄 Not ideal for complex tasks: Advanced AI tasks, like large language models or high-quality image processing, may not run well at low precision unless they are quantized carefully, and might rely on the cloud instead.

- 🧩 Mixed precision usage: Some parts of AI models still need higher precision (FP16/FP32), which can increase power usage slightly in specific cases.

- 🔒 Device-dependent performance: AI performance can vary depending on the chip inside the device—premium devices usually handle INT8 much better than budget ones.
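The accuracy trade-off in the list above has two sources: rounding error for values inside the quantized range, and clipping error for values outside it. The sketch below measures both, assuming a symmetric scheme with S = 1/127 and Z = 0. The sample values and the helper name are illustrative.

```python
def round_trip(x, scale, zero_point=0):
    """Quantize x to INT8 and convert it straight back to a real value."""
    q = max(-128, min(127, round(x / scale) + zero_point))
    return scale * (q - zero_point)


scale = 1.0 / 127  # assumed: covers roughly [-1.0, 1.0]

# Values inside the range lose at most half a quantization step.
in_range = [-0.9, -0.333, 0.0, 0.1234, 0.75]
rounding_errors = [abs(x - round_trip(x, scale)) for x in in_range]

# A value outside the range is clipped to the largest representable value.
clipping_error = abs(1.8 - round_trip(1.8, scale))

print("max rounding error:", max(rounding_errors))
print("half a step (S/2): ", scale / 2)
print("clipping error:    ", clipping_error)
```

Rounding error stays below S/2, while clipping error can be far larger. This is why choosing a scale that matches the real data range matters so much for quantized accuracy.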

Real-World Impact on Users

Integer arithmetic doesn’t just improve performance—it makes everyday devices better to use.

- 💰 Lower cost: Less cloud usage reduces data and device costs

- 🔋 Longer battery life: Uses less power, so AI features can run longer

- ⚡ Faster response: Real-time processing without delays

- 📶 Works offline: No need for internet, improving reliability and privacy

Key Takeaways

- INT8 is faster and more power-efficient than FP32

- Reduces memory usage by 4× compared with FP32

- Enables real-time AI on edge devices

- Slight accuracy trade-offs but manageable

Frequently Asked Questions

Why do Edge AI chips use integer arithmetic?

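Edge AI chips use integer arithmetic because INT8 operations need simpler hardware, less memory, and less energy than FP32 operations. This reduces power consumption and improves performance within the tight power and thermal limits of edge devices.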