Table of Contents

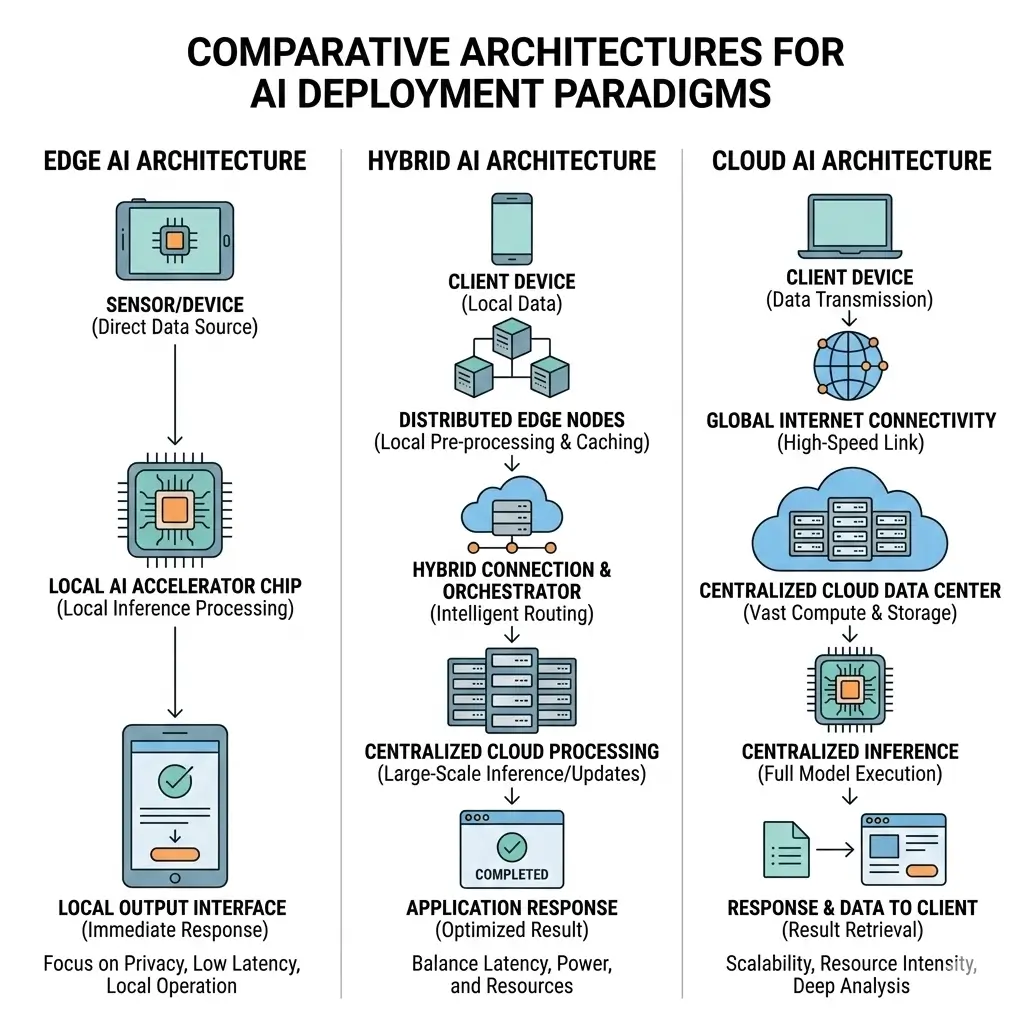

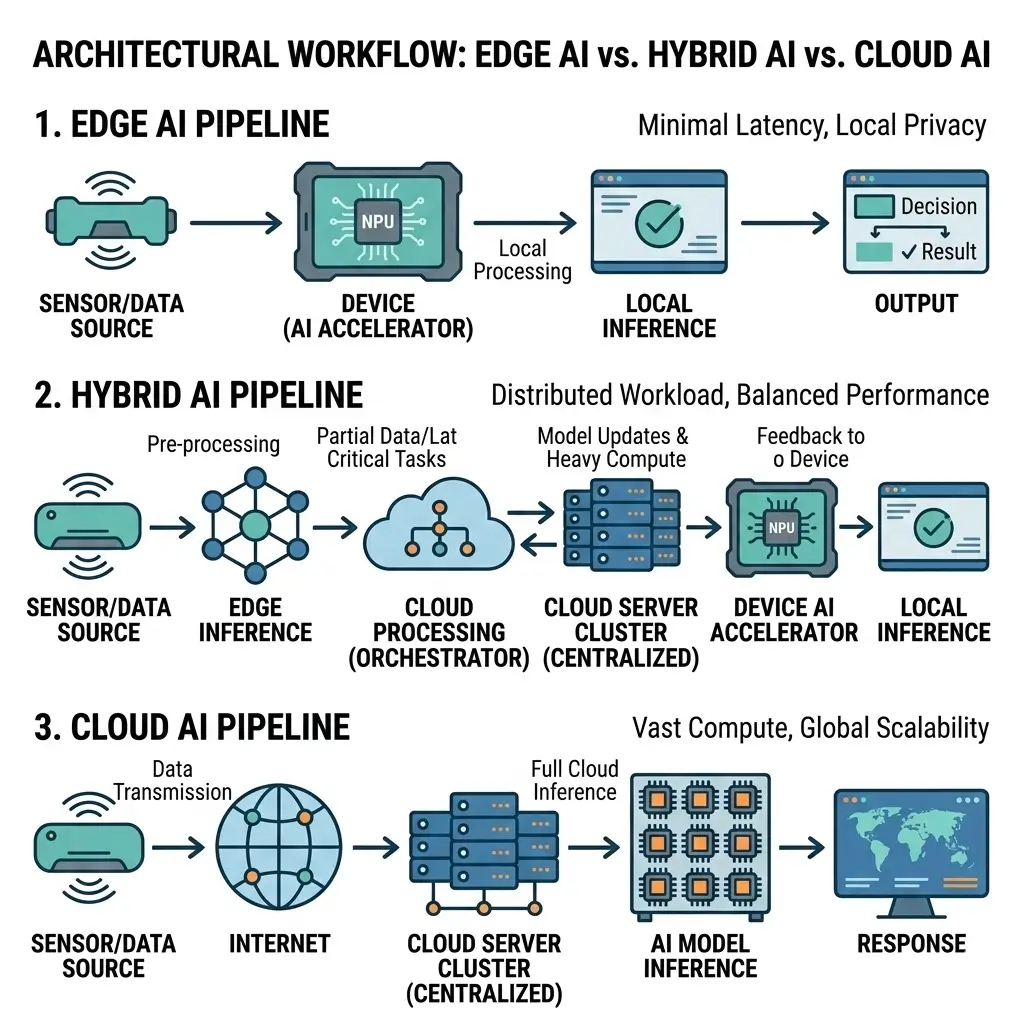

Edge AI vs Hybrid AI vs Cloud AI describes three different ways artificial intelligence workloads are deployed. Edge AI runs inference directly on local devices to minimize latency and power usage. Cloud AI processes data in centralized data centers with virtually unlimited compute resources for training and large-scale inference. Hybrid AI combines both approaches, distributing workloads between edge devices and cloud infrastructure to balance real-time responsiveness, scalability, and power efficiency.

In simple terms:

Edge AI: AI processing happens directly on devices such as smartphones, cameras, or IoT sensors.

Cloud AI: AI workloads run on remote servers inside hyperscale data centers.

Hybrid AI: AI tasks are distributed between edge devices and cloud infrastructure.

Modern AI systems don’t all run in the same place. Some process data directly on devices like smartphones, cameras, or industrial sensors, while others rely on massive cloud data centers for training and large-scale inference. This shift has created three major deployment models: Edge AI, Hybrid AI, and Cloud AI. Understanding Edge AI vs Hybrid AI vs Cloud AI is essential because each architecture makes different trade-offs in latency, power efficiency, scalability, and infrastructure cost.

What It Is

- Edge AI: Refers to AI processing performed directly on local devices (On-Device Ai Cloud Ai) at the periphery of the network, close to the data source. This includes devices ranging from IoT sensors and smartphones to industrial robots and autonomous vehicles.

- Cloud AI: Involves AI processing executed on remote, centralized servers within hyperscale data centers. This paradigm leverages vast, shared compute resources accessible via network infrastructure.

- Hybrid AI: Represents an integrated approach where AI workloads are intelligently distributed and orchestrated across both edge devices and cloud infrastructure. Decision-making regarding compute location is dynamic, based on factors like latency, data sensitivity, bandwidth, and computational intensity. Real-device implication: The fundamental distinction between these paradigms, particularly the choice between local and remote processing, directly influences factors like battery life in mobile devices and the real-time responsiveness of industrial control systems.

How It Works

- Edge AI: Data is captured and processed locally. Inference models, often highly optimized (quantized, pruned), run on dedicated AI accelerators (NPUs, DSPs, custom ASICs) embedded within the device. This minimizes data transmission to the cloud, reducing latency and bandwidth consumption. Model updates typically occur periodically via Over-The-Air (OTA) mechanisms.

- Cloud AI: Raw or pre-processed data is transmitted to the cloud. Large, complex models are trained on massive datasets using powerful GPU clusters or custom AI ASICs such as Google’s Tensor Processing Units (TPUs), which are purpose-built accelerators for large-scale machine learning workloads. Inference can also occur in the cloud for applications requiring extensive computing or access to global data. Resource allocation is elastic, scaling up or down based on demand.

- Hybrid AI: An intelligent orchestration layer determines where specific AI tasks are best executed. Low-latency, privacy-sensitive, or bandwidth-intensive inference tasks are offloaded to the edge. Complex model training, global analytics, large-scale data aggregation, and less time-critical inference are handled by the cloud. Data synchronization and model versioning are managed across both environments. Real-device implication: The choice of processing location directly impacts sustained performance; edge devices might offer immediate, burst performance but struggle with prolonged, heavy workloads, whereas cloud systems provide consistent, high-throughput processing for continuous operations.

Architecture Overview

The architectural distinctions primarily revolve around compute placement, data flow, and the underlying silicon optimization targets. Real-device implication: The thermal stability of a device is directly tied to its architecture; edge devices are designed for passive cooling in compact form factors, while cloud data centers rely on active, large-scale cooling solutions to prevent performance degradation.

Edge AI Architecture:

- Compute: Highly integrated, power-efficient System-on-Chips (SoCs) featuring specialized AI accelerators (NPUs, custom DSPs, reconfigurable logic). Often includes low-power microcontrollers for orchestration.

- Memory: On-chip or near-chip memory (e.g., LPDDR, HBM for higher-end edge) with strong emphasis on minimizing data movement energy. Processing-in-Memory (PIM) or near-memory compute architectures are emerging.

- Connectivity: Local wireless (BLE, Wi-Fi), cellular (5G/LTE), or wired interfaces for data ingestion and intermittent cloud communication.

- Power: Battery-powered or low-power line-powered, with aggressive power management (e.g., burst-and-sleep cycles, dynamic voltage and frequency scaling).

Cloud AI Architecture:

- Compute: Rack-scale deployments of high-performance servers, each containing multiple GPUs, custom AI ASICs (e.g., Google TPUs), or high-core-count CPUs. Liquid cooling is common for high-density racks.

- Memory: High-bandwidth memory (HBM) co-located with accelerators, large DRAM pools, and vast distributed storage systems (NVMeoF, object storage).

- Connectivity: High-speed, low-latency internal data center networks (InfiniBand, RoCE, custom interconnects like NVLink/NVSwitch, Google’s ICI) for inter-accelerator communication. External high-bandwidth fiber links.

- Power: Grid-scale power delivery, redundant power supplies, and massive cooling infrastructure. Power efficiency is measured at the data center level (PUE).

Hybrid AI Architecture:

- Compute: A combination of edge and cloud compute resources. Requires robust APIs and middleware for seamless interaction.

- Memory: Distributed memory management across edge and cloud. Data caching and synchronization mechanisms are critical.

- Connectivity: Secure, reliable network links between edge devices and cloud infrastructure, often leveraging VPNs, SD-WAN, or dedicated interconnects.

- Power: Optimized for overall system power efficiency by intelligently distributing workloads. For example, offloading simple inference to a low-power edge device rather than transmitting data to a high-power cloud GPU. This intelligent orchestration of workloads between edge and cloud is a hallmark of advanced AI deployments (Hybrid On-Device Cloud Ai Cameras).

Architectural Comparison

| Feature | Edge AI | Hybrid AI | Cloud AI |

|---|---|---|---|

| Primary Compute Location | Device-local | Distributed (Edge & Cloud) | Centralized (Data Center) |

| Typical Power Envelope | Milliwatts to tens of Watts | Variable (sum of edge + cloud components) | Kilowatts per server, Megawatts per data center |

| Latency Profile | Sub-millisecond to low milliseconds (local) | Variable (mix of local and network latency) | Tens to hundreds of milliseconds (network-bound) |

| Data Locality | High (data stays on device) | Distributed (data moves as needed) | Low (data transmitted to cloud) |

| Compute Scale | Limited per device, massive parallelization across many devices | Flexible, scales per workload | Virtually limitless |

| Primary Optimization | Performance/Watt, Real-time, Privacy | Optimal resource utilization, Flexibility | Throughput, Elasticity, Scale |

| Hardware Focus | Specialized Co-processors, PIM | Orchestration Layer, Secure Connectivity | High-density Accelerators, High-BW Interconnects |

Performance Characteristics

Power efficiency stands as a critical performance characteristic across all paradigms, though its manifestation and optimization strategies differ significantly.

Edge AI Performance:

- Power Efficiency: Achieved through highly optimized, often fixed-function or reconfigurable AI accelerators designed for maximum inferences per watt. Techniques like aggressive quantization (INT8, INT4), pruning, and sparsity exploitation are fundamental. Processing-in-Memory (PIM) architectures aim to drastically reduce the energy cost associated with data movement, a factor that can dominate total power consumption in traditional Von Neumann architectures.

- Latency: Extremely low due to local processing, avoiding network round-trip. This is crucial for real-time control systems, autonomous navigation, and immediate user interaction.

- Throughput: Limited by the individual device’s compute capacity, but can be scaled by deploying a multitude of devices in parallel.

- Model Complexity: Constrained by the device’s thermal design power (TDP) and memory footprint. Running large foundation models directly on edge devices is currently impractical without significant architectural breakthroughs.

Cloud AI Performance:

- Power Efficiency: While individual components (GPUs, ASICs) are highly optimized for FLOPS/Watt, the sheer scale of cloud operations means the cumulative energy footprint is enormous. Optimization focuses on data center-level efficiency (PUE), advanced cooling (liquid cooling), and maximizing utilization of expensive accelerators. The “dark cost” of data gravity—the energy expended in moving petabytes of data—becomes a significant, often underestimated, power consumer.

- Latency: Inherently higher due to network transmission delays. This makes it unsuitable for ultra-low-latency applications.

- Throughput: Massive, capable of processing petabytes of data and training models with billions or trillions of parameters simultaneously. This is achieved through parallel processing across thousands of accelerators, interconnected by high-bandwidth, low-latency networks (e.g., 800Gb/s InfiniBand, NVLink).

- Model Complexity: Virtually unconstrained, enabling the training and deployment of the largest, most complex AI models.

Hybrid AI Performance:

- Power Efficiency: Aims for system-level power efficiency by intelligently offloading tasks. For instance, simple, repetitive inference on the edge consumes far less power than continuously streaming raw sensor data to the cloud for processing. Conversely, training a large model in the cloud is more power-efficient than attempting to distribute it across many underpowered edge devices. The engineering challenge lies in optimizing the total energy budget, encompassing data transfer costs.

- Latency: Optimized by design; critical tasks run on the edge for low latency, while less time-sensitive tasks leverage cloud scale.

- Throughput: Adaptive, leveraging the strengths of both. Edge devices handle local bursts, while the cloud manages aggregate or complex workloads.

- Model Complexity: Flexible. Edge devices run optimized, smaller models, while the cloud handles larger, more complex models and retraining.

Power Efficiency and Performance Bottlenecks

| Paradigm | Primary Power Efficiency Strategy | Key Performance Bottleneck (Power/Thermal) | Hardware Impact | Hidden Tradeoffs |

|---|---|---|---|---|

| Edge AI | Maximize performance per watt through on-device inference using optimized models, quantization, and specialized accelerators (NPUs, DSPs). | Limited compute capacity and strict device thermal envelopes constrain model size and sustained workloads. | Hyper-specialized co-processors (domain-specific ASICs, neuromorphic chips, in-memory compute). Memory-compute co-design (PIM) reduces the energy cost of data movement. | Model staleness due to infrequent updates; energy and bandwidth tradeoffs for OTA model updates; heterogeneous hardware management complexity. |

| Cloud AI | Optimize throughput per watt across hyperscale GPU or ASIC clusters with advanced cooling and high utilization. | Data movement energy (“data gravity”) and large cooling requirements become major power bottlenecks at scale. | High-density GPU clusters, TPUs, and AI ASICs connected with high-bandwidth fabrics (NVLink, NVSwitch, InfiniBand). Software-defined infrastructure for elastic compute scaling. | Vendor lock-in, massive infrastructure cost, environmental footprint of hyperscale data centers, and network latency to edge devices. |

| Hybrid AI | Distribute workloads intelligently between edge devices and cloud infrastructure to balance latency and energy consumption. | Orchestration complexity, synchronization overhead, and secure communication between distributed systems. | Edge accelerators combined with cloud AI clusters. Requires orchestration layers, secure networking, and unified MLOps pipelines across heterogeneous hardware. | Larger attack surface, operational complexity, and challenges maintaining data consistency across distributed deployments. |

Real-World Applications

- Edge AI: Autonomous vehicles (real-time perception, decision-making), smart cameras (on-device object detection, privacy-preserving analytics), industrial IoT (predictive maintenance, anomaly detection at source), medical wearables (real-time health monitoring), smart appliances (local voice control).

- Cloud AI: Large language model training and inference, complex scientific simulations, global fraud detection, personalized recommendation engines, large-scale image/video processing for content moderation, and drug discovery.

- Hybrid AI: Smart cities (edge sensors for traffic, cloud for city-wide optimization), retail analytics (edge for in-store customer behavior, cloud for inventory and supply chain), remote healthcare (edge for patient monitoring, cloud for specialist consultation and record keeping), manufacturing (edge for real-time quality control, cloud for factory-wide process optimization).

Limitations

Each paradigm confronts inherent limitations that constrain its scalability and power efficiency.

Edge AI Limitations:

- Model Complexity Ceiling: Fundamental power and thermal envelopes limit model size and computational intensity. While optimizations help, there remains a hard limit to the computational intensity and model size that can be executed on small, battery-powered devices without significant accuracy loss or thermal throttling.

- Fragmented Ecosystem and Tooling: Diverse hardware and software stacks lead to fragmentation, hindering unified development, deployment, and security at scale. This fragmentation increases MLOps overhead and limits economies of scale.

- Cumulative Energy Footprint: While individual devices are low-power, scaling to billions of “always-on” edge devices creates a collective energy demand that is often underestimated and challenging to manage sustainably.

Cloud AI Limitations:

- Physical Limits of Hyperscale Data Centers: Land availability, energy grid capacity, and water resources impose practical limits on the physical expansion of hyperscale data centers. Scaling to even larger models and accommodating more users will necessitate fundamental shifts in data center design or a more distributed computing paradigm.

- Network Latency to the Edge: The speed of light fundamentally limits latency to geographically distant users or edge devices, constraining real-time, interactive AI applications that rely solely on cloud inference.

- Monopolization of AI Innovation: The immense capital required to build and operate hyperscale cloud AI infrastructure could concentrate AI innovation among a limited number of large players, potentially stifling competition.

Hybrid AI Limitations:

- Orchestration Complexity: Managing distributed MLOps pipelines across heterogeneous edge devices and diverse cloud services introduces significant operational complexity. This encompasses data pipelines, model versioning, security protocols, and lifecycle management.

- Security Surface Area: Expanding the deployment footprint to include numerous edge devices inherently expands the attack surface, necessitating robust, end-to-end security measures across a highly distributed environment.

- Data Consistency and Synchronization: Maintaining data consistency and synchronizing models and data across edge and cloud environments introduces overheads in terms of bandwidth, latency, and computational resources required for reconciliation.

Why It Matters

The selection of an AI deployment paradigm directly impacts the feasibility, cost, and sustainability of intelligent systems. Power efficiency, as a primary analysis lens, highlights critical engineering tradeoffs:

- For Edge AI: It dictates the form factor, battery life, thermal design, and ultimately the complexity of AI models that can be deployed. Pushing the limits of performance/watt is paramount for pervasive, real-time intelligence.

- For Cloud AI: It drives the design of hyperscale data centers, influencing cooling technologies, power delivery infrastructure, and the overall environmental impact. The “dark cost” of data movement, both within and to/from the cloud, represents a major power efficiency concern for petabyte-scale operations.

- For Hybrid AI: It underpins the intelligent distribution of workloads, ensuring that compute is performed at the most energy-efficient location for a given task, minimizing overall system power consumption while meeting performance requirements. This approach necessitates sophisticated resource management and orchestration capabilities.

A thorough understanding of these power-related bottlenecks and architectural implications is therefore crucial for designing scalable, reliable, and economically viable AI solutions for the future.

Key Takeaways

- Power Efficiency is Context-Dependent: Edge AI optimizes for performance/watt at the device level, Cloud AI for throughput/watt at the data center level, and Hybrid AI for overall system power efficiency through intelligent workload distribution.

- Silicon Specialization is Paramount: Edge AI drives the development of hyper-specialized, low-power AI co-processors and PIM. Cloud AI demands high-density, high-bandwidth accelerators and advanced interconnects. Hybrid AI necessitates robust orchestration of silicon and secure, efficient communication hardware.

- Trade-offs are Inherent: Edge AI faces model complexity limits and fragmentation. Cloud AI contends with data gravity, network latency to the edge, and the physical limits of data centers. Hybrid AI introduces significant orchestration and security complexity.

- Future Scalability Hinges on Continuous Optimization: Continued advancements in power-efficient silicon, memory-compute co-design, advanced cooling solutions, and intelligent workload orchestration are critical for overcoming inherent limitations and effectively scaling AI across all environments.