Table of Contents

The Intel Panther Lake NPU is a dedicated neural processing unit integrated into Intel’s Panther Lake client SoC, designed to accelerate on-device artificial intelligence workloads with up to 50 INT8 TOPS of inference performance. It enables low-latency, power-efficient execution of AI tasks such as real-time speech processing, computer vision, and local inference of large language models by offloading neural workloads from the CPU and GPU. The NPU forms a core component of Intel’s AI PC architecture, allowing sustained on-device AI execution within mobile power and thermal constraints.

The proliferation of artificial intelligence workloads on client devices necessitates specialized hardware acceleration to meet performance and power efficiency demands. Intel’s latest architectural iteration, the Panther Lake platform, introduces a highly capable Neural Processing Unit (NPU) designed precisely for this purpose. The Intel Panther Lake NPU is a critical component in defining the “AI PC” experience, providing a dedicated, power-optimized engine for neural network inference directly on the device. This analysis examines the engineering rationale, architectural specifics, and performance characteristics of the Intel Panther Lake NPU, exploring its role in enabling a new generation of intelligent applications.

Design Overview

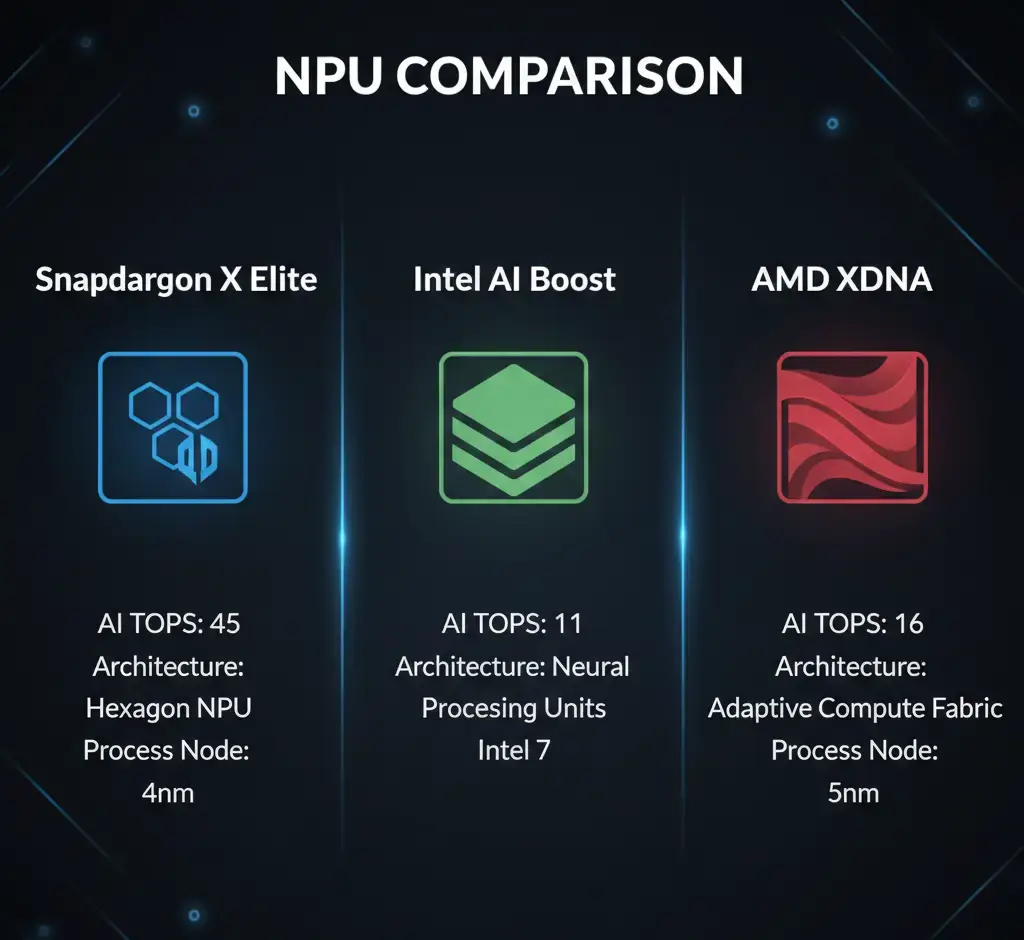

The Intel Panther Lake NPU is a purpose-built hardware accelerator integrated within the Panther Lake System-on-Chip (SoC). It is specifically designed to efficiently execute neural network models, offloading these computationally intensive tasks from the general-purpose CPU and integrated GPU. This dedicated silicon aims to deliver a peak performance of 50 INT8 TOPS, positioning Intel competitively within the rapidly evolving on-device AI landscape, a trend also evident in the detailed comparison of Snapdragon X Elite vs Intel AI Boost vs AMD XDNA. Its primary function is to enable sustained, real-time AI inference with significantly lower power consumption than traditional compute architectures, thereby extending battery life and enhancing the responsiveness of AI-driven features on client platforms.

How It Works

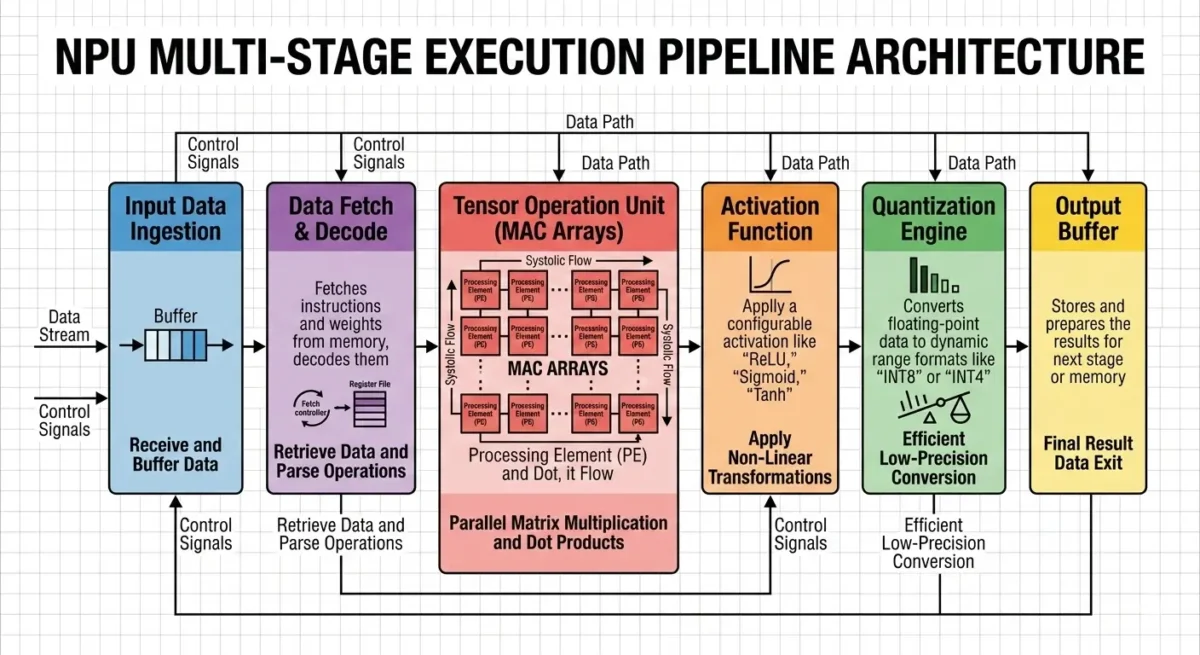

The Intel Panther Lake NPU operates by efficiently mapping and executing neural network graphs onto its specialized compute fabric. When an AI workload is offloaded to the NPU, a sophisticated software stack (including compilers and runtimes) translates the high-level model into a series of optimized operations for the NPU’s hardware, often leveraging tools detailed in the Intel OpenVINO toolkit documentation. Data is then streamed through a multi-level memory hierarchy, ensuring compute units are continuously supplied.

The NPU leverages highly parallelized processing elements, such as dense matrix multiplication arrays and vector processing units, to perform the vast number of multiply-accumulate (MAC) operations inherent in neural networks. A hardware-accelerated task scheduler orchestrates these operations, managing data flow and resource allocation to minimize latency and maximize throughput, particularly for low-batch inference. The architecture is heavily optimized for INT8 precision, which offers substantial performance and power benefits by reducing data movement and computational complexity.

Architecture Overview

The architecture of the Intel Panther Lake NPU balances specialized efficiency with a degree of programmability. Key elements include:

- Compute Clusters: At its core, the Intel Panther Lake NPU comprises multiple compute clusters, each housing a combination of specialized processing units.

- Dense MAC Arrays: Optimized for high-throughput matrix multiplication, these arrays are the workhorses for convolutional layers and transformer blocks, which dominate modern neural networks.

- Vector Processing Units (VPUs): Complementing the MAC arrays, VPUs handle element-wise operations, activations, and other vector-based computations, providing flexibility for diverse neural network layers.

- Fixed-Function Accelerators: Dedicated hardware blocks are included for common, highly repetitive operations, further enhancing efficiency for specific AI primitives.

- Programmable Micro-Engines: Small, embedded RISC-V cores are integrated within compute clusters. These provide a degree of programmability, allowing for custom kernel execution and adaptation to evolving AI models that might not perfectly fit the fixed-function pipeline.

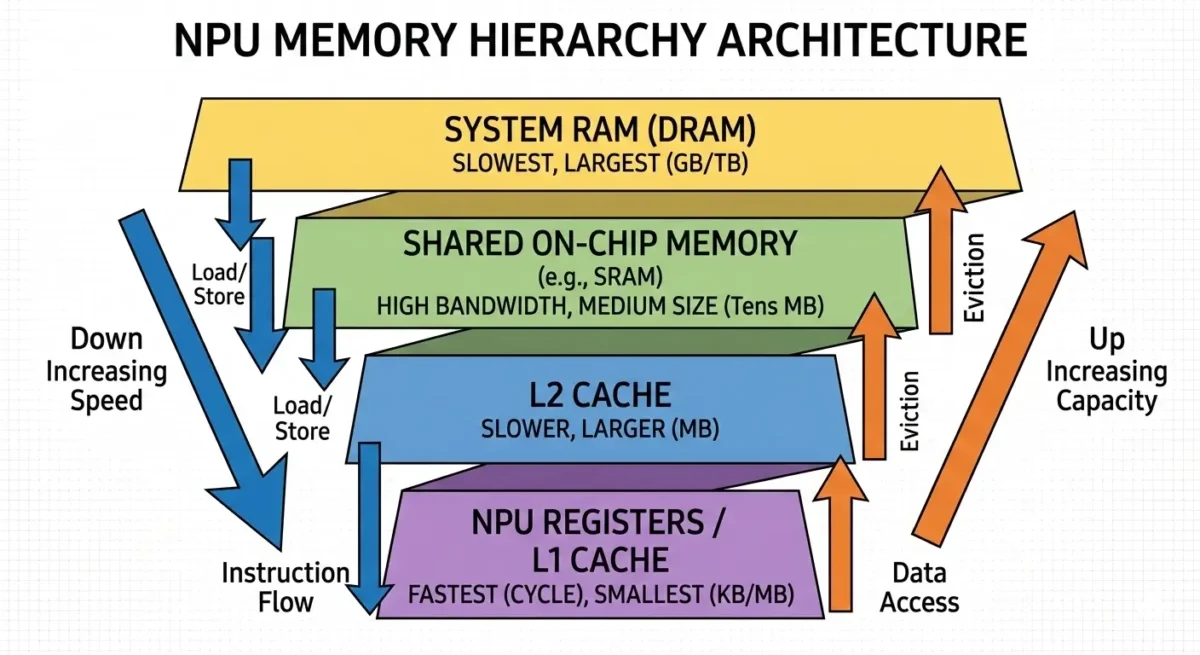

- Multi-Level Memory Hierarchy: To mitigate the “memory wall” bottleneck, the NPU features an elaborate on-chip memory system:

- Local Scratchpads: Dedicated, high-speed SRAM close to the compute units for immediate data access.

- Multi-Banked SRAM: Larger, shared on-chip SRAM pools provide higher capacity and bandwidth for intermediate results and model weights that fit within the NPU’s internal memory.

- Dedicated DMA Engines: High-bandwidth Direct Memory Access (DMA) engines manage efficient data transfers between the NPU’s internal memory and the slower, off-chip system DRAM (LPDDR5X/LPDDR6).

- High-Bandwidth Internal Interconnect: A robust internal fabric ensures rapid data movement between compute clusters, memory banks, and I/O interfaces, minimizing internal bottlenecks.

- Hardware-Accelerated Task Scheduler: This dedicated unit efficiently manages the execution of neural network graphs, dynamically allocating resources and orchestrating data flow to optimize for low-latency, batch=1 inference, which is critical for interactive AI experiences.

- Granular Power Management Unit (PMU) and DVFS: The NPU incorporates advanced power management capabilities, including Dynamic Voltage and Frequency Scaling (DVFS), to precisely control power consumption and thermal output, ensuring sustained performance within a tight power envelope.

Performance Characteristics

The Intel Panther Lake NPU is engineered for a specific performance profile tailored for client AI workloads:

- Peak Performance: The NPU is rated for 50 INT8 TOPS. This metric represents the theoretical maximum throughput for 8-bit integer operations, which is the primary data type for achieving peak efficiency.

- Sustained Performance: While the peak is 50 TOPS, real-world sustained performance is estimated to be in the range of 35-45 TOPS. This difference accounts for system-level factors such as memory bandwidth limitations, thermal constraints, and software overheads.

- Power Efficiency: A key design objective was extreme power efficiency, with the NPU achieving >15 INT8 TOPS/Watt. Its peak sustained power envelope is estimated at a low 3-3.5W, enabling extended AI workload execution within typical mobile device power and thermal budgets.

- Latency: The NPU prioritizes sub-millisecond inference latency for batch=1 workloads, especially when models can entirely reside within its extensive on-chip memory. This is crucial for real-time interactive AI applications.

- Data Type Support: While INT8 is the performance sweet spot, the Intel Panther Lake NPU also supports higher precision data types like FP16 and BF16. However, these come with a significant performance penalty, typically reducing throughput to 1/2 to 1/4 of the INT8 TOPS.

- Memory Bandwidth Dependency: For AI models exceeding the NPU’s on-chip memory capacity, performance becomes heavily reliant on the shared system LPDDR5X/LPDDR6 DRAM bandwidth (80-120 GB/s). This external memory access can become the ultimate bottleneck for larger, memory-bound models.

Real-World Applications

The capabilities of the Intel Panther Lake NPU directly translate into enhanced user experiences and new application possibilities for “AI PCs”:

- Always-On AI Features: Its low power consumption enables continuous operation of AI features like background blur, intelligent noise suppression during video calls, and gaze tracking, while maintaining reasonable battery life within typical usage scenarios, much like the advancements seen with NPUs in smartphones.

- Local LLM Inference: The 50 INT8 TOPS capability allows for efficient execution of smaller to medium-sized Large Language Models (LLMs) directly on the device, facilitating private, low-latency AI assistants and content generation without cloud dependency.

- Real-time Video and Audio Processing: Applications involving real-time video effects, object recognition, and advanced audio processing (e.g., voice isolation, transcription) benefit from the NPU’s dedicated acceleration, leading to smoother and more responsive interactions.

- Enhanced Productivity: Features like intelligent search, content summarization, and predictive text can leverage the NPU for faster, more context-aware operations, improving overall productivity.

- Gaming and Creative Workflows: While not a primary gaming accelerator, the NPU can offload AI tasks within games (e.g., NPC behavior, procedural generation) or creative applications (e.g., AI-powered image editing, style transfer), freeing up the GPU for graphics rendering.

Limitations

Despite its advanced design, the Intel Panther Lake NPU, like any specialized hardware, presents certain engineering tradeoffs and limitations:

- INT8 Quantization Accuracy: While 50 TOPS is an INT8 metric, the inherent quantization process can lead to subtle, model-dependent accuracy degradation. Developers must carefully evaluate if INT8 precision is acceptable for sensitive applications, or accept significant performance penalties when using FP16/BF16.

- On-Chip Memory Capacity: The extensive on-chip SRAM hierarchy, while crucial for performance, consumes substantial die area and adds to manufacturing cost. More critically, it imposes a hard capacity limit. Models exceeding this capacity will inevitably incur the latency and power penalties of accessing slower, off-chip system DRAM.

- Software Stack Maturity and Developer Ecosystem: The NPU’s full potential is critically dependent on a mature software ecosystem, including SDKs, compilers, and runtime frameworks such as OpenVINO and ONNX Runtime. Developer deployment increasingly relies on standardized operating-system AI interfaces provided through the Windows AI and Machine Learning platform, which manages workload distribution across CPU, GPU, and NPU accelerators. Gaps or complexities in this ecosystem can significantly hinder developer adoption and limit the NPU’s real-world impact, despite comprehensive resources like the Intel OpenVINO toolkit documentation.

- Architectural Rigidity vs. Model Evolution: While programmable micro-engines offer some flexibility, the NPU’s core architecture is optimized for current neural network paradigms. Rapid advancements in AI (e.g., novel sparse architectures, new data types beyond INT8, graph neural networks) could expose limitations, potentially requiring significant architectural overhauls in future generations.

- Persistent System Memory Bandwidth Ceiling: For increasingly larger AI models (e.g., next-generation LLMs with billions of parameters) that cannot fully reside on-chip, the shared system LPDDR5X/LPDDR6 DRAM bandwidth will remain a fundamental bottleneck, capping effective sustained performance regardless of internal NPU compute power.

- Competitive Obsolescence: The NPU market is intensely competitive, with rapid advancements from rivals. While 50 TOPS is a strong current offering, the pace of innovation means that competitive obsolescence in terms of raw TOPS or feature sets is a constant challenge.

System-Level Impact

The Intel Panther Lake NPU is a pivotal component for Intel’s strategy in the “AI PC” era. It matters for several key reasons:

- Defining the “AI PC” Experience: By delivering 50 INT8 TOPS with strong power efficiency, the NPU establishes a new baseline for what on-device AI acceleration can achieve, enabling a richer, more responsive, and always-on AI experience for end-users.

- Power Efficiency for Mobile Computing: Its low power envelope fundamentally transforms the feasibility of running complex AI workloads on thin-and-light laptops, extending battery life and enabling silent, cooler operation for AI-intensive tasks.

- Enabling New Application Classes: The NPU facilitates a shift from cloud-centric AI inference to edge computing, unlocking new possibilities for privacy-preserving, low-latency AI applications that operate entirely on the device.

- Competitive Positioning: The Intel Panther Lake NPU directly addresses the escalating NPU performance race, ensuring Intel’s client platforms remain highly competitive against offerings from other major silicon vendors.

- Foundation for Future Innovation: This architecture provides a robust foundation for future generations of AI hardware, allowing Intel to iterate and adapt to the evolving demands of artificial intelligence workloads.

Key Takeaways

- The Intel Panther Lake NPU is a dedicated hardware accelerator delivering 50 INT8 TOPS for on-device AI inference.

- It is designed for extreme power efficiency (>15 INT8 TOPS/Watt) with a low 3-3.5W peak sustained power envelope, crucial for client platforms.

- Its architecture features specialized compute units (MAC arrays, VPUs, micro-engines) and an elaborate on-chip memory hierarchy to mitigate the memory wall.

- The NPU enables always-on, real-time AI features, local LLM inference, and enhanced multimedia processing with reduced impact on battery life within typical usage scenarios.

- Key limitations include potential INT8 quantization accuracy tradeoffs, finite on-chip memory capacity, and critical dependency on a mature software development ecosystem.

- The Intel Panther Lake NPU is strategically vital for defining the “AI PC” experience, ensuring competitive leadership, and enabling a new generation of power-efficient, responsive AI applications on client devices.