
INT8 vs FP16 vs INT4 inference represents a fundamental engineering trade-off among computational efficiency, memory footprint, power consumption, and model accuracy on modern edge AI hardware. FP16 offers a pragmatic balance for initial adoption with moderate efficiency, while INT8 provides a strategic sweet spot of pervasive, optimized efficiency with good accuracy. INT4 pushes toward ultra-low power and maximum silicon density, but often at the cost of significant accuracy challenges and specialized hardware/software complexity. Each step down in bit-width demands greater architectural specialization and a higher “quantization engineering tax” in exchange for wider deployment of AI capabilities, and it directly affects a device’s battery life and sustained operational duration.

The proliferation of Artificial Intelligence (AI) into edge devices—from smart sensors to autonomous vehicles—has exposed critical limitations in traditional compute architectures. Power, memory bandwidth, and thermal dissipation are no longer secondary concerns but primary design constraints. To overcome these silicon-level barriers, the semiconductor industry has progressively moved towards lower numerical precision for AI inference, specifically from FP16 (16-bit floating-point) to INT8 (8-bit integer) and now increasingly to INT4 (4-bit integer).

This evolution is not merely a data type reduction; it signifies a strategic shift in compute architecture, demanding specialized hardware and sophisticated software techniques to maintain model efficacy while drastically improving efficiency. This directly influences a device’s thermal stability under continuous AI workloads. Understanding INT8 vs FP16 vs INT4 inference is essential for engineers designing efficient AI workloads on edge devices, where power efficiency and memory constraints dominate system architecture.

What Is INT8 vs FP16 vs INT4 Inference

In the context of AI inference, numerical precision refers to the number of bits used to represent weights, activations, and intermediate computations within a neural network.

- FP16 (Half-Precision Floating-Point): Uses 16 bits to represent numbers: 1 sign bit, 5 exponent bits, and 10 mantissa bits in the IEEE 754 half-precision format. It offers a far wider dynamic range than the integer formats below, making it suitable for general-purpose computation, and is a direct reduction from the standard FP32 (single-precision) used in training.

- INT8 (8-bit Integer): Uses 8 bits to represent numbers as integers. Since neural network values are typically floating-point, INT8 inference requires a process called quantization, where floating-point values are mapped to a fixed range of 256 integer values (e.g., -128 to 127 or 0 to 255) using a scale factor and a zero-point.

- INT4 (4-bit Integer): Uses 4 bits to represent numbers as integers, mapping floating-point values to a fixed range of 16 integer values. This extreme reduction in bit-width places significant constraints on the representable range and precision, making accurate quantization substantially more challenging. The choice of precision directly impacts the physical memory footprint of the model on the chip, influencing overall device size and cost.
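
The ranges and footprint effects described in the list above can be checked with a few lines of arithmetic. The following is a minimal sketch, not a measurement: the helper names `int_range` and `weight_bytes` and the 10-million-parameter model size are assumptions chosen for illustration, and the footprint ignores scale and zero-point metadata.

```python
def int_range(bits, signed=True):
    """Return (min, max) representable by an integer of the given bit-width."""
    if signed:
        return -(1 << (bits - 1)), (1 << (bits - 1)) - 1
    return 0, (1 << bits) - 1


def weight_bytes(num_params, bits):
    """Bytes needed to store num_params weights (quantization metadata ignored)."""
    return (num_params * bits + 7) // 8


for name, bits in [("INT8", 8), ("INT4", 4)]:
    lo, hi = int_range(bits)
    print(f"{name}: {hi - lo + 1} levels, signed range [{lo}, {hi}]")

params = 10_000_000  # hypothetical model size, for illustration only
for name, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{name}: {weight_bytes(params, bits) / 1e6:.1f} MB of weights")
```

With the assumed model size, the weights occupy 20 MB in FP16, 10 MB in INT8, and 5 MB in INT4.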

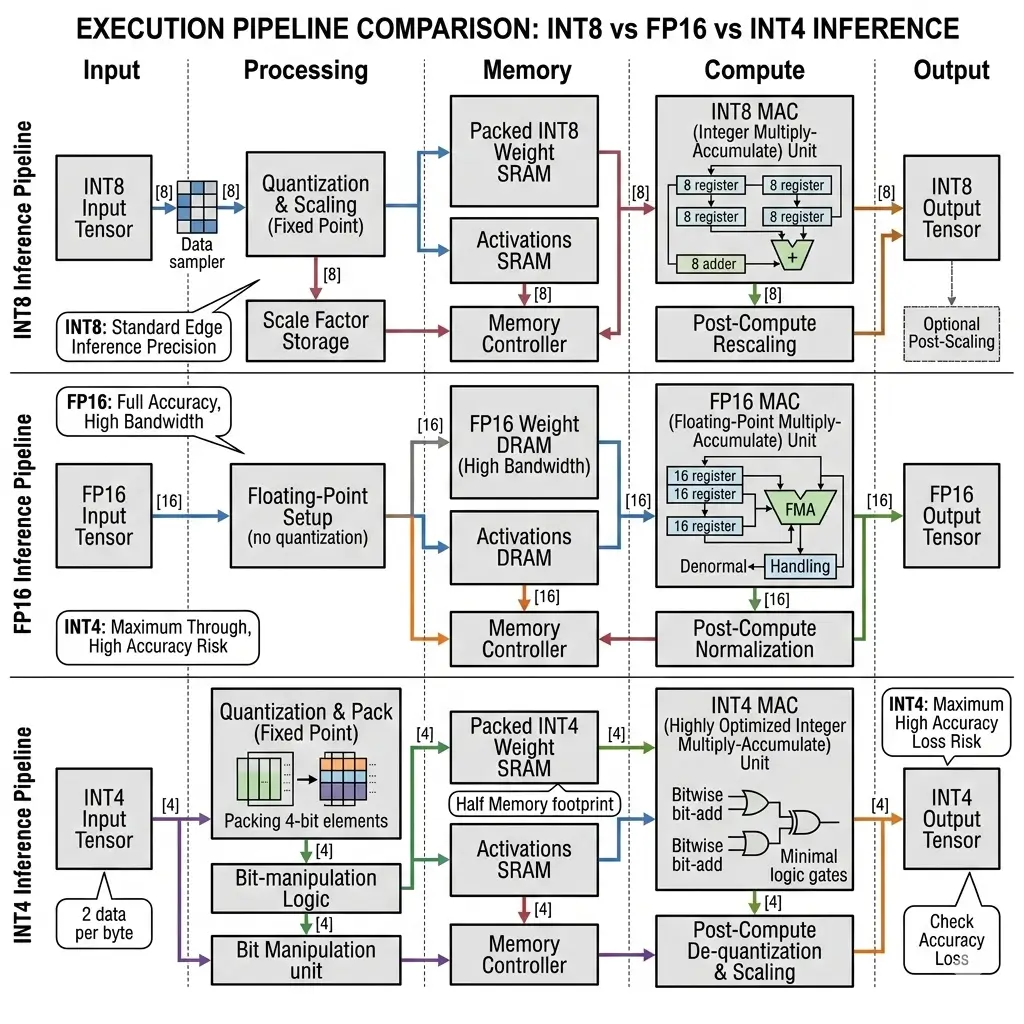

How INT8 vs FP16 vs INT4 Inference Works

To understand INT8 vs FP16 vs INT4 inference behavior, it is important to examine how quantization and integer arithmetic transform floating-point neural network computations into efficient hardware operations. The core principle behind lower precision inference is to reduce the amount of data that needs to be stored, moved, and processed.

For FP16, the conversion from FP32 is largely straightforward: each value is cast to the nearest representable FP16 number, with no scale factor or zero-point to calibrate. Hardware typically has native FP16 arithmetic logic units (ALUs) that can perform operations directly.

For INT8 and INT4, the process is more involved:

- Quantization: During or after model training, floating-point weights and activations are converted to their integer equivalents. This involves determining a scale factor and a zero-point for each tensor (or group of tensors) to map the floating-point range to the integer range: `q = round(fp / scale + zero_point)`

- Integer Arithmetic: The neural network operations (primarily multiply-accumulate, MAC) are then performed using integer arithmetic. This is significantly simpler and faster for hardware to execute than floating-point operations.

- Dequantization (Implicit/Explicit): Intermediate results are accumulated in a wider integer format (e.g., 32-bit integers) to prevent overflow and maintain precision, then rescaled and re-quantized for the next layer. The final output might be dequantized back to floating-point if required by the application.
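
The three steps above can be sketched in plain Python. This is an illustrative sketch under stated assumptions rather than any particular runtime's implementation: the function names (`choose_qparams`, `quantize`, `int_dot`) are hypothetical, the scale and zero-point come from a simple min/max range, and the example weights and activations are made up.

```python
def choose_qparams(values, bits=8):
    """Pick scale and zero-point so the value range maps onto the signed integer range."""
    qmin, qmax = -(1 << (bits - 1)), (1 << (bits - 1)) - 1
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)  # range must contain 0
    scale = (hi - lo) / (qmax - qmin) or 1.0  # avoid a zero scale for all-zero input
    zero_point = max(qmin, min(qmax, round(qmin - lo / scale)))
    return scale, zero_point, qmin, qmax


def quantize(values, scale, zero_point, qmin, qmax):
    """q = round(fp / scale + zero_point), clamped to the integer range."""
    return [max(qmin, min(qmax, round(v / scale + zero_point))) for v in values]


def int_dot(qa, za, qb, zb):
    """Integer-only multiply-accumulate; hardware would hold acc in a 32-bit register."""
    acc = 0
    for a, b in zip(qa, qb):
        acc += (a - za) * (b - zb)
    return acc


weights = [0.5, -1.0, 0.25, 0.75]  # made-up example values
acts = [1.0, 2.0, -0.5, 0.0]

sw, zw, qmin, qmax = choose_qparams(weights)
sa, za, _, _ = choose_qparams(acts)
qw = quantize(weights, sw, zw, qmin, qmax)
qa = quantize(acts, sa, za, qmin, qmax)

# Dequantize the integer accumulator with the product of the two scales.
approx = int_dot(qw, zw, qa, za) * sw * sa
exact = sum(w * a for w, a in zip(weights, acts))
print(f"exact={exact:.4f} int8_approx={approx:.4f}")
```

Only the final rescale uses floating-point here; the inner loop works purely on integers, which is the part dedicated MAC arrays accelerate.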

The challenge intensifies with INT4 due to the extremely limited range (16 values). Maintaining accuracy often necessitates more granular quantization (e.g., per-channel or group-wise scales), mixed-precision strategies (using INT8 or FP16 for sensitive layers), and quantization-aware training (QAT), where the quantization process is simulated during model training to make the model more robust to precision loss. The shift to integer arithmetic significantly reduces the power consumption per operation, which extends battery life for devices running inference offline without external power.
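
To see why finer-grained scales help at 4 bits, the sketch below compares a single per-tensor scale against group-wise scales on a small weight vector. It assumes symmetric quantization (no zero-point) for brevity; the function names and the weight values are hypothetical and chosen so that one group has much larger magnitudes than the other.

```python
def quantize_symmetric(values, bits=4):
    """Quantize with one shared scale, then return the dequantized values."""
    qmax = (1 << (bits - 1)) - 1  # 7 for INT4
    scale = max(abs(v) for v in values) / qmax or 1.0
    q = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return [x * scale for x in q]


def quantize_groupwise(values, group_size, bits=4):
    """Apply quantize_symmetric independently to each consecutive group."""
    out = []
    for i in range(0, len(values), group_size):
        out.extend(quantize_symmetric(values[i:i + group_size], bits))
    return out


def mse(a, b):
    """Mean squared error between two equal-length sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Made-up weights: four small values followed by four large ones.
weights = [0.01, -0.02, 0.03, -0.015, 0.9, -1.2, 0.7, 1.0]

per_tensor_err = mse(weights, quantize_symmetric(weights))
group_err = mse(weights, quantize_groupwise(weights, group_size=4))
print(f"per-tensor MSE: {per_tensor_err:.6f}")
print(f"group-wise MSE: {group_err:.6f}")
```

With a single scale, the large weights dictate the step size and the small weights collapse to zero; separate scales per group preserve them, at the cost of storing and applying more scale factors.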

Edge AI Architecture for INT8 vs FP16 vs INT4 Inference

The shift to lower precision has profound implications for silicon architecture, driving the design of specialized processing units.

- FP16: Leverages existing floating-point units found in GPUs and Digital Signal Processors (DSPs). While these units can perform FP16 operations, they are generally more complex and occupy more silicon area per MAC operation compared to integer units. Their design prioritizes dynamic range and precision.

- INT8: Drives the design of highly dense, specialized Neural Processing Units (NPUs) or Tensor Cores. These architectures feature arrays of dedicated 8-bit integer MAC units, which are significantly smaller and more power-efficient than FP16 units. A critical architectural consideration for INT8 is the need for wider accumulators (typically 32-bit) to prevent overflow during the accumulation of many 8-bit products. This accumulator stage can become a hidden power sink and area constraint, subtly limiting ultimate density. Modern NPUs, such as those found in Snapdragon X Elite, Intel AI Boost, and AMD XDNA, are designed with these INT8 optimizations in mind.

- INT4: Represents the ultimate pursuit of silicon density and ultra-low power. It implies even more specialized, potentially non-standard hardware architectures designed to pack an unprecedented number of 4-bit MACs. The extreme bit-width reduction opens the door to DRAM-less designs, where entire models can reside on-chip in SRAM, fundamentally altering device architecture by eliminating the external memory bottleneck. This requires sophisticated hardware to manage the complex scale factors and zero-points associated with fine-grained INT4 quantization. This architectural specialization directly impacts the sustained performance of AI tasks, allowing for longer periods of high-throughput computation without thermal throttling.
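
The accumulator-width requirement mentioned for INT8 follows from worst-case arithmetic. The sketch below is a back-of-the-envelope bound, not a description of any specific NPU: `max_safe_terms` is a hypothetical helper that assumes signed operands and a signed accumulator, and counts how many worst-case products can be summed before overflow becomes possible.

```python
def max_safe_terms(operand_bits, acc_bits):
    """Worst-case number of signed products a signed accumulator can sum safely."""
    worst_product = (1 << (operand_bits - 1)) ** 2  # e.g. (-128) * (-128) for INT8
    acc_max = (1 << (acc_bits - 1)) - 1
    return acc_max // worst_product


for operand_bits in (8, 4):
    for acc_bits in (16, 32):
        n = max_safe_terms(operand_bits, acc_bits)
        print(f"INT{operand_bits} products in a {acc_bits}-bit accumulator: {n}")
```

Under these assumptions a 16-bit accumulator is only guaranteed safe for a single worst-case INT8 product, while a 32-bit accumulator covers 131,071 of them, which is why dot products over long vectors push designs toward the wider register.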

Performance Characteristics

In practical deployments, the performance differences between INT8 vs FP16 vs INT4 inference become visible in latency, memory bandwidth consumption, and power efficiency across edge AI hardware. The following table summarizes the key engineering characteristics and performance implications across different precisions, based on observed data and architectural principles.

| Characteristic | FP16 Inference | INT8 Inference | INT4 Inference |
|---|---|---|---|
| Latency | Higher | Significantly Lower than FP16 | Potentially Lowest (if native HW & efficient data paths) |
| TOPS (Arithmetic Density) | Baseline (e.g., 1x) | ~2x FP16 TOPS (for same MAC array) | ~2x INT8 TOPS / ~4x FP16 TOPS (for same MAC array) |
| Power Consumption (per Op) | Higher | Lower than FP16 | Lowest (minimal data movement, simpler logic) |
| Memory Footprint | Baseline (e.g., 1x model size) | ~1/2 of FP16 model size | ~1/4 of FP16 model size |
| Memory Bandwidth Demand | Higher | Reduced vs FP16 | Lowest (enables higher cache utilization) |
| Accuracy Retention | Generally excellent (minimal deviation from FP32) | Generally good (<1% Top-1 drop typical) | Highest risk of degradation; requires advanced techniques |
| Hardware Support | Widespread (GPUs, DSPs) | Widespread (NPUs, Tensor Cores, Hexagon, Ethos-U) | Emerging (custom ASICs, newer Hexagon/Ethos-U roadmaps) |
| Quantization Effort | Minimal (direct FP32->FP16 conversion) | Moderate (PTQ/QAT, calibration) | High (QAT, group-wise, mixed-precision, calibration) |
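
The ~1/4 memory footprint listed for INT4 assumes two 4-bit values share each byte, since memory is byte-addressed. The sketch below shows one common way to do that packing; the low-nibble-first layout and the helper names `pack_int4` and `unpack_int4` are assumptions for illustration, and actual hardware or file formats may order nibbles differently.

```python
def pack_int4(values):
    """Pack signed 4-bit integers (-8..7) two per byte, low nibble first."""
    vals = list(values)
    if any(v < -8 or v > 7 for v in vals):
        raise ValueError("values must fit in a signed 4-bit integer")
    if len(vals) % 2:
        vals.append(0)  # pad to an even count
    out = bytearray()
    for lo, hi in zip(vals[0::2], vals[1::2]):
        out.append((lo & 0xF) | ((hi & 0xF) << 4))
    return bytes(out)


def unpack_int4(data, count):
    """Recover `count` signed 4-bit integers from packed bytes."""
    vals = []
    for byte in data:
        for nibble in (byte & 0xF, byte >> 4):
            vals.append(nibble - 16 if nibble >= 8 else nibble)  # sign-extend
    return vals[:count]


q = [-8, 7, 0, -1, 3]  # made-up quantized weights
packed = pack_int4(q)
print(len(q), "values stored in", len(packed), "bytes")
print(unpack_int4(packed, len(q)) == q)
```

The unpacking step is extra work on hardware without native 4-bit data paths, which is one reason the table qualifies INT4 latency with "if native HW & efficient data paths".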

Real-World Applications

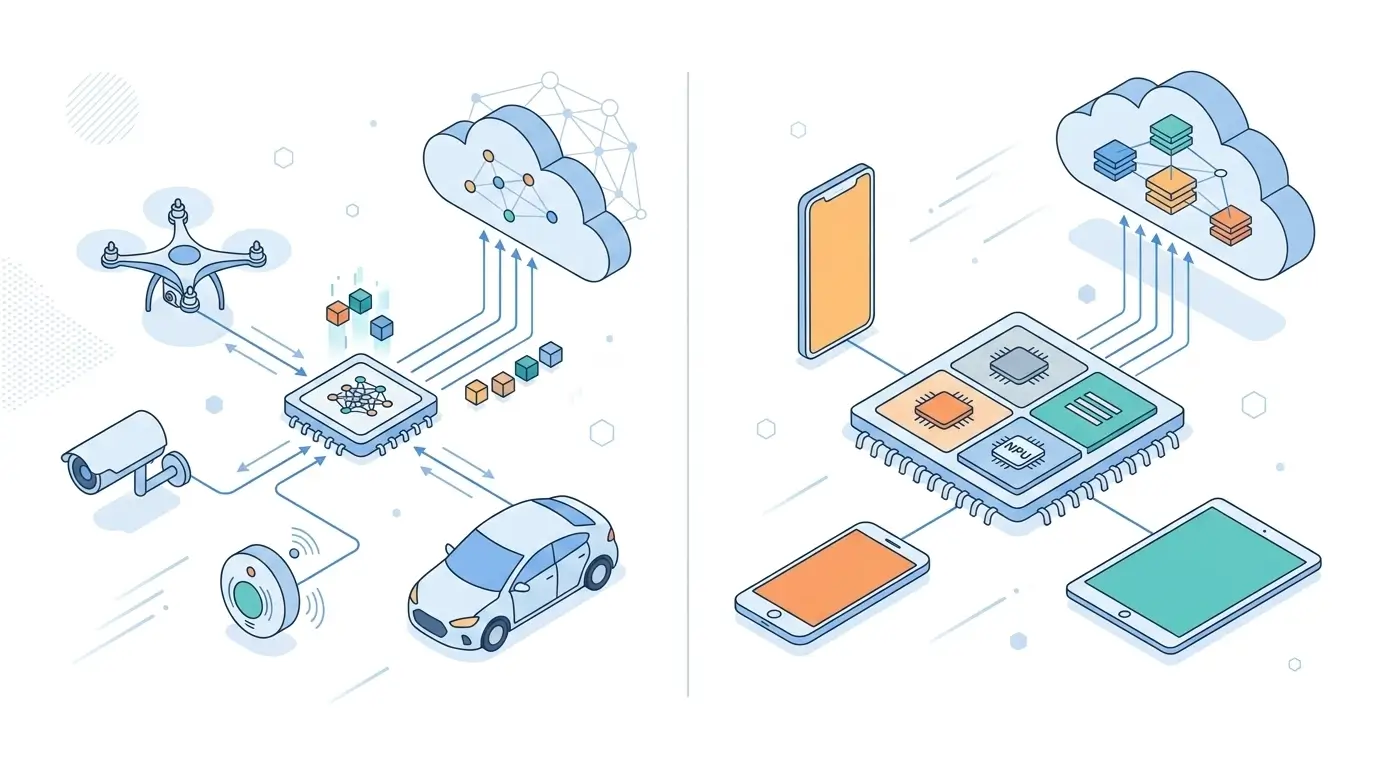

- FP16: Primarily used in higher-power edge devices (e.g., NVIDIA Jetson platforms, higher-end automotive ECUs) where accuracy is paramount, and the power budget allows for more robust thermal management. It serves as a pragmatic bridge for migrating existing FP32 models with minimal development overhead.

- INT8: The workhorse for mainstream edge AI. It enables efficient inference for applications like real-time object detection, speech recognition, and natural language processing on power-constrained devices such as smartphones, smart cameras, and drones. Its balance of efficiency and accuracy makes it critical for enabling the broad deployment of AI capabilities within typical power and thermal envelopes.

- INT4: Targets ultra-low power, ultra-compact, and potentially DRAM-less inference scenarios. This includes micro-sensors, wearables, and other deeply embedded systems where every milliwatt and square millimeter of silicon is critical. It is particularly valuable for models that can fit entirely into on-chip SRAM, enabling truly private on-device AI voice assistants with minimal power draw.

Limitations

Each precision level comes with inherent engineering tradeoffs and limitations that impact design choices and scalability.

| Precision | Primary Strategic Driver | Hidden Tradeoff / Engineering Challenge | Sustained Performance Impact |
|---|---|---|---|
| FP16 | Rapid market entry, incremental scalability | Thermal inefficiency, larger silicon area per MAC | Significant degradation due to thermal throttling |
| INT8 | Pervasive efficiency, optimized scalability | “Quantization Engineering Tax,” 32-bit accumulator bottleneck | Robust and predictable; bottleneck shifts to external memory |
| INT4 | Ultra-low power, enabling highly pervasive AI, DRAM-less | “Accuracy Cliff,” conversion overhead paradox | High sustained performance (for on-chip models), but inconsistent with mixed-precision overheads |

- FP16: The “Performance Illusion” of Thermal Limits. While offering good peak performance, FP16 units generate more heat per operation due to their complexity. On passively cooled edge devices, this leads to thermal throttling, causing significant degradation in sustained performance and limiting its scalability for continuous, high-throughput operation. Its future is limited by fundamental power and memory bandwidth demands.

- INT8: The “Quantization Engineering Tax” and Accumulator Bottleneck. The efficiency gains of INT8 come with a non-trivial cost in developing robust quantization workflows (PTQ/QAT) and ensuring high-quality calibration. Furthermore, while MACs are 8-bit, the necessity for 32-bit (or wider) accumulators to prevent overflow means a significant portion of the compute pipeline still operates on wider data. This subtly limits the effective power and area savings, as the accumulator stage can become a hidden power sink and area constraint, impacting ultimate density. INT8 also faces an “accuracy ceiling” for increasingly complex or novel model architectures.

- INT4: The “Accuracy Cliff” and Conversion Overhead Paradox. The most significant hidden tradeoff for INT4 is the high risk of catastrophic accuracy degradation, often termed the “accuracy cliff,” which is difficult to predict and mitigate without specialized techniques (e.g., group-wise quantization, QAT, architectural modifications). To achieve acceptable accuracy, INT4 often necessitates mixed-precision strategies, introducing frequent de/re-quantization and precision conversion overheads between layers. If not perfectly optimized in hardware and software, these conversions can introduce significant latency, power consumption, and complexity, negating some of the bit-width reduction benefits and limiting effective throughput scalability. Its widespread adoption hinges on breakthroughs in quantization techniques and the standardization of robust mixed-precision hardware/software stacks.

Why It Matters

The progression from FP16 to INT8 to INT4 is a critical enabler for expanding the reach and efficiency of AI deployment. It directly addresses the fundamental silicon limitations of power, memory, and thermal management that dictate the feasibility of deploying AI at scale on the edge.

- Enabling Ubiquitous Intelligence: Lower precision allows AI to move into smaller form factors, with longer battery life, and into environments where active cooling or external DRAM are prohibitive. This enables new applications in smart sensors, wearables, and truly embedded systems, pushing intelligence to the very edge of the network.

- Economic Scalability: By reducing memory footprint and bandwidth, lower precision allows for more models to be deployed on a single chip, or for larger models to be deployed on existing hardware. The increased arithmetic density translates to more TOPS per watt and per square millimeter of silicon, driving down the cost of AI inference.

- Strategic Commitment: Each step down in precision represents an escalating commitment to specialized hardware and a calculated gamble on software complexity. It forces semiconductor architects to innovate at the silicon level, designing dedicated accelerators that are fundamentally more efficient than general-purpose processors for AI workloads. This strategic pivot is essential for maintaining competitive advantage and driving advancements in AI hardware and software.

Key Takeaways

In summary, choosing between INT8 vs FP16 vs INT4 inference depends on the balance between efficiency and accuracy required by the target hardware platform. While FP16 offers simplicity and compatibility, INT8 provides the best balance for most edge deployments, and INT4 represents the frontier of ultra-efficient AI execution for next-generation embedded systems.

- Precision vs. Efficiency: Lower precision (INT8, INT4) drastically improves compute efficiency, reduces memory footprint, and lowers power consumption compared to FP16.

- Architectural Specialization: Achieving these gains requires dedicated hardware (NPUs, Tensor Cores) with specialized integer MAC units, moving away from general-purpose floating-point units.

- Accuracy-Efficiency Tradeoff: Each reduction in bit-width introduces a higher risk of accuracy degradation, demanding increasingly sophisticated quantization techniques (PTQ, QAT, mixed-precision) and careful calibration.

- Hidden Costs: The benefits of lower precision come with a “quantization engineering tax” (development effort), potential accumulator bottlenecks, and conversion overheads in mixed-precision schemes.

- Strategic Imperative: The drive towards INT4 is a significant architectural shift aimed at ultra-low power, DRAM-less designs, and highly pervasive AI, fundamentally altering device architectures and enabling AI in new form factors.