Summary

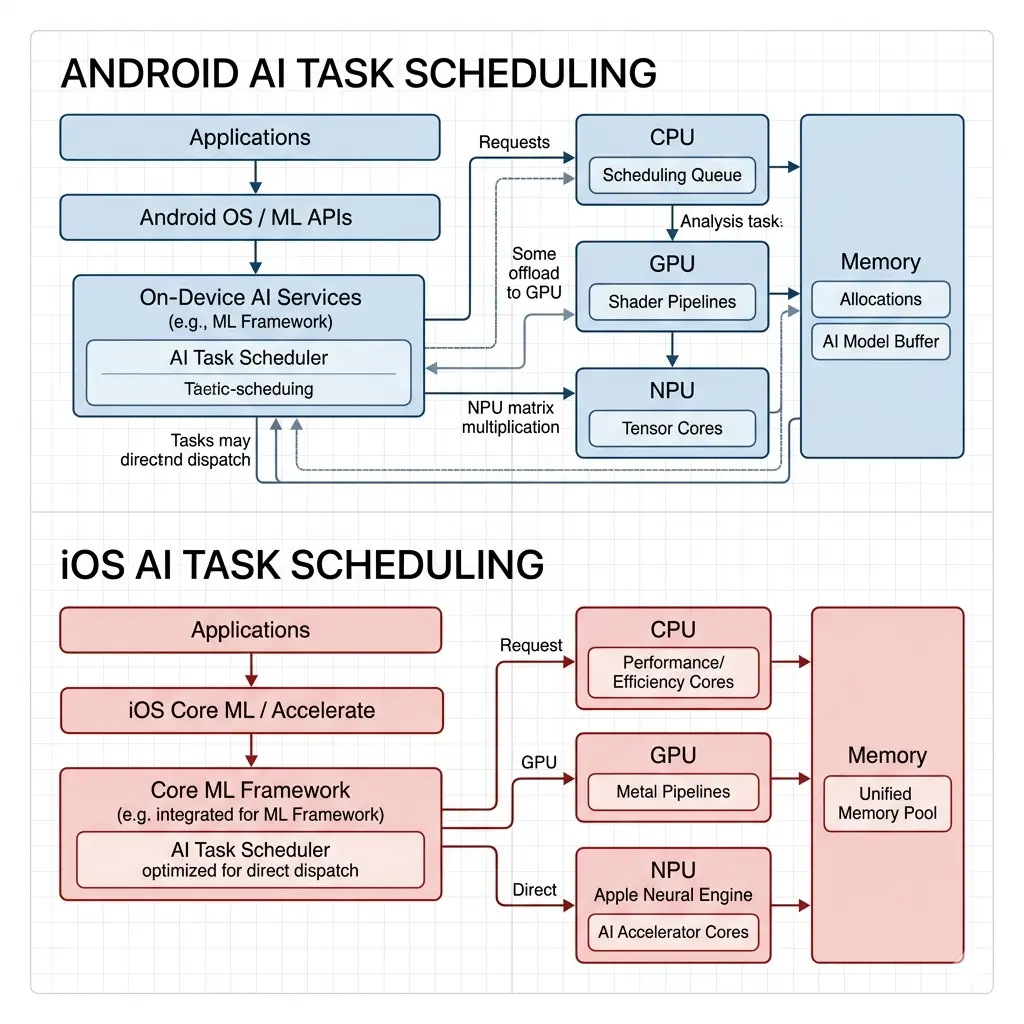

Android and iOS schedule on-device AI tasks in fundamentally different ways. Android relies on NNAPI and vendor-specific HALs across fragmented hardware, while iOS uses Core ML with unified memory and the Apple Neural Engine (ANE) for faster, more power-efficient AI processing.

When you tap the camera on your phone and instantly see features like portrait blur, night mode, or real-time object detection, you’re witnessing on-device artificial intelligence in action. These AI tasks complete in milliseconds without sending data to the cloud. Behind this seamless experience, however, a complex system decides where and how each AI task runs: on the CPU, the GPU, or a dedicated neural processor, as explained in our guide on CPU vs GPU vs NPU for AI workloads.

As modern smartphones rely more on AI for everyday experiences like voice assistants, photography, and live translation, efficient scheduling of these workloads becomes critical. Poor scheduling can lead to battery drain, overheating, or lag, while optimized systems deliver smooth, real-time performance.

This is where the difference between platforms becomes clear. This article breaks down how Android and iOS schedule AI tasks, comparing Android’s flexible NNAPI-based ecosystem with iOS’s tightly integrated Core ML approach. These design choices, including each platform’s memory architecture, directly affect power efficiency, latency, and overall user experience, and they help explain why AI performance and efficiency vary across devices.

What is AI task scheduling on mobile devices?

AI task scheduling on mobile devices is the system-level process of deciding which available processing unit, such as a Neural Processing Unit (NPU), Graphics Processing Unit (GPU), or Central Processing Unit (CPU), should execute a given segment of a neural network model. This decision is crucial for maximizing performance, minimizing power consumption, and keeping inference latency low enough for real-time AI applications. Android and iOS each provide a framework for this, NNAPI and Core ML respectively, that abstracts the underlying hardware so developers can deploy models without deep knowledge of specific silicon architectures.
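
To make the decision concrete, here is a minimal Python sketch of one possible scheduling policy: among the units that can run a model segment, pick the one with the lowest estimated energy that still meets a latency deadline, and fall back to the fastest capable unit if none does. The `Unit` type, the policy itself, and all numbers are hypothetical illustrations, not how NNAPI or Core ML actually decide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    name: str
    latency_ms: float  # hypothetical estimated time to run the segment
    energy_mj: float   # hypothetical estimated energy per run, in millijoules
    supports: bool     # whether the unit can execute every op in the segment


def choose_unit(units: list[Unit], deadline_ms: float) -> str:
    """Return the lowest-energy unit that meets the deadline.

    If no unit meets the deadline, return the fastest unit that can run the segment.
    """
    capable = [u for u in units if u.supports]
    if not capable:
        raise ValueError("no unit can execute this segment")
    on_time = [u for u in capable if u.latency_ms <= deadline_ms]
    if on_time:
        return min(on_time, key=lambda u: u.energy_mj).name
    return min(capable, key=lambda u: u.latency_ms).name


# Illustrative numbers only, not measurements from any real device.
units = [
    Unit("CPU", latency_ms=45.0, energy_mj=90.0, supports=True),
    Unit("GPU", latency_ms=12.0, energy_mj=40.0, supports=True),
    Unit("NPU", latency_ms=8.0, energy_mj=10.0, supports=True),
]
print(choose_unit(units, deadline_ms=33.0))  # NPU
```

If the NPU cannot run the segment (`supports=False`), the same call returns `GPU`, the cheapest remaining unit that still fits a 33 ms (roughly 30 fps) frame budget.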

How Android Schedules AI Tasks Using NNAPI

This section starts with Android’s NNAPI-based approach. Android centers on the Neural Networks API (NNAPI), a common interface through which machine learning frameworks such as TensorFlow Lite and PyTorch Mobile access hardware acceleration. Beneath NNAPI sits the Hardware Abstraction Layer (HAL): each System-on-Chip (SoC) vendor (e.g., Qualcomm, Google, Samsung, MediaTek) implements its own HAL, exposing the capabilities of its proprietary neural processing units, GPUs, and optimized CPU pathways.

When an AI task is submitted via NNAPI, the Android runtime performs a heuristic-based selection. It queries the available HAL drivers for the operations they support and their reported performance characteristics, then assigns each operation to the device with the best reported figure, judged by execution time or by power usage depending on the app’s execution preference. Because these figures come from static profiles, the result is a best-effort decision, often made without real-time awareness of current system load or thermal state. If no accelerator supports an operation, that operation falls back to CPU execution.
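
The per-operation selection described above can be sketched in Python. The preference constants mirror the names and values of NNAPI’s execution preferences (`ANEURALNETWORKS_PREFER_LOW_POWER`, `ANEURALNETWORKS_PREFER_FAST_SINGLE_ANSWER`, `ANEURALNETWORKS_PREFER_SUSTAINED_SPEED`); the device names, capability numbers, and supported-operation sets are hypothetical, and the real runtime’s partitioning logic is more involved. Note that nothing in the static table reflects the device’s current temperature or load.

```python
# Names mirror NNAPI's execution preferences; values match the NDK constants.
PREFER_LOW_POWER = 0
PREFER_FAST_SINGLE_ANSWER = 1
PREFER_SUSTAINED_SPEED = 2

# Hypothetical static capability table reported by each driver:
# (relative execution time, relative power usage), lower is better.
# "ops": None means the device accepts every operation (the CPU fallback).
DEVICES = {
    "vendor-npu": {"perf": (0.2, 0.1), "ops": {"CONV_2D", "DEPTHWISE_CONV_2D", "ADD"}},
    "vendor-gpu": {"perf": (0.4, 0.5), "ops": {"CONV_2D", "ADD", "SOFTMAX"}},
    "cpu": {"perf": (1.0, 1.0), "ops": None},
}


def pick_device(op: str, preference: int) -> str:
    """Choose the device with the best static score that supports `op`."""
    metric = 1 if preference == PREFER_LOW_POWER else 0  # power vs. time
    best_name, best_score = "cpu", float("inf")
    for name, info in DEVICES.items():
        if info["ops"] is not None and op not in info["ops"]:
            continue  # driver does not claim support for this operation
        if info["perf"][metric] < best_score:
            best_name, best_score = name, info["perf"][metric]
    return best_name


def partition(ops: list[str], preference: int) -> list[tuple[str, list[str]]]:
    """Group consecutive operations assigned to the same device."""
    plan: list[tuple[str, list[str]]] = []
    for op in ops:
        device = pick_device(op, preference)
        if plan and plan[-1][0] == device:
            plan[-1][1].append(op)
        else:
            plan.append((device, [op]))
    return plan


print(partition(["CONV_2D", "ADD", "SOFTMAX", "CUSTOM_NMS"], PREFER_FAST_SINGLE_ANSWER))
# [('vendor-npu', ['CONV_2D', 'ADD']), ('vendor-gpu', ['SOFTMAX']), ('cpu', ['CUSTOM_NMS'])]
```

This toy graph ends up in three partitions, so two intermediate tensors must cross device boundaries; those crossings are where the data-movement cost comes from.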

When a model is split across accelerators, intermediate results must move between their separate memory domains (NPU scratchpad, GPU caches, shared LPDDR DRAM). These explicit copies can add significant latency and power overhead, which directly affects the device’s thermal stability during sustained AI workloads.
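
A rough way to reason about this overhead is to count partition boundaries and divide the bytes handed across them by the effective copy bandwidth. The Python sketch below does that arithmetic; the tensor shape and the 10 GB/s effective bandwidth are hypothetical placeholders, not figures for any particular SoC.

```python
def boundary_copy_cost(plan: list[str], tensor_bytes: int, bandwidth_gb_s: float) -> tuple[int, float]:
    """Estimate copies at device boundaries.

    plan: device name of each partition, in execution order.
    tensor_bytes: size of the intermediate tensor passed across each boundary.
    bandwidth_gb_s: assumed effective copy bandwidth in GB/s.
    Returns (number of boundaries, total copy time in milliseconds).
    """
    boundaries = sum(1 for a, b in zip(plan, plan[1:]) if a != b)
    seconds = boundaries * tensor_bytes / (bandwidth_gb_s * 1e9)
    return boundaries, seconds * 1e3


# Hypothetical intermediate tensor: 1 x 256 x 256 x 32 values in float16 (2 bytes each).
tensor_bytes = 1 * 256 * 256 * 32 * 2  # 4,194,304 bytes
n, ms = boundary_copy_cost(["npu", "gpu", "cpu"], tensor_bytes, bandwidth_gb_s=10.0)
print(n, round(ms, 3))  # 2 0.839
```

At 30 frames per second, that is roughly 25 ms of copying every second for this one model, time and DRAM traffic that a single-device or shared-memory path could largely avoid.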

How iOS Schedules AI Tasks with Core ML

To understand how Android and iOS schedule AI tasks, it is important to examine how iOS optimizes execution using Core ML and the Apple Neural Engine. iOS employs Core ML as its primary framework for integrating machine learning models into applications. Core ML operates within a tightly integrated hardware-software stack. When an AI task is submitted, Core ML’s intelligent dispatch mechanism dynamically analyzes the model’s structure, the current system load, and the capabilities of Apple’s co-designed silicon components: the Apple Neural Engine (ANE), the integrated GPU, and the CPU.
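
Apps can constrain which units Core ML may use through `MLModelConfiguration.computeUnits` (`.all`, `.cpuOnly`, `.cpuAndGPU`, `.cpuAndNeuralEngine`). Apple does not publish its placement algorithm, so the Python sketch below is only a hypothetical model of the ANE-first, GPU-second, CPU-last order described in this section, restricted by those four options. The supported-operation sets are made up for illustration.

```python
from enum import Enum


class ComputeUnits(Enum):
    """Mirrors Core ML's MLComputeUnits options; each value is a preference order."""
    CPU_ONLY = ("CPU",)
    CPU_AND_GPU = ("GPU", "CPU")
    CPU_AND_NE = ("ANE", "CPU")
    ALL = ("ANE", "GPU", "CPU")


# Hypothetical operation support; the CPU is assumed to run everything.
SUPPORTED = {
    "ANE": {"conv", "relu", "add", "pool"},
    "GPU": {"conv", "relu", "add", "pool", "custom_attention"},
}


def place(op: str, units: ComputeUnits = ComputeUnits.ALL) -> str:
    """Return the first allowed unit, in preference order, that supports `op`."""
    for device in units.value:
        if device == "CPU" or op in SUPPORTED[device]:
            return device
    raise ValueError("unreachable: every option ends with the CPU")


print([place(op) for op in ("conv", "custom_attention", "topk")])
# ['ANE', 'GPU', 'CPU']
print(place("conv", ComputeUnits.CPU_AND_GPU))  # GPU
```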

The ANE is a custom, fixed-function accelerator designed for common neural network operations, and it offers exceptional power efficiency. For operations not well suited to the ANE, or when the ANE is saturated, Core ML seamlessly offloads work to the GPU (via Metal Performance Shaders, MPS) or, as a last resort, to the CPU. A key architectural advantage is Apple’s unified memory architecture, in which the CPU, GPU, and ANE share a single, coherent memory pool. This greatly reduces explicit data copies between processing units, lowering the latency and power cost of data movement and, in turn, the battery drain of AI-intensive applications.
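
The benefit of a shared pool can be illustrated, by analogy only, with Python’s built-in `memoryview`: in a copy-based design each processing stage gets its own duplicate of the buffer, while in a shared design every stage works on the same underlying bytes. This says nothing about how Core ML manages memory internally; it only shows why avoiding per-stage copies removes that traffic entirely.

```python
def run_stages(frame: bytearray, stages: int, shared: bool) -> int:
    """Pass a buffer through `stages` processing units and return bytes copied."""
    copied = 0
    buf = frame
    for _ in range(stages):
        if not shared:
            buf = bytearray(buf)  # each unit receives its own private copy
            copied += len(buf)
        view = memoryview(buf)  # zero-copy window onto the current buffer
        view[0] = (view[0] + 1) % 256  # stand-in for real work on the tensor
        view.release()
    return copied


frame = bytearray(1_000_000)  # hypothetical 1 MB input
print(run_stages(frame, stages=3, shared=False))  # 3000000
print(run_stages(frame, stages=3, shared=True))   # 0
```

In the shared case all three stages modify `frame` in place, which is what a single coherent pool allows without any copies.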

NNAPI vs Core ML: Key Differences in AI Scheduling

This comparison highlights how Android and iOS schedule AI tasks differently, especially in handling performance, memory, and power efficiency.

| Feature | Android (NNAPI) | iOS (Core ML) |
|---|---|---|
| Architectural Philosophy | “Lowest Common Denominator” (Fragmented Ecosystem) | “Vertically Integrated Performance Ceiling” (Co-designed) |
| Hardware Abstraction | NNAPI with Vendor-Specific HALs | Core ML leveraging Apple Silicon (ANE, GPU, CPU) |
| Compute Units | Diverse Vendor NPUs, Adreno/Mali/etc. GPUs, CPUs | Apple Neural Engine (ANE), Apple GPU, Apple CPU |
| Memory Architecture | Fragmented Memory Domains (Explicit Data Movement) | Unified Memory Architecture (Shared, Coherent Pool) |
| Task Dispatch | Heuristic-Based Selection (Best-Effort Delegation) | Intelligent Dispatch (System-Level Optimization) |
| Silicon Constraints | Diverse NPU/GPU microarchitectures, varying interconnects, driver quality | Specialized ANE, tightly coupled CPU/GPU/ANE, high-bandwidth unified memory |
| Runtime Execution | Dynamic graph partitioning, potential for significant data copies, HAL overhead | Seamless offloading, minimal data copies, deep compiler/runtime optimization |
| System Bottlenecks | Interconnect bandwidth (LPDDR saturation), driver quality, thermal throttling from inefficient delegation | Architectural rigidity for novel AI paradigms, potential ANE saturation for very large models |

Android: The “Heuristic Lottery” and Interconnect Bottleneck

Android’s AI task performance is highly variable across devices, a gap that can be called an “AI Performance Chasm.” Heuristic-based selection turns dispatch into a “Heuristic Lottery”: the runtime’s best-effort guess may not suit the current system state (e.g., thermal load or real-time interconnect congestion). A task can end up on an NPU that offers high peak performance on paper but is already thermally constrained, or on a GPU path that incurs substantial memory copies.
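
The “Heuristic Lottery” can be illustrated with a toy Python model: a static table says the NPU is fastest, but if the NPU is running at half clock because of heat, a thermally aware choice would pick the GPU instead. The latencies, clock fractions, and the assumption that latency scales inversely with available clock are all hypothetical simplifications.

```python
# Hypothetical peak latencies (ms) taken from static capability profiles.
STATIC_LATENCY_MS = {"NPU": 6.0, "GPU": 10.0, "CPU": 40.0}


def static_choice() -> str:
    """What a profile-only heuristic picks: the best peak number."""
    return min(STATIC_LATENCY_MS, key=STATIC_LATENCY_MS.__getitem__)


def effective_latency(unit: str, clock_fraction: dict[str, float]) -> float:
    """Assume latency grows inversely with the fraction of peak clock available."""
    return STATIC_LATENCY_MS[unit] / clock_fraction[unit]


def thermal_aware_choice(clock_fraction: dict[str, float]) -> str:
    """Pick the unit with the lowest latency given current (throttled) clocks."""
    return min(STATIC_LATENCY_MS, key=lambda u: effective_latency(u, clock_fraction))


throttled = {"NPU": 0.5, "GPU": 0.9, "CPU": 1.0}  # NPU already heat-limited
print(static_choice(), effective_latency("NPU", throttled))  # NPU 12.0
print(thermal_aware_choice(throttled))  # GPU
```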

The explicit data movement across fragmented memory domains can saturate the shared LPDDR bandwidth, consuming significant power and increasing latency. Because a phone can sustain only a few watts of power, inefficient delegation uses up the thermal budget quickly. Under sustained AI workloads, Android devices often hit a “Throttling Cliff,” where aggressive clock-speed reductions across the CPU, GPU, and NPU cause a sudden, dramatic drop in sustained performance. Developers can sometimes influence dispatch through specific API calls, as detailed in the Qualcomm AI Engine SDK documentation.
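
The “Throttling Cliff” can be reproduced with a deliberately simple Python model: heat produced above a sustainable power level drains a fixed thermal budget, and once the budget is gone a step governor drops clocks to a fixed floor. The 3 W sustainable level, 30 J budget, 50% floor, and the assumption that power scales linearly with clock are hypothetical values chosen only to fit the “few watts” envelope mentioned above.

```python
def simulate_step_governor(power_w: float, seconds: int, sustainable_w: float = 3.0,
                           budget_j: float = 30.0, floor: float = 0.5) -> list[float]:
    """Return performance (fraction of peak clock) for each second of a sustained workload."""
    perf: list[float] = []
    budget, clock = budget_j, 1.0
    for _ in range(seconds):
        if budget <= 0:
            clock = floor  # budget exhausted: the governor cuts clocks in one step
        perf.append(clock)
        # Heat above the sustainable level drains the budget (power assumed proportional to clock).
        budget -= max(power_w * clock - sustainable_w, 0.0)
    return perf


print(simulate_step_governor(power_w=6.0, seconds=12))
# [1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 0.5, 0.5]
```

A 2.5 W workload under the same model never drains the budget and stays at 1.0, which is why efficient delegation matters as much as raw accelerator speed.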

iOS: The “Black Box of Optimal” and Graceful Plateau

iOS delivers a consistently optimized AI experience with predictably low latency and high performance-per-watt. The intelligent dispatch mechanism is a “Black Box of Optimal” to developers, limiting fine-grained control, but it uses resources very efficiently. The unified memory architecture significantly reduces memory-copy overhead, lowering latency for real-time AI tasks. Because the ANE is a highly power-efficient, fixed-function accelerator, it generates less heat than the GPU or CPU would for the same work. When ANE capacity is reached, Core ML seamlessly offloads to the GPU, which also benefits from unified memory. The result is a “Graceful Plateau” under sustained AI workloads: performance degrades gradually and predictably rather than abruptly.
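
For contrast with the abrupt “Throttling Cliff,” the Python sketch below models a “Graceful Plateau”: once a thermal budget is spent, clocks step down a little at a time and settle where heat output equals the sustainable level. The wattages, the 4 J budget, the 10% step, and the assumption that power scales linearly with clock are all hypothetical.

```python
def simulate_gradual_governor(power_w: float, seconds: int, sustainable_w: float = 3.0,
                              budget_j: float = 4.0, step: float = 0.1) -> list[float]:
    """Return performance (fraction of peak clock) per second under a stepwise governor."""
    perf: list[float] = []
    budget, clock = budget_j, 1.0
    plateau = min(1.0, sustainable_w / power_w)  # clock at which heat output is sustainable
    for _ in range(seconds):
        if budget <= 0 and clock > plateau:
            clock = max(clock - step, plateau)  # step down gently, never below the plateau
        perf.append(round(clock, 3))
        # Heat above the sustainable level drains the budget.
        budget -= max(power_w * clock - sustainable_w, 0.0)
    return perf


print(simulate_gradual_governor(power_w=4.0, seconds=8))
# [1.0, 1.0, 1.0, 1.0, 0.9, 0.8, 0.75, 0.75]
```

Performance settles at 75% of peak (3 W / 4 W) instead of falling to a fixed floor, which is the shape the term “Graceful Plateau” describes.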

How Android and iOS Use AI Task Scheduling in Real-World Applications

Android

On Android, the variability means that AI-powered features like advanced camera processing (e.g., Night Sight, Portrait Mode), real-time voice transcription, or on-device language translation can perform exceptionally well on flagship devices with highly optimized NPUs and HALs, but may exhibit noticeable latency, reduced quality, or higher battery drain on mid-range or older devices. Developers must often optimize for the lowest common denominator or implement device-specific optimizations.
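
One common form of device-specific optimization is to benchmark the candidate backends on the user’s device, for example at first launch, and cache the fastest. The Python sketch below shows only that selection logic: the backend callables are hypothetical stand-ins for an inference call configured for the NPU, GPU, or CPU, and a backend that raises `RuntimeError` is treated as unavailable on this device.

```python
import statistics
import time
from typing import Callable


def pick_fastest_backend(backends: dict[str, Callable[[], None]], runs: int = 5) -> str:
    """Time each backend `runs` times and return the one with the lowest median latency."""
    medians: dict[str, float] = {}
    for name, run_once in backends.items():
        samples = []
        try:
            for _ in range(runs):
                start = time.perf_counter()
                run_once()
                samples.append(time.perf_counter() - start)
        except RuntimeError:
            continue  # backend unsupported on this device; skip it
        medians[name] = statistics.median(samples)
    if not medians:
        raise RuntimeError("no usable backend")
    return min(medians, key=medians.__getitem__)


def unsupported() -> None:
    raise RuntimeError("operation not supported by this driver")


# Stand-ins: sleep durations mimic per-inference latency on each backend.
choice = pick_fastest_backend({
    "npu": unsupported,
    "gpu": lambda: time.sleep(0.005),
    "cpu": lambda: time.sleep(0.02),
})
print(choice)  # gpu
```

Persisting the result, keyed by device model, avoids re-running the benchmark on every launch while still letting each device use its best available path rather than the lowest common denominator.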

iOS

On iOS, consistent performance means that features like Face ID’s depth mapping, real-time video effects, Siri’s on-device intelligence, and advanced photo editing (e.g., semantic segmentation for subject isolation) run smoothly, with low latency and high power efficiency, across modern devices. This predictability simplifies development and quality assurance, because developers can rely on a stable performance envelope.

Limitations of Android and iOS AI Task Scheduling

Android

The primary limitation for Android is hardware fragmentation. Because acceleration depends on vendor-specific HALs, its quality and optimization vary wildly across devices. This creates an “AI Performance Chasm,” where the theoretical capabilities of NNAPI are often bottlenecked by inefficient HAL implementations or suboptimal interconnects. The “Heuristic Lottery” can lead to suboptimal runtime decisions, worsening power consumption and thermal issues. Developers struggle to ensure consistent performance and user experience across the vast Android ecosystem, particularly for offline execution, where consistent performance without cloud assistance is critical.

iOS

While highly efficient, iOS’s approach has its own limitations. The architectural rigidity of the ANE, being a specialized, fixed-function accelerator, means it is optimized for current dominant neural network architectures. If future AI paradigms (e.g., highly sparse networks, novel activation functions, graph neural networks) fundamentally shift, Apple’s hardware might face a multi-year lag in silicon adaptation, relying on less efficient GPU/CPU fallbacks in the interim, potentially affecting latency behavior for new model types. Furthermore, the “Black Box of Optimal” means developers have limited transparency or fine-grained control over dispatch decisions, which can hinder niche, highly specialized optimizations. The closed ecosystem also means no third-party hardware integration.

Why It Matters

Understanding how Android and iOS schedule AI tasks is essential for developers aiming to optimize performance, efficiency, and scalability in AI-driven mobile applications, and the two platforms’ divergent strategies have profound implications for the entire mobile ecosystem. For developers, they determine how complex it is to optimize AI-powered applications and how to balance broad compatibility against peak performance. For OEMs and SoC vendors in the Android space, they make highly efficient NPUs and robust HALs a key differentiator.

For Apple, it reinforces its strategy of vertical integration, ensuring a consistent and optimized user experience that leverages deep hardware-software co-design. Ultimately, these architectural choices directly influence device battery life, responsiveness, the perceived “intelligence” of the device, and the pace of innovation in on-device AI capabilities.

Key Takeaways

These insights clearly show how Android and iOS schedule AI tasks differently across hardware architectures and real-world scenarios.

- Android uses NNAPI and a HAL model to abstract diverse vendor hardware, leading to fragmented performance and reliance on heuristic dispatch.

- iOS employs Core ML with a vertically integrated stack (ANE, GPU, CPU) and unified memory, enabling intelligent dispatch and consistent, high performance-per-watt.

- Silicon constraints on Android manifest as interconnect bottlenecks and variable NPU efficiency, while iOS leverages co-designed components to minimize data movement overheads.

- Runtime execution on Android can suffer from a “Heuristic Lottery” and “Throttling Cliff,” whereas iOS offers a “Graceful Plateau” due to deep optimization and unified memory.

- These architectural choices fundamentally shape the developer experience, OEM differentiation, and the end-user’s perception of on-device AI capabilities.

FAQs

What is AI task scheduling on mobile devices?

AI task scheduling determines whether CPU, GPU, or NPU runs a machine learning model for optimal performance and efficiency.

Why is iOS better at AI power efficiency?

Because of unified memory and Apple Neural Engine optimization, data movement and power usage are reduced.

Why is Android AI performance inconsistent?

Due to hardware fragmentation and vendor-specific HAL implementations.

How do Android and iOS schedule AI tasks differently?

Android uses NNAPI with vendor-specific hardware abstraction layers, while iOS uses Core ML with unified memory and the Apple Neural Engine for more efficient and consistent AI task scheduling.