
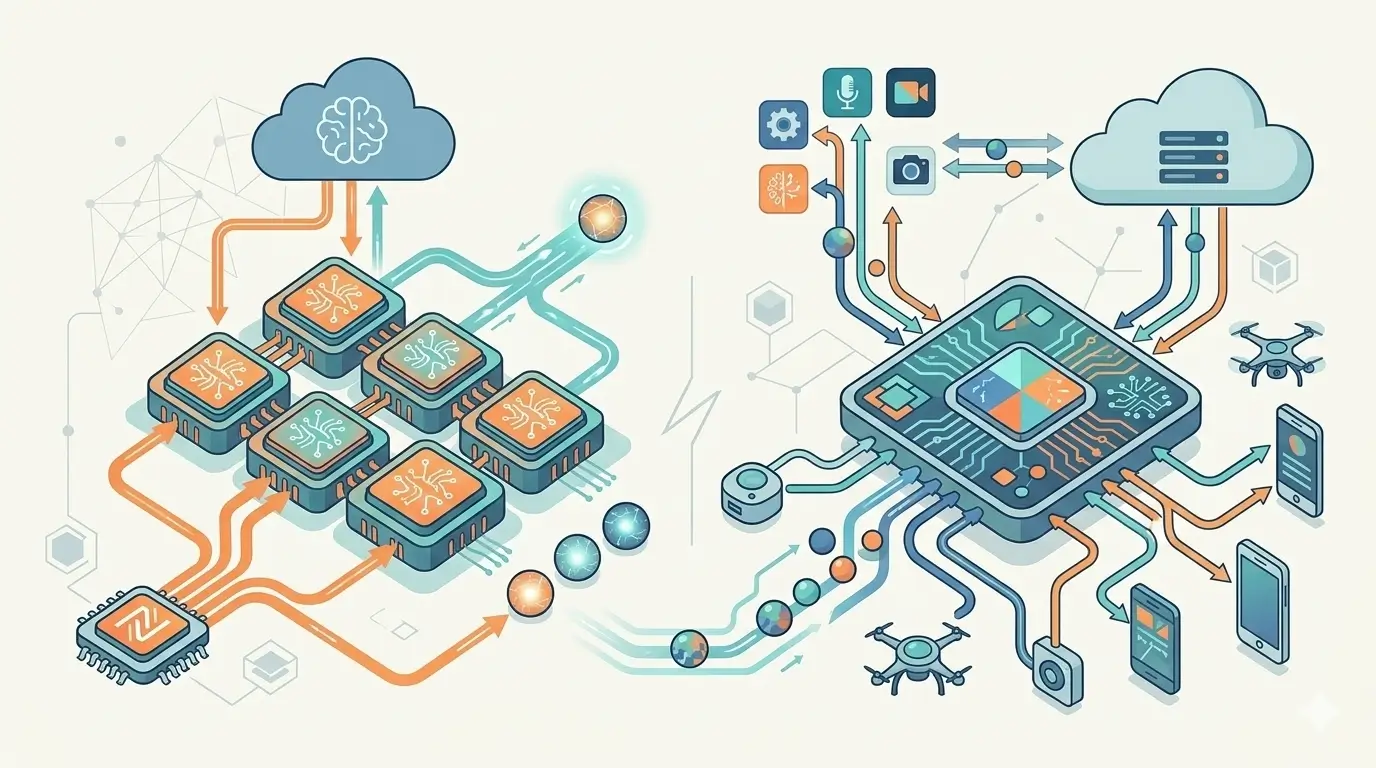

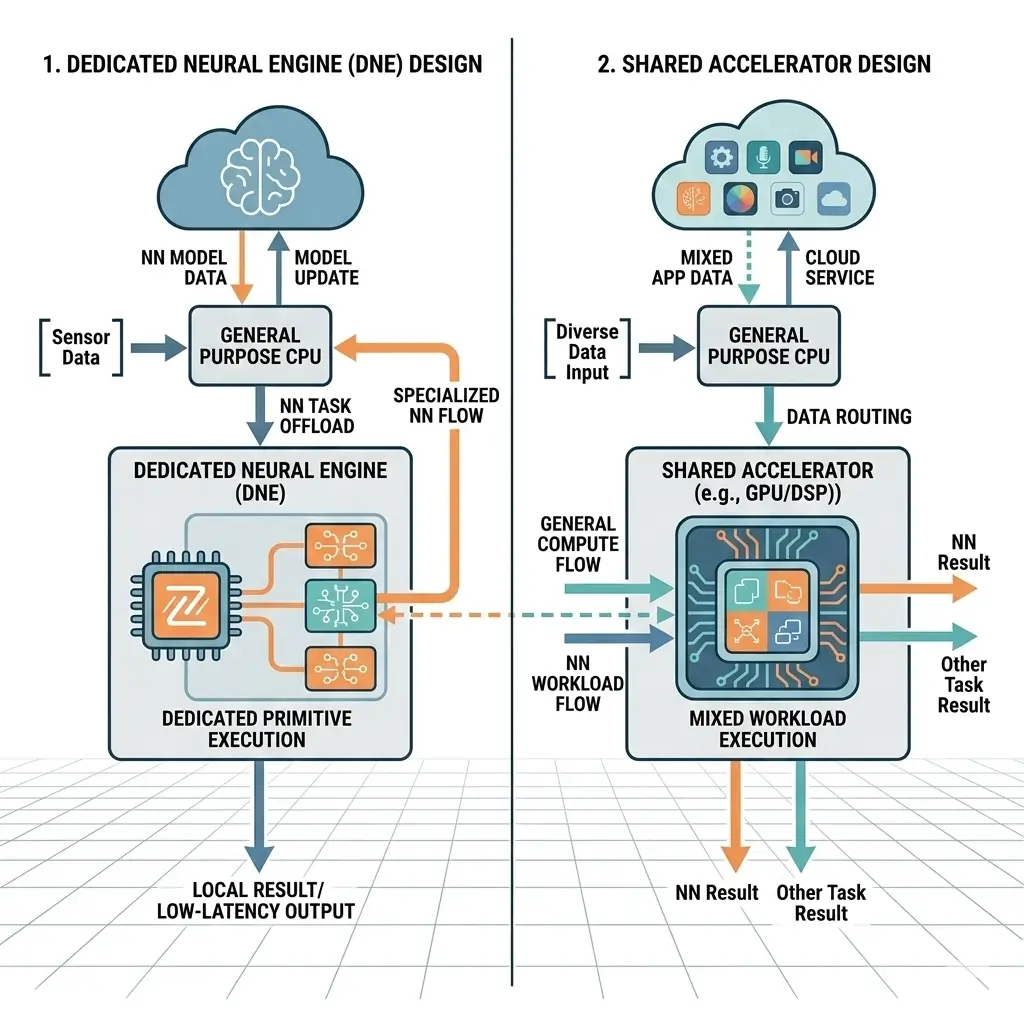

The choice between a Dedicated Neural Engine and a shared accelerator is a fundamental architectural trade-off in modern AI hardware. Dedicated Neural Engines (DNEs) are specialized silicon blocks engineered for power-efficient, low-latency execution of neural network (NN) primitives, offloading that work from general-purpose compute units. Shared accelerators, typically GPUs or DSPs, execute NN workloads alongside other tasks on general-purpose compute resources, prioritizing flexibility and adaptability. The core trade-off lies between the DNE’s superior efficiency scaling (operations per watt) for targeted tasks and the shared accelerator’s broader paradigm scalability (its ability to adapt to evolving AI models), with important implications for memory subsystem design and runtime bottlenecks under sustained workloads.

Choosing between a Dedicated Neural Engine and a shared accelerator is a pivotal engineering decision in modern AI systems. It dictates how silicon area is allocated, how power budgets are managed, and how well AI functionality can scale in future products. The decision directly shapes system bottlenecks, particularly in the memory subsystem, and determines the runtime characteristics that matter when deploying AI from the edge to the data center.

Dedicated Neural Engine vs Shared Accelerators: Architecture Comparison for AI Chips

A Dedicated Neural Engine (DNE) is a purpose-built hardware block designed from the ground up to accelerate specific neural network operations, such as matrix multiplications, convolutions, and activation functions. Its primary function is to execute these NN primitives with maximum power efficiency and minimal latency, offloading that work from the CPU and GPU. By providing a highly optimized, fixed-function or configurable compute fabric, this specialization enables ubiquitous, always-on AI features in power-constrained environments; the neural engines found in smartphone SoCs are a familiar example.

A Shared Accelerator, conversely, refers to general-purpose compute units—most commonly Graphics Processing Units (GPUs), but also Digital Signal Processors (DSPs) or advanced CPUs with extensive vector units—that are programmed to execute AI workloads. These accelerators are designed for flexibility, supporting a wide array of computational tasks beyond neural networks. They leverage existing hardware investments and mature software ecosystems, allowing for adaptability to diverse and evolving computational demands.

Dedicated Neural Engine vs Shared Accelerators: Core Architecture

DNEs and Shared Accelerators diverge most sharply in their compute fabric, instruction sets, and memory hierarchies. These differences directly influence a device’s sustained performance under heavy AI workloads: because a DNE draws less power for the same NN task, it is typically less prone to thermal throttling than a general-purpose shared accelerator.

| Feature | Dedicated Neural Engine (DNE) | Shared Accelerator (e.g., GPU) |
|---|---|---|
| Compute Fabric | Highly specialized MAC arrays, systolic arrays | General-purpose Streaming Multiprocessors (SMs), SIMD/vector units, often with added matrix units (e.g., tensor cores) |
| Instruction Set | Domain-specific, often microcode-driven or fixed-function | General-purpose ISA, programmed through models such as CUDA, OpenCL, SYCL |
| Data Types | Optimized for INT8, FP16, BFloat16 | Supports wider range (FP32, FP64, INT32, etc.) alongside NN types |
| On-chip Memory | Tightly coupled scratchpads, local buffers, input/output FIFOs | Multi-level caches (L1, L2, sometimes a last-level cache), shared memory |
| Control Flow | Simplified, data-parallel, less branch-heavy | Complex, supports general-purpose branching, synchronization |
| Silicon Area Justification | Specialized efficiency for defined NN tasks | General-purpose flexibility amortizes cost across diverse workloads |

DNEs are characterized by their streamlined data paths and minimal control logic, focusing on high throughput for specific NN operations. Their instruction sets are typically limited to NN primitives, allowing for extremely compact and power-efficient implementations. Shared accelerators, in contrast, feature more complex control units, wider instruction sets, and deeper pipelines to handle the variability of general-purpose code.
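As a rough illustration of the kind of primitive such a fabric is built around, the sketch below quantizes values to INT8 and multiplies two small matrices using a wide integer accumulator, the usual way a multiply-accumulate array avoids overflow. The symmetric quantization scheme, the function names, and the sample values are assumptions chosen for illustration, not details of any particular DNE.

```python
def quantize(values, scale):
    """Map floats to signed 8-bit integers using an assumed symmetric scale."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def int8_matmul(a, b):
    """Multiply INT8 matrices, summing products in a wide integer accumulator."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = 0  # stands in for a hardware INT32 accumulator
            for k in range(inner):
                acc += a[i][k] * b[k][j]
            out[i][j] = acc
    return out


# Hypothetical activations (1x2) and weights (2x1) with assumed scales.
act_scale, weight_scale = 0.5, 0.25
activations = [quantize([0.5, -1.0], act_scale)]
weights = [quantize([0.25], weight_scale), quantize([0.5], weight_scale)]

acc = int8_matmul(activations, weights)
# The product of the two scales converts the integer sum back to a float.
result = acc[0][0] * act_scale * weight_scale
print(acc, result)
```

The inner loop is the entire "instruction set" this sketch needs, which hints at why a unit limited to such primitives can be compact.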

Performance Comparison

The performance characteristics of DNEs and Shared Accelerators are best understood through the lens of efficiency versus flexibility.

Dedicated Neural Engine (DNE):

- Pros: Exhibits superior efficiency scaling (operations per watt) for its targeted neural network tasks. Execution is predictable and low-latency because the engine is isolated from contention with other system workloads. Specialized hardware delivers high peak throughput for dense matrix operations and convolutions, and that throughput can be sustained within the engine’s power envelope. Offloading NN work also frees the CPU and GPU to concentrate on their primary functions.

- Cons: Suffers from limited paradigm scalability. If fundamental NN primitives or model architectures (e.g., sparse models, dynamic graphs, novel attention mechanisms) evolve significantly, DNEs accumulate “architectural debt.” This can lead to reduced efficiency for new paradigms or necessitate costly re-architecting. The “compiler chasm”—the immense complexity for compiler developers to map increasingly complex and dynamic NN graphs onto a fixed, specialized architecture—can become a significant bottleneck for effective performance scalability. “Feature creep,” where DNEs attempt to support a broader range of NN types, can dilute their specialized efficiency.

Shared Accelerator (e.g., GPU):

- Pros: Offers high paradigm scalability, adapting readily to new NN models and diverse workloads due to its general-purpose programmability. It incurs lower upfront architectural debt, as its flexibility allows it to evolve with software. Can leverage large compute resources for very high absolute peak throughput in data centers, with sustained performance subject to thermal and power limits.

- Cons: Generally exhibits lower efficiency scaling (operations per watt) for specific NN tasks compared to a DNE, due to the overhead of general-purpose control logic, broader instruction sets, and more complex memory hierarchies. Performance can vary because of resource contention with other workloads sharing the unit (graphics, general-purpose compute, etc.). Power consumption for an equivalent NN task is typically higher.
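To make the operations-per-watt comparison concrete, here is a minimal sketch of the arithmetic. The throughput, power, and model-size figures are placeholders invented for illustration; they are not measurements of any real DNE or GPU, and the helper names are hypothetical.

```python
def ops_per_watt(ops_per_second, watts):
    """Efficiency metric used in the comparison above."""
    return ops_per_second / watts


def energy_per_inference_joules(ops_per_inference, ops_per_second, watts):
    """Energy = power x time, assuming the unit runs at full throughput."""
    latency_seconds = ops_per_inference / ops_per_second
    return watts * latency_seconds


# Placeholder figures for illustration only, not data from real hardware.
dne = {"ops_per_second": 4e12, "watts": 2.0}
gpu = {"ops_per_second": 40e12, "watts": 200.0}
model_ops = 8e9  # hypothetical operations per inference

for name, unit in (("DNE", dne), ("GPU", gpu)):
    efficiency = ops_per_watt(**unit)
    energy = energy_per_inference_joules(model_ops, **unit)
    print(f"{name}: {efficiency:.2e} ops/W, {energy * 1e3:.1f} mJ per inference")
```

With these placeholder inputs, the unit with ten times the throughput finishes each inference sooner yet spends more energy on it, which is the distinction between peak throughput and efficiency that the lists above draw.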

Power & Thermal Behavior

Dedicated Neural Engine (DNE): DNEs are fundamentally designed for stringent power budgets, making them ideal for mobile, IoT, and edge devices. Their specialization allows for extremely high operations per watt by eliminating unnecessary general-purpose logic and optimizing data paths for NN data types. The resulting lower power draw means less heat to dissipate, which simplifies thermal management and enables small, passively cooled form factors. DNEs can often operate in ultra-low-power states for continuous inference within their specified power envelope.

Shared Accelerator (e.g., GPU): Shared accelerators, particularly high-performance GPUs, consume significantly more power due to their general-purpose nature, larger silicon area, and often higher clock frequencies. The complex control logic, extensive memory subsystems, and wider instruction sets contribute to higher static and dynamic power dissipation. This higher power density necessitates robust active cooling solutions (fans, liquid cooling), which limit their deployment in passively cooled or ultra-small form factor devices. While power gating and dynamic voltage and frequency scaling (DVFS) are employed, the baseline power consumption for NN tasks remains higher than a specialized DNE.
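The effect of DVFS can be sketched with the standard CMOS switching-power approximation, P = a * C * V^2 * f. This is a textbook model rather than anything specific to the accelerators discussed here, and the activity factor, capacitance, voltage, and frequency values below are placeholders chosen only to show the scaling.

```python
def dynamic_power_watts(activity_factor, capacitance_farads, voltage_volts, frequency_hz):
    """Classic CMOS switching-power approximation: P = a * C * V^2 * f."""
    return activity_factor * capacitance_farads * voltage_volts ** 2 * frequency_hz


# Hypothetical operating points; the values are placeholders, not vendor data.
nominal = dynamic_power_watts(0.2, 1e-9, 1.0, 2.0e9)
scaled = dynamic_power_watts(0.2, 1e-9, 0.8, 1.6e9)  # voltage and clock both cut by 20%

ratio = scaled / nominal
print(f"dynamic power after scaling: {ratio:.3f} of nominal")
```

Because voltage enters squared, lowering voltage and frequency together by 20% cuts modeled dynamic power to roughly half. The sketch covers switching power only; static leakage is not modeled, and it is one reason the baseline of a large general-purpose chip stays comparatively high.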

Memory & Bandwidth Handling

The memory subsystem is a critical differentiator and often the primary bottleneck in both DNE and Shared Accelerator designs.

Dedicated Neural Engine (DNE):

- Internal Bandwidth: DNEs feature extremely high internal bandwidth within their compute fabric (e.g., systolic arrays) and tightly coupled, small, fast on-chip memories like scratchpads or local buffers. These memories are designed to feed the compute units with minimal latency, maximizing data locality for core NN loops and minimizing data movement.

- External Bandwidth: For larger, more sophisticated models under sustained load, the primary bottleneck often shifts to the external memory bandwidth between the DNE’s local buffers and system DRAM. Frequent transfers for large models or batch sizes can add significant latency and reduce effective throughput, even with highly optimized Direct Memory Access (DMA) engines. In other words, even when on-chip compute is efficient, overall system performance can be limited by the rate at which data moves on and off chip.

- Memory Access Patterns: DNEs are designed for predictable, sequential, often block-based memory access patterns, allowing for aggressive prefetching and specialized memory controllers that are highly optimized for NN data flows.
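The interplay between local buffers and external bandwidth described in this list can be sketched with a blocked matrix multiply that counts how many elements are copied from main memory into a scratchpad. This is a simplified software model under stated assumptions (square matrices, a tile size that divides the matrix size, one copy per tile with no caching between tiles); the function name is hypothetical.

```python
def tiled_matmul(a, b, tile):
    """Blocked matrix multiply that counts elements copied from 'DRAM' into local buffers."""
    n = len(a)
    assert n % tile == 0, "sketch assumes the tile size divides the matrix size"
    c = [[0] * n for _ in range(n)]
    dram_loads = 0
    for i0 in range(0, n, tile):
        for j0 in range(0, n, tile):
            for k0 in range(0, n, tile):
                # Copy one tile of A and one tile of B into the scratchpad.
                a_tile = [row[k0:k0 + tile] for row in a[i0:i0 + tile]]
                b_tile = [row[j0:j0 + tile] for row in b[k0:k0 + tile]]
                dram_loads += 2 * tile * tile
                # Every multiply-accumulate below reuses the local copies.
                for i in range(tile):
                    for j in range(tile):
                        acc = 0
                        for k in range(tile):
                            acc += a_tile[i][k] * b_tile[k][j]
                        c[i0 + i][j0 + j] += acc
    return c, dram_loads


n = 4
a = [[row * n + col for col in range(n)] for row in range(n)]
identity = [[1 if row == col else 0 for col in range(n)] for row in range(n)]

for tile in (1, 2, 4):
    product, loads = tiled_matmul(a, identity, tile)
    print(f"tile={tile}: {loads} elements loaded")
```

In this model the traffic falls as 2n³/tile, so a larger scratchpad directly reduces pressure on external DRAM. Once a model's working set no longer fits in the local buffers, that reuse is lost and the external link becomes the limit.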

Shared Accelerator (e.g., GPU):

- Internal Bandwidth: Shared accelerators use multi-level caches (typically L1 and L2, sometimes a larger last-level cache) and software-managed shared memory, offering high internal bandwidth. However, this hierarchy is general-purpose: it must handle diverse access patterns and keep data consistent across many compute units, which introduces overheads that a specialized DNE avoids.

- External Bandwidth: These accelerators rely on very high-bandwidth external memory (HBM, GDDR) to feed their numerous compute units. This bandwidth is shared across diverse workloads (graphics, compute, AI), leading to potential memory contention and less predictable performance during runtime execution. The memory consistency models are more complex than in DNEs due to the general-purpose nature and concurrent access from various parts of the system.

- Memory Access Patterns: Shared accelerators must accommodate more varied and less predictable memory access patterns, which can lead to cache misses and suboptimal memory utilization if not carefully managed by software. While capable of high throughput, the general-purpose memory subsystem can become a system bottleneck for specific NN workloads that could benefit from DNE-like specialized memory access.
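One common way to reason about when memory rather than compute limits either design is the roofline model, which the text above does not name but which matches its argument: attainable throughput is the smaller of peak compute and bandwidth multiplied by arithmetic intensity (operations per byte moved). The peak and bandwidth figures below are placeholders, not specifications of real hardware.

```python
def attainable_ops_per_second(peak_ops_per_second, bandwidth_bytes_per_second, ops_per_byte):
    """Roofline bound: limited by compute or by memory traffic, whichever is lower."""
    return min(peak_ops_per_second, bandwidth_bytes_per_second * ops_per_byte)


def ridge_point(peak_ops_per_second, bandwidth_bytes_per_second):
    """Arithmetic intensity above which a workload becomes compute-bound."""
    return peak_ops_per_second / bandwidth_bytes_per_second


# Hypothetical accelerator: 10 Tops/s peak compute, 50 GB/s external bandwidth.
peak, bandwidth = 10e12, 50e9

low_reuse = attainable_ops_per_second(peak, bandwidth, ops_per_byte=20)
high_reuse = attainable_ops_per_second(peak, bandwidth, ops_per_byte=400)
print(ridge_point(peak, bandwidth), low_reuse, high_reuse)
```

In this example a workload performing 20 operations per byte is held to a tenth of peak by memory bandwidth, while one performing 400 operations per byte reaches the compute ceiling. Layers with little data reuse therefore sit on the bandwidth-limited side regardless of how fast the compute fabric is.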

Software Ecosystem & Tooling

Dedicated Neural Engine (DNE): The software ecosystem for DNEs often involves a specialized toolchain, including compilers, runtime libraries, and SDKs. These tools are crucial for mapping complex neural network graphs onto the DNE’s fixed or configurable hardware architecture. The difficulty of that mapping, the “compiler chasm,” poses a significant engineering challenge for achieving scalable performance, especially as NN models become less static and more heterogeneous. Users typically interact with higher-level APIs (e.g., TensorFlow Lite, Core ML) that abstract the hardware specifics, which simplifies development but limits fine-grained control and debugging capabilities.

Shared Accelerator (e.g., GPU): Shared accelerators benefit from mature, extensive software ecosystems built over decades of general-purpose computing. Programming models like CUDA, OpenCL, and SYCL provide robust frameworks for low-level control and custom kernel development. High-level AI frameworks and runtimes such as TensorFlow, PyTorch, and ONNX Runtime offer deep integrations, allowing developers to leverage extensive libraries and optimization tools, including NVIDIA TensorRT. This maturity lowers development complexity for new models and provides comprehensive debugging and profiling tools. It also shortens time-to-market for new AI applications, as developers can rapidly prototype and deploy models without extensive low-level hardware optimization.
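One piece of the mapping problem a DNE toolchain faces can be sketched as graph partitioning: operators the engine supports run on it, and everything else falls back to a general-purpose unit. The operator names and the supported set below are hypothetical, and real compilers weigh many more factors (tensor shapes, memory limits, transfer cost); this is only a minimal sketch of the idea.

```python
def partition_graph(ops, dne_supported):
    """Group consecutive ops into segments tagged with the unit that would run them."""
    segments = []
    for op in ops:
        target = "dne" if op in dne_supported else "fallback"
        if segments and segments[-1][0] == target:
            segments[-1][1].append(op)  # extend the current segment
        else:
            segments.append((target, [op]))  # start a new segment
    return segments


# Hypothetical linear model graph and a hypothetical set of DNE-supported operators.
model_ops = ["conv2d", "relu", "conv2d", "top_k", "matmul", "softmax"]
supported = {"conv2d", "relu", "matmul", "softmax"}

segments = partition_graph(model_ops, supported)
handoffs = len(segments) - 1  # each boundary implies moving tensors between units
for target, ops in segments:
    print(target, ops)
print("hand-offs:", handoffs)
```

A single unsupported operator in the middle of the graph splits it into three segments with two hand-offs, each of which costs data movement. Newer model architectures tend to introduce exactly such operators, whereas on a shared accelerator the same operator can usually be written as a custom kernel.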

Real-World Deployment

Dedicated Neural Engine (DNE): DNEs are predominantly deployed in power-constrained edge devices, mobile phones, IoT sensors, wearables, and automotive ADAS systems. Their justification lies in enabling ubiquitous, low-latency AI functionality within strict power budgets. They allow AI to scale into new device categories previously unfeasible due to power or form factor limitations. However, the risk of obsolescence or reduced efficiency is high if core NN paradigms shift rapidly, requiring costly re-architecting.

Shared Accelerator (e.g., GPU): Shared accelerators find their primary deployment in data centers, high-end workstations, cloud AI inference and training platforms, and high-performance computing (HPC) environments. Their value proposition is flexibility, diverse workload support, and high absolute throughput. They amortize silicon development costs across multiple functions and adapt well to unforeseen AI innovations, leveraging existing infrastructure and mature software ecosystems. The trade-off is their higher power consumption and larger physical footprint, making them less suitable for ultra-low-power, small form factor edge deployments.

Which Design Is More Efficient

The question of which design is “more efficient” hinges on the definition of efficiency.

- Dedicated Neural Engine (DNE): Generally more power-efficient and latency-efficient for its specific, targeted neural network workloads. It achieves a significantly higher operations-per-watt ratio for these tasks due to its specialized design, minimal overhead, and optimized data paths. This enables efficiency scaling for a defined problem space.

- Shared Accelerator: More silicon-area efficient when it reuses existing general-purpose blocks, avoiding the need for additional dedicated silicon. It is also more developer-time efficient for new or evolving NN models due to its flexibility and mature tooling. Critically, it offers higher paradigm scalability, making it more efficient at adapting to unforeseen AI algorithms and future innovations that may not map well to fixed-function DNEs.

Ultimately, the choice between a Dedicated Neural Engine and a Shared Accelerator comes down to specialized efficiency for known problems versus adaptable, general-purpose efficiency for unforeseen ones. That trade-off directly influences how much battery a device spends on AI tasks and how long the device stays relevant as AI models continue to evolve.

Key Takeaways

The architectural decision between a Dedicated Neural Engine and a Shared Accelerator is fundamentally an exercise in weighing silicon constraints, balancing specialized performance against general-purpose flexibility. DNEs excel in power efficiency and predictable, low-latency execution for specific NN tasks, making them ideal for power-constrained edge deployments, but they face architectural debt and compiler bottlenecks as AI paradigms evolve.

Shared accelerators offer superior adaptability and leverage mature ecosystems, suitable for diverse, high-throughput workloads, but at the cost of higher power consumption and potential resource contention. The memory subsystem is a primary runtime bottleneck for both: DNEs are limited by external DRAM bandwidth for large models, while shared accelerators manage complex general-purpose memory hierarchies. The optimal choice depends on the specific application’s power budget, latency requirements, and tolerance for future algorithmic shifts.