
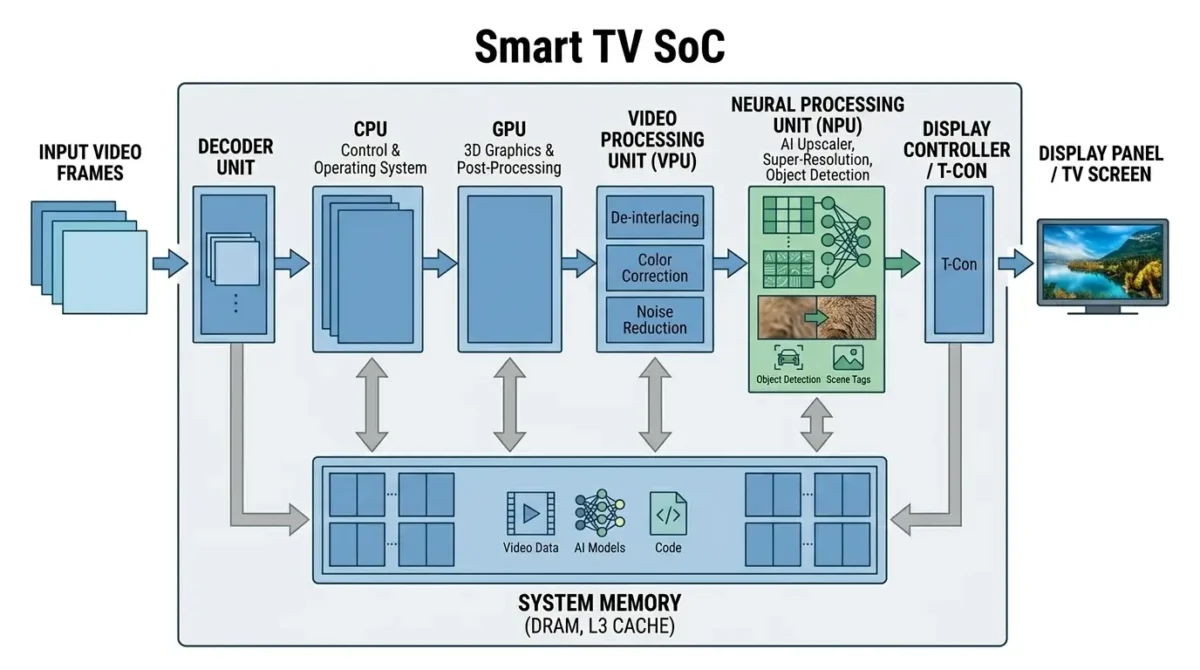

AI in Smart TVs uses dedicated Neural Processing Units (NPUs) inside the System-on-Chip (SoC) to run real-time AI inference for video and audio processing. These models enable features such as AI upscaling (super-resolution), scene detection, and automatic picture optimization. High-bandwidth system memory feeds large video frames and AI model data to the NPU, enabling efficient processing of 4K and 8K content within strict power and thermal constraints.

The evolution of display technology, particularly the proliferation of 4K and 8K panels, has created a significant engineering challenge: bridging the resolution gap between available content and native display capabilities. Traditional video processing pipelines, reliant on fixed algorithms, struggle to synthesize plausible detail from lower-resolution sources or adapt effectively to diverse content types. This problem is addressed by integrating AI into Smart TVs, transforming them from passive display devices into active, adaptive image and sound processors. The core engineering problem lies in executing complex AI models in real-time on high-resolution video streams within the stringent power, thermal, and cost constraints of consumer electronics, with the memory subsystem often emerging as the primary performance bottleneck.

What AI in Smart TVs Is

AI in Smart TVs refers to the application of machine learning models, primarily deep neural networks, to enhance the viewing and listening experience dynamically. This goes beyond static picture modes or simple interpolation. Key functionalities include:

- AI-driven Upscaling (Super-Resolution): Rather than simple interpolation, modern televisions rely on AI super-resolution technology trained on large datasets to reconstruct high-frequency details and textures. This creates a perceptually sharper and more detailed image that more closely matches the native panel resolution.

- Dynamic Scene Detection and Optimization: AI models process incoming video frames in real-time to identify content characteristics, such as “sports,” “movie,” “game,” “dark scene,” or “face close-up.” Based on this classification, the system dynamically adjusts hundreds of underlying video processing parameters—including noise reduction, sharpening, local dimming, tone mapping, and motion interpolation—to optimize the picture for that specific content. This capability is analogous to AI scene detection in phone cameras, which also adapts settings based on visual context.

- AI-powered Audio Enhancement: Similar principles apply to audio, where AI models classify speech, music, or ambient sounds to optimize equalization, spatialization, and dialogue clarity.
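
The step from scene classification to parameter adjustment can be illustrated with a minimal sketch. It assumes the classifier emits one raw score per scene label and that the TV blends per-scene presets by class probability. The labels, parameter names, and preset values are hypothetical and are not taken from any real TV firmware.

```python
import math

# Hypothetical presets: values are illustrative only, in the range 0..1.
PRESETS = {
    "sports": {"noise_reduction": 0.2, "sharpening": 0.7, "motion_interpolation": 0.9},
    "movie": {"noise_reduction": 0.4, "sharpening": 0.3, "motion_interpolation": 0.1},
    "game": {"noise_reduction": 0.1, "sharpening": 0.5, "motion_interpolation": 0.0},
}


def softmax(logits):
    """Convert raw classifier scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the maximum for numerical stability
    exps = {label: math.exp(score - peak) for label, score in logits.items()}
    total = sum(exps.values())
    return {label: value / total for label, value in exps.items()}


def blend_parameters(probabilities):
    """Weight each preset by its class probability and sum the results."""
    names = next(iter(PRESETS.values())).keys()
    return {
        name: sum(probabilities[label] * PRESETS[label][name] for label in PRESETS)
        for name in names
    }


# Assumed classifier output for one frame (made-up scores).
logits = {"sports": 2.0, "movie": 0.5, "game": -1.0}
probs = softmax(logits)
params = blend_parameters(probs)
print(params)
```

Blending by probability, rather than switching to the single most likely preset, is one way to avoid abrupt picture changes when the classifier is uncertain; a real product may also smooth the parameters over time.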

This evolution enables a continuously adaptive and refined user experience, moving away from generic, one-size-fits-all processing. The tangible result is a more immersive and satisfying viewing experience, directly impacting user satisfaction and perceived value.

How AI in Smart TVs Works

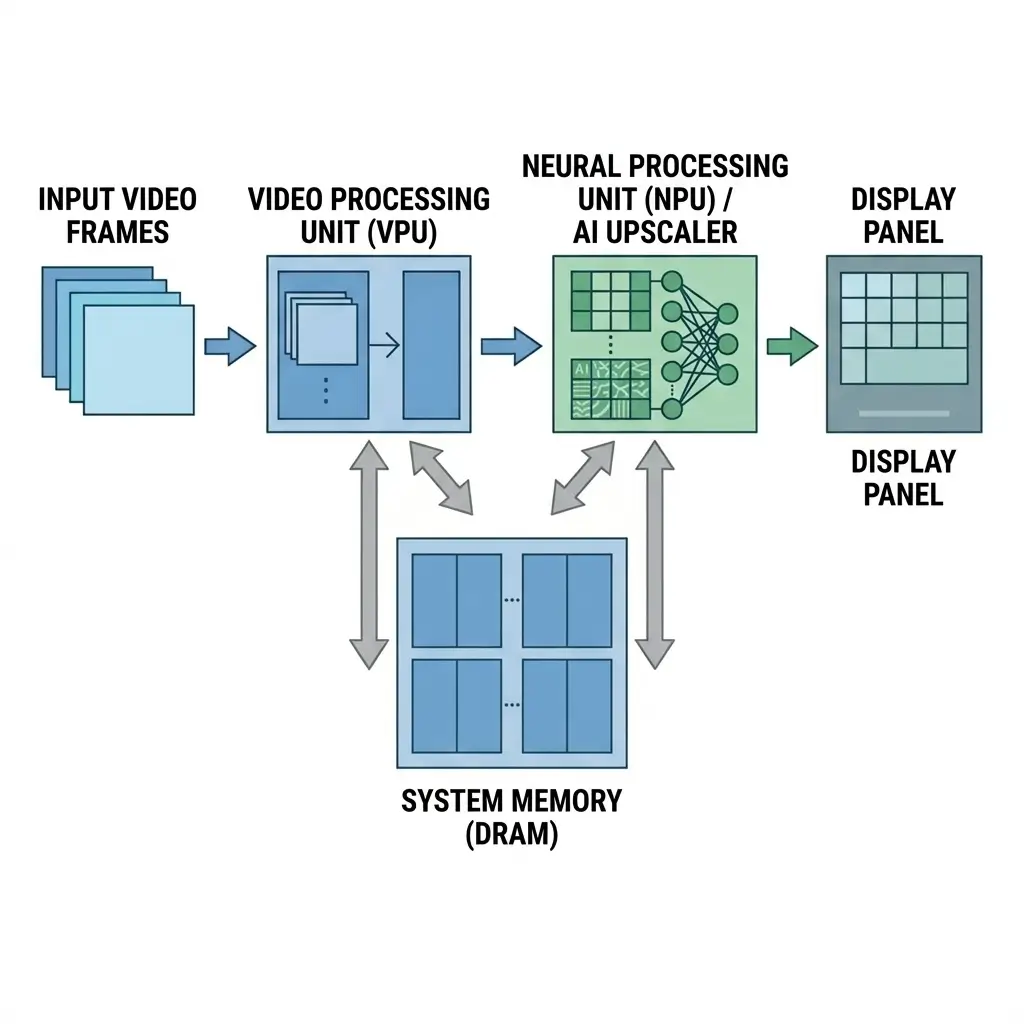

At its core, AI in Smart TVs operates by feeding raw video and audio data through specialized neural networks for inference. The process typically involves:

- Input Acquisition: The video processing unit (VPU) receives video from sources such as broadcast tuners, HDMI inputs, or streaming apps. Compressed streams, encoded with codecs such as H.264, HEVC, or AV1, are decoded into raw pixel data; HDMI sources typically arrive already uncompressed.

- Pre-processing: Raw video frames are often pre-processed—for example, through color space conversion or downsampling if the AI model operates at a lower internal resolution—before being fed to the AI engine.

- AI Inference: The pre-processed frames are then passed to a dedicated Neural Processing Unit (NPU) within the SoC. The NPU executes pre-trained AI models, such as convolutional neural networks for super-resolution, recurrent neural networks for temporal processing, or classifiers for scene detection. This involves numerous matrix multiplications and activation functions, processing the input data against stored model weights, similar to how AI glasses process vision in real time for immediate environmental understanding.

- Output Generation: For super-resolution, the NPU outputs an upscaled, enhanced frame. For scene detection, it outputs classification probabilities or parameter adjustment values.

- Post-processing and Display: The enhanced frames or adjusted parameters are then fed back into the VPU or display controller for final processing—such as color grading, gamma correction, or local dimming control—before being sent to the display panel.

- Memory Interaction: Throughout this process, the memory subsystem (DDR) is constantly engaged, buffering input frames, storing AI model weights, holding intermediate tensor data during inference, and buffering output frames. The sheer volume of high-resolution video data, coupled with the iterative nature of neural network operations, places immense pressure on memory bandwidth and latency. Inadequate memory performance can lead to dropped frames or reduced AI processing quality, directly impacting the fluidity and visual fidelity of the displayed content.
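
The stages above can be sketched as a chain of functions operating on a tiny grayscale frame. This is a toy illustration under stated assumptions: the function names are hypothetical, and `run_inference` is a nearest-neighbor 2x upscale standing in for the NPU, not a real super-resolution network.

```python
def preprocess(frame_8bit):
    """Normalize 8-bit samples to floats in [0, 1]."""
    return [[px / 255.0 for px in row] for row in frame_8bit]


def run_inference(frame):
    """Stand-in for the NPU: 2x nearest-neighbor upscale (NOT a trained model)."""
    out = []
    for row in frame:
        wide = [px for px in row for _ in range(2)]  # duplicate each pixel horizontally
        out.append(wide)
        out.append(list(wide))  # duplicate each row vertically
    return out


def postprocess(frame):
    """Clamp to [0, 1] and convert back to 8-bit for the display path."""
    return [[round(min(max(px, 0.0), 1.0) * 255) for px in row] for row in frame]


def process_frame(decoded_frame):
    """Run one decoded frame through pre-processing, inference, and post-processing."""
    return postprocess(run_inference(preprocess(decoded_frame)))


decoded = [[0, 128], [255, 64]]  # a 2x2 "decoded" frame
upscaled = process_frame(decoded)
print(upscaled)
```

In a real SoC each stage runs on a different hardware block and exchanges frames through buffers in DDR, which is why the memory interaction described above matters as much as the compute.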

Architecture of AI in Smart TVs

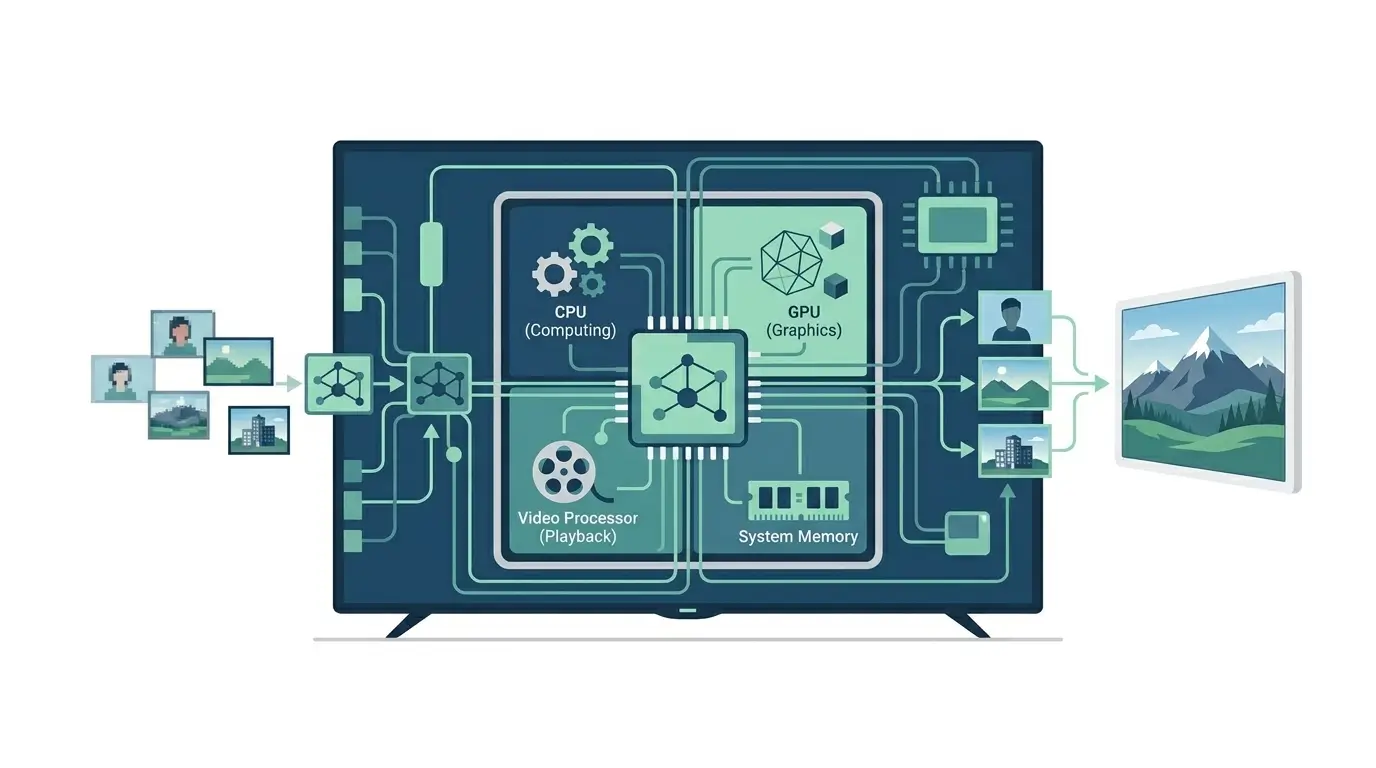

The architecture of an AI-enabled Smart TV SoC is a highly integrated system designed for real-time multimedia processing.

| Component | Primary Function | Memory Interaction Focus |
|---|---|---|
| CPU (Central Processing Unit) | System control, OS, user interface, general tasks | OS kernel, application code, network stacks, lower-priority data |
| GPU (Graphics Processing Unit) | UI rendering, some legacy video effects, and gaming | Frame buffers for UI, texture data, and shader code |
| VPU (Video Processing Unit) | Video decoding/encoding, scaling, de-interlacing | Raw video frame buffers (input/output), pre/post-processing data, display pipelines |
| NPU (Neural Processing Unit) | Dedicated AI model inference (Super-Resolution, Scene Detection) | AI model weights, intermediate tensors, input/output feature maps, temporal buffers |
| DDR (Double Data Rate SDRAM) | High-speed main system memory | Shared by all components and the most likely bottleneck, especially for the NPU and VPU; stores all active data |
| Display Controller | Panel interface, timing, and local dimming control | Final display frame buffer, timing parameters |

The NPU is the cornerstone of AI acceleration. Unlike general-purpose CPUs or GPUs, NPUs are architected specifically for the parallel matrix operations common in neural networks, offering significantly higher performance per watt. This is crucial for sustaining real-time 4K/8K AI processing within the TV’s power and thermal envelope.

The memory subsystem, typically LPDDR5/5X or multi-channel DDR5, is paramount. High-resolution video frames (e.g., 8K 60Hz 10-bit) demand gigabytes per second of raw data throughput. When an NPU processes these frames, it requires not only the input frame but also access to potentially large AI model weights and numerous intermediate tensors generated during inference. This results in multiple reads and writes to DDR per frame, making memory bandwidth and latency critical determinants of overall AI performance and the maximum resolution/frame rate the system can handle. Efficient memory controllers and sophisticated buffer management within the SoC are essential to prevent the DDR from becoming a bottleneck.
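
As a rough check on the raw throughput figure, the data rate of an uncompressed stream can be computed from resolution, frame rate, bit depth, and chroma subsampling. The sketch below assumes tightly packed samples with no padding or alignment overhead; real frame buffers often store 10-bit samples in 16-bit containers, which raises the numbers.

```python
# Average samples per pixel for common chroma subsampling formats.
SAMPLES_PER_PIXEL = {"4:2:0": 1.5, "4:2:2": 2.0, "4:4:4": 3.0}


def raw_bandwidth_gb_per_s(width, height, fps, bit_depth, chroma):
    """Raw (uncompressed, tightly packed) video data rate in GB/s (1 GB = 1e9 bytes)."""
    bits_per_frame = width * height * SAMPLES_PER_PIXEL[chroma] * bit_depth
    return bits_per_frame / 8 * fps / 1e9


for chroma in SAMPLES_PER_PIXEL:
    rate = raw_bandwidth_gb_per_s(7680, 4320, 60, 10, chroma)
    print(f"8K60 10-bit {chroma}: {rate:.2f} GB/s")
# 8K60 10-bit 4:2:0: 3.73 GB/s
# 8K60 10-bit 4:2:2: 4.98 GB/s
# 8K60 10-bit 4:4:4: 7.46 GB/s
```

These figures cover a single pass over the stream; every additional read or write of a frame by the VPU, NPU, or display controller adds to the total DDR traffic.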

Performance Characteristics

AI in Smart TVs must operate under strict performance constraints:

- Real-time Processing: This is the most critical characteristic. AI models must process 4K/8K video streams at high frame rates (e.g., 60Hz, 120Hz) with minimal end-to-end latency. Any noticeable delay between input and display is unacceptable for a fluid viewing experience.

- Memory Bandwidth: This is often the primary bottleneck. A single 8K 60Hz 10-bit video stream requires approximately 5 GB/s of raw data with 4:2:2 chroma subsampling (roughly 3.7 GB/s at 4:2:0 and 7.5 GB/s at 4:4:4). When AI models process this, the NPU needs to read the input frame, potentially multiple past frames for temporal processing, access model weights (which can be hundreds of megabytes or even gigabytes), and write intermediate and output tensors. This can easily push total memory bandwidth requirements into the tens or even hundreds of GB/s, necessitating advanced DDR technologies (LPDDR5/5X) and wide memory buses.

- Power and Thermal Envelopes: Smart TVs are consumer devices with limited space for active cooling. The NPU must perform its intensive computations within a strict power budget to prevent excessive heat generation, which could lead to throttling or component degradation. This drives the need for highly energy-efficient NPU architectures.

- Latency: For interactive content like gaming, AI processing must not introduce significant latency. This often means trading off some AI complexity or temporal processing for faster inference times.

- Model Size and Complexity: The size of the AI model (number of parameters) directly impacts its memory footprint and inference speed. Larger, more complex models can yield better results but demand more memory bandwidth and NPU compute cycles.
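
The constraints above can be turned into back-of-the-envelope numbers. The sketch below is illustrative only: `frame_passes` (how many frame-sized reads and writes hit DDR per frame), the 4 MB weight size, and the two-frame lookahead are made-up assumptions, not measurements from any real SoC.

```python
def frame_budget_ms(fps):
    """Time available to finish all processing for one frame."""
    return 1000.0 / fps


def lookahead_latency_ms(lookahead_frames, fps):
    """Extra delay from waiting for future frames before emitting the current one."""
    return lookahead_frames * frame_budget_ms(fps)


def ddr_traffic_gb_per_s(frame_bytes, fps, frame_passes, weight_bytes, weight_reads_per_frame):
    """Rough DDR traffic per second: frame-sized transfers plus weight fetches."""
    per_frame = frame_bytes * frame_passes + weight_bytes * weight_reads_per_frame
    return per_frame * fps / 1e9


# One tightly packed 4K 10-bit 4:2:0 frame: 1.5 samples per pixel, 10 bits each.
frame_bytes = 3840 * 2160 * 1.5 * 10 / 8

budget = frame_budget_ms(120)        # processing deadline per frame at 120 Hz
extra = lookahead_latency_ms(2, 60)  # delay added by a 2-frame lookahead at 60 Hz
traffic = ddr_traffic_gb_per_s(
    frame_bytes, fps=60, frame_passes=8, weight_bytes=4e6, weight_reads_per_frame=1
)

print(f"budget at 120 Hz: {budget:.2f} ms")      # 8.33 ms
print(f"2-frame lookahead at 60 Hz: {extra:.2f} ms")  # 33.33 ms
print(f"estimated DDR traffic: {traffic:.2f} GB/s")   # 7.70 GB/s
```

The model is deliberately simple: it treats every pass as input-frame sized, whereas upscaled outputs and intermediate feature maps can be considerably larger, so real traffic may be well above this estimate.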

Real-World Applications

- Super-Resolution (Upscaling): Transforms 1080p or 4K content to fill an 8K display with synthesized detail, making lower-resolution content appear sharper and more immersive.

- Dynamic Tone Mapping: AI models process HDR content frame-by-frame, optimizing brightness, contrast, and color rendition to match the specific capabilities of the display panel and the content’s artistic intent, thereby enhancing perceived dynamic range.

- Object-Based Picture Enhancement: AI models classify specific objects—such as faces, text, or landscapes—within a scene and apply targeted enhancements (e.g., sharpening text, smoothing skin tones, enhancing foliage detail) without affecting other areas.

- AI Motion Compensation: Provides advanced interpolation of intermediate frames to reduce motion blur and judder, especially in fast-moving scenes, by predicting object trajectories more accurately than traditional algorithms.

- AI Sound Pro/Adaptive Sound: AI models process audio content (dialogue, music, effects) to dynamically adjust equalization, vocal clarity, and virtual surround sound profiles, creating a more immersive and intelligible audio experience.

Limitations

Despite its advancements, AI in Smart TVs faces several engineering limitations and inherent tradeoffs:

- AI Quality vs. Sustained Thermal Budget: The most sophisticated AI models, offering superior perceptual quality, are computationally intensive. Sustained execution at 4K/8K 120Hz generates significant heat within the NPU. To manage thermals, TVs often dynamically throttle NPU clock speeds, switch to less complex (and thus lower quality) AI models, or reduce the frequency of AI processing (e.g., processing every other frame). This means peak AI performance is often not sustained performance, especially under prolonged heavy load.

- Latency vs. Temporal Coherence: Incorporating temporal information (multiple frames) for better upscaling or scene detection can improve visual stability and detail by reducing flickering and improving consistency. However, this requires buffering multiple frames, directly increasing end-to-end latency. For gaming or fast-action content, this can be detrimental, as the tradeoff is between visual stability/detail and input responsiveness, requiring careful system-level synchronization and buffer management.

- Model Robustness vs. “AI Hallucination”: While AI models can generate plausible detail, they can also generate incorrect detail (hallucination), especially with highly compressed or artifact-ridden source material. This can manifest as unnatural textures, distorted edges, or flickering artifacts. Engineers must tune models to avoid visually jarring artifacts, even if it means less aggressive upscaling in some scenarios, balancing aggressive detail synthesis with fidelity to the original content.

- Cost vs. AI “Intelligence”: The capabilities of a TV’s “AI Processor” are directly tied to the underlying NPU’s size, architecture, and the associated DDR bandwidth. A smaller, cheaper NPU might only run a lightweight super-resolution model and basic scene classification. In contrast, a premium NPU can handle multiple, more complex models for granular object detection, advanced temporal super-resolution, and sophisticated parameter adjustment. The effectiveness of “AI” therefore scales directly with hardware cost and the available memory subsystem resources.

- Generalization vs. Specificity: AI models trained on broad datasets may struggle with highly unique or niche content, potentially leading to suboptimal enhancements or even artifacts in specific scenarios not well represented in the training data.
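
The quality-versus-thermal tradeoff described in the first item can be sketched as a small governor that steps down to a cheaper model when the NPU runs hot and steps back up only after it has cooled. This is a hypothetical illustration: the tier names and temperature thresholds are assumptions, and real firmware would likely combine this with clock throttling and frame skipping.

```python
TIERS = ["full", "reduced", "minimal"]  # hypothetical model tiers, best quality first


class ThermalGovernor:
    """Step down a tier when hot; step back up only after cooling below a lower limit."""

    def __init__(self, hot_c=95.0, cool_c=80.0):
        self.hot_c = hot_c    # assumed threshold for reducing quality
        self.cool_c = cool_c  # assumed threshold for restoring quality
        self.level = 0        # index into TIERS

    def update(self, temperature_c):
        """Return the tier to use for the next interval given the current temperature."""
        if temperature_c >= self.hot_c and self.level < len(TIERS) - 1:
            self.level += 1
        elif temperature_c <= self.cool_c and self.level > 0:
            self.level -= 1
        return TIERS[self.level]


governor = ThermalGovernor()
history = [governor.update(t) for t in [70, 96, 90, 97, 85, 78, 75]]
print(history)
# ['full', 'reduced', 'reduced', 'minimal', 'minimal', 'reduced', 'full']
```

The gap between the two thresholds (hysteresis) keeps the governor from oscillating between tiers when the temperature hovers near a single limit, which would otherwise show up as visible changes in picture quality.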

Importance of AI in Smart TVs

The integration of AI into Smart TVs represents a fundamental shift in display technology, moving beyond static hardware capabilities to a dynamic, software-upgradable platform. This matters for several reasons:

- Future-Proofing and Continuous Improvement: NPU-centric designs enable over-the-air (OTA) updates, allowing manufacturers to deploy new, improved AI models, bug fixes, and entirely new features post-purchase. This extends the useful lifespan of the TV’s core value proposition—its picture and sound quality—without requiring hardware replacement.

- Enhanced User Experience: AI delivers a perceptually superior viewing experience by dynamically adapting to content, display capabilities, and even ambient room conditions based on processed patterns, offering a level of customization and optimization not achievable with traditional fixed-function pipelines.

- Bridging Content Gaps: AI-driven upscaling is critical for making lower-resolution legacy and streaming content look compelling on high-resolution 4K and 8K displays, thereby accelerating the adoption of these advanced panels.

- Efficiency and Performance: Dedicated NPUs provide superior performance per watt for AI inference compared to general-purpose CPUs or GPUs, making real-time, high-resolution AI processing feasible within the strict power and thermal constraints of consumer electronics.

- Competitive Differentiation: AI capabilities are a key differentiator in a mature market, driving innovation and providing tangible benefits that consumers can perceive.

Key Takeaways

- AI in Smart TVs uses dedicated NPUs for real-time super-resolution and dynamic scene detection, moving beyond fixed-function video processing.

- The memory subsystem, particularly high-bandwidth LPDDR5/5X, is a critical bottleneck due to large video frame sizes and AI model data requirements.

- Architectures integrate NPUs, VPUs, and CPUs on an SoC, with the NPU optimized for power-efficient neural network inference.

- Performance is constrained by real-time processing demands, memory bandwidth, and strict power/thermal envelopes.

- Key tradeoffs include AI quality versus thermal management, latency versus temporal processing, and model robustness versus potential “hallucinations.”

- AI enables future-proofing through OTA updates, continuous improvement, and enhanced user experiences, making it a vital component for modern display technology.