
Neuromorphic vs Traditional AI Chips: Traditional AI chips use a Von Neumann architecture for dense, high-performance computing, but they consume more power because data must move constantly between memory and compute. Neuromorphic chips use event-driven, in-memory processing for sparse workloads, enabling ultra-low power and low latency, which makes them well suited to edge AI applications.

The choice between neuromorphic and traditional AI chips is becoming a critical question as edge AI continues to grow, especially in power-constrained wearable devices. This shift is exposing new performance bottlenecks and runtime challenges in semiconductor design. Traditional AI accelerators, while powerful, often struggle to meet always-on, ultra-low-power demands due to architectural limitations.

This guide explores the key differences, trade-offs, and real-world impact of these two approaches for efficient AI processing.

Traditional vs Neuromorphic AI Chips: What They Do

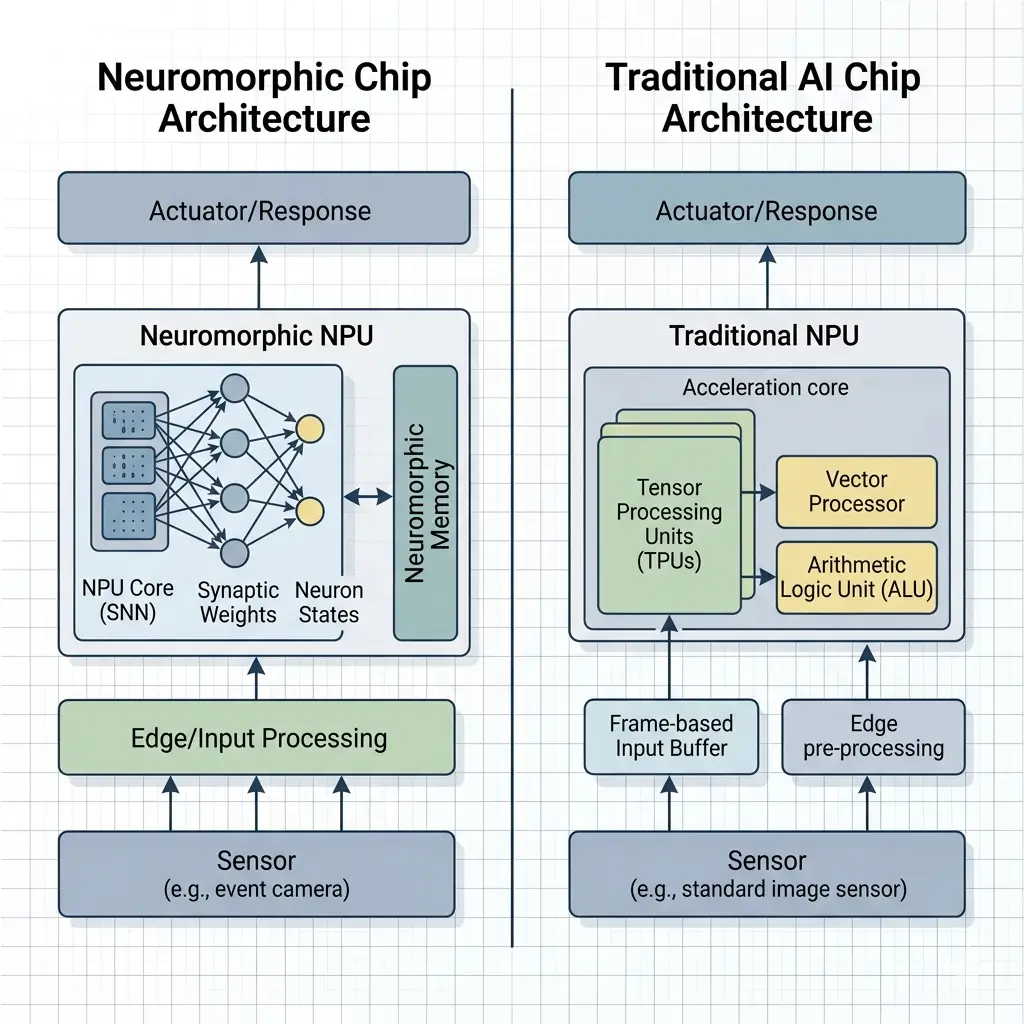

Traditional AI Chips (NPU/DSP-based). These architectures are purpose-built to accelerate Artificial Neural Networks (ANNs), which rely on dense matrix multiplications built from multiply-accumulate (MAC) operations. They follow a Von Neumann architecture that separates processing units (NPUs, DSPs) from memory (on-chip SRAM and off-chip DRAM). This design enables high-throughput, synchronous tensor computations, making them effective for models like CNNs and Transformers.

However, this approach requires frequent data movement between memory and compute units, which increases power consumption. As a result, these chips can drain battery quickly in continuous AI workloads, especially in on-device AI systems.

Neuromorphic AI Chips. Neuromorphic designs aim to emulate the brain’s event-driven, asynchronous, and parallel processing. They are fundamentally non-Von Neumann, integrating computation directly with memory (in-memory compute) to minimize data movement. These chips primarily accelerate Spiking Neural Networks (SNNs), where information is encoded in the timing of discrete “spikes” rather than in continuous values. Their core function is to process sparse, event-driven data streams with extremely low power and latency. Only the “neurons” and “synapses” touched by an event are activated, rather than every unit being clocked continuously. This event-driven processing significantly extends battery life in always-on sensor devices such as smart rings.
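To make the idea of spikes concrete, here is a minimal software sketch of a leaky integrate-and-fire (LIF) neuron, a common textbook SNN neuron model. It is an illustration only: the function name, `threshold`, and `leak` values are assumptions chosen for this example, and the loop runs in discrete time steps, whereas real neuromorphic hardware is asynchronous.

```python
def lif_spike_times(input_current, threshold=1.0, leak=0.9):
    """Return the time steps at which a simulated LIF neuron spikes.

    input_current: sequence of input values, one per time step.
    threshold, leak: illustrative parameters, not taken from any real chip.
    """
    potential = 0.0
    spikes = []
    for t, current in enumerate(input_current):
        # Leak part of the stored potential, then integrate the new input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(t)  # emit a discrete spike event
            potential = 0.0   # reset the membrane potential after firing
    return spikes


# Mostly quiet input with two bursts of activity.
print(lif_spike_times([0.6, 0.6, 0.0, 0.0, 0.3, 0.9]))
```

The output is a short list of spike times rather than a dense vector of values. With an all-zero input the neuron never fires, which is the property that lets event-driven hardware stay idle between events.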

Architectural Differences Between Neuromorphic and Traditional AI Chips

The architectural divergence between these two paradigms is profound, dictating their suitability for different AI workloads and runtime scheduling strategies.

| Feature | Traditional AI Chips (NPU/DSP-based) | Neuromorphic AI Chips |
|---|---|---|
| Core Architecture | Von Neumann (separate compute & memory) | Non-Von Neumann (in-memory compute, processing-in-memory) |
| Computational Paradigm | Dense, synchronous tensor operations (MACs) | Sparse, asynchronous, event-driven spiking computations |
| Data Representation | Floating-point or fixed-point tensors | Discrete spikes, event streams |
| Memory Access | Explicit data movement between registers, caches, off-chip DRAM | Localized memory access, computation at memory location |
| Clocking | Global synchronous clock | Asynchronous, event-driven communication (no global clock) |
| Power Model | Data movement dominant, high static power | Computation dominant, ultra-low dynamic power, near-zero static |
| Primary Optimization | Throughput for dense matrix operations | Energy efficiency and latency for sparse, event-driven data |
| Learning Paradigm | Primarily inference (pre-trained models), some on-chip training | Inference, strong potential for on-chip unsupervised/supervised learning |

Performance Comparison: Speed, Latency, and Throughput

Traditional AI Chips: These chips deliver high peak performance for dense workloads. Their strength lies in rapidly processing large batches of data, achieving high MACs/second. However, this peak performance is often bursty. For continuous, complex tasks in wearables, sustained performance is limited by thermal dissipation capabilities. Runtime scheduling often involves waking the NPU, loading data, executing a burst, and returning to a low-power state. The latency for sporadic tasks includes wake-up time, data transfer, and processing, which can introduce noticeable delays.
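The latency components named above (wake-up, data transfer, processing) can be written as a simple budget. The sketch below is a hedged illustration: the `BurstProfile` class and the millisecond figures are hypothetical and do not describe any specific NPU.

```python
from dataclasses import dataclass


@dataclass
class BurstProfile:
    """Hypothetical timing of one wake-and-infer cycle, in milliseconds."""
    wake_ms: float      # time to bring the accelerator out of its low-power state
    transfer_ms: float  # time to load inputs (and any weights) into the accelerator
    compute_ms: float   # time spent on the inference itself

    def total_ms(self) -> float:
        return self.wake_ms + self.transfer_ms + self.compute_ms

    def overhead_fraction(self) -> float:
        """Share of the total latency that is not useful computation."""
        return (self.wake_ms + self.transfer_ms) / self.total_ms()


# Made-up numbers, for illustration only.
profile = BurstProfile(wake_ms=2.0, transfer_ms=1.0, compute_ms=1.0)
print(profile.total_ms(), profile.overhead_fraction())
```

When the compute burst is short, wake-up and transfer can dominate the end-to-end delay, which is why sporadic tasks feel slower than peak MACs/second figures suggest.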

Neuromorphic AI Chips: Neuromorphic chips offer ultra-low latency and power for specific event-driven tasks. Because computation happens where data resides and only when an event occurs, they can respond almost instantaneously to sensor inputs. Their performance shines on continuous, sparse data streams (e.g., audio or vision events). For dense, traditional ANN workloads, direct mapping can be inefficient or require complex conversion, potentially leading to lower effective throughput than specialized NPUs achieve. For their target applications, however, “always-on” responsiveness at microwatt power levels is highly advantageous.

Power Efficiency and Thermal Behavior

Traditional AI Chips: Power consumption is dominated by data movement, particularly accessing off-chip DRAM. Even with aggressive power gating, the static power floor from a vast number of transistors and complex clock distribution networks limits true “always-on” efficiency. High clock frequencies and simultaneous activation of many MAC units lead to significant power density and localized hot spots. In compact wearable form factors, this necessitates aggressive thermal management (throttling, passive/active cooling), directly impacting sustained performance and battery life. Runtime scheduling must carefully manage these power states to balance performance and energy.
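The effect of the static power floor on a duty-cycled accelerator can be sketched with a simple average-power model. This is a simplification under stated assumptions: two power states only, no transition energy, and hypothetical numbers chosen for illustration.

```python
def average_power_mw(active_mw, sleep_mw, duty_cycle):
    """Average power of a chip that is active for `duty_cycle` of the time.

    Assumes two power states and ignores the energy spent switching between them.
    """
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_mw + (1.0 - duty_cycle) * sleep_mw


def battery_life_hours(battery_mwh, avg_mw):
    """Idealized battery life, ignoring conversion losses and other loads."""
    return battery_mwh / avg_mw


# Hypothetical figures: 100 mW while active, 1 mW sleep floor, active 1% of the time.
avg = average_power_mw(active_mw=100.0, sleep_mw=1.0, duty_cycle=0.01)
print(avg, battery_life_hours(battery_mwh=100.0, avg_mw=avg))
```

With these made-up figures, the sleep floor accounts for roughly half of the average power even though the chip is idle 99% of the time, which is the limitation the paragraph above describes.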

Neuromorphic AI Chips: These chips are designed for extreme energy efficiency. By integrating compute and memory and operating asynchronously, they significantly reduce the energy cost of data movement across large distances. Only the “neurons” and “synapses” involved in processing an event consume power, leading to ultra-low dynamic power consumption. The absence of a global clock and the sparse, event-driven nature result in significantly lower static power. This makes them ideal for truly “always-on” applications in wearables, where continuous, low-power operation is paramount, and thermal dissipation is a major constraint.
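A rough way to reason about this is to compare a dense pass, which touches every synapse, with an event-driven pass, which touches only the synapses reached by a spike. The sketch below is a first-order model with hypothetical per-operation energies; it ignores static power, routing, and memory hierarchy effects.

```python
def dense_energy_pj(num_synapses, pj_per_mac):
    """Energy of a dense pass in which every synapse performs one MAC."""
    return num_synapses * pj_per_mac


def event_energy_pj(num_synapses, activity, pj_per_synop):
    """Energy of an event-driven pass.

    activity: fraction of synapses that receive a spike (0.0 to 1.0).
    pj_per_synop: assumed energy of one synaptic event, in picojoules.
    """
    return num_synapses * activity * pj_per_synop


def break_even_activity(pj_per_mac, pj_per_synop):
    """Activity level above which the event-driven pass stops being cheaper."""
    return min(1.0, pj_per_mac / pj_per_synop)


# Hypothetical values: a synaptic event costs twice as much as one MAC.
print(dense_energy_pj(1000, 1.0))
print(event_energy_pj(1000, 0.05, 2.0))
print(break_even_activity(1.0, 2.0))
```

Under these assumptions the event-driven pass wins only while activity stays below the break-even level, which is why the advantage is tied to sparse workloads.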

Memory Architecture and Bandwidth Optimization

Traditional AI Chips: Memory is a critical bottleneck. Model weights and activations often reside in off-chip DRAM, requiring high-bandwidth interfaces (e.g., LPDDR5). The Von Neumann architecture dictates constant data movement between memory and compute units, consuming significant energy and bandwidth. On-chip SRAM caches and scratchpads mitigate this, but for larger models or continuous data streams, off-chip access is unavoidable. Runtime scheduling must optimize data locality and minimize external memory transactions to improve efficiency. High bandwidth requirements can increase manufacturing costs and power consumption, impacting the bill of materials for edge devices.
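As a back-of-the-envelope illustration of why off-chip access becomes unavoidable for larger models, the sketch below estimates how many weight bytes spill past on-chip SRAM. It is deliberately simplistic: it assumes spilled weights are re-read from DRAM on every inference, ignores activations, and uses hypothetical sizes.

```python
def offchip_bytes_per_inference(num_params, bits_per_weight, sram_bytes):
    """Weight bytes that do not fit in SRAM and must be fetched from DRAM.

    Simplifying assumption: the spilled portion is re-read on every inference.
    """
    weight_bytes = (num_params * bits_per_weight) // 8
    return max(0, weight_bytes - sram_bytes)


def required_bandwidth_bytes_per_s(bytes_per_inference, inferences_per_s):
    """Sustained DRAM bandwidth needed to keep up with the inference rate."""
    return bytes_per_inference * inferences_per_s


sram = 512 * 1024  # hypothetical 512 KiB of on-chip SRAM

# A 1M-parameter model at 8 bits spills past SRAM; at 4 bits it fits.
spill_8bit = offchip_bytes_per_inference(1_000_000, 8, sram)
spill_4bit = offchip_bytes_per_inference(1_000_000, 4, sram)
print(spill_8bit, spill_4bit)
print(required_bandwidth_bytes_per_s(spill_8bit, inferences_per_s=10))
```

Even this crude model shows why data locality matters to the runtime: shrinking the working set until it fits on-chip removes the external memory traffic entirely.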

Neuromorphic AI Chips: Memory and compute are co-located, often at the synapse level, largely bypassing the Von Neumann bottleneck. Data is processed in-place, and communication between “neurons” is typically local and event-driven, requiring minimal global bandwidth. This architecture inherently reduces the need for large, high-bandwidth off-chip memory, leading to a much smaller memory footprint and significantly lower energy consumption associated with data transfer. This enables highly compact system designs suitable for wearables.

Software Ecosystem and Development Challenges

Traditional AI Chips: The software ecosystem is mature and robust. Developers benefit from widely adopted frameworks and model formats such as TensorFlow, PyTorch, and ONNX, along with extensive libraries, compilers, and optimization tools provided by chip vendors, such as Intel's OpenVINO toolkit. This allows for relatively straightforward deployment of complex, pre-trained ANN models with established workflows and a large talent pool. Runtime scheduling is often handled by sophisticated drivers and AI runtimes that manage resource allocation, memory, and power states. The mature ecosystem allows for faster time-to-market for new AI features in consumer electronics.

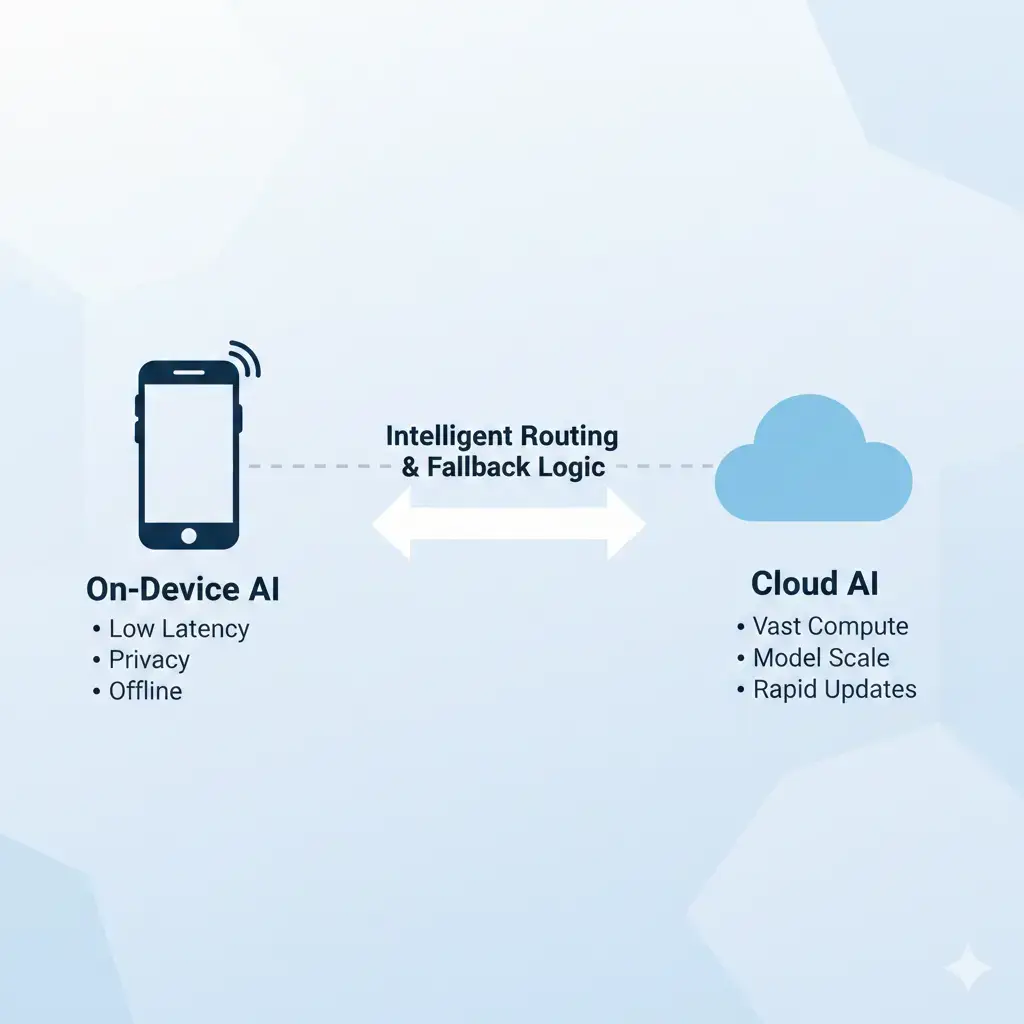

Neuromorphic AI Chips: The software ecosystem is nascent and evolving. Programming SNNs and mapping them to neuromorphic hardware requires specialized knowledge and different programming paradigms. While frameworks like Lava (Intel) and snnTorch are emerging, they are less mature and less widely adopted than traditional ANN tools. Converting existing ANNs to SNNs can be complex, often involving accuracy tradeoffs and specialized training techniques. This raises the barrier to entry for developers and requires a shift in AI model development and runtime scheduling philosophy, which in turn affects strategies for edge AI, hybrid AI, and cloud AI architectures. The nascent tooling can delay product development cycles for novel neuromorphic applications.
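One ingredient that often appears in ANN-to-SNN conversion is rate coding, where a continuous activation becomes a spike frequency. The sketch below is a plain-Python illustration of that idea and of the accuracy tradeoff it introduces. It does not use or represent the Lava or snnTorch APIs, and the function names are assumptions made for this example.

```python
def rate_encode(activation, num_steps):
    """Encode an activation in [0, 1] as a deterministic spike train.

    A spike is emitted each time the running sum of the activation crosses 1,
    so the spike count approximates activation * num_steps.
    """
    if not 0.0 <= activation <= 1.0:
        raise ValueError("activation must be in [0, 1]")
    train = []
    accumulator = 0.0
    for _ in range(num_steps):
        accumulator += activation
        if accumulator >= 1.0:
            train.append(1)
            accumulator -= 1.0
        else:
            train.append(0)
    return train


def rate_decode(train):
    """Recover the approximate activation as the mean firing rate."""
    return sum(train) / len(train)


print(rate_encode(0.5, 8), rate_decode(rate_encode(0.5, 8)))
# With only 4 time steps, 0.3 cannot be represented exactly.
print(rate_encode(0.3, 4), rate_decode(rate_encode(0.3, 4)))
```

A short time window limits how precisely a value can be represented, while a longer window costs latency and spikes. That tension is one source of the accuracy tradeoffs mentioned above.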

Real-World Applications and Deployment

Traditional AI Chips: Ideal for applications requiring high accuracy and complex AI models, often with bursty data processing. Examples include high-resolution image recognition, natural language processing (e.g., local LLM inference), and complex sensor fusion where occasional, intense computation is acceptable. In wearables, they are typically used for “wake-word” detection followed by a burst of more complex processing, or for tasks where latency isn’t ultra-critical. Their general-purpose acceleration makes them versatile for a wide range of AI tasks.

Neuromorphic AI Chips: Best suited for continuous, ultra-low-power, event-driven tasks where real-time responsiveness and minimal energy consumption are paramount. Examples include always-on keyword spotting, anomaly detection in sensor streams (e.g., industrial IoT, predictive maintenance), bio-signal analysis, and real-time gesture recognition. In wearables like smart rings, they enable truly “always-on” sensing and initial processing without significant battery drain, acting as an intelligent pre-processor or a standalone low-power AI engine.

Which Design Is More Efficient

The “more efficient” design depends entirely on the workload characteristics and system constraints, particularly regarding runtime scheduling.

- Traditional AI Chips are more efficient for dense, bursty, high-accuracy ANN workloads where peak throughput is critical, and the system can tolerate intermittent high power draw and thermal events. Their efficiency is measured in operations per second per watt for these specific tasks, assuming the data is already on-chip. However, their overall system efficiency for “always-on” scenarios is often hampered by data movement and static power overhead, requiring complex hierarchical runtime scheduling.

- Neuromorphic AI Chips are more efficient for sparse, continuous, event-driven Spiking Neural Network (SNN) workloads where ultra-low power, minimal latency, and truly “always-on” operation are non-negotiable. Their efficiency is measured in events processed per joule, or sustained operations per watt at extremely low power levels. They excel where the energy cost of waking up a traditional NPU and moving data would far exceed the cost of the computation itself, and they simplify runtime scheduling for continuous monitoring.

For wearable AI, a hybrid approach is often the most pragmatic, leveraging a neuromorphic chip for always-on, ultra-low-power event detection and pre-processing, which then selectively wakes a traditional NPU for more complex, bursty inference tasks. This hierarchical runtime scheduling optimizes for both continuous vigilance and high-fidelity processing, highlighting the nuanced trade-offs between Neuromorphic vs Traditional AI Chips.
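The hierarchical scheduling described here can be sketched as a two-stage pipeline: a cheap always-on stage filters the sensor stream, and the expensive stage runs only on detected events. The code below is a software analogy under stated assumptions. The threshold detector stands in for the neuromorphic front end, `heavy_model` stands in for NPU inference, and all names are hypothetical.

```python
def run_hybrid(samples, event_threshold, heavy_model):
    """Run a two-stage pipeline over a stream of sensor samples.

    Stage 1 (always-on): a simple magnitude check standing in for the
    event-driven detector.
    Stage 2 (on demand): `heavy_model`, standing in for a burst of NPU inference.
    Returns the (index, result) pairs and the number of stage-2 wake-ups.
    """
    results = []
    npu_wakeups = 0
    for index, sample in enumerate(samples):
        if abs(sample) < event_threshold:
            continue  # no event: the expensive stage stays asleep
        npu_wakeups += 1
        results.append((index, heavy_model(sample)))
    return results, npu_wakeups


def classify(sample):
    """Placeholder for a more complex model."""
    return "up" if sample > 0 else "down"


stream = [0.01, 0.02, 0.9, 0.03, -1.2]
print(run_hybrid(stream, event_threshold=0.5, heavy_model=classify))
```

In this toy stream the expensive stage is invoked for two of five samples. The sparser the events, the more of the energy budget is decided by the always-on stage rather than by the NPU.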

Key Takeaways

- Traditional AI Chips excel at dense, synchronous ANN computations, offering high peak performance but facing energy and thermal bottlenecks from data movement and static power, especially for “always-on” wearable applications.

- Neuromorphic AI Chips leverage an event-driven, in-memory compute paradigm for sparse SNNs, achieving ultra-low power and latency by minimizing data movement, making them ideal for continuous, real-time sensor processing.

- Runtime scheduling is fundamentally different: Traditional chips manage bursty activity and power states, while Neuromorphic chips are inherently “always-on” and event-responsive.

- The choice between Neuromorphic vs Traditional AI Chips is a critical architectural tradeoff driven by the specific workload’s density, sparsity, and the system’s power, latency, and thermal constraints.

- For extreme edge AI in wearables, a hybrid architecture often provides the best balance, combining the strengths of both paradigms through intelligent hierarchical runtime scheduling.