
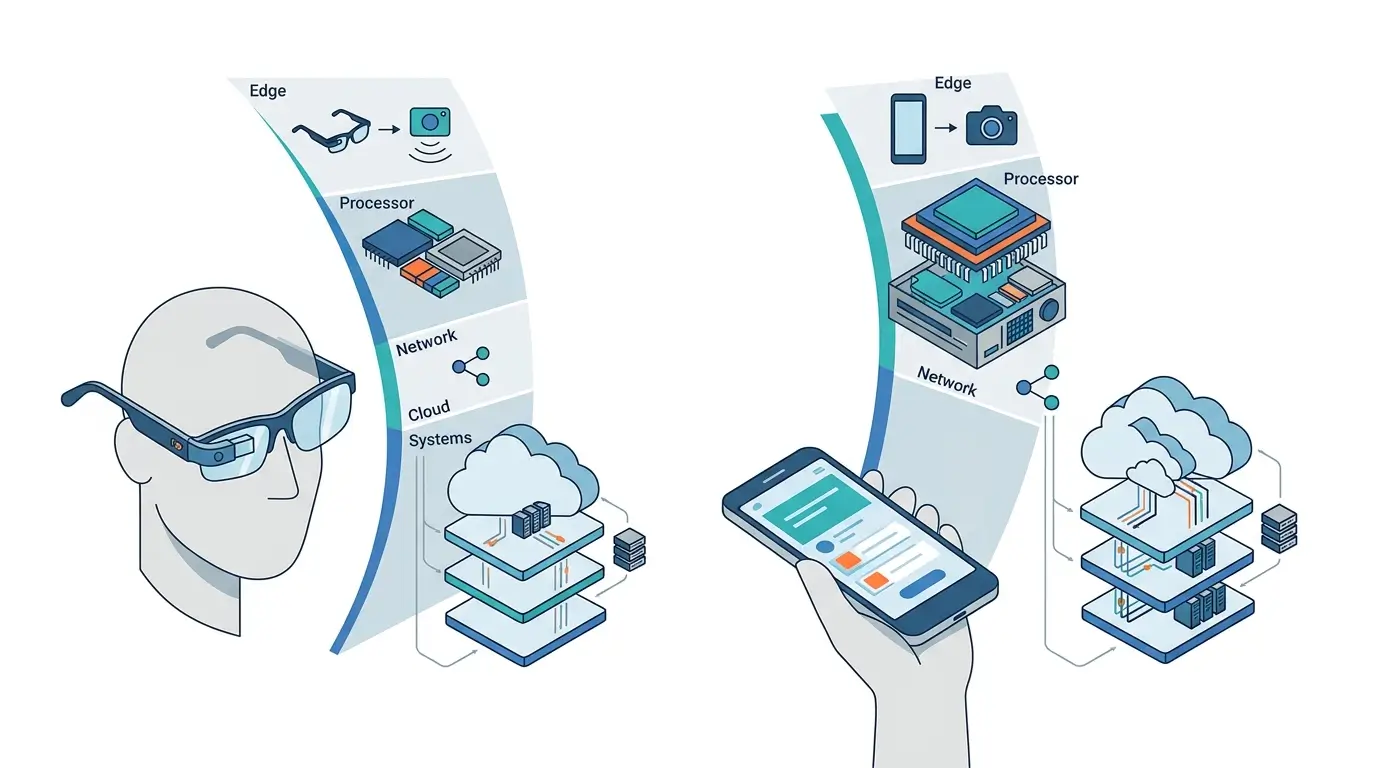

Personal AI is moving toward a distributed compute system in which AI glasses and smartphones play distinct, complementary roles. AI glasses prioritize ultra-low-latency egocentric perception and immediate feedback within severe power and thermal envelopes. Smartphones serve as the backend for complex, sustained AI workloads, using their greater compute, battery capacity, and thermal headroom so that the combined system balances immediacy with advanced intelligence.

The evolving landscape of personal artificial intelligence is increasingly defined by the interplay between AI glasses and smartphones. Comparing the two requires examining how these devices distribute computational workloads to work within the physical constraints of silicon, particularly limits on power consumption, thermal dissipation, and compact form factors.

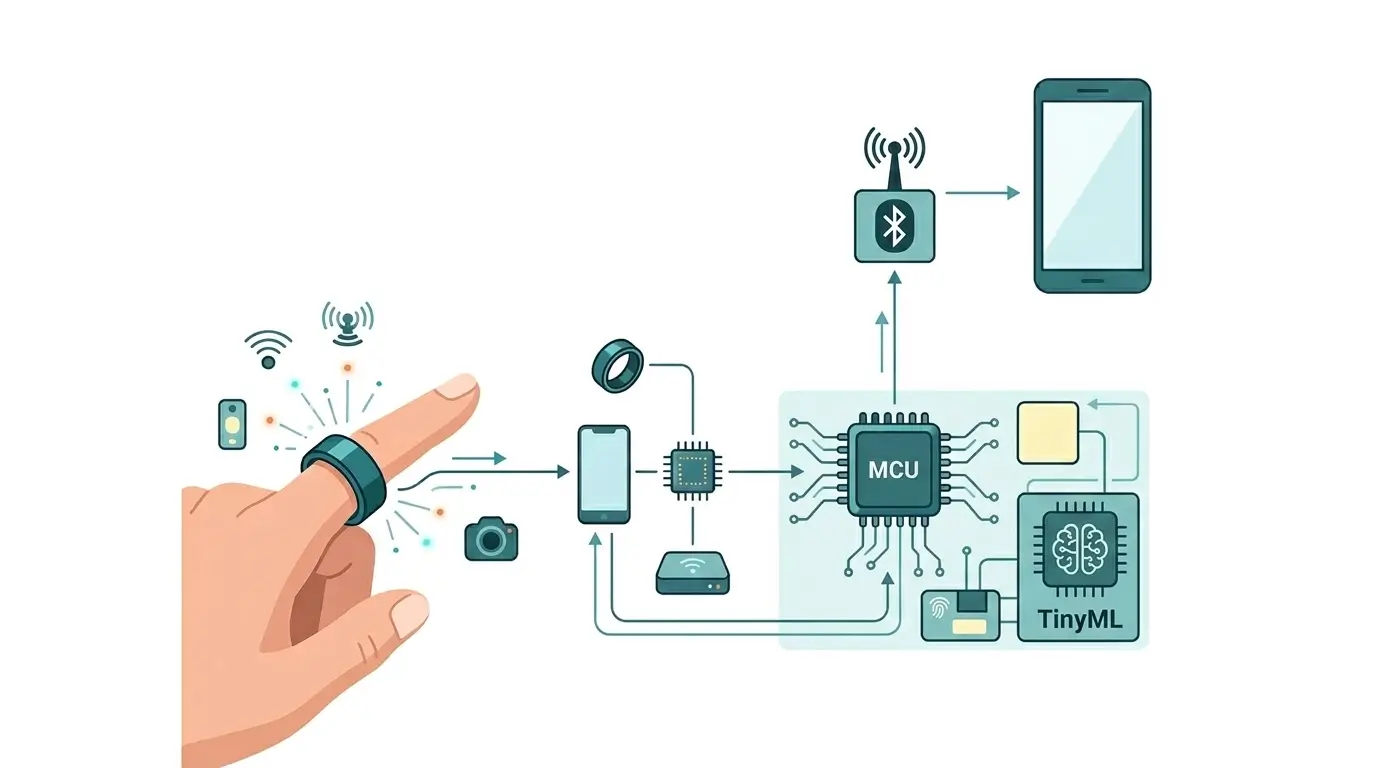

Integrating sophisticated AI capabilities into wearable devices presents a formidable engineering challenge. Monolithic designs struggle to support always-on, real-time interaction while handling the computational intensity of modern AI models. As a result, emerging AI ecosystems rely on distributed architectures, where AI glasses handle sensing and user interaction while smartphones provide the heavy computational backend.

This analysis explores how the AI glasses–smartphone pairing forms a heterogeneous system that overcomes these constraints, enabling a performant and power-efficient personal AI experience.

What Each Architecture Does

AI Glasses: AI glasses function as the primary egocentric interface and sensor hub for the user. Their core purpose is to provide ultra-low-latency, real-time perception and immediate feedback. This includes tasks such as gaze tracking, voice activity detection (VAD), simple object recognition, and rendering augmented reality (AR) overlays or audio cues. The on-device compute is highly specialized, optimized for burst-mode execution of highly quantized models (INT4/INT8 inference) on dedicated ultra-low-power Digital Signal Processors (DSPs) and Neural Processing Units (NPUs). Their runtime environment is meticulously designed for rapid context switching and event-driven processing, minimizing quiescent power consumption, which is crucial for offline AI execution scenarios where continuous cloud connectivity isn’t guaranteed.
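To make the "highly quantized models" point concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is one common scheme chosen for illustration, not necessarily what any particular glasses NPU toolchain uses, and the function names and sample weights are hypothetical.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats onto integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # float value of one integer step
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.5, -1.0, 0.25, 0.0]  # illustrative values, not from a real model
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, worst_error)
```

Each weight is stored in one byte instead of four, at the cost of a rounding error of at most half a quantization step per weight.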

Smartphones (as a Distributed Personal AI System Component): Within this distributed paradigm, the smartphone acts as the robust computational backend. It is engineered for sustained, complex AI workloads that exceed the glasses’ on-device capabilities. This encompasses tasks like multi-modal generative AI, sophisticated scene understanding, large language model (LLM) inference, and long-term memory retrieval. Smartphones leverage their high-performance multi-TOPS NPUs, substantial RAM, and advanced thermal management to support these power-intensive computations, ensuring sustained performance for demanding AI tasks. They also provide the necessary high-bandwidth wireless connectivity (5G, Wi-Fi 6E/7) to offload tasks to cloud AI services when local processing is insufficient.

AI Glasses vs Smartphones: Architectural Differences

The fundamental architectural divergence between AI glasses and smartphones stems from their primary design objectives and physical constraints.

| Feature | AI Glasses | Smartphone (as Distributed AI Backend) |
|---|---|---|
| Form Factor | Extreme miniaturization, unobtrusive wearability | Larger, screen-centric, designed for handheld use |
| Thermal Envelope | Milliwatts to low single-digit watts (constrained) | Tens of watts (more robust thermal management) |
| Power Budget | Ultra-low power, always-on for critical tasks | High-capacity battery for sustained, power-intensive tasks |
| Primary Compute | Dedicated, ultra-low-power DSPs/NPUs | High-performance multi-TOPS NPUs, CPUs, GPUs |
| Model Quantization | Highly optimized, INT4/INT8 | INT8/FP16/FP32 capable, larger model support |
| Memory | Minimal on-device RAM (e.g., LPDDR4X, SRAM) | Large RAM capacity (8GB-16GB+ LPDDR5/5X) |
| Connectivity | Bluetooth LE, short-range Wi-Fi (to smartphone) | 5G, Wi-Fi 6E/7, advanced Bluetooth |
| Role in AI System | Egocentric sensor, immediate feedback, burst AI | Complex AI processing, long-term memory, cloud gateway |

AI Glasses vs Smartphones Performance Comparison

AI Glasses: AI glasses excel at ultra-low-latency, reactive tasks. Direct sensor integration minimizes data transfer latency to the on-device NPU, enabling near-instantaneous responses for actions like gaze-driven UI or wake-word detection. However, their performance is severely bottlenecked by limited computational throughput (TOPS) and memory, which prevents the execution of large, complex AI models. Sustained inference workloads quickly trigger thermal throttling, illustrating the gap between sustained AI performance and peak TOPS in compact edge AI devices.

Smartphones (as a Distributed AI Backend): Smartphones offer high sustained computational performance, capable of executing larger, more complex models such as local LLMs or multi-modal encoders. Their robust thermal management allows for longer periods of high-performance computing without significant throttling. The primary performance limitation in a distributed context is the inherent latency introduced by wireless communication when processing data streamed from the glasses.

Distributed System Performance: The distributed system achieves a synergistic performance profile. Time-critical, simple tasks are handled on the glasses, ensuring immediacy. Complex, latency-tolerant tasks are offloaded to the smartphone, leveraging its superior compute. The critical performance bottleneck for the overall system lies in the efficiency of inter-device communication (latency, bandwidth) and the intelligence of the workload offloading scheduler. Inefficient scheduling or excessive data transfer overhead can introduce perceived latency, degrading the user experience despite the raw compute power available.
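The routing decision described above can be sketched as a simple latency estimate. This is a toy policy under stated assumptions: the `Task` fields, the `route` function, and all timing and link numbers are hypothetical, and a real scheduler would also weigh power, thermal state, and link quality.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Task:
    name: str
    deadline_ms: float          # how long the user can wait for a result
    payload_kb: float           # data sent to the phone if offloaded
    local_ms: Optional[float]   # estimated time on the glasses; None if the model does not fit
    remote_ms: float            # estimated inference time on the phone


def offload_latency_ms(task, link_rtt_ms, link_kbps):
    """Round trip plus payload transfer plus phone-side inference."""
    transfer_ms = task.payload_kb * 8 / link_kbps * 1000
    return link_rtt_ms + transfer_ms + task.remote_ms


def route(task, link_rtt_ms, link_kbps):
    """Prefer the glasses when they meet the deadline; otherwise pick the faster path."""
    if task.local_ms is None:
        return "phone"
    if task.local_ms <= task.deadline_ms:
        return "glasses"
    remote = offload_latency_ms(task, link_rtt_ms, link_kbps)
    return "phone" if remote < task.local_ms else "glasses"


wake = Task("wake_word", deadline_ms=50, payload_kb=2, local_ms=5, remote_ms=1)
caption = Task("scene_caption", deadline_ms=1500, payload_kb=200, local_ms=None, remote_ms=400)
for task in (wake, caption):
    print(task.name, "->", route(task, link_rtt_ms=20, link_kbps=20_000))
```

Even in this simplified form, the transfer term shows why a slow or congested link can erase the phone's compute advantage for small, time-critical tasks.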

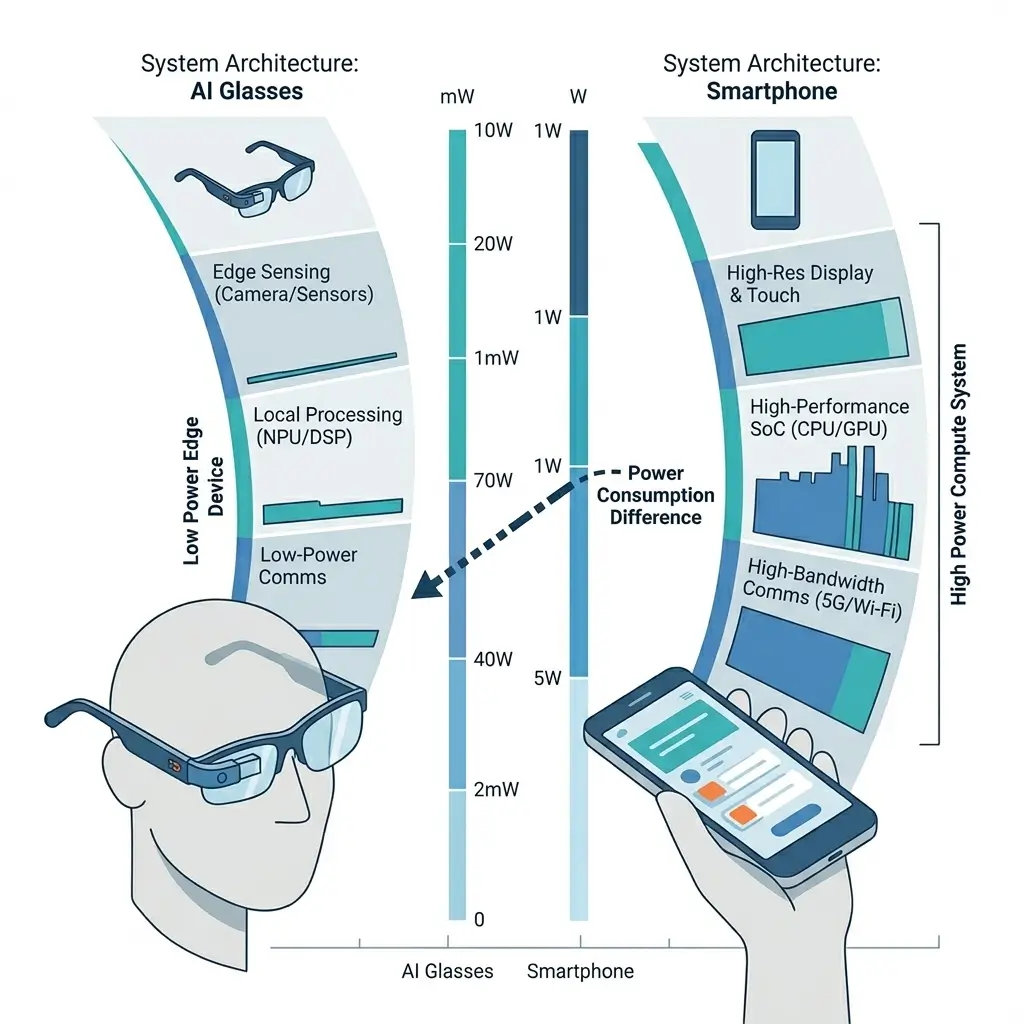

Power & Thermal Behavior

AI Glasses: AI glasses are architected for extreme power efficiency, prioritizing ultra-low quiescent power and highly bursty active power consumption. Silicon design emphasizes low-power process nodes, aggressive voltage/frequency scaling, and millisecond-level power gating to minimize energy draw during idle periods and optimize for rapid inference bursts. The thermal envelope is severely restricted, typically allowing only milliwatts to low single-digit watts of sustained power dissipation. Exceeding this limit quickly triggers thermal throttling, which reduces performance or leads to uncomfortable device heating.
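The burst-mode behavior can be approximated with a duty-cycle model: average power is a weighted mix of active and idle power. The sketch below is a steady-state simplification that ignores thermal mass and transient heating, and the wattage figures are illustrative assumptions rather than measurements of any device.

```python
def average_power_w(active_w, idle_w, duty_cycle):
    """Average power when the NPU is active for a fraction `duty_cycle` of the time."""
    return duty_cycle * active_w + (1 - duty_cycle) * idle_w


def max_duty_cycle(active_w, idle_w, thermal_limit_w):
    """Largest active fraction that keeps average power at or below the thermal limit."""
    if thermal_limit_w >= active_w:
        return 1.0
    if thermal_limit_w <= idle_w:
        return 0.0
    return (thermal_limit_w - idle_w) / (active_w - idle_w)


# Hypothetical numbers: 1.5 W during inference bursts, 10 mW idle, 0.3 W sustained limit.
duty = max_duty_cycle(active_w=1.5, idle_w=0.01, thermal_limit_w=0.3)
print(f"NPU can be active about {duty:.0%} of the time")
```

Under these assumed figures the NPU can run inference for only about a fifth of the time, which is why sustained workloads are better placed on the phone.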

Smartphones (as Distributed AI Backend): Smartphones, with their larger form factor and battery capacity, accommodate significantly higher power consumption (peaking in the tens of watts). They incorporate sophisticated Power Management Units (PMUs) and advanced cooling solutions (e.g., vapor chambers, graphite sheets) to manage thermal dissipation and sustain high-performance computing for extended durations.

Distributed System Power: Achieving power efficiency in the distributed system requires a holistic power budgeting strategy. While offloading compute to the smartphone can save power on the glasses, the energy cost of high-bandwidth wireless communication and sustained smartphone NPU usage must be considered. An aggressive offloading strategy might deplete the smartphone battery faster than if the glasses attempted more local processing within their limits. The system’s overall power efficiency is contingent on an intelligent, power-aware scheduler that dynamically balances the energy cost of data transfer, inference on each device, and their respective idle states, leveraging Dynamic Voltage and Frequency Scaling (DVFS) and power gating across both devices.
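A rough energy comparison illustrates this trade-off. The sketch below uses hypothetical power and radio figures, and it deliberately ignores the phone's receive-side radio cost and the glasses' idle draw while waiting, so treat it as a way to reason about the budget rather than a model of real hardware.

```python
def local_energy_mj(glasses_power_mw, local_ms):
    """Energy the glasses spend running inference themselves (mW * ms = microjoules)."""
    return glasses_power_mw * local_ms / 1000


def offload_energy_mj(payload_bytes, radio_nj_per_bit, phone_power_mw, phone_ms):
    """Per-device energy when the glasses transmit the input and the phone runs the model."""
    radio_mj = payload_bytes * 8 * radio_nj_per_bit / 1e6   # nJ -> mJ
    phone_mj = phone_power_mw * phone_ms / 1000
    return {"glasses": radio_mj, "phone": phone_mj, "total": radio_mj + phone_mj}


# Illustrative assumptions: 300 mW for 200 ms locally, versus sending 50 kB
# at 50 nJ/bit and running 40 ms on a 2 W phone NPU.
local = local_energy_mj(glasses_power_mw=300, local_ms=200)
offload = offload_energy_mj(payload_bytes=50_000, radio_nj_per_bit=50,
                            phone_power_mw=2000, phone_ms=40)
print("local on glasses:", local, "mJ")
print("offloaded:", offload)
```

With these assumed numbers, offloading cuts the glasses' energy from 60 mJ to 20 mJ but raises the system total to 100 mJ, which is the kind of shift a power-aware scheduler has to weigh against each device's remaining battery.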

Memory & Bandwidth Handling

AI Glasses: Memory on AI glasses is minimal, typically comprising a small amount of LPDDR4X or embedded SRAM for local model weights and immediate sensor data buffers. Memory bandwidth is optimized for rapid, burst access. The limited memory capacity is a fundamental constraint, preventing the execution of large foundation models locally. External bandwidth to the smartphone is constrained by the wireless link, with Bluetooth LE often used for control signals and Wi-Fi for higher-resolution sensor data streams.
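A back-of-the-envelope calculation shows why memory capacity rules out large models on the glasses. The sketch counts weights only (activations, caches, and runtime overhead come on top), and the one-billion-parameter model size is an arbitrary example.

```python
def weight_footprint_mb(num_params, bits_per_weight):
    """Storage needed for model weights alone, in megabytes (10**6 bytes)."""
    return num_params * bits_per_weight / 8 / 1e6


# Hypothetical 1-billion-parameter model at different precisions.
for label, bits in [("INT4", 4), ("INT8", 8), ("FP16", 16)]:
    print(label, weight_footprint_mb(1_000_000_000, bits), "MB")
```

Even at INT4, such a model needs roughly 500 MB for weights, far beyond what a small SRAM or modest LPDDR4X allocation can hold.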

Smartphones (as Distributed AI Backend): Smartphones feature substantial LPDDR5/5X RAM (8GB-16GB or more) to accommodate large model weights, intermediate activations, and multi-modal data buffers. High internal memory bandwidth is critical for maximizing NPU performance. Externally, smartphones offer high-bandwidth connectivity via 5G and Wi-Fi 6E/7, facilitating rapid data ingress/egress and cloud communication.

Distributed System: A significant challenge in the distributed system is the efficient transfer of high-bandwidth sensor streams (e.g., camera video, multi-channel audio) from the glasses to the smartphone. This necessitates optimized wireless protocols, efficient data compression, and potentially zero-copy buffer mechanisms to minimize latency and overhead. The wireless link’s latency and throughput can become a bottleneck for real-time, high-resolution multi-modal AI tasks, and the serialization/deserialization overhead for data crossing the device boundary adds both latency and computational cost.
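The scale of the streaming problem is easy to estimate. The following sketch computes the raw bitrate of an uncompressed camera stream and the compression ratio needed to fit a given link; the resolution, the 12 bits per pixel (typical of YUV 4:2:0), the link rate, and the usable fraction are all assumptions chosen for illustration.

```python
def raw_video_mbps(width, height, fps, bits_per_pixel=12):
    """Uncompressed video bitrate in megabits per second."""
    return width * height * bits_per_pixel * fps / 1e6


def required_compression_ratio(raw_mbps, link_mbps, usable_fraction=0.5):
    """Compression needed if only part of the nominal link rate is usable for video."""
    return raw_mbps / (link_mbps * usable_fraction)


raw = raw_video_mbps(1280, 720, 30)   # about 332 Mbps
ratio = required_compression_ratio(raw, link_mbps=40)
print(f"raw: {raw:.1f} Mbps, needs roughly {ratio:.0f}x compression")
```

This is why on-glasses encoding, downscaling, or sending extracted features instead of raw frames is usually part of the design, each with its own power and latency cost.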

Software Ecosystem & Tooling

AI Glasses: The software ecosystem for AI glasses is nascent and highly specialized. It typically involves low-level SDKs for direct hardware access to DSPs, NPUs, and sensors. Development focuses on highly optimized embedded AI frameworks (e.g., TensorFlow Lite Micro, custom inference engines) and specialized toolchains for model quantization, pruning, and deployment to resource-constrained edge devices. Debugging and profiling in such a constrained, real-time environment are inherently complex, increasing development cost and time.

Smartphones (as Distributed AI Backend): The smartphone ecosystem is mature and vast, supported by robust AI frameworks such as TensorFlow, PyTorch Mobile, Core ML, and ONNX Runtime across Android and iOS. Developers benefit from extensive debugging and profiling tools and from standardized APIs for NPU/GPU access, which significantly improve development efficiency.

Distributed System: The distributed architecture demands sophisticated cross-device AI middleware and a unified runtime scheduler. This middleware must abstract hardware differences, manage dynamic workload offloading, handle secure and efficient inter-device communication, and present a cohesive API to developers. Mature, standardized tooling for profiling, debugging, and optimizing AI applications across heterogeneous, wirelessly connected devices does not yet exist, which adds development complexity and lengthens time-to-market. Closing this tooling gap is a prerequisite for widespread adoption.

Real-World Deployment

AI Glasses: AI glasses are deployed for specific, immediate use cases where ultra-low latency and egocentric context are paramount, such as real-time translation, navigation cues, or quick information retrieval. Their deployment is constrained by limited battery life (typically hours), thermal limits, and their inherent reliance on the smartphone for advanced AI features.

Smartphones (as Distributed AI Backend): In this context, the smartphone serves as the indispensable compute hub, enabling the glasses to perform advanced AI tasks. Its deployment is constrained by the requirement for physical proximity, sufficient battery charge, and a stable wireless connection to the glasses.

Distributed System: This distributed approach significantly accelerates the time-to-market for advanced AI glasses by leveraging existing, high-performance smartphone compute. It provides a scalable personal AI system, capable of handling simple on-device tasks on the glasses and complex, cloud-backed AI via the smartphone. The user experience critically depends on seamless integration and intelligent task routing to minimize perceived latency and ensure fluid interaction. System reliability is directly tied to the stability of the wireless link and the operational status of both devices; loss of connection or smartphone battery depletion severely curtails the glasses’ advanced AI capabilities.
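The dependency on the link and the phone can be expressed as a graceful-degradation rule. In this sketch the feature names are taken from the task lists earlier in the article, while the function name and the 10% battery threshold are hypothetical choices for illustration.

```python
LOCAL_FEATURES = {"gaze_tracking", "voice_activity_detection", "simple_object_recognition"}
PHONE_FEATURES = {"llm_inference", "scene_understanding", "long_term_memory"}


def available_features(link_up, phone_battery_pct, min_phone_battery_pct=10):
    """Features the glasses can offer given link state and phone battery level."""
    features = set(LOCAL_FEATURES)           # always available on-device
    if link_up and phone_battery_pct >= min_phone_battery_pct:
        features |= PHONE_FEATURES           # phone-backed features need both conditions
    return features


print(sorted(available_features(link_up=True, phone_battery_pct=80)))
print(sorted(available_features(link_up=False, phone_battery_pct=80)))
```

Making the degraded set explicit lets the UI tell the user which capabilities are temporarily unavailable instead of failing silently.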

Which Design Is More Efficient

Neither AI glasses nor smartphones are inherently “more efficient” in isolation for the broad spectrum of personal AI tasks. Instead, the distributed system (AI glasses + smartphone) represents the most pragmatically efficient engineering solution for delivering a comprehensive personal AI experience, given current silicon constraints.

- AI Glasses (isolated): Highly power-efficient for their specific, limited, burst-mode tasks. However, they are fundamentally inefficient for complex, sustained AI due to severe thermal and power limitations.

- Smartphones (isolated): Power-efficient for their range of complex, sustained tasks within their larger thermal and battery envelopes. They are inefficient for egocentric, ultra-low-latency, always-on perception due to form factor and the power draw required for simple, continuous monitoring.

Distributed System Efficiency: The distributed architecture achieves systemic efficiency by intelligently partitioning workloads. Glasses handle ultra-low-power, immediate tasks, avoiding the need for over-provisioned, power-hungry compute. Smartphones handle power-intensive, complex tasks, leveraging their robust resources. This optimizes the utilization of each device’s strengths.

The primary bottleneck to this systemic efficiency lies within the “intelligent workload offloading” middleware. Inefficient scheduling, excessive data transfer, or suboptimal power management across devices can negate the benefits, leading to higher overall system power consumption or perceived latency. The energy cost of wireless communication itself is a non-trivial factor that must be accounted for in the holistic power budget.

The distributed design is the most pragmatic and efficient engineering solution for balancing form factor, immediacy, and complex AI capabilities. Its ultimate efficiency is critically dependent on highly sophisticated runtime and scheduling intelligence that dynamically manages resources across the heterogeneous compute fabric.

Key Takeaways

- The distributed architecture of AI glasses paired with smartphones is a pragmatic engineering solution to balance the conflicting demands of compact form factor, real-time immediacy, and complex AI processing.

- AI glasses are optimized for ultra-low-power, egocentric, burst-mode tasks due to severe thermal and power constraints, acting as the primary sensor and immediate feedback interface.

- Smartphones provide the necessary sustained, high-performance computing, larger memory, and robust power delivery for complex AI workloads, effectively serving as the “pocket supercomputer.”

- Key engineering challenges include developing intelligent workload offloading middleware, managing inter-device communication latency, implementing holistic power budgeting across both devices, and fostering a unified software ecosystem.

- Overall system efficiency is achieved through intelligent resource orchestration, leveraging the distinct strengths of each device, rather than through the isolated efficiency of a single component.