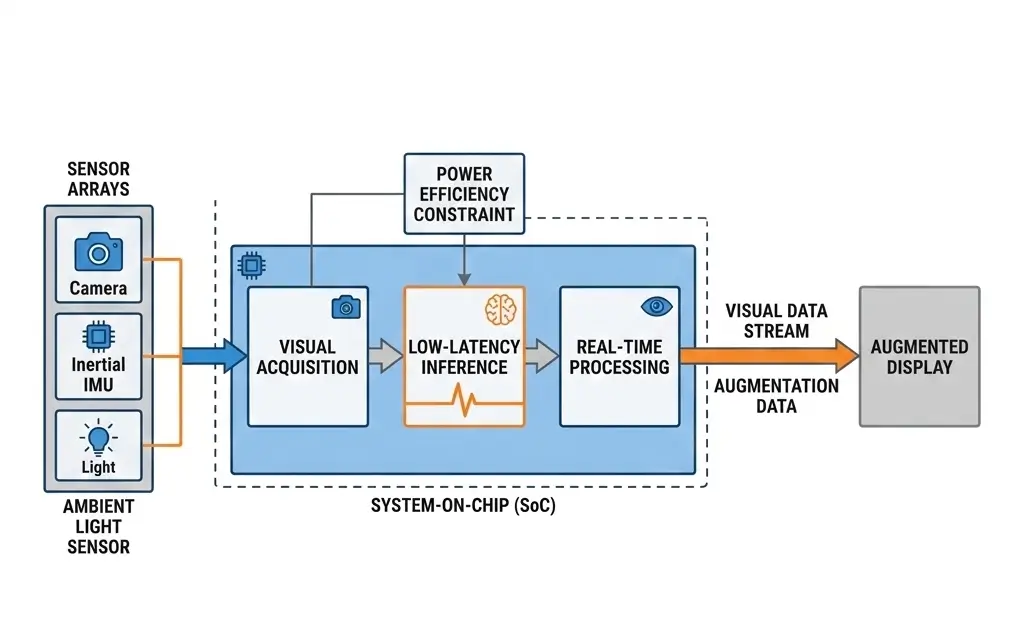

AI glasses process vision in real time on ultra-low-power System-on-Chips (SoCs) that combine Image Signal Processors (ISPs), Neural Processing Units (NPUs), and optimized memory pipelines. This architecture prioritizes power efficiency, sub-100ms latency, and passive thermal management so that complex visual inference can run directly on-device. Aggressive quantization and dynamic power management keep the workload within the tight power and thermal envelope of a wearable form factor, and they directly affect battery life and sustained performance.

Understanding how AI glasses process vision in real time requires examining the specialized hardware pipelines that enable ultra-low-latency computer vision directly on wearable devices. Engineers must work within extreme miniaturization, ultra-low power budgets, and passive thermal dissipation while keeping latency under 100ms for seamless user interaction. Meeting these constraints requires departing from conventional computing architectures and pushes the state of the art in heterogeneous integration and power-efficient silicon design.

What AI Glasses Vision Processing Means

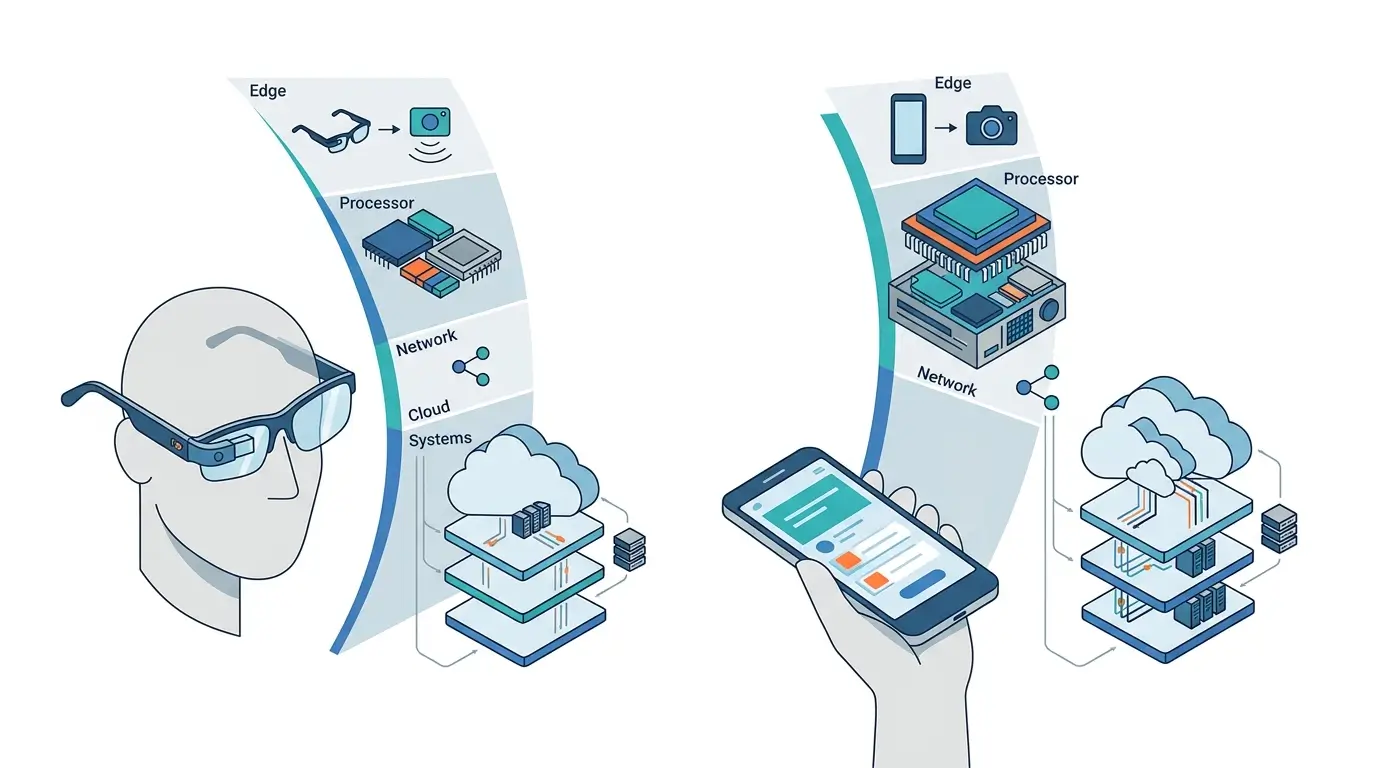

AI glasses integrate sophisticated sensor arrays and a highly optimized System-on-Chip (SoC) to acquire, process, and augment the user’s visual environment in real time. Unlike traditional computing devices, their core function is continuous, low-latency visual inference, which demands a processing pipeline specifically engineered for power efficiency and thermal resilience.

The “real-time” aspect is critical, typically implying an end-to-end latency from photon capture to actionable insight of under 100 milliseconds. This low latency is essential for tasks like visual-inertial odometry (VIO), object recognition, and augmented reality (AR) overlay stabilization, all while operating within the device’s stringent power and thermal envelopes.
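To make the sub-100ms target concrete, the sketch below sums per-stage latencies and reports the remaining headroom. The stage names and millisecond values are illustrative assumptions, not measurements from any device, and the model assumes the stages run strictly one after another.

```python
# Hypothetical per-stage latencies in milliseconds (illustrative only).
STAGE_LATENCY_MS = {
    "sensor_exposure_and_readout": 12.0,
    "isp_preprocessing": 6.0,
    "dma_to_memory": 2.0,
    "npu_inference": 25.0,
    "sensor_fusion": 5.0,
    "render_and_display": 20.0,
}

BUDGET_MS = 100.0  # end-to-end target discussed in the text


def end_to_end_latency_ms(stages):
    """Sum stage latencies, assuming the stages execute sequentially."""
    return sum(stages.values())


def headroom_ms(stages, budget_ms=BUDGET_MS):
    """Return how much of the budget is left (negative means over budget)."""
    return budget_ms - end_to_end_latency_ms(stages)


def slowest_stage(stages):
    """Return the name of the stage that contributes the most latency."""
    return max(stages, key=stages.get)


if __name__ == "__main__":
    print(end_to_end_latency_ms(STAGE_LATENCY_MS), "ms total")
    print(headroom_ms(STAGE_LATENCY_MS), "ms headroom")
    print("slowest stage:", slowest_stage(STAGE_LATENCY_MS))
```

In a pipelined design the stages overlap across frames, so throughput can be higher than this sum suggests, but the photon-to-result latency of a single frame is still bounded by the sum of its stages.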

How AI Glasses Process Vision in Real Time

Real-time vision processing in AI glasses begins with high-frame-rate, low-latency image acquisition, typically via global shutter sensors connected through high-speed MIPI CSI-2 interfaces. Raw sensor data is fed into a dedicated Image Signal Processor (ISP) block within the SoC, which performs essential pre-processing such as demosaicing, noise reduction, lens distortion correction, and dynamic range optimization. Handling these steps in the ISP offloads computationally intensive operations from the main compute units, reducing overall system power consumption and latency.
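As a rough illustration of what demosaicing does, the sketch below converts a Bayer mosaic into half-resolution RGB by collapsing each 2x2 cell into one pixel. It assumes an RGGB layout, and the function name `demosaic_rggb_half_res` is hypothetical. A real ISP does this in fixed-function hardware with edge-aware interpolation at full resolution, so treat this only as a conceptual model.

```python
def demosaic_rggb_half_res(raw):
    """Convert an RGGB Bayer mosaic (list of rows) to half-resolution RGB.

    Each 2x2 cell [[R, G], [G, B]] becomes one (R, G, B) pixel; the two
    green samples in the cell are averaged.
    """
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("mosaic dimensions must be even")

    rgb = []
    for y in range(0, height, 2):
        row = []
        for x in range(0, width, 2):
            r = raw[y][x]                                # top-left: red
            g = (raw[y][x + 1] + raw[y + 1][x]) / 2      # two greens averaged
            b = raw[y + 1][x + 1]                        # bottom-right: blue
            row.append((r, g, b))
        rgb.append(row)
    return rgb


if __name__ == "__main__":
    mosaic = [
        [10, 20, 30, 40],
        [60, 50, 80, 70],
    ]
    print(demosaic_rggb_half_res(mosaic))
```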

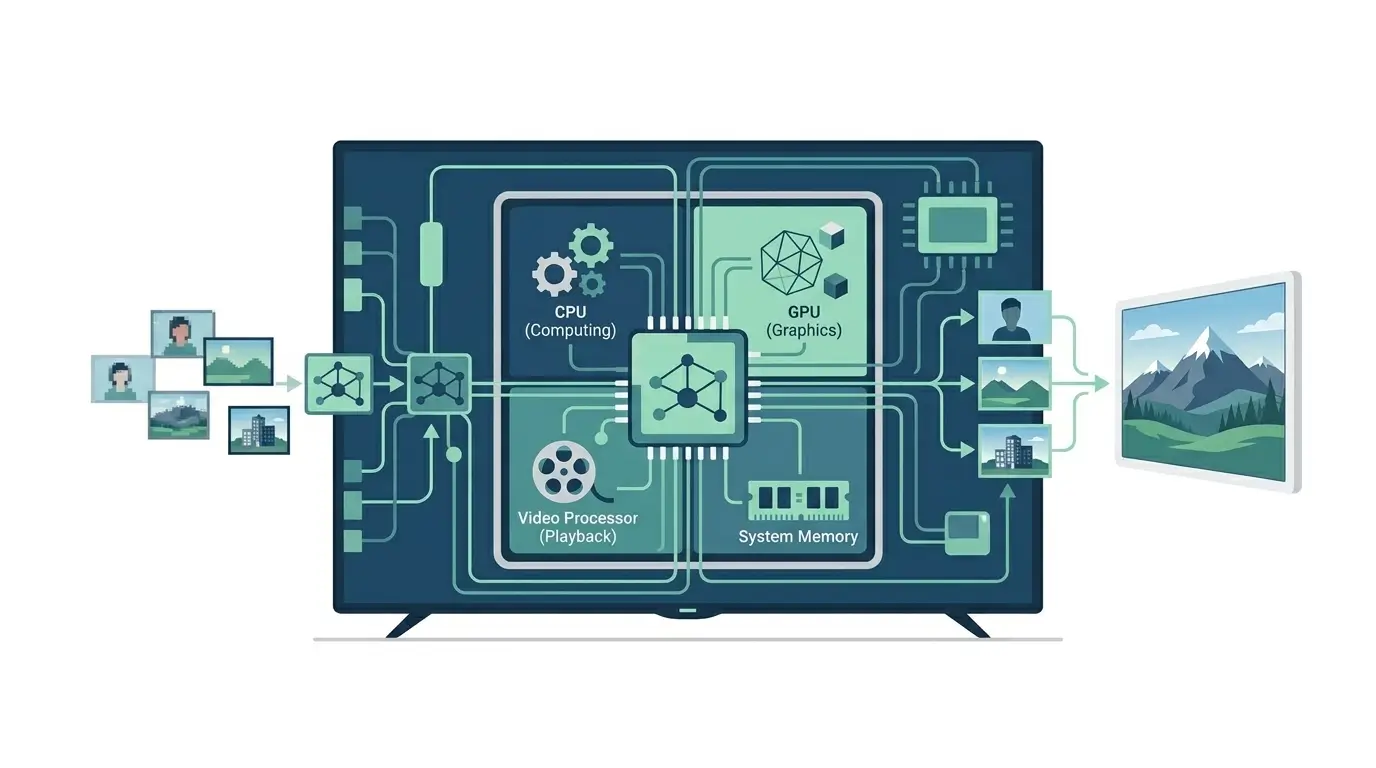

Once processed, the image data is often compressed and moved via Direct Memory Access (DMA) to a shared LPDDR5/5X memory pool. From there, it is read by a custom Neural Processing Unit (NPU), which is typically tuned for particular model architectures and for high operations per watt. The NPU executes quantized neural network models (e.g., INT8 or INT4) for tasks like object detection, semantic segmentation, and pose estimation.
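The quantization mentioned above can be sketched with symmetric per-tensor INT8 quantization, one common scheme among several. This is a minimal illustration under that assumption; the text does not say which scheme a given NPU toolchain uses, and production flows usually add per-channel scales and calibration data.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to the range [-127, 127].

    Returns the integer codes and the scale needed to map them back.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating-point values."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [-2.0, 0.0, 0.5, 2.0]
    codes, scale = quantize_int8(weights)
    restored = dequantize(codes, scale)
    print(codes)
    print([round(abs(a - b), 5) for a, b in zip(weights, restored)])
```

The rounding error per value is at most half a quantization step (`scale / 2`), which is the source of the accuracy tradeoff: a tensor with a wide value range gets a coarser step.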

This on-device AI inference is crucial for real-time responsiveness. Concurrently, a Digital Signal Processor (DSP) or a low-power CPU core might handle sensor fusion (e.g., combining visual data with IMU data for VIO) or other specialized signal processing tasks, depending on the system’s architecture and workload. The output of the NPU and DSP is then consumed by a Graphics Processing Unit (GPU) for rendering AR overlays or by the CPU for higher-level application logic. All these operations must occur within the strict sub-100ms latency budget, subject to available power and thermal headroom. Aggressive Dynamic Voltage and Frequency Scaling (DVFS) and power gating are continuously employed across all blocks to minimize power consumption and actively manage the thermal envelope.
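The benefit of DVFS follows from the standard first-order model of dynamic CMOS power, P = a * C * V^2 * f. The sketch below evaluates that model at two operating points. The voltages, frequencies, and capacitance are hypothetical values chosen for illustration, and static leakage is ignored.

```python
def dynamic_power_w(switched_capacitance_f, voltage_v, frequency_hz, activity=1.0):
    """Approximate dynamic CMOS power: P = activity * C * V^2 * f."""
    return activity * switched_capacitance_f * voltage_v ** 2 * frequency_hz


# Hypothetical operating points: (voltage in volts, frequency in hertz).
OPERATING_POINTS = {
    "burst": (0.9, 1.0e9),
    "sustained": (0.7, 0.6e9),
}
CAPACITANCE_F = 1.0e-9  # illustrative effective switched capacitance


def power_table(points=OPERATING_POINTS, capacitance_f=CAPACITANCE_F):
    """Return the modeled dynamic power in watts for each operating point."""
    return {
        name: dynamic_power_w(capacitance_f, volts, hertz)
        for name, (volts, hertz) in points.items()
    }


if __name__ == "__main__":
    for name, watts in power_table().items():
        print(f"{name}: {watts:.3f} W")
```

Under these assumed numbers, the sustained point runs at 60% of the burst clock but draws roughly 36% of the dynamic power, because voltage enters the model quadratically.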

AI Glasses Hardware Architecture for Real-Time Vision

The core of AI glasses’ vision processing relies on a highly integrated System-on-Chip (SoC), specifically designed for stringent power efficiency and heterogeneous computing.

| Component | Primary Function | Key Design Implication |
|---|---|---|
| Global Shutter Sensors | Low-latency, motion-blur-free image capture | Essential for VIO and dynamic AR; requires high data rates. |
| MIPI CSI-2 Interface | High-speed, low-power sensor data transfer | Minimizes I/O latency and power overhead. |
| Image Signal Processor (ISP) | Hardware-accelerated image pre-processing (demosaicing, noise reduction, HDR) | Offloads CPU/NPU, ensures clean data, and reduces latency. |
| Neural Processing Unit (NPU) | High-efficiency AI inference (matrix multiplications, convolutions) | Maximizes operations per watt for highly optimized, quantized models (INT8/INT4). |
| Digital Signal Processor (DSP) | Sensor fusion, audio processing, specialized signal tasks | Efficiently handles repetitive, fixed-point operations. |
| Low-Power CPU Cores | System control, application logic, task scheduling | Manages overall system, orchestrates heterogeneous blocks. |
| Graphics Processing Unit (GPU) | AR rendering, display output, visual effects | Optimized for parallel graphics workloads. |
| Shared LPDDR5/5X Memory | High-bandwidth, low-power unified memory pool | Reduces board space, power, and inter-chip latency; however, it can create contention points under high concurrent demand. |
| Direct Memory Access (DMA) | Efficient data movement between blocks without CPU intervention | Reduces latency and CPU overhead. |
| Power Management Unit (PMU) | Dynamic Voltage/Frequency Scaling (DVFS), power gating | Critical for thermal management and battery life. |
| Secure Hardware Elements | Secure boot, trusted execution environment | Protects sensitive data and system integrity. |

Modern wearable AI devices often rely on specialized neural accelerators similar to Arm’s Ethos edge AI processors, which are designed for efficient computer vision inference on low-power embedded hardware. You can explore the architecture details in Arm’s documentation for the Arm Ethos-N neural processing unit. This heterogeneous architecture is a direct response to the need for maximum computational throughput per watt in a passively cooled, miniature form factor, which is how AI glasses sustain real-time vision within strict power and thermal limits.
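As a rough sizing aid for the sensor link listed in the table, the raw pixel payload a camera produces is width x height x bits per pixel x frame rate. The sketch below computes it for two hypothetical sensor configurations. The resolutions, bit depth, and frame rates are assumptions for illustration, and the result excludes blanking intervals and protocol overhead.

```python
def raw_data_rate_mbps(width_px, height_px, bits_per_pixel, frames_per_second):
    """Raw pixel payload in megabits per second.

    Excludes blanking and link protocol overhead, so the real link
    requirement is somewhat higher.
    """
    bits_per_second = width_px * height_px * bits_per_pixel * frames_per_second
    return bits_per_second / 1e6


# Hypothetical configurations (not taken from any specific product).
TRACKING_CAMERA_MBPS = raw_data_rate_mbps(640, 480, 10, 90)
COLOR_CAMERA_MBPS = raw_data_rate_mbps(1920, 1080, 10, 30)

if __name__ == "__main__":
    print(f"tracking camera: {TRACKING_CAMERA_MBPS:.2f} Mbit/s")
    print(f"color camera:    {COLOR_CAMERA_MBPS:.2f} Mbit/s")
```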

Performance Requirements for Real-Time AI Vision in Glasses

Real-time vision in AI glasses also depends on optimizing the entire sensor-to-inference pipeline to minimize latency and power consumption.

The performance of AI glasses is defined by a delicate balance of computational throughput, power efficiency, and low latency. NPUs achieve high operations per watt by specializing in quantized integer arithmetic (INT8/INT4), which significantly reduces memory bandwidth and power consumption compared to floating-point operations. The cost is some loss of model accuracy, which must be rigorously validated during development. End-to-end latency is paramount, with targets typically below 100ms for interactive experiences; meeting them depends on direct sensor-to-ISP-to-NPU pipelines and efficient DMA transfers.
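The memory saving from integer precision is simple arithmetic: weight storage scales linearly with bits per weight. The sketch below compares FP32, INT8, and INT4 for a hypothetical 5-million-parameter model. The parameter count is an assumption, and the estimate ignores quantization metadata (scales, zero points) and activation buffers.

```python
BITS_PER_WEIGHT = {"fp32": 32, "int8": 8, "int4": 4}


def weight_footprint_mb(num_parameters, precision):
    """Weight storage in megabytes (10^6 bytes) at the given precision."""
    return num_parameters * BITS_PER_WEIGHT[precision] / 8 / 1e6


if __name__ == "__main__":
    params = 5_000_000  # hypothetical model size
    for precision in BITS_PER_WEIGHT:
        print(f"{precision}: {weight_footprint_mb(params, precision)} MB")
```

Every weight fetched from LPDDR costs bandwidth and energy, so a 4x smaller weight tensor also means roughly 4x less weight traffic per inference, all else being equal.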

Thermal management dictates sustained performance. While the SoC can achieve high burst performance for short durations, continuous heavy workloads across multiple blocks (e.g., NPU for inference, GPU for rendering, ISP for high-resolution video) will quickly lead to thermal throttling. Throttling is the dynamic scaling of clock frequencies and voltages, governed by the Power Management Unit (PMU), that keeps the device within its thermal limits. As a result, performance varies with workload and ambient temperature. Memory bandwidth, though high with LPDDR5/5X, can become a shared bottleneck under peak concurrent demands from multiple accelerators, potentially introducing micro-stalls and increasing effective latency.
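The throttling behavior described above can be illustrated with a first-order thermal model and a simple step-down governor. Everything in this sketch is an assumption for illustration: the thermal resistance, time constant, temperature limit, power levels, and the governor policy itself. Real PMU firmware is considerably more elaborate.

```python
def simulate_thermal(power_levels_w, steps=600, dt_s=1.0, ambient_c=25.0,
                     r_th_c_per_w=30.0, tau_s=60.0, limit_c=45.0,
                     hysteresis_c=3.0):
    """Simulate temperature under a step-wise throttling governor.

    power_levels_w lists the power draw of each performance level, lowest
    first. Returns a list of (temperature_c, level_index) samples.
    """
    top = len(power_levels_w) - 1
    level = top            # start at the highest performance level
    temp_c = ambient_c
    samples = []
    for _ in range(steps):
        # First-order model: temperature relaxes toward its steady state.
        steady_c = ambient_c + power_levels_w[level] * r_th_c_per_w
        temp_c += (steady_c - temp_c) * dt_s / tau_s
        # Governor: step down when too hot, step up once cooled off.
        if temp_c > limit_c and level > 0:
            level -= 1
        elif temp_c < limit_c - hysteresis_c and level < top:
            level += 1
        samples.append((temp_c, level))
    return samples


if __name__ == "__main__":
    levels_w = [0.3, 0.6, 1.0]  # hypothetical power per performance level
    throttled = simulate_thermal(levels_w)
    unthrottled = simulate_thermal(levels_w, limit_c=float("inf"))
    print("peak temperature, throttled:  ", max(t for t, _ in throttled))
    print("final temperature, unthrottled:", unthrottled[-1][0])
    print("lowest level reached:", min(l for _, l in throttled))
```

With these assumed parameters the top level cannot be sustained, so the governor leaves it after roughly a minute and may oscillate between levels afterward. That oscillation is one way the variable performance described in the text shows up.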

Real-World Applications of AI Vision in Smart Glasses

The real-time vision processing capabilities of AI glasses enable a range of practical applications directly enhancing user experience and utility:

- Visual-Inertial Odometry (VIO): Essential for stable augmented reality (AR) overlays and accurate spatial tracking, allowing digital content to remain fixed in the real world despite head movements. This relies on fusing high-frequency IMU data with low-latency visual features, all within the system’s processing capabilities.

- Object Recognition and Tracking: Identifying and tracking real-world objects, faces, or text for contextual information, translation, or navigation cues. This requires robust, real-time inference on the NPU, utilizing highly optimized models.

- Semantic Segmentation: Classifying each pixel to understand scene composition, enabling advanced AR effects such as occluding digital objects behind real-world elements or applying filters only to specific surfaces.

- Gesture Recognition: Interpreting hand or body movements for intuitive, touchless interaction with the device or digital content.

- Real-time Translation: Overlaying translated text onto foreign signs or menus, demanding rapid Optical Character Recognition (OCR) and language model inference, all within the device’s processing and memory limits.

These applications are fundamentally constrained by on-device processing capabilities. They require highly optimized, quantized models that run efficiently within the stringent power and thermal budget, which in turn determines what can run offline.
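The fusion of high-rate IMU data with lower-rate visual estimates mentioned in the VIO item can be illustrated with a one-axis complementary filter. This is a deliberately simplified stand-in: production VIO systems typically use filter-based or optimization-based estimators over full 6-DoF pose. The function name, the `alpha` weight, and the sample values are hypothetical, and angle wraparound is not handled.

```python
def fuse_yaw(prev_yaw_deg, gyro_rate_dps, dt_s, visual_yaw_deg=None, alpha=0.98):
    """One step of a complementary filter for a single rotation axis.

    The gyro rate is integrated on every IMU sample. When a visual yaw
    estimate is available, it is blended in to correct gyro drift.
    """
    predicted = prev_yaw_deg + gyro_rate_dps * dt_s
    if visual_yaw_deg is None:
        return predicted  # no camera update on this IMU tick
    return alpha * predicted + (1.0 - alpha) * visual_yaw_deg


if __name__ == "__main__":
    yaw = 10.0
    yaw = fuse_yaw(yaw, gyro_rate_dps=5.0, dt_s=0.01)                       # IMU only
    yaw = fuse_yaw(yaw, gyro_rate_dps=5.0, dt_s=0.01, visual_yaw_deg=12.0)  # with camera
    print(yaw)
```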

Technical Limitations of AI Vision Processing in Glasses

Despite advanced engineering, AI glasses face inherent limitations stemming from their form factor and power constraints:

- Thermal Throttling: The passively cooled design means sustained, high-intensity computational loads will typically lead to performance degradation as the SoC reduces clock speeds and voltages to prevent overheating. This inherently limits the duration of peak performance and the complexity of continuous tasks.

- Model Complexity & Accuracy Tradeoffs: The reliance on highly quantized (INT8/INT4) neural networks and smaller model sizes, necessary for power efficiency and low latency, can lead to reduced accuracy compared to larger, FP32 models run on more powerful, less constrained hardware. Fine-grained distinctions or complex scene understanding may suffer as a result.

- Limited Computational Autonomy: While powerful for specific vision tasks, the on-device processing often lacks the general-purpose computational depth for highly complex AI, such as large language model inference or extensive 3D reconstruction, primarily due to power and memory limitations. More demanding tasks may require offloading to a connected smartphone or cloud, introducing additional latency and privacy considerations.

- Memory Bandwidth Bottleneck: The shared LPDDR5/5X memory, while efficient for space and power, can become a contention point when multiple high-bandwidth components (ISP, NPU, GPU) simultaneously demand access, potentially increasing effective latency and impacting overall responsiveness.

- Software Development Complexity: Optimizing applications across a heterogeneous SoC architecture (CPU, GPU, NPU, DSP, ISP) requires specialized toolchains, deep hardware understanding, and rigorous software-hardware co-design. This significantly increases development time and cost.

- Battery Life Variability: Performance and battery life are highly sensitive to workload. Continuous, demanding applications will drastically reduce operational time compared to intermittent, lighter use, leading to a highly variable and often unpredictable user experience.
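The battery life variability in the last item can be quantified with a duty-cycle model: average power is a weighted mix of active and idle power, and runtime is capacity divided by that average. The capacity and power figures below are hypothetical and chosen only to show the spread.

```python
def average_power_mw(active_power_mw, idle_power_mw, duty_cycle):
    """Average power for a workload active a given fraction of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_power_mw + (1.0 - duty_cycle) * idle_power_mw


def battery_life_hours(capacity_mwh, avg_power_mw):
    """Runtime in hours, ignoring converter losses and battery aging."""
    return capacity_mwh / avg_power_mw


if __name__ == "__main__":
    capacity_mwh = 600.0             # hypothetical battery capacity
    active_mw, idle_mw = 800.0, 50.0 # hypothetical power draw
    for duty in (0.1, 0.5, 1.0):
        avg = average_power_mw(active_mw, idle_mw, duty)
        print(f"duty {duty:.0%}: {battery_life_hours(capacity_mwh, avg):.2f} h")
```

Under these assumptions, moving from 10% to 100% active time cuts runtime from several hours to under one, which is the kind of spread the text describes.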

Why Real-Time Vision Processing Is Critical for AI Glasses

Advances in edge AI hardware are rapidly improving how AI glasses process vision in real time, enabling more complex computer vision workloads to run locally on wearable devices. The ability of AI glasses to process vision in real time is fundamental to the widespread adoption and utility of ubiquitous computing and augmented reality. It addresses the need for immediate, contextual intelligence without the latency, privacy concerns, or connectivity dependencies of cloud-based solutions.

By providing on-device, low-power visual perception, these systems open new paradigms for human-computer interaction, environmental understanding, and assistive technologies. Continuous innovation in power-efficient silicon, specialized accelerators, and heterogeneous integration within these devices pushes the broader semiconductor industry towards more intelligent, autonomous, and energy-conscious edge AI solutions, advancing what miniature, wearable form factors can do. Many wearable AI systems rely on optimized on-device machine learning frameworks that allow neural networks to run directly on edge hardware instead of cloud servers. Google provides detailed documentation on these techniques through its Edge AI development platform, which explains how machine learning models are optimized for local devices.

Key Takeaways on How AI Glasses Process Vision in Real Time

AI glasses achieve real-time vision processing through highly integrated SoCs featuring specialized accelerators like NPUs and ISPs, specifically designed for stringent power efficiency and low latency. Aggressive hardware-software co-design, including quantization and dynamic power management, is critical to operate within severe thermal and battery constraints. While enabling powerful on-device AI applications, this architecture necessitates tradeoffs in sustained performance, model complexity, and accuracy, highlighting the constant tension between miniaturization, power, and computational capability.