AI features make smartphones heat up primarily because modern on-device AI workloads combine high computational density with heavy memory bandwidth demands. While specialized Neural Processing Units (NPUs) are power-efficient per operation, their aggregate power draw during intensive workloads quickly exceeds the device’s limited thermal dissipation capabilities, leading to rapid thermal throttling and elevated component temperatures. The constant data movement between LPDDR memory and compute units also contributes substantially to the overall power budget and heat generation, directly impacting the device’s sustained performance.

Modern smartphones increasingly rely on AI for photography, voice recognition, and real-time processing. These features offer powerful capabilities but also impose a heavy computational load on mobile processors, and that load is the starting point for understanding why the devices heat up.

Driven by the need for low-latency responsiveness, enhanced privacy, and offline functionality, modern mobile platforms now incorporate specialized accelerators capable of billions of operations per second. However, cramming data-center-like inference capabilities into a pocketable, passively cooled, battery-constrained device inevitably creates a fundamental conflict with thermal management. As a result, heat generation becomes an intrinsic byproduct of advanced on-device AI execution. This article explores silicon constraints, runtime characteristics, and system bottlenecks—especially in the memory subsystem—that explain why AI features cause smartphones to heat up during demanding workloads.

What On-Device AI Means for Smartphones

On-device AI refers to the execution of machine learning models directly on a smartphone’s System-on-Chip (SoC) rather than offloading tasks to cloud servers. This paradigm enables real-time processing for applications such as advanced computational photography (e.g., semantic segmentation, super-resolution), real-time voice processing, augmented reality, and personalized user experiences without network latency or privacy concerns.

The computational core of these features involves high-volume Multiply-Accumulate (MAC) operations and tensor manipulations, which are characteristic of deep neural networks. The design goal is inference capability comparable to desktop or data-center systems for specific, optimized models, at a significantly reduced scale and within a severely constrained mobile environment. Various AI platforms address this challenge (see Snapdragon X Elite vs Intel AI Boost vs AMD XDNA).

How AI Features Run on Smartphone Hardware

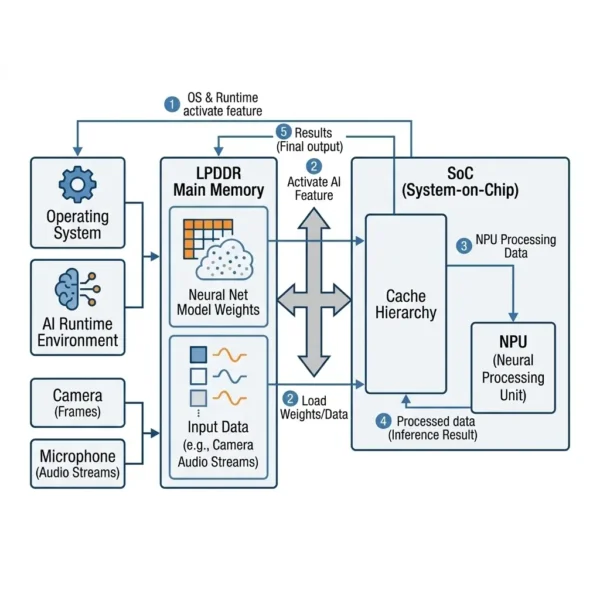

When an AI feature is activated, the smartphone’s operating system and AI runtime environment load the pre-trained neural network model weights and input data (e.g., camera frames, audio streams) from the LPDDR (Low Power Double Data Rate) main memory into the SoC’s cache hierarchy and specialized AI accelerators, primarily the Neural Processing Unit (NPU).

The NPU is architected for parallel execution of MAC operations, often utilizing fixed-point or INT8/INT16 precision for efficiency. It processes tensors through layers (convolutional, pooling, activation functions), generating intermediate activations that may be temporarily stored in on-chip SRAM or streamed back to LPDDR, before the final output is produced. This process is highly data-intensive, requiring sustained, high-bandwidth data movement between the main memory, on-chip caches, and the NPU’s compute engines, which directly impacts the device’s battery life during prolonged AI tasks.
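To make the INT8 MAC idea concrete, here is a minimal pure-Python sketch of a quantized dot product. It is an illustration only, not how any particular NPU is implemented: the function names, the symmetric per-tensor scales, and the sample values are all assumptions chosen for the example.

```python
def quantize(values, scale):
    """Map floats to signed 8-bit integers using a symmetric scale (assumed scheme)."""
    return [max(-128, min(127, round(v / scale))) for v in values]


def int8_dot(q_a, q_b):
    """Multiply-accumulate over INT8 operands, using a wide integer accumulator."""
    acc = 0
    for a, b in zip(q_a, q_b):
        acc += a * b  # one MAC operation
    return acc


# Hypothetical weights and inputs for a single neuron.
weights = [0.5, -0.25, 0.75, 1.0]
inputs = [1.0, 2.0, -1.0, 0.5]

# Hypothetical per-tensor scales: largest magnitude maps to 127.
w_scale = 1.0 / 127
x_scale = 2.0 / 127

q_w = quantize(weights, w_scale)
q_x = quantize(inputs, x_scale)

# Integer MACs first, then a single rescale back to floating point.
approx = int8_dot(q_w, q_x) * w_scale * x_scale
exact = sum(w * x for w, x in zip(weights, inputs))

print(f"exact={exact:.4f} approx={approx:.4f}")
```

The integer result tracks the floating-point one closely while every multiply works on 8-bit operands, which is the property that lets NPU MAC arrays spend less energy per operation.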

Software optimization plays a critical role, mapping the AI workload efficiently to the NPU, GPU, or even specific CPU cores, depending on the model’s architecture and the available hardware capabilities. Dynamic Voltage and Frequency Scaling (DVFS) algorithms are continuously employed to adjust clock speeds and voltages of compute units to manage power consumption and, consequently, thermal output, often in response to real-time temperature sensor feedback.
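The temperature-driven DVFS loop described above can be sketched as a simple step governor. This is a simplified illustration under stated assumptions: the operating points, the thresholds, and the `next_level` function are hypothetical, and real governors weigh many more inputs.

```python
# Hypothetical operating points: (frequency in MHz, voltage in volts).
OPERATING_POINTS = [(400, 0.60), (800, 0.70), (1200, 0.85), (1600, 1.00)]


def next_level(level, temp_c, throttle_at=85.0, recover_at=70.0):
    """Step down one operating point when hot, step up one when cool.

    The gap between the two thresholds acts as hysteresis so the
    governor does not flip between levels on every sample.
    """
    if temp_c >= throttle_at and level > 0:
        return level - 1
    if temp_c <= recover_at and level < len(OPERATING_POINTS) - 1:
        return level + 1
    return level


# Start at the highest operating point and feed in made-up sensor readings.
level = len(OPERATING_POINTS) - 1
trace = []
for temp in [60, 80, 86, 90, 88, 75, 68, 65]:
    level = next_level(level, temp)
    trace.append(OPERATING_POINTS[level][0])

print(trace)  # frequency (MHz) chosen after each temperature sample
```

With these made-up readings the frequency steps down while the sensor reports 85 °C or more, holds in the band between the thresholds, and climbs back only once the reading falls to 70 °C or below.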

Smartphone AI Architecture Overview

Modern smartphone SoCs are heterogeneous compute platforms, integrating multiple specialized processing units onto a single die, typically fabricated on advanced process nodes (e.g., 4nm, 3nm). This heterogeneous architecture is a key reason why AI features make smartphones heat up: several accelerators can operate simultaneously under sustained workloads. The core components relevant to AI workloads include:

- Central Processing Unit (CPU): Comprising high-performance (e.g., Arm Cortex-X series) and high-efficiency (e.g., Arm Cortex-A series) cores. While capable of AI inference, CPUs are generally inefficient for the dense MAC operations of neural networks.

- Graphics Processing Unit (GPU): Optimized for parallel floating-point operations, making it suitable for certain AI workloads, especially those involving image processing or larger batch sizes.

- Neural Processing Unit (NPU): A dedicated hardware accelerator specifically designed for neural network inference. NPUs feature highly parallel MAC arrays, specialized data path logic, and often dedicated on-chip memory (SRAM) to minimize latency and maximize energy efficiency for tensor operations. Examples include Apple’s Neural Engine, Qualcomm’s Hexagon DSP with Tensor Accelerators, and MediaTek’s APU.

- Memory Subsystem: This includes the LPDDR DRAM modules, the memory controller integrated into the SoC, and the on-chip cache hierarchy (L1, L2, L3). The memory controller manages all data transfers between the compute units and main memory, operating at high frequencies to provide the necessary bandwidth.

- Interconnect Fabric: A high-speed on-chip network (e.g., ARM AMBA AXI) that facilitates data exchange between the CPU, GPU, NPU, memory controller, and other IP blocks.

The tight integration of these components on a single die, while minimizing latency and interconnect power, creates a highly concentrated heat source. Heat generated by any high-power block, such as the NPU or memory controller, quickly conducts to adjacent components, necessitating system-wide thermal management to prevent localized hotspots and ensure sustained performance.

Here’s a comparison of compute units for AI workloads:

| Feature | General-Purpose CPU | General-Purpose GPU | Specialized NPU |
|---|---|---|---|
| Primary Operations | Scalar/Vector, Branching, Control Flow | Parallel Floating Point, Graphics | Integer/Fixed-Point MACs, Activation Fns |
| Memory Access Pattern | Cache-coherent, irregular | Coalesced, streaming (texture/buffer) | Highly structured, block-wise, streaming |
| MACs/Watt Efficiency | Low | Medium | High (for target workloads) |
| Computational Density | Moderate | High | Very High |
| Power Profile | Variable, sensitive to instruction mix | High peak, sustained for graphics | High peak, burst for AI inference |
| Memory Bandwidth Needs | Moderate to High | Very High | Very High (for model weights/activations) |

Performance Characteristics of Mobile AI

Understanding why AI features make smartphones heat up requires examining both compute density and memory bandwidth usage. The performance of on-device AI features is characterized by a critical distinction between peak and sustained capabilities (see Sustained AI Performance vs Peak TOPS). SoCs are designed to deliver impressive peak Tera Operations Per Second (TOPS) figures, often only for short bursts. These bursts carry a “burst tax”: reaching peak numbers requires driving compute units (NPU, GPU, high-performance CPU cores) to their maximum clock frequencies and voltages, leading to a rapid surge in dynamic power consumption ($P_{\text{dyn}} \approx \alpha C V^2 f$, where $\alpha$ is the switching activity, $C$ the switched capacitance, $V$ the supply voltage, and $f$ the clock frequency). While NPUs are significantly more power-efficient per MAC operation than CPUs or GPUs, their sheer computational density means that when fully utilized, their total power draw can still be substantial, easily exceeding the SoC’s sustainable Thermal Design Power (TDP).
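A rough way to see why bursts are expensive is the standard CMOS dynamic power relation, power ≈ activity × capacitance × voltage² × frequency. The sketch below compares two operating points under that relation. The activity factor, capacitance, voltages, and frequencies are hypothetical values picked for illustration, not measurements of any SoC.

```python
def dynamic_power(activity, capacitance_f, voltage_v, freq_hz):
    """Standard CMOS dynamic power estimate: alpha * C * V^2 * f, in watts."""
    return activity * capacitance_f * voltage_v ** 2 * freq_hz


# Hypothetical operating points for one compute block.
sustained = dynamic_power(activity=0.2, capacitance_f=1e-9,
                          voltage_v=0.70, freq_hz=0.8e9)
burst = dynamic_power(activity=0.2, capacitance_f=1e-9,
                      voltage_v=1.00, freq_hz=1.6e9)

ratio = burst / sustained
print(f"burst draws {ratio:.2f}x the power for 2x the clock")
```

Because voltage enters squared and must rise along with frequency, doubling the clock in this example costs roughly four times the power rather than two.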

The memory subsystem is a significant contributor to overall power consumption. Large AI models necessitate constant, high-bandwidth data movement between LPDDR5/5X main memory and on-chip caches/compute units. This sustained memory traffic itself is a substantial power consumer within the memory controller, PHY (Physical Layer), and the DRAM modules. Achieving the necessary data throughput for complex AI models inherently generates considerable heat in the memory subsystem, often becoming a secondary thermal bottleneck even if the compute units are efficient. This memory-related heat can trigger thermal throttling independently or in conjunction with compute unit heat, directly impacting the device’s responsiveness and overall user experience.
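A back-of-the-envelope estimate shows how memory traffic alone turns into power. The sketch below assumes a simplified generative workload that reads all model weights from DRAM once per generated token. The weight size, token rate, and energy-per-bit figure are hypothetical placeholders, not datasheet values.

```python
def memory_power_w(bytes_per_second, energy_per_bit_j):
    """Power spent moving data: bits transferred per second times energy per bit."""
    return bytes_per_second * 8 * energy_per_bit_j


# Hypothetical workload: 2 GB of weights streamed once per token, 10 tokens/s.
weight_bytes = 2e9
tokens_per_second = 10
bandwidth = weight_bytes * tokens_per_second  # bytes per second

# Hypothetical end-to-end DRAM access cost of 5 picojoules per bit.
power = memory_power_w(bandwidth, energy_per_bit_j=5e-12)

print(f"bandwidth={bandwidth / 1e9:.0f} GB/s, memory power={power:.2f} W")
```

Under these assumptions the memory path alone dissipates a noticeable fraction of a watt before any arithmetic is counted, which is why data movement shows up in the thermal budget.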

Real-World AI Features on Smartphones

The thermal implications of AI features show up in several real-world scenarios, each of which runs compute-heavy tasks continuously on the mobile processor:

- Computational Photography: Features like real-time semantic segmentation for portrait mode, multi-frame HDR processing, or AI-driven image upscaling demand intense, short bursts of NPU/GPU activity. While initial processing is fast, extended use (e.g., recording AI-enhanced video) quickly leads to frame rate drops or increased processing latency as the SoC throttles.

- Real-time Generative AI: On-device large language models (LLMs) or image generation tasks, though currently limited in complexity on mobile, represent extremely demanding workloads. A real-time generative AI task might start quickly, but within 10-30 seconds, its inference latency will noticeably increase as the NPU/GPU throttles due to thermal limits.

- Augmented Reality (AR): Continuous object recognition, scene understanding, and simultaneous localization and mapping (SLAM) in AR applications require sustained NPU and GPU utilization. Prolonged AR sessions often result in noticeable device warming and reduced application responsiveness.

- Voice Assistants: While simpler voice commands are low-power, advanced on-device natural language processing (NLP) for complex queries or continuous listening can contribute to background heat generation, potentially affecting battery longevity over time.

Limitations of On-Device AI Processing

The pursuit of high-performance on-device AI within a smartphone’s form factor introduces several critical engineering limitations. These limits are a core reason why AI features make smartphones heat up during advanced camera processing or generative AI workloads:

- Thermal Dissipation Constraints: Smartphones are passively cooled, relying on limited thermal mass and conduction/convection through the chassis. Thin designs offer minimal surface area for heat dissipation and limited internal volume for advanced cooling solutions (e.g., vapor chambers are typically very thin). This directly conflicts with the high power density of modern SoCs, forcing aggressive thermal throttling.

- Peak vs. Sustained Performance Discrepancy: The impressive peak TOPS figures are rarely sustainable. After a few seconds of intense AI workload, the SoC’s temperature rises rapidly, triggering DVFS mechanisms to reduce clock frequencies and voltages, thereby lowering performance to prevent thermal damage. This leads to inconsistent user experiences where initial responsiveness degrades.

- Memory Subsystem Thermal Bottleneck: Even with efficient NPUs, the sheer volume of data movement required by large AI models means the memory controller, PHY, and LPDDR DRAM modules themselves become significant heat sources. This memory-induced heat can independently trigger throttling, even if the compute units are not at their maximum thermal limits.

- Power Delivery Network (PDN) Challenges: The PDN must be robust enough to supply high peak currents to compute units during bursts, but sustained high power draw puts stress on voltage regulators and can lead to efficiency losses, further contributing to heat.

- Battery Life Impact: Intensive AI feature usage directly correlates with accelerated battery drain. Sustained high power draw also leads to elevated battery temperatures (>40°C), which significantly accelerates chemical degradation and reduces the battery’s overall lifespan.

- Localized Hotspots: Due to high power density, specific areas of the device (often near the SoC’s physical location or camera module) can become uncomfortably hot (>45°C surface temperature), impacting ergonomic comfort and potentially triggering OS warnings or disabling features.
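The gap between peak and sustained performance in the list above can be illustrated with a lumped thermal model: one thermal resistance to ambient and one heat capacity, stepped forward in time. This is a deliberately crude sketch. The resistance, capacity, power levels, and temperature limit are hypothetical, and a real phone has many coupled thermal nodes.

```python
def simulate(seconds, burst_w=6.0, throttled_w=1.5, limit_c=45.0,
             ambient_c=25.0, r_c_per_w=10.0, c_j_per_c=5.0, dt=1.0):
    """Euler-step a single-node thermal model with a simple throttle.

    Returns a list of (time_s, power_w, temp_c) samples.
    """
    temp = ambient_c
    throttled = False
    history = []
    for t in range(seconds):
        if temp >= limit_c:
            throttled = True            # too hot: drop to the low power level
        elif temp <= limit_c - 3.0:
            throttled = False           # cooled by 3 degrees: allow bursts again
        power = throttled_w if throttled else burst_w
        # Heat in minus heat leaking to ambient, divided by heat capacity.
        temp += dt * (power - (temp - ambient_c) / r_c_per_w) / c_j_per_c
        history.append((t, power, temp))
    return history


history = simulate(120)
first_throttle = next(t for t, p, _ in history if p == 1.5)
peak_temp = max(temp for _, _, temp in history)
print(f"first throttle at t={first_throttle}s, peak temp={peak_temp:.1f} C")
```

With these made-up parameters the device holds burst power for only a couple of dozen seconds before the limit forces it down to the lower level, and it then alternates between the two levels. That is the same pattern as fast initial responsiveness followed by degraded sustained performance.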

Why It Matters

Understanding why AI features make smartphones heat up helps engineers design more efficient mobile AI systems. The thermal implications of on-device AI are not merely an engineering footnote; they fundamentally shape the user experience, device longevity, and the practical utility of advanced AI features. The ability to perform complex AI inference locally offers unparalleled advantages in terms of low latency, enhanced privacy, and offline functionality, which are critical for the next generation of mobile computing.

However, the inherent conflict between these benefits and the physical constraints of heat dissipation means that raw computational power, as measured in peak TOPS, is often a misleading metric. The real challenge lies in designing SoCs and system software that can deliver sustained, efficient AI performance within a tight thermal budget. This requires continuous innovation in NPU architecture, memory subsystem optimization for power efficiency, advanced thermal management materials, and sophisticated software scheduling that intelligently balances performance with thermal and power envelopes. Ultimately, understanding and mitigating these thermal bottlenecks is crucial for realizing the full potential of ubiquitous, intelligent mobile devices without compromising user experience or device reliability.

Key Takeaways

- On-device AI features demand high computational density and memory bandwidth, generating substantial heat within passively cooled smartphones.

- Specialized NPUs are power-efficient per operation, but their aggregate power draw during intensive workloads quickly exceeds thermal limits, leading to rapid throttling.

- The memory subsystem, including LPDDR DRAM and its controller, is a significant power consumer and heat source due to constant, high-bandwidth data movement for AI models.

- Smartphone thermal design is inherently limited by form factor, minimal thermal mass, and passive cooling, forcing a trade-off between peak performance and sustained operation.

- These thermal constraints manifest as inconsistent performance (fast at first, then throttled), localized hotspots, and accelerated battery degradation, directly impacting user experience and device longevity.