
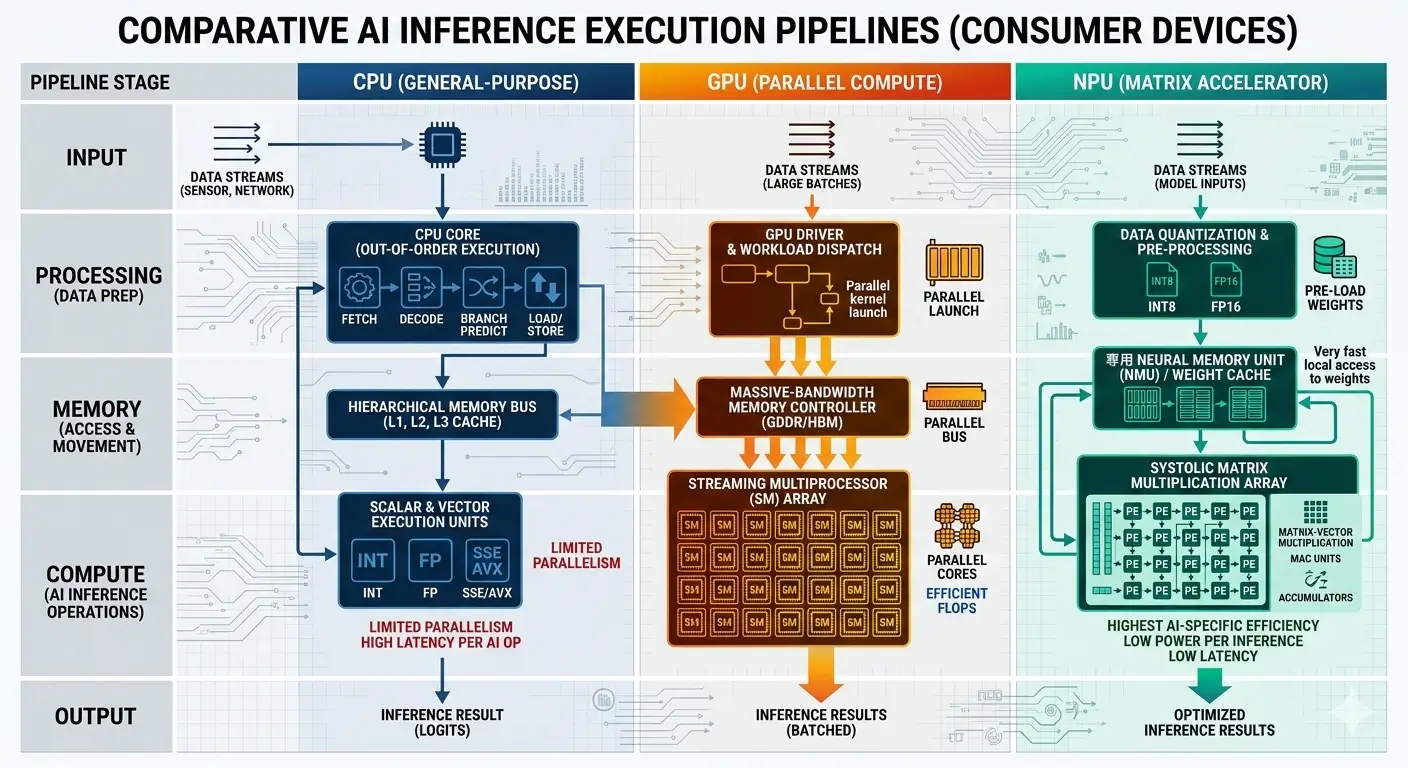

Modern laptops and smartphones handle advanced tasks efficiently using different types of processors. NPUs (Neural Processing Units) are designed for power-efficient, continuous workloads, GPUs handle high-performance parallel tasks, and CPUs provide flexibility for general system operations.

In real devices, performance comes from using all three together. This combination allows devices to balance speed, battery life, and sustained performance depending on the task.

This directly affects everyday experiences like camera quality, battery life, app performance, and device heating. Understanding how these processors work helps explain why some devices feel faster, last longer on battery, or handle demanding tasks more smoothly.

Artificial intelligence is increasingly executed directly on consumer hardware, enabling real-time processing and AI inference without constant dependence on the cloud. Comparing NPUs, GPUs, and CPUs for AI inference on consumer devices requires examining how each processor architecture handles compute scheduling, memory movement, and power constraints at the silicon level. These architectural decisions directly determine sustained performance, thermal behavior, and battery efficiency in modern smartphones, laptops, and edge AI systems.

Why This Matters for You

If you use a smartphone or laptop daily, this directly affects your experience.

- Faster photo processing and better camera quality

- Improved battery life during heavy tasks

- Smoother performance in apps and games

- Better privacy with on-device processing

Understanding how different processors work helps you choose better devices and understand real performance differences.

CPU vs GPU vs NPU: Quick Comparison Table

| Processor | Best Use Case | Power Efficiency | Performance | AI Role |
|---|---|---|---|---|
| CPU | Control logic & fallback inference | Low | Moderate | General-purpose processing |
| GPU | High-throughput AI workloads | Medium | Very High | Parallel AI compute |
| NPU | On-device AI inference | Very High | High (Sustained) | Dedicated AI acceleration |

This comparison highlights why modern consumer devices increasingly rely on NPUs for always-on AI features, while GPUs handle demanding workloads and CPUs manage system coordination. Knowing these roles helps users choose the right hardware for efficient local AI execution.

How CPU, GPU, and NPU Handle AI Inference

- CPU: Executes AI inference by breaking neural network operations down into sequences of scalar and vector instructions. Its deep pipelines and out-of-order execution units handle control flow and data dependencies, while SIMD units perform parallel operations on modest amounts of data. Data is fetched from system RAM through a complex cache hierarchy. Real-device implication: This design often leads to higher power consumption and reduced battery life during sustained AI tasks on mobile devices.

- GPU: Processes AI inference by distributing matrix multiplications and convolutions across thousands of parallel threads, which the hardware schedules in groups called warps (NVIDIA) or wavefronts (AMD). All threads in a group execute the same instruction on different data elements (SIMT). Dedicated matrix units, such as NVIDIA Tensor Cores, accelerate low-precision matrix operations directly. Discrete GPUs stream data from dedicated high-bandwidth VRAM, while integrated GPUs share system RAM with the CPU. Real-device implication: While powerful, the GPU’s high power draw can require robust cooling, which affects device form factor and fan noise in laptops.

- NPU: Operates by mapping neural network layers directly onto arrays of Multiply-Accumulate (MAC) units. It often employs fixed-function or highly configurable data paths optimized for low-precision integer arithmetic (e.g., INT8, INT4). Minimal control logic and extensive on-chip scratchpad memory enable high data locality and high power efficiency. Real-device implication: This specialized design allows for “always-on” AI features like real-time noise cancellation without significantly impacting battery life on smartphones.
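To make the NPU description concrete, the sketch below spells out the multiply-accumulate (MAC) pattern in plain Python: signed int8 inputs, a wider 32-bit accumulator, and one MAC per input pair. It illustrates the arithmetic only. Real NPUs run thousands of these MACs in parallel in fixed hardware and are programmed through vendor toolchains, not code like this. The function name `mac_matmul_int8` is hypothetical.

```python
# Value ranges for signed 8-bit inputs and a 32-bit accumulator.
INT8_MIN, INT8_MAX = -128, 127
INT32_MIN, INT32_MAX = -(2**31), 2**31 - 1


def mac_matmul_int8(a, b):
    """Multiply an M x K int8 matrix by a K x N int8 matrix.

    Each output element is built from a chain of multiply-accumulate (MAC)
    steps into a wide accumulator, the pattern an NPU MAC array runs in hardware.
    """
    m, k = len(a), len(a[0])
    if len(b) != k:
        raise ValueError("inner dimensions must match")
    n = len(b[0])
    for row in a + b:
        if any(not INT8_MIN <= v <= INT8_MAX for v in row):
            raise ValueError("inputs must fit in int8")
    out = [[0] * n for _ in range(m)]
    for i in range(m):
        for j in range(n):
            acc = 0  # models the 32-bit accumulator register
            for p in range(k):
                acc += a[i][p] * b[p][j]  # one MAC operation
            # Python ints never overflow, so check the 32-bit range explicitly.
            if not INT32_MIN <= acc <= INT32_MAX:
                raise OverflowError("accumulator exceeded int32 range")
            out[i][j] = acc
    return out


# Two products of 127 * 127 already far exceed the int8 range,
# which is why MAC arrays accumulate in 32 bits.
print(mac_matmul_int8([[127, 127]], [[127], [127]]))  # [[32258]]
```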

CPU

The CPU’s architecture is fundamentally designed for maximum flexibility and fast sequential execution, with deep pipelines, out-of-order execution, and branch prediction. For AI inference, it serves primarily as a ubiquitous fallback and handles the unpredictable control flow and scalar pre/post-processing that surround the core neural network computations. SIMD vector extensions (e.g., AVX-512, NEON) and matrix extensions such as Intel AMX are how CPUs adapt to AI, allowing some data-parallel operations without sacrificing their general-purpose nature. Developers often use frameworks such as the Intel OpenVINO toolkit to optimize AI workloads for CPU execution.
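SIMD code typically has a vector body followed by a scalar tail, and that loop shape can be sketched in Python. The lane count of 8 assumes 32-bit floats in one 256-bit AVX2 register (AVX-512 would give 16 lanes, 128-bit NEON 4). The inner `for lane` loop stands in for what would be a single vector fused multiply-add instruction on real hardware. `simd_style_dot` is a hypothetical name, and the sketch shows the structure only, not real SIMD speed.

```python
LANES = 8  # assumption: 8 float32 lanes, as in one 256-bit AVX2 register


def simd_style_dot(a, b, lanes=LANES):
    """Dot product written in the vector-body-plus-scalar-tail shape of SIMD code."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    acc = [0.0] * lanes  # one partial sum per lane, like a vector accumulator register
    full = len(a) - len(a) % lanes  # elements covered by whole vectors
    for start in range(0, full, lanes):
        # On real hardware this inner loop is a single vector FMA instruction.
        for lane in range(lanes):
            acc[lane] += a[start + lane] * b[start + lane]
    total = sum(acc)  # horizontal reduction across lanes
    for i in range(full, len(a)):  # scalar tail for the leftover elements
        total += a[i] * b[i]
    return total


print(simd_style_dot(list(range(1, 11)), [1] * 10))  # 55.0 (one 8-lane vector + 2-element tail)
```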

Hidden Tradeoffs:

- Control Logic Overhead: Significant silicon area and power go to complex control logic (branch predictors, instruction decoders, out-of-order engines) that contributes little to the highly predictable, data-parallel matrix multiplications and convolutions central to AI inference. This complexity consumes power and limits the number of parallel compute units.

- Cache Coherence Tax: Maintaining cache coherence across multiple powerful cores relies on a complex, power-intensive protocol. For AI inference, where weights are often read-only and activations follow predictable dataflow patterns, this sophisticated coherence mechanism is largely underutilized, adding unnecessary complexity and power consumption.

GPU

The GPU’s architecture is characterized by thousands of simpler, parallel cores and high memory bandwidth, designed for massive throughput of repetitive, data-parallel operations. Its origins in graphics (pixel shading, vertex transformations) directly translated to AI, where matrix multiplications and convolutions exhibit similar parallelism. The addition of Tensor Cores/Matrix Cores further specialized this existing parallel architecture, making it highly efficient for low-precision matrix math, effectively creating a “super-SIMD” engine for AI.

Hidden Tradeoffs:

- Control Flow Divergence Penalty: While SIMT (Single Instruction, Multiple Thread) is efficient for uniform operations, if threads within a warp/wavefront encounter conditional branches and take different execution paths, the GPU must serialize these paths. This leads to stalled execution cycles and reduced efficiency, particularly for custom AI operations or sparse models that break the highly regular dataflow.

- Overhead for Small Tasks: The GPU’s architecture is optimized for massive parallelism. For very small AI models or inference with a batch size of 1, the overhead of launching kernels, managing data transfers to/from VRAM, and orchestrating thousands of threads can dominate the actual computation time, making it less efficient than an NPU or even a CPU for certain latency-critical, small-scale tasks.
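A toy model can put both tradeoffs into numbers. `warp_utilization` computes the fraction of lane-cycles doing useful work when a warp hits an if/else. `fastest_unit_by_batch` compares a fixed launch overhead plus a per-item cost. The function names and all microsecond costs in `UNITS` are hypothetical, chosen only to show the crossover. They were not measured on any device.

```python
WARP_SIZE = 32  # NVIDIA warps are 32 threads; AMD wavefronts are 32 or 64


def warp_utilization(takes_branch, cost_if, cost_else):
    """Fraction of lane-cycles doing useful work for one warp running an if/else.

    If every thread takes the same path, only that path executes. If threads
    disagree, both paths execute back to back and the lanes on the inactive
    path are masked off, wasting their cycles.
    """
    n_if = sum(takes_branch)
    n_else = len(takes_branch) - n_if
    cycles = (cost_if if n_if else 0) + (cost_else if n_else else 0)
    useful = n_if * cost_if + n_else * cost_else
    return useful / (len(takes_branch) * cycles)


def fastest_unit_by_batch(batch, units):
    """Return the unit with the lowest fixed overhead + batch * per-item time."""
    return min(units, key=lambda name: units[name][0] + batch * units[name][1])


# Hypothetical costs in microseconds: (fixed launch/setup overhead, per-item time).
UNITS = {"CPU": (10, 400), "GPU": (500, 50)}

uniform = [True] * WARP_SIZE
divergent = [True] * (WARP_SIZE // 2) + [False] * (WARP_SIZE // 2)
print(warp_utilization(uniform, 10, 10))    # 1.0
print(warp_utilization(divergent, 10, 10))  # 0.5
print(fastest_unit_by_batch(1, UNITS), fastest_unit_by_batch(8, UNITS))  # CPU GPU
```

With these made-up costs, the GPU's larger fixed overhead makes the CPU faster at batch size 1. The GPU overtakes it from batch size 2 onward.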

NPU

The NPU’s architecture, built around arrays of Multiply-Accumulate (MAC) units, on-chip scratchpad memory, and minimal control logic, is driven by the need for high power efficiency and sustained performance on specific neural network operations. This dedicated accelerator is central to modern AI workloads such as on-device image processing, where it works alongside the camera’s image signal processor (ISP). It exists to offload highly repetitive, low-precision AI tasks from the CPU and GPU, enabling “always-on” AI features with minimal battery drain and thermal impact. It is a direct architectural response to the ubiquity of AI in consumer experiences.

Hidden Tradeoffs:

- Flexibility vs. Efficiency: The NPU’s high efficiency comes at the cost of architectural rigidity. It is highly optimized for known neural network primitives (e.g., convolutions, matrix multiplications, specific activation functions, low-precision integers). Novel or highly custom operations, sparse models, or non-standard data types might not map efficiently, or at all, to the NPU’s fixed-function or highly configurable data paths, forcing a fallback to less efficient CPU/GPU execution.

- Quantization Dependency and Accuracy: NPUs achieve their peak efficiency by operating on low-precision integer data (INT8, INT4). This necessitates model quantization, which is a complex process that can introduce accuracy degradation, requiring careful calibration. The NPU’s performance is intrinsically tied to the success and quality of the quantization process, adding a significant step and potential point of failure in the development workflow.
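A minimal sketch of symmetric per-tensor INT8 quantization, one common scheme, shows the accuracy risk. A single scale maps the largest absolute value to 127. Real toolchains add per-channel scales, zero points, and calibration datasets, so treat this as an illustration under those simplifying assumptions. It also shows why calibration matters: one outlier stretches the scale and inflates the error for every other value.

```python
def quantize_int8(values):
    """Symmetric per-tensor INT8 quantization; returns (integer codes, scale)."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # largest magnitude maps to 127
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate real values."""
    return [q * scale for q in codes]


def max_abs_error(values):
    """Worst-case round-trip error introduced by quantize -> dequantize."""
    codes, scale = quantize_int8(values)
    return max(abs(v - r) for v, r in zip(values, dequantize(codes, scale)))


weights = [0.01 * i for i in range(-50, 51)]  # evenly spread in [-0.5, 0.5]
print(max_abs_error(weights))           # at most half a step (0.5 / 127 / 2, about 0.002)
print(max_abs_error(weights + [50.0]))  # one outlier stretches the scale; error roughly 0.19
```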

When Should You Use CPU, GPU, or NPU for AI Inference?

Choosing the right processor depends on workload size, latency requirements, and power constraints.

Use CPU for AI Inference When:

- Running small AI models

- Performing preprocessing or postprocessing tasks

- Specialized accelerators are unavailable

- Flexibility matters more than efficiency

Use GPU for AI Inference When:

- Running large AI models or local LLMs

- Performing real-time video or image processing

- High parallel throughput is required

- Power consumption is less constrained

Use NPU for AI Inference When:

- Executing always-on AI features

- Running quantized neural networks

- Maximizing battery life on laptops or smartphones

- Sustained inference performance is required
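These rules of thumb can be condensed into a small heuristic. The `Workload` fields, the 1,000-million-parameter threshold for a "large" model, and the rule ordering are all assumptions made for illustration. Real runtimes typically also weigh operator support and measured performance rather than following a fixed checklist.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of an inference job (fields are illustrative)."""
    params_millions: float
    quantized: bool
    always_on: bool
    on_battery: bool
    needs_high_throughput: bool


def pick_processor(w, has_npu=True, has_gpu=True, large_model_millions=1000):
    """Rule-of-thumb choice that mirrors the three 'Use ... When' lists."""
    # Always-on or battery-sensitive quantized work: NPU, if one exists.
    if has_npu and w.quantized and (w.always_on or w.on_battery):
        return "NPU"
    # Large models or high parallel throughput: GPU.
    if has_gpu and (w.params_millions >= large_model_millions or w.needs_high_throughput):
        return "GPU"
    # Other quantized networks still map well onto an NPU.
    if has_npu and w.quantized:
        return "NPU"
    # Small models, unsupported work, or no accelerator: CPU fallback.
    return "CPU"


noise_suppression = Workload(5, quantized=True, always_on=True, on_battery=True,
                             needs_high_throughput=False)
local_llm = Workload(7000, quantized=False, always_on=False, on_battery=False,
                     needs_high_throughput=True)
print(pick_processor(noise_suppression))  # NPU
print(pick_processor(local_llm))          # GPU
```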

Performance Characteristics

Benchmark comparisons of CPUs, GPUs, and NPUs on consumer devices show how specialization directly affects sustained throughput and energy efficiency. The architectural differences appear in measurable AI inference metrics, summarized below as approximate ranges.

| Characteristic | CPU (e.g., Intel Core i9, AMD Ryzen) | GPU (e.g., Integrated/Discrete Laptop GPUs) | NPU (e.g., Dedicated Mobile/Laptop NPUs) |
|---|---|---|---|
| Core Architecture for AI | General-purpose, scalar/vector (SIMD) units, complex control flow. | Massively parallel, many simple cores (SIMT), specialized Tensor Cores. | Arrays of MAC units, minimal control, on-chip scratchpad, fixed-function/configurable. |
| Peak AI Performance (INT8/FP16 TOPS) | 0.1 – 10+ TOPS (with AVX-512/AMX) | 2 – 100+ TOPS (integrated to high-end discrete) | 4 – 48+ TOPS (depending on NPU generation/tier) |
| Sustained AI Performance (INT8/FP16 TOPS) | 0.05 – 5 TOPS (highly thermal limited) | 1 – 50+ TOPS (thermal limited in consumer devices) | 3 – 40+ TOPS (excellent sustained performance) |
| Typical Power Consumption (AI Inference) | 10 – 100W (high-end laptop/desktop) | 15 – 300W (integrated to high-end discrete) | 1 – 10W (highly efficient) |
| Efficiency (TOPS/W) | 0.01 – 0.1 TOPS/W | 0.1 – 1 TOPS/W | 3 – 15+ TOPS/W |
| Latency (Low-Batch Inference) | Excellent (<1ms for simple ops) due to low overhead. | Good for high-batch; higher for small batches due to launch overhead. | Excellent for target models due to low overhead, high data locality. |
| Memory Architecture Impact | System RAM, complex cache hierarchy. Bandwidth gap for large models. | High-bandwidth VRAM (discrete) or shared system RAM (integrated). VRAM capacity is a hard limit. | On-chip scratchpad, minimal external memory access for core ops. Highly optimized for dataflow. |
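The efficiency row translates directly into energy per inference, because 1 TOPS/W means 10^12 operations per joule. The sketch assumes a hypothetical model that needs 10 billion operations per inference and picks one efficiency value from inside each range in the table. It also assumes each chip actually runs at its rated efficiency, which real workloads rarely achieve.

```python
def energy_per_inference_mj(ops_per_inference, tops_per_watt):
    """Energy in millijoules, assuming the chip runs at its rated TOPS/W.

    1 TOPS/W = 1e12 operations per joule, so joules = ops / (TOPS/W * 1e12).
    """
    joules = ops_per_inference / (tops_per_watt * 1e12)
    return joules * 1e3


MODEL_OPS = 10e9  # hypothetical model: 10 billion operations per inference
for name, tops_per_watt in [("CPU", 0.05), ("GPU", 0.5), ("NPU", 10.0)]:
    print(f"{name}: {energy_per_inference_mj(MODEL_OPS, tops_per_watt):.1f} mJ")
# CPU: 200.0 mJ, GPU: 20.0 mJ, NPU: 1.0 mJ
```

The same two-orders-of-magnitude gap in TOPS/W carries straight through to energy per inference, which is what separates an always-on feature from a battery drain.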

Sustained Workload Behavior:

- CPU: Under sustained AI inference, consumer CPUs rapidly hit their thermal design power (TDP) limits. This leads to aggressive clock frequency reductions (throttling), causing a significant drop from peak performance. Performance becomes highly dependent on the device’s cooling solution, making it less reliable for continuous, demanding AI tasks.

- GPU: GPUs can sustain high AI inference throughput for longer than CPUs thanks to their specialized parallel architecture. However, thermal throttling remains significant in consumer devices under sustained high load, especially for discrete GPUs in laptops. Integrated GPUs, which share power and thermal budgets with the CPU, also slow down under prolonged heavy AI loads.

- NPU: NPUs are designed for excellent sustained performance on their target workloads. Their high power efficiency lets them run AI tasks continuously, typically without reaching thermal limits, which makes them well suited to always-on features and long-duration inference. Performance is highly predictable for supported models.
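Capping power shows why sustained numbers diverge from peak numbers. A device that can only dissipate a fixed number of watts can sustain at most efficiency × power budget, or its peak, whichever is lower. The 5 W budget and the (peak, TOPS/W) pairs are hypothetical values picked from within the table's ranges. The model also treats efficiency as constant, which real chips do not strictly follow, since their efficiency improves somewhat at lower clocks.

```python
def sustained_tops(peak_tops, tops_per_watt, power_budget_w):
    """Throughput a chip can hold when the device can only dissipate power_budget_w.

    Simplification: efficiency is treated as constant across clock speeds.
    """
    return min(peak_tops, tops_per_watt * power_budget_w)


BUDGET_W = 5.0  # hypothetical sustained budget for a thin, fanless device
CHIPS = {  # hypothetical (peak TOPS, TOPS/W) pairs within the table's ranges
    "CPU": (5.0, 0.05),
    "GPU": (20.0, 0.5),
    "NPU": (45.0, 8.0),
}
for name, (peak, eff) in CHIPS.items():
    print(name, sustained_tops(peak, eff, BUDGET_W))
```

Under these assumptions the CPU and GPU are pinned far below their peaks by the power budget, while the NPU keeps most of its peak.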

Real‑World CPU vs GPU vs NPU Use Cases on Consumer Devices

In practice, modern devices like flagship phones, Snapdragon X Elite laptops, and AI‑developer boxes distribute AI work across CPU, GPU, and NPU: CPU as the flexible orchestrator, GPU as the heavy‑duty throughput engine, and NPU as the low‑power, always‑on intelligence layer.

| Device Type / Example | CPU Role | GPU Role | NPU Role |
|---|---|---|---|
| Flagship smartphone (Galaxy S26 / Pixel 10 / iPhone 17) | Handles system control, app logic, and pre/post-processing for camera and voice tasks | Supports camera processing (HDR, stabilization), gaming features, and image enhancements | Runs continuous features like portrait blur, night mode, noise cancellation, voice assistant, and on-device search |
| AI-PC laptop (Snapdragon X Elite / Intel Core Ultra / AMD Ryzen AI) | Manages operating system, applications, and smaller workloads | Handles heavy tasks like large model processing, video enhancement, and creative workloads | Supports features like background blur, gaze detection, local processing, and real-time translation |
| Entry-level Android / IoT device | Handles basic processing and small tasks when no dedicated hardware is available | Limited or not present; mainly used for basic graphics | Usually absent or very limited, so advanced features are not supported |
| Gaming PC (NVIDIA / AMD-based) | Runs game logic, physics, and general system tasks | Handles advanced graphics, upscaling, rendering, and high-performance workloads | An optional accelerator used for local processing, creative tools, or enhanced upscaling |
| Edge device (Jetson / Movidius-type systems) | Runs the operating system, device control, and manages processing pipelines | Handles parallel tasks or preprocessing before specialized processing | Executes real-time detection, recognition, and vision tasks efficiently with low power |
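The division of labor in the table can be sketched as a greedy placement: for each pipeline stage, pick the lowest-energy processor that still meets a latency budget. The stage names, latencies, and energy costs below are hypothetical and are not measured data. Every stage gets the same budget for simplicity, and the sketch ignores the cost of moving data between processors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    unit: str
    latency_ms: float
    energy_mj: float


def place_stages(stages, budget_ms):
    """Greedy placement: per stage, the lowest-energy unit that meets the budget."""
    plan = {}
    for stage, options in stages.items():
        feasible = [o for o in options if o.latency_ms <= budget_ms]
        if not feasible:
            raise ValueError(f"no unit meets the {budget_ms} ms budget for {stage}")
        plan[stage] = min(feasible, key=lambda o: o.energy_mj).unit
    return plan


# Hypothetical per-frame costs for a video-call pipeline (not measured data).
PIPELINE = {
    "preprocess": [Option("CPU", 2.0, 2.0), Option("GPU", 1.0, 3.0)],
    "background_segmentation": [
        Option("CPU", 40.0, 80.0),
        Option("GPU", 6.0, 20.0),
        Option("NPU", 8.0, 3.0),
    ],
    "blur_and_composite": [Option("CPU", 12.0, 15.0), Option("GPU", 2.0, 5.0)],
}
print(place_stages(PIPELINE, budget_ms=10.0))
# {'preprocess': 'CPU', 'background_segmentation': 'NPU', 'blur_and_composite': 'GPU'}
```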

Real-World Applications

- CPU: Handles AI inference for basic tasks, pre/post-processing of NPU/GPU outputs, or when no specialized accelerator is available. Examples include simple image classification, natural language processing for small models, or general-purpose AI tasks where flexibility outweighs raw throughput.

- GPU: Powers more demanding AI applications such as real-time video processing, complex image generation, local execution of medium to large language models (LLMs), and advanced gaming AI features. Its high throughput makes it suitable for tasks requiring significant data parallelism.

- NPU: Enables “invisible” and “always-on” AI features with minimal battery drain, including real-time noise cancellation, gaze detection, background blur for video calls, local voice assistants, semantic search, and efficient execution of quantized LLMs on mobile devices. Examples include the NPUs in Snapdragon X Elite, Intel Core Ultra (Intel AI Boost), and AMD Ryzen AI (XDNA) laptops. It is the architectural enabler for integrating advanced intelligence efficiently into daily device usage.

Long-Term Architectural Limits

CPU

- Power Wall for Parallelism: The fundamental architectural commitment to general-purpose flexibility and sequential performance inherently limits the CPU’s ability to achieve the power efficiency of specialized accelerators for AI. Scaling its AI performance further increasingly encounters a power wall, making it unsustainable for battery-powered devices.

- Memory Bandwidth Gap: While system RAM bandwidth improves, the CPU’s memory hierarchy, optimized for diverse access patterns, struggles to efficiently feed the massive, continuous data streams required by large AI models compared to dedicated VRAM or on-chip NPU memory.
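A common back-of-the-envelope bound quantifies the bandwidth gap for large language models. At batch size 1, generating each token reads every weight from memory once, so tokens per second cannot exceed memory bandwidth divided by model size. The 7-billion-parameter model and the 100 GB/s bandwidth are hypothetical example values. The bound ignores KV-cache traffic and caching effects, so real speeds are lower.

```python
def max_decode_tokens_per_s(params_billions, bytes_per_param, bandwidth_gb_s):
    """Bandwidth-bound ceiling on batch-1 LLM decode speed, in tokens per second.

    Each generated token reads every weight from memory once, so
    tokens/s <= bandwidth / model size. KV-cache reads, activations, and
    imperfect bandwidth utilization are ignored, so real speeds are lower.
    """
    model_gb = params_billions * bytes_per_param  # 1e9 params x bytes each = GB
    return bandwidth_gb_s / model_gb


# Hypothetical 7B-parameter model on a system with 100 GB/s of memory bandwidth.
for label, bytes_per_param in [("FP16", 2.0), ("INT8", 1.0), ("INT4", 0.5)]:
    print(label, round(max_decode_tokens_per_s(7, bytes_per_param, 100), 1))
# FP16 7.1, INT8 14.3, INT4 28.6
```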

GPU

- Memory Capacity as a Hard Limit: For large AI models (e.g., LLMs), the fixed VRAM capacity of a discrete consumer GPU becomes a hard architectural constraint. Exceeding it forces costly data swapping to system RAM, severely degrading performance and increasing latency. Integrated GPUs avoid a separate VRAM limit by sharing system RAM, but they then compete with the CPU for both capacity and bandwidth.

- Power Efficiency Plateau: While Tensor Cores significantly boost efficiency, the underlying general-purpose parallel architecture of a GPU still carries some overhead compared to a purpose-built NPU. There’s an inherent limit to how power-efficient a GPU can become while retaining its flexibility for graphics and general-purpose compute.
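A quick capacity check shows when a model outgrows a discrete GPU's VRAM. The `overhead` factor of 1.2 is an assumed allowance for KV cache, activations, and runtime buffers. The real figure depends on context length and framework. The 13-billion-parameter model and the 8 GB card are hypothetical examples.

```python
def fits_in_vram(params_billions, bytes_per_param, vram_gb, overhead=1.2):
    """Return (fits, spill_gb): whether weights plus overhead fit in VRAM.

    overhead=1.2 is an assumed allowance for KV cache, activations, and runtime
    buffers; the real figure depends on context length and the framework.
    """
    needed_gb = params_billions * bytes_per_param * overhead
    spill_gb = max(0.0, needed_gb - vram_gb)  # data that would spill to system RAM
    return spill_gb == 0.0, spill_gb


# Hypothetical 13B-parameter model on a GPU with 8 GB of VRAM.
print(fits_in_vram(13, 2.0, 8))  # FP16: does not fit, roughly 23 GB would spill
print(fits_in_vram(13, 0.5, 8))  # INT4: (True, 0.0)
```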

NPU

- Architectural Obsolescence Risk: The rapid evolution of AI research constantly introduces new model architectures, activation functions, and data types. NPUs, being specialized, face a risk of architectural obsolescence if future AI paradigms diverge too much from their optimized primitives, potentially requiring costly redesigns or rendering them inefficient.

- Programming Model Fragmentation & Vendor Lock-in: The lack of a strong, unified industry standard for NPU programming models leads to vendor-specific SDKs and APIs. This fragmentation increases developer effort, complicates cross-platform deployment, and creates a risk of vendor lock-in, potentially slowing broader adoption and innovation.
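Cross-vendor runtimes soften both problems by partitioning a model across backends. ONNX Runtime, for example, takes a priority-ordered list of execution providers (such as `QNNExecutionProvider`, `OpenVINOExecutionProvider`, or `VitisAIExecutionProvider`) and falls back to `CPUExecutionProvider` for nodes a provider cannot run. The sketch below imitates that idea in simplified, per-operator form. The op names and the `SUPPORTED` coverage table are hypothetical, not any vendor's real operator list.

```python
def assign_ops(ops, supported, preference):
    """Assign each op to the first backend in `preference` that supports it."""
    plan = []
    for op in ops:
        for backend in preference:
            if op in supported[backend]:
                plan.append(backend)
                break
        else:  # no break: nothing in the preference list can run this op
            raise ValueError(f"no backend supports {op!r}")
    return plan


def count_partitions(plan):
    """Consecutive ops on the same backend form one partition; every boundary
    implies a hand-off (and often a memory copy) between processors."""
    return sum(1 for i, b in enumerate(plan) if i == 0 or b != plan[i - 1])


SUPPORTED = {  # hypothetical op coverage, not any vendor's real list
    "NPU": {"conv", "relu", "matmul", "add"},
    "CPU": {"conv", "relu", "matmul", "add", "custom_norm"},
}
model = ["conv", "relu", "custom_norm", "matmul", "add"]
plan = assign_ops(model, SUPPORTED, ["NPU", "CPU"])
print(plan)                    # ['NPU', 'NPU', 'CPU', 'NPU', 'NPU']
print(count_partitions(plan))  # 3
```

A single unsupported operator splits an otherwise NPU-friendly model into three partitions, each boundary costing a hand-off. That is the practical face of the flexibility tradeoff described earlier.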

Real-world performance differences between CPUs, GPUs, and NPUs become most visible during sustained workloads and in battery-constrained scenarios.

Why CPU vs GPU vs NPU Matters for Consumer AI

Modern devices rely on a combination of CPU, GPU, and NPU to deliver efficient and responsive performance across different workloads:

- NPU provides the best performance-per-watt for AI inference.

- GPU delivers the highest raw AI compute throughput.

- CPU offers maximum flexibility but the lowest efficiency.

Which One Should You Care About?

- If you want better battery life → NPU matters most

- If you do heavy tasks like editing or gaming → GPU matters more

- If you want flexibility and general performance → CPU is still essential

Most modern devices use all three together, but understanding their roles helps you choose the right device for your needs.

Key Takeaways

- Specialization for Efficiency: NPUs are purpose-built for AI inference, achieving superior power efficiency and sustained performance for target neural network operations through specialized MAC arrays and on-chip memory.

- Throughput vs. Flexibility: GPUs offer high throughput for data-parallel AI, leveraging their graphics heritage and Tensor Cores, but CPUs provide unmatched flexibility for general-purpose tasks and unpredictable control flow.

- Thermal and Power Constraints: CPUs are generally the least efficient for sustained AI and throttle quickly under thermal load. GPUs do better but remain thermally constrained in consumer devices. NPUs draw so little power that they generate little heat, enabling fanless designs and “always-on” AI.

- Architectural Trade-offs: NPU efficiency comes at the cost of flexibility and dependence on quantization. GPUs face VRAM capacity limits and power efficiency plateaus. CPUs are bottlenecked by general-purpose overhead and memory bandwidth for AI.

- Heterogeneous Compute is Key: Optimal AI experiences on consumer devices increasingly rely on a heterogeneous compute approach, intelligently offloading tasks to the most suitable processor (CPU for control, GPU for high-throughput general AI, NPU for power-efficient, specific NN inference).

Simple Takeaways for Users

- If your phone keeps good battery life while using camera or voice features, it is likely offloading that work to an NPU efficiently

- If your device heats up during heavy tasks like gaming or video processing, the GPU is likely working hard

- The CPU is still important for overall performance, but it is not optimized for continuous heavy workloads

Most users don’t need to choose between CPU, GPU, and NPU, because modern devices use all three together. Understanding their roles, however, helps explain why performance and battery life differ between devices.