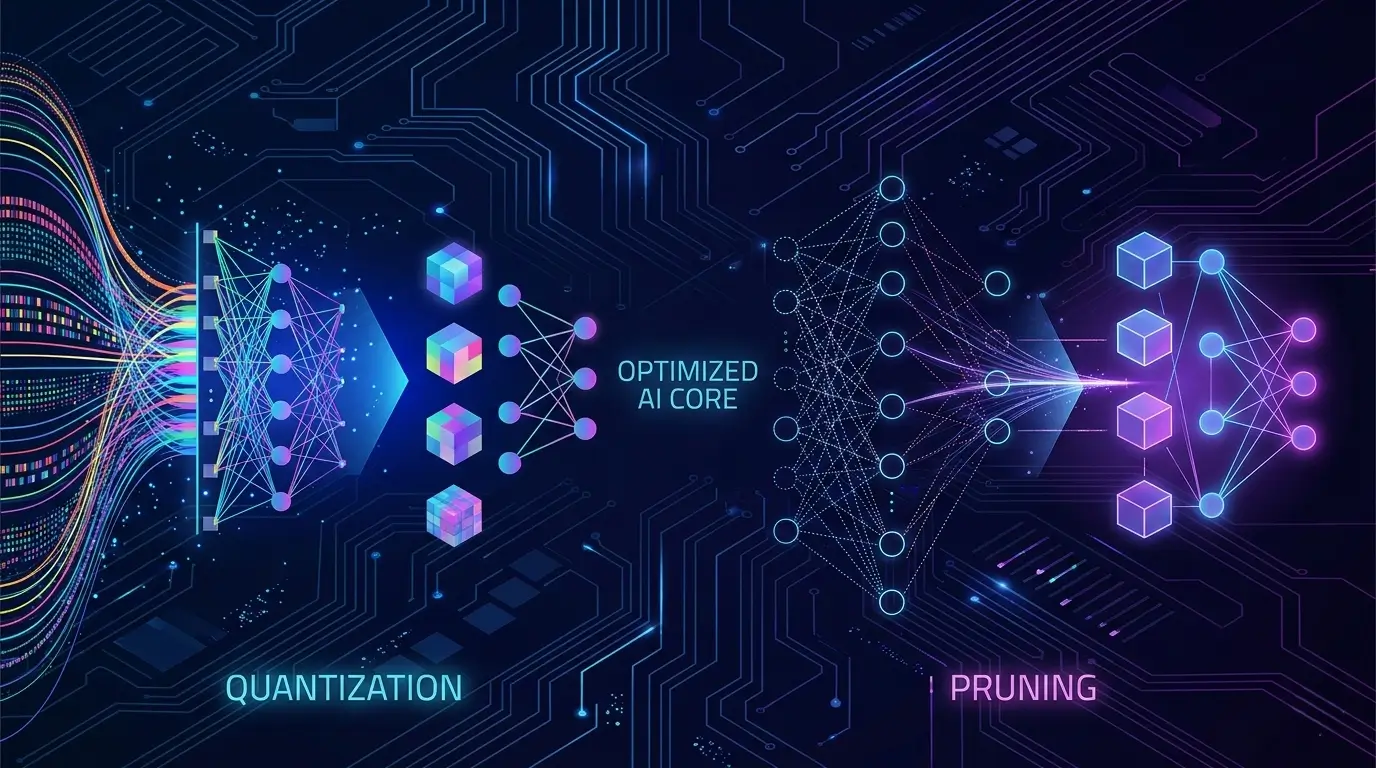

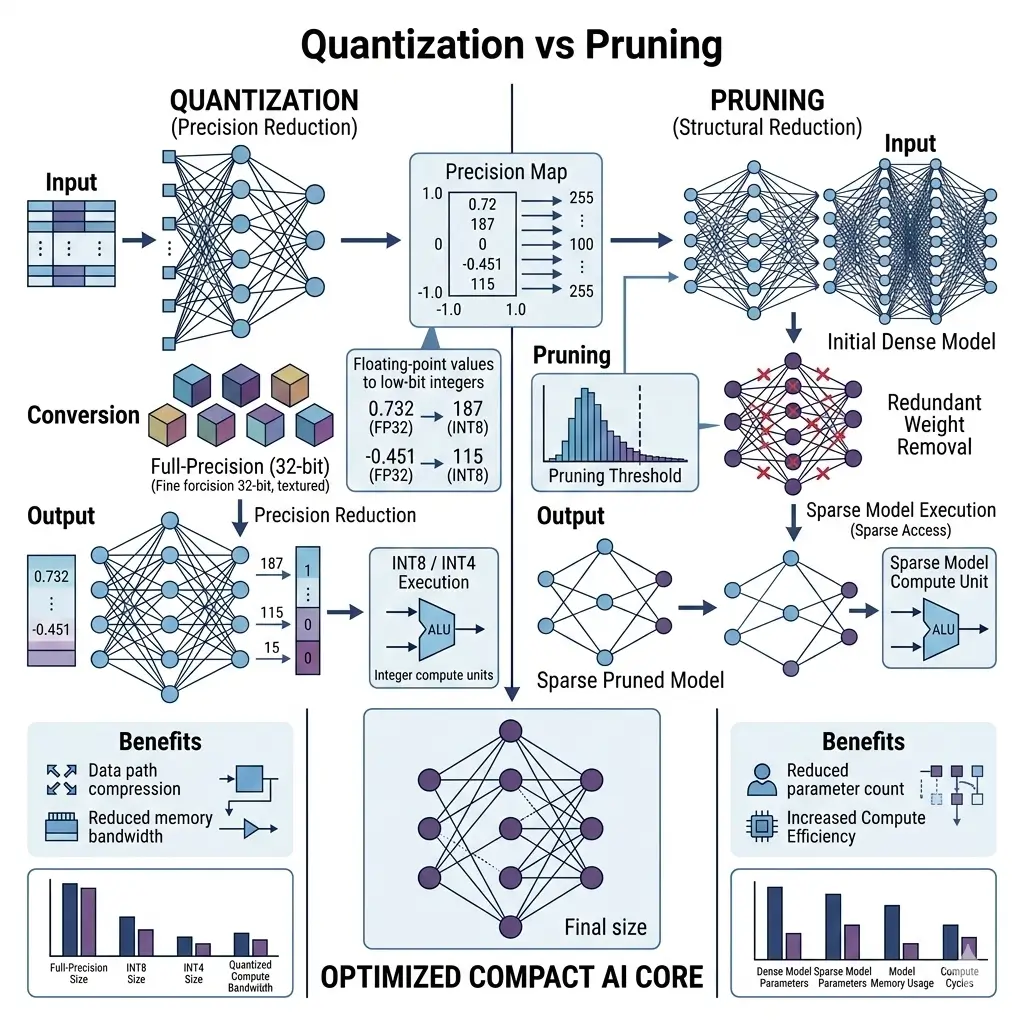

This Quantization vs Pruning comparison explains how both optimization strategies affect edge LLM deployment efficiency. For large language models (LLMs) on edge devices, quantization lowers the numerical precision of the model so that it matches the native, power-efficient integer arithmetic of edge silicon, which directly raises throughput and reduces power. Pruning, conversely, targets structural redundancy by removing unnecessary parameters, aiming to reduce overall model size and computational load. While both reduce resource consumption, quantization offers more predictable and widespread efficiency gains on current edge hardware, whereas pruning’s benefits depend heavily on the sparsity pattern it produces and on whether the hardware can exploit that pattern.

Deploying large language models on edge devices such as smartphones, embedded systems, and IoT hardware introduces strict power, thermal, and memory constraints that cloud environments do not face. Modern edge processors are optimized for efficient low-precision computation rather than large floating-point workloads.

Quantization vs Pruning becomes central in this context, as both techniques adapt LLM execution to match real device hardware capabilities. Instead of redesigning the silicon, these optimization methods reshape model computation to improve efficiency, reduce memory pressure, and enable practical on-device AI deployment.

Quantization

To optimize LLMs for edge hardware, quantization reduces the numerical precision of model weights and activations. Instead of using high-precision floating-point numbers (e.g., FP32), it converts them to lower-precision formats like FP16, INT8, or even INT4. The integer formats in particular align the LLM’s numerical representation with the native capabilities of edge hardware, improving battery efficiency by leveraging power-optimized integer ALUs.

- Mechanism: This technique maps a range of floating-point values to a smaller set of integer values, with the mapping defined by a “scale” and a “zero-point.”

- Types:

  - Post-Training Quantization (PTQ): Quantizes a fully trained model without retraining, often requiring a small calibration dataset to determine optimal scales and zero-points.

  - Quantization-Aware Training (QAT): Simulates the effects of quantization during the training process, allowing the model to adapt and mitigate accuracy degradation.

  - Mixed-Precision Quantization: Applies different precision levels to different layers of the model, based on their sensitivity to quantization.
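The scale and zero-point mapping described under Mechanism can be sketched in a few lines of plain Python. This is a minimal illustration of per-tensor affine INT8 quantization, not the implementation of any particular framework; the function names (`compute_qparams`, `quantize`, `dequantize`) and the sample weights are assumptions made for the example.

```python
from typing import List, Tuple


def compute_qparams(values: List[float], qmin: int = -128, qmax: int = 127) -> Tuple[float, int]:
    """Derive an affine scale and zero-point from the observed value range."""
    # Extend the range to include 0.0 so that real zero maps to an exact integer.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    if hi == lo:  # all values are zero; any positive scale works
        return 1.0, 0
    scale = (hi - lo) / (qmax - qmin)
    zero_point = round(qmin - lo / scale)
    return scale, max(qmin, min(qmax, zero_point))


def quantize(values: List[float], scale: float, zero_point: int,
             qmin: int = -128, qmax: int = 127) -> List[int]:
    """Map floats to integers: q = clamp(round(x / scale) + zero_point)."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Map integers back to approximate floats: x ~ (q - zero_point) * scale."""
    return [(x - zero_point) * scale for x in q]


# Hypothetical weights, chosen only to show an asymmetric range.
weights = [-0.8, -0.1, 0.0, 0.3, 1.2]
scale, zp = compute_qparams(weights)
q = quantize(weights, scale, zp)
restored = dequantize(q, scale, zp)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error)
```

For values inside the calibrated range, the round-trip error stays within about half a quantization step (`scale / 2`). QAT typically inserts this same quantize-then-dequantize round trip into the forward pass during training so the model can adapt to that error.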

Pruning

Pruning addresses model redundancy by removing connections, weights, neurons, or even entire channels that contribute little to a neural network’s output, resulting in a sparser or smaller model. The goal is to reduce the total number of operations (FLOPs) and the model’s memory footprint without significantly impacting accuracy. This reduction is crucial for fitting larger models into the constrained memory of edge devices.

- Mechanism: Pruning identifies and removes parameters or structures that contribute least to the model’s output, often by setting their values to zero.

- Types:

  - Unstructured Pruning: Removes individual weights, leading to irregular sparsity patterns. While it can achieve high sparsity, it often requires specialized hardware or software to realize performance gains.

  - Structured Pruning: Removes entire groups of parameters, such as channels, filters, or blocks. This results in regular, dense sub-networks that are more amenable to acceleration on standard hardware. Examples include channel pruning or block pruning.
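The difference between the two pruning types is easiest to see on a small weight matrix. The sketch below uses weight magnitude as the importance criterion, which is one common choice and an assumption here rather than something the article prescribes; the helper names and the sample matrix are likewise hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def prune_unstructured(w: Matrix, sparsity: float) -> Matrix:
    """Zero the smallest-magnitude individual weights; the shape is unchanged."""
    cells = sorted((abs(x), r, c) for r, row in enumerate(w) for c, x in enumerate(row))
    k = int(len(cells) * sparsity)  # number of weights to zero out
    pruned = [row[:] for row in w]
    for _, r, c in cells[:k]:
        pruned[r][c] = 0.0
    return pruned


def prune_structured_rows(w: Matrix, keep: int) -> Matrix:
    """Drop whole rows (e.g. output channels) with the smallest L1 norm.

    The result is a smaller dense matrix, not a sparse one.
    """
    ranked = sorted(range(len(w)), key=lambda r: sum(abs(x) for x in w[r]), reverse=True)
    kept = sorted(ranked[:keep])  # preserve the original row order
    return [w[r][:] for r in kept]


# Hypothetical 4x4 weight matrix.
w = [
    [0.9, -0.05, 0.4, 0.01],
    [0.02, 0.03, -0.01, 0.04],
    [-0.7, 0.6, 0.05, -0.8],
    [0.1, -0.2, 0.3, 0.02],
]

sparse_w = prune_unstructured(w, sparsity=0.5)
small_w = prune_structured_rows(w, keep=2)

zeros = sum(1 for row in sparse_w for x in row if x == 0.0)
print(zeros, len(sparse_w), len(small_w))
```

The unstructured result is still a 4x4 matrix with zeros scattered through it, so dense hardware does the same amount of work unless it can skip zeros. The structured result is a 2x4 dense matrix that any standard matrix-multiply kernel handles directly.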

Architectural Differences

In Quantization vs Pruning optimization workflows, hardware compatibility becomes the deciding factor. Understanding the core architectural differences between quantization and pruning is essential for optimizing LLM deployment on edge silicon.

Quantization is fundamentally a re-architecture of the data path. It directly addresses the numerical representation of data flowing through the compute units and memory subsystems. Edge NPUs and DSPs (e.g., Qualcomm Hexagon, Apple Neural Engine, ARM Ethos-U) are architecturally optimized for low-precision integer arithmetic, often to the exclusion of efficient high-precision floating-point operations.

By converting FP32 to INT8 or INT4, quantization allows the LLM to leverage these smaller, faster, and more power-efficient integer ALUs and memory subsystems. Without this, LLMs would be forced onto less efficient floating-point units or general-purpose cores, rendering them impractical for edge deployment. This implies a direct architectural lock-in: if an LLM cannot tolerate sufficient quantization, the device’s primary compute engine becomes inaccessible, leading to significant performance and power penalties.

Pruning, conversely, addresses the architectural redundancy within LLMs themselves. It is a design decision to reduce the effective model complexity and parameter count, ensuring the model can physically fit within limited on-device memory and reduce the total number of operations. This is particularly critical for LLMs, where billions of parameters often contain significant redundancy that can be exploited.

Modern edge inference performance heavily depends on dedicated neural accelerators, as explained in our detailed guide on NPU in Smartphones: The Powerful Engine Driving Modern Mobile AI. On general-purpose hardware, however, unstructured pruning often comes with a “sparse tax.” The irregular memory access patterns introduced by sparse data structures can lead to increased cache misses, higher memory bandwidth consumption for metadata (indices), and significant control logic overheads. This frequently results in higher actual inference latency despite fewer arithmetic operations, negating theoretical gains unless highly specialized sparse accelerators or specific, hardware-defined structured sparsity patterns (like NVIDIA’s 2:4 sparsity in Tensor Cores, which keeps two non-zero values in every group of four) are present and fully utilized by the software stack.
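To make the 2:4 pattern concrete, the sketch below enforces it on a single row of weights by keeping the two largest-magnitude values in each contiguous group of four. It covers only the pattern-selection step under a magnitude criterion (an assumption); it does not model the compressed storage format or any vendor tooling, and `prune_2_of_4` is a hypothetical helper name.

```python
from typing import List


def prune_2_of_4(row: List[float]) -> List[float]:
    """Keep the 2 largest-magnitude weights in every contiguous group of 4."""
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    out: List[float] = []
    for start in range(0, len(row), 4):
        group = row[start:start + 4]
        # Positions of the two largest magnitudes within this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        out.extend(v if i in keep else 0.0 for i, v in enumerate(group))
    return out


row = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.01]
print(prune_2_of_4(row))
# -> [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.4, 0.0]
```

Because every group has exactly two survivors, the position of each kept value can be described with a small fixed amount of metadata, which is what makes the pattern practical to accelerate in hardware compared with arbitrary sparsity.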

Latency

Latency is a critical metric for real-time edge AI, and both quantization and pruning impact it differently.

- Quantization: Significantly reduces inference latency. Smaller data types mean more data can be fetched per memory transaction, reducing memory bandwidth requirements. Crucially, dedicated hardware units like INT8 ALUs (found in NVIDIA Tensor Cores on Jetson Orin/AGX Xavier, Qualcomm Hexagon DSPs/NPUs, ARM Ethos-U cores) can perform operations significantly faster than FP32 units, often executing multiple lower-precision operations per cycle. This direct hardware acceleration translates to substantial speedups, enabling real-time responses for interactive AI applications on the device.

- Pruning:

  - Structured Pruning: Can lead to significant latency reduction. By removing entire channels or blocks, it directly reduces the number of FLOPs and the memory footprint. The remaining operations are dense and can be efficiently processed by standard hardware, leading to faster execution.

  - Unstructured Pruning: While it reduces total FLOPs, it often does not translate to proportional latency reduction on standard dense matrix multiplication hardware. Sparse matrix operations can be slower than dense operations due to irregular memory access patterns, the overhead of managing sparsity (e.g., fetching indices alongside values), and the inability of general-purpose ALUs to efficiently skip zero operations.
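The bandwidth side of the quantization bullet can be turned into a rough back-of-the-envelope estimate. The sketch below assumes token generation is memory-bound and that every weight is read once per generated token, so it gives only a lower bound; it ignores activations, the KV cache, and quantization metadata. The 3-billion-parameter model and the 50 GB/s bandwidth are hypothetical figures, not measurements of any device.

```python
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_bytes(num_params: float, dtype: str) -> float:
    """Size of the weights alone for a given storage precision."""
    return num_params * BYTES_PER_PARAM[dtype]


def min_ms_per_token(num_params: float, dtype: str, bandwidth_gb_s: float) -> float:
    """Lower bound on per-token latency if all weights stream from DRAM once."""
    seconds = weight_bytes(num_params, dtype) / (bandwidth_gb_s * 1e9)
    return seconds * 1e3


NUM_PARAMS = 3e9        # hypothetical 3B-parameter model
BANDWIDTH_GB_S = 50.0   # hypothetical DRAM bandwidth

for dtype in ("fp32", "fp16", "int8", "int4"):
    ms = min_ms_per_token(NUM_PARAMS, dtype, BANDWIDTH_GB_S)
    print(f"{dtype}: {weight_bytes(NUM_PARAMS, dtype) / 1e9:.1f} GB, >= {ms:.0f} ms/token")
```

Under these assumptions the bound drops from 240 ms per token at FP32 to 60 ms at INT8, purely because four times fewer bytes cross the memory bus. Structured pruning lowers the same bound by shrinking `num_params`.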

TOPS (Tera Operations Per Second)

Effective computational throughput, measured in TOPS, is significantly influenced by how models are optimized for edge hardware.

- Quantization: Increases effective TOPS. Hardware is often designed to support higher throughput for lower precision. As an illustration, an INT8 unit might perform 4x the operations of an FP32 unit in the same clock cycle, so a device rated for 100 TFLOPS at FP32 might reach 400 TOPS at INT8 on its dedicated hardware. This effectively multiplies the compute capability for quantized models, allowing more complex LLMs to run on less powerful edge silicon.

- Pruning:

  - Structured Pruning: Can increase effective TOPS by reducing the total number of operations required for inference. The same hardware can complete the task faster, as the workload is smaller.

  - Unstructured Pruning: While the total theoretical FLOPs decrease, the effective TOPS (actual operations per second) might not increase, or could even decrease, if the hardware cannot efficiently skip zero operations or if the overhead of sparse computation outweighs the benefit.
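The gap between theoretical operation counts and achieved throughput can be illustrated with simple arithmetic. In the sketch below, every number (peak TOPS, operation count, and especially the utilization factors) is a hypothetical value chosen to show the effect, not a measurement; `inference_time_ms` is an illustrative helper.

```python
def inference_time_ms(total_ops: float, peak_tops: float, utilization: float) -> float:
    """Time to execute `total_ops` when only a fraction of peak throughput is achieved."""
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    achieved_ops_per_s = peak_tops * 1e12 * utilization
    return total_ops / achieved_ops_per_s * 1e3


PEAK_TOPS = 10.0   # hypothetical accelerator peak
DENSE_OPS = 2e12   # hypothetical operations per inference

# Dense baseline and structured pruning both run dense kernels (same utilization).
dense_ms = inference_time_ms(DENSE_OPS, PEAK_TOPS, utilization=0.5)
structured_ms = inference_time_ms(DENSE_OPS * 0.5, PEAK_TOPS, utilization=0.5)

# Unstructured pruning halves the ops, but assume sparse overheads cut utilization.
unstructured_ms = inference_time_ms(DENSE_OPS * 0.5, PEAK_TOPS, utilization=0.2)

print(dense_ms, structured_ms, unstructured_ms)
```

With these assumed figures, structured pruning halves the runtime, while the unstructured variant ends up slower than the dense baseline even though it performs half as many operations.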

Power Consumption

Managing power consumption is paramount for battery-powered edge devices, and optimization techniques play a crucial role.

- Quantization: Quantized LLMs exhibit consistent and predictable throughput and power consumption under sustained inference. By aligning with native integer ALUs and significantly reducing memory bandwidth, the hardware operates within its designed thermal and power envelopes. This leads to:

  - Reduced Memory Access Power: Less data is moved from DRAM to compute units.

  - Reduced Compute Power: Lower precision operations typically consume less energy per operation.

  - Overall System Power Reduction: Due to fewer active compute and memory components.

  - Thermal Stability: Lower power consumption reduces heat generation, minimizing thermal throttling and allowing the device to operate at higher frequencies for longer periods. The result is stable performance over extended periods, which makes quantized models well suited to continuous, low-latency edge inference scenarios where consistent responsiveness is paramount.

- Pruning:

  - Reduced Compute Power: Fewer operations directly translate to less energy consumed by the compute units.

  - Reduced Memory Access Power: A smaller model size (if weights are truly removed and not just zeroed out) means less data needs to be loaded from memory.

  - Performance Variability (Unstructured Pruning): Unstructured pruning introduces unpredictability into sustained workload behavior. The irregular memory access patterns and increased control logic overhead associated with sparse data can lead to highly variable inference latency, increased cache misses, and potentially higher localized power consumption due to inefficient data movement and processing. This can make unstructured-pruned models more prone to thermal throttling and inconsistent performance under continuous load, which complicates their use in applications requiring strict real-time guarantees unless the sparsity is handled efficiently by specialized hardware.

Memory Footprint & Bandwidth

Optimizing memory footprint and bandwidth is critical for deploying LLMs on memory-constrained edge devices.

- Quantization: Quantization significantly reduces memory footprint and bandwidth requirements.

  - Memory Footprint: An FP32 model requires 4 bytes per parameter, while an INT8 model requires only 1 byte. This directly reduces the model’s size in memory, enabling larger LLMs to fit entirely on-chip or within limited DRAM.

  - Memory Bandwidth: Smaller data types mean more data can be fetched per memory transaction from DRAM to on-chip caches and compute units. This alleviates a common bottleneck in LLM inference, where memory access often dominates computation time. Memory efficiency remains one of the primary constraints for edge LLM deployment, which is explored further in On-Device AI Memory Limits: Performance, Thermal, and Bandwidth Explained.

- Pruning: Pruning aims to reduce the model’s memory footprint by removing parameters.

  - Model Size Reduction: Structured pruning can lead to substantial reductions in model size, as entire channels or blocks are removed, meaning less data needs to be loaded from memory.

  - Unstructured Pruning & Metadata: While unstructured pruning reduces the number of non-zero weights, the actual memory footprint might not shrink proportionally unless specialized sparse data formats (e.g., Compressed Sparse Row/Column) are used. These formats require storing not just the values but also their indices, which introduces metadata overhead. This metadata can sometimes increase the effective memory bandwidth consumption, especially if the hardware isn’t optimized for sparse data access.
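The metadata overhead of a sparse format can be counted directly. The sketch below builds a Compressed Sparse Row (CSR) representation by hand and compares its size with dense storage. The byte widths (1-byte INT8 values, 2-byte column indices, 4-byte row pointers) are assumptions for illustration; real formats and runtimes may choose differently.

```python
from typing import List, Tuple


def to_csr(dense: List[List[int]]) -> Tuple[List[int], List[int], List[int]]:
    """Convert a dense matrix to CSR: non-zero values, their columns, and row offsets."""
    values: List[int] = []
    col_indices: List[int] = []
    row_ptr: List[int] = [0]
    for row in dense:
        for col, v in enumerate(row):
            if v != 0:
                values.append(v)
                col_indices.append(col)
        row_ptr.append(len(values))  # where the next row starts in `values`
    return values, col_indices, row_ptr


def csr_bytes(values, col_indices, row_ptr,
              value_bytes: int = 1, index_bytes: int = 2, ptr_bytes: int = 4) -> int:
    """Total storage: the values plus the index metadata needed to locate them."""
    return (len(values) * value_bytes
            + len(col_indices) * index_bytes
            + len(row_ptr) * ptr_bytes)


# Hypothetical 4x8 INT8 weight matrix at 50% sparsity (every other entry is zero).
dense = [[1 if c % 2 == 0 else 0 for c in range(8)] for _ in range(4)]
values, col_indices, row_ptr = to_csr(dense)

dense_size = len(dense) * len(dense[0]) * 1  # 1 byte per INT8 weight
sparse_size = csr_bytes(values, col_indices, row_ptr)
print(dense_size, sparse_size)  # 32 vs 68 bytes under the assumed widths
```

With these widths, storing half as many values more than doubles the footprint, because each surviving 1-byte weight carries a 2-byte index. The balance improves at higher sparsity or with wider value types, which is why the benefit of unstructured pruning depends so heavily on the format and the hardware.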

Software Ecosystem

The maturity and capabilities of the software ecosystem significantly influence the practical deployability of optimized LLMs.

- Quantization: The software ecosystem for quantization is relatively mature and well-supported.

  - Tooling: Frameworks like TensorFlow Lite, PyTorch Mobile, and ONNX Runtime provide robust tools for PTQ and QAT. These tools automate the calibration process and integrate with various edge hardware backends.

  - Maturity: Quantization is a widely adopted and understood technique, with extensive documentation and community support.

  - Hidden Tradeoff – Calibration Fragility: While PTQ is appealing for its simplicity, its reliance on a representative calibration dataset introduces a hidden operational dependency and fragility. A subtle mismatch between the calibration data distribution and real-world inference data can lead to catastrophic, difficult-to-debug accuracy failures in production, effectively shifting a significant data quality and validation burden from model training to the deployment and maintenance phases.

- Pruning: The software ecosystem for pruning is more fragmented, especially for unstructured sparsity.

  - Tooling: Tools for structured pruning are available within major frameworks, often integrated with model compression libraries. However, for unstructured pruning, realizing performance gains often requires custom sparse kernels or highly optimized libraries that can efficiently handle irregular memory access patterns. On NVIDIA hardware, for instance, optimal performance often involves a specialized optimization framework such as TensorRT, which, per the NVIDIA TensorRT documentation, can handle both quantization and structured sparsity at deployment time.

  - Hardware Dependency: NVIDIA’s specific 2:4 structured sparsity support in Tensor Cores creates a vendor-specific architectural incentive for LLM developers to align their pruning strategies with this fixed pattern. This can lead to sub-optimal model architectures if the natural sparsity of an LLM doesn’t align with this fixed pattern, or it creates a dependency on a single vendor’s hardware.

  - Hidden Tradeoff – Retraining Cost: The necessity of retraining or fine-tuning a pruned model to recover accuracy represents a high hidden cost in the development lifecycle. This computational and time overhead can make iterative model development and frequent updates prohibitively expensive, especially for rapidly evolving LLMs, potentially offsetting the deployment benefits over the product’s lifetime.
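Returning to the calibration fragility point under quantization, the failure mode is easy to reproduce in miniature. The sketch below calibrates a symmetric INT8 scale from a small set of sample activations and then applies it to inputs with a wider range. The max-magnitude calibration rule, the helper names, and all sample values are assumptions for illustration; production toolchains often use more robust statistics.

```python
from typing import List


def calibrate_scale(samples: List[float], qmax: int = 127) -> float:
    """Symmetric per-tensor scale from the largest magnitude seen during calibration.

    Assumes `samples` is non-empty and contains at least one non-zero value.
    """
    return max(abs(x) for x in samples) / qmax


def fake_quantize(x: float, scale: float, qmax: int = 127) -> float:
    """Quantize to the integer grid, clamp to the representable range, and map back."""
    q = max(-qmax, min(qmax, round(x / scale)))
    return q * scale


calibration = [-0.9, -0.3, 0.1, 0.4, 1.0]   # hypothetical calibration activations
production = [-0.8, 0.2, 3.5, -4.0]         # hypothetical inputs; two exceed the range

scale = calibrate_scale(calibration)
errors = [abs(x - fake_quantize(x, scale)) for x in production]

for x, err in zip(production, errors):
    print(f"x={x:+.2f}  error={err:.4f}")
```

Values inside the calibrated range come back with errors below one quantization step, while the two out-of-range values are clipped to roughly ±1.0 and lose most of their magnitude. Nothing in the quantized model signals that this clipping is happening, which is why the mismatch tends to surface only as degraded output quality.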

Deployment Considerations

Real-world Quantization vs Pruning decisions depend heavily on available edge accelerator support. Practical deployment on edge devices requires careful consideration of how optimization techniques translate into real-world performance and reliability.

- Quantization: Quantization is the cornerstone of efficient LLM deployment on edge devices.

  - Ubiquitous Adoption: It is widely adopted because it directly leverages the architectural strengths of modern edge NPUs and DSPs. These specialized accelerators are designed from the ground up for low-precision integer arithmetic, offering the highest throughput-per-watt.

  - Predictable Performance: Quantized models offer predictable and stable performance under sustained loads, making them suitable for applications requiring consistent responsiveness and thermal stability.

  - Offline Inference: Reduced memory footprint and bandwidth requirements enable more complex LLMs to fit within the limited memory of edge devices, facilitating robust offline AI capability without constant cloud connectivity.

- Pruning: The real-world deployment of pruning is more nuanced.

  - Structured Pruning: Viable and effective when the pruning patterns align with hardware capabilities (e.g., NVIDIA’s 2:4 sparsity). It can lead to significant reductions in model size and FLOPs, improving latency and power.

  - Unstructured Pruning: Challenging on most general-purpose edge devices. Without hardware support for sparsity, it becomes a software-defined problem, where performance gains are entirely contingent on the maturity and efficiency of custom sparse kernels and memory management, rather than inherent hardware acceleration. Without dedicated sparse compute hardware, the “sparse tax” often negates the theoretical benefits, leading to inconsistent performance and potential thermal issues under sustained workloads.

Which Design Is More Efficient

Evaluating the overall efficiency of quantization and pruning requires an objective assessment of their practical benefits and limitations on current edge silicon.

For the current generation of edge AI silicon, quantization generally offers more predictable, consistent, and widespread efficiency gains. Its direct alignment with the native integer ALUs and memory subsystems of NPUs and DSPs provides a clear path to higher throughput, lower power, and better thermal stability. This makes it the primary and often indispensable optimization for deploying LLMs on the edge, ensuring reliable operation in diverse environments.

Pruning, particularly structured pruning, can be highly efficient when its patterns are explicitly supported by the hardware (e.g., NVIDIA’s 2:4 sparsity). In such cases, it can further reduce model size and FLOPs beyond what quantization alone achieves. However, unstructured pruning, despite its theoretical FLOP reduction, often struggles to translate into real-world efficiency on general-purpose edge hardware due to the overheads of sparse computation. The lack of ubiquitous, general-purpose sparse compute hardware on edge devices means that unstructured pruning remains largely a software-bound optimization with significant performance overheads, limiting its practical efficiency for most edge deployments.

Key Takeaways

- Quantization is foundational: It is the primary technique for bridging the architectural gap between high-precision LLMs and low-precision edge hardware, directly leveraging integer ALUs for superior throughput and power efficiency.

- Pruning targets redundancy: It reduces model size and FLOPs, but its real-world efficiency is highly dependent on the type of pruning (structured vs. unstructured) and the presence of specialized hardware acceleration.

- Hidden Tradeoffs are critical: Quantization faces calibration fragility, requiring careful data validation. Unstructured pruning incurs a “sparse tax” on general-purpose hardware, often increasing latency despite fewer FLOPs. Both techniques can also carry high retraining costs: pruning when fine-tuning is needed to recover accuracy, and quantization when QAT is used.

- Device implications are profound: Edge NPUs are architecturally locked into low-precision integer arithmetic, making quantization essential. The device landscape for pruning is bifurcated, with specific vendor support (e.g., NVIDIA’s 2:4 sparsity) offering benefits, while general-purpose devices struggle with unstructured sparsity.

- Sustained performance matters: Quantized models offer predictable thermal stability and consistent performance. Unstructured pruned models can exhibit performance variability and thermal throttling under continuous load.

- Future limitations exist: There is an inherent accuracy floor for extremely low-bit activation quantization in LLMs, and a persistent architectural gap in ubiquitous, general-purpose sparse compute hardware on edge devices, which will continue to limit the widespread efficiency of unstructured pruning.