Table of Contents

The Snapdragon X2 Elite NPU is a dedicated neural processing unit integrated within the Snapdragon X2 Elite System-on-Chip, engineered to deliver 80 TOPS (INT8) of peak AI inference performance. Its architecture prioritizes power-efficient, low-latency execution of highly parallel tensor operations. This is crucial for enabling responsive on-device AI features in Copilot+ PCs while offloading the CPU and GPU, all within the device’s thermal and power envelopes.

The proliferation of artificial intelligence workloads has necessitated the evolution of specialized hardware accelerators within modern computing platforms. Qualcomm’s Snapdragon X2 Elite NPU represents a significant architectural commitment to this paradigm. It serves as the cornerstone for on-device AI acceleration in the next generation of Copilot+ PCs. From an engineering perspective, the design of this neural processing unit reflects a calculated strategy. It balances raw computational throughput with the stringent power, thermal, and latency demands inherent in mobile and thin-and-light computing form factors.

What It Is

The Snapdragon X2 Elite NPU is a specialized hardware block embedded within the Snapdragon X2 Elite System-on-Chip (SoC). Its fundamental purpose is to act as a dedicated accelerator for artificial intelligence workloads, specifically neural network inference.

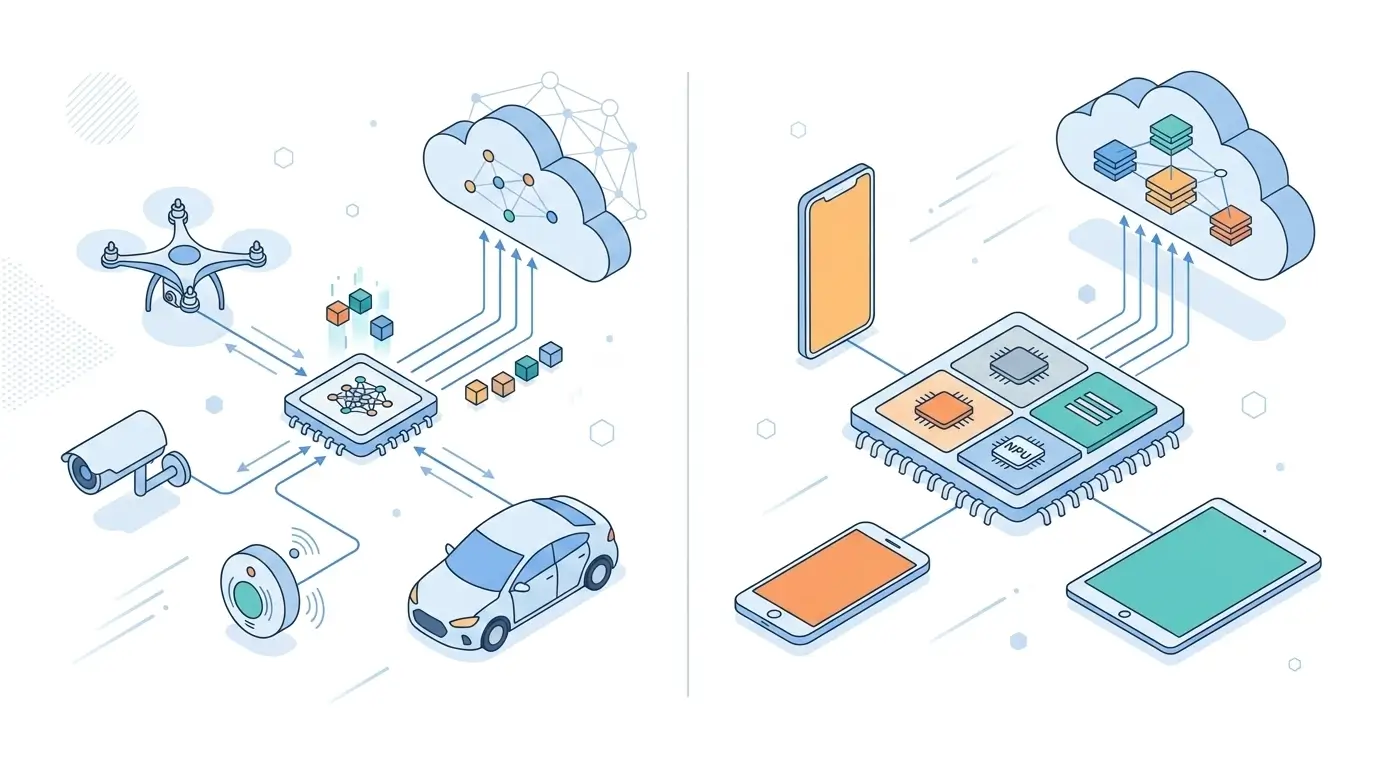

Unlike general-purpose CPUs or GPUs, this NPU is architecturally optimized for the highly parallel, repetitive tensor operations—such as matrix multiplications and convolutions—that form the computational core of most modern deep learning models. By providing 80 TOPS (Tera Operations Per Second) of peak INT8 performance, the Snapdragon X2 Elite NPU meets and exceeds the baseline requirements for AI-centric platforms like Copilot+ PCs, ensuring a robust foundation for on-device AI capabilities. This specialized hardware block is increasingly common in modern devices, extending beyond PCs to enhance capabilities in various platforms, including NPU-equipped smartphones.

How It Works

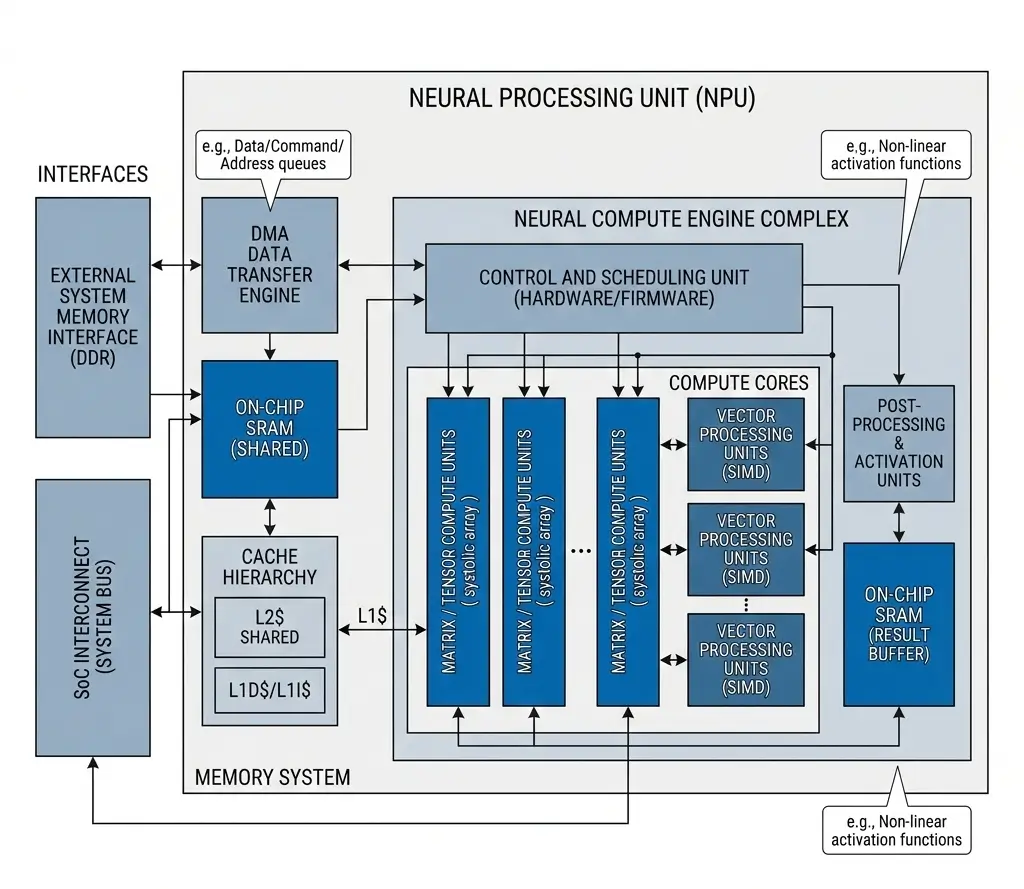

The operational efficacy of the Snapdragon X2 Elite NPU stems from its highly parallel and specialized design, focusing on efficient execution of neural network inference. At its core, the NPU leverages massive arrays of Multiply-Accumulate (MAC) units, which are the fundamental building blocks for tensor operations. These MAC arrays are specifically optimized for low-precision arithmetic, primarily INT8 and INT4, enabling a significant reduction in power consumption and memory bandwidth requirements compared to higher precision formats like FP16 or FP32.

Data flow within the Snapdragon X2 Elite NPU is meticulously managed by an internal dataflow scheduler and sophisticated Direct Memory Access (DMA) engines. These components work in concert to pre-fetch and stage data into an extensive on-chip memory hierarchy. This comprises SRAM scratchpads and dedicated NPU L1/L2 caches. This aggressive data locality strategy minimizes costly external DRAM accesses, ensuring that the MAC arrays are continuously fed with data. This maximizes utilization and reduces inference latency.

Once initiated by the host CPU, the Snapdragon X2 Elite NPU can execute complex AI graphs autonomously. This offloads the CPU from micro-managing individual tensor operations, reducing system-level overhead. Developers leverage tools and libraries, such as the Qualcomm AI Engine SDK documentation, to optimize and deploy models effectively on this hardware. The NPU’s internal scheduler also dynamically adjusts voltage and frequency (DVFS) to operate at optimal power points, balancing performance with the device’s thermal envelope.

Architecture Overview

| Specification | Description |

|---|---|

| AI Engine | Snapdragon X2 Elite NPU |

| Compute Type | Dedicated NPU |

| Precision Support | INT8 / INT4 |

| Optimization Target | AI inference |

| Integration | SoC-level accelerator |

The architecture of the Snapdragon X2 Elite NPU is a testament to specialized design for AI acceleration, integrating several key components to achieve its performance and efficiency targets:

- Tensor Compute Units: These are the heart of the NPU, comprising massively parallel arrays of MAC units. These units are highly optimized for dense matrix multiplications and convolutions, which are the dominant operations in neural network inference. Their design specifically targets low-precision integer arithmetic (INT8, INT4) for maximum throughput and power efficiency.

- On-Chip Memory Hierarchy: To mitigate memory bottlenecks, the Snapdragon X2 Elite NPU incorporates a multi-level memory system. This includes large, high-bandwidth SRAM scratchpads and dedicated L1/L2 caches. This hierarchy is critical for maintaining data locality, reducing latency, and ensuring the compute units are continuously supplied with data, thereby minimizing stalls due to external memory access.

- DMA Engines: Sophisticated DMA controllers are integrated to efficiently manage data transfers between the NPU’s on-chip memory and the shared system LPDDR5X DRAM. These engines are designed to pre-fetch data and manage memory access patterns, optimizing bandwidth utilization and reducing the CPU’s involvement in data movement.

- Control and Scheduling Units: The Snapdragon X2 Elite NPU features dedicated control logic and an internal scheduler. This unit is responsible for parsing AI graph representations, mapping operations to the NPU’s compute resources, managing data dependencies, and orchestrating the overall execution flow. It also incorporates dynamic voltage and frequency scaling (DVFS) capabilities, allowing the NPU to adapt its power consumption and performance based on workload demands and thermal constraints.

- Interconnect: The NPU is tightly integrated within the broader Snapdragon X2 Elite SoC fabric, sharing the high-bandwidth LPDDR5X memory subsystem with other components like the CPU and GPU. This necessitates efficient arbitration by the SoC’s memory controller to manage potential contention.

Performance Characteristics

The Snapdragon X2 Elite NPU exhibits distinct performance characteristics driven by its specialized architecture and design objectives:

- Peak vs. Sustained Performance: The Snapdragon X2 Elite NPU is rated for 80 TOPS (INT8) peak performance. However, for sustained, long-duration AI workloads, the effective performance typically settles into a range of 40-56 TOPS. This difference is primarily due to the dynamic interplay between the NPU’s internal DVFS and the device’s thermal management. The NPU’s scheduler will dynamically reduce clock frequencies and voltages to maintain thermal stability within the device’s envelope, prioritizing continuous operation over peak bursts.

- Power Efficiency: A critical characteristic of the Snapdragon X2 Elite NPU is its exceptional power efficiency, achieving an estimated 15-20 TOPS/W. This allows the NPU to operate within a low power envelope (typically 4-6W for sustained workloads), enabling “always-on” AI features without significantly impacting battery life in thin-and-light form factors.

- Predictable Latency: Through its extensive on-chip memory hierarchy and dedicated dataflow scheduler, the Snapdragon X2 Elite NPU is designed for predictable, low-latency inference. By minimizing external memory accesses and ensuring continuous data supply to its MAC arrays, the NPU reduces variability in execution times, which is crucial for real-time interactive AI applications.

- Throughput for Parallel Operations: The massively parallel nature of the NPU’s compute units ensures high throughput for tensor-based operations. This makes the Snapdragon X2 Elite NPU highly effective for workloads dominated by dense matrix multiplications and convolutions, which are common in many neural network architectures.

- Quantization’s Role: The Snapdragon X2 Elite NPU is heavily optimized for low-precision integer arithmetic (INT8, INT4). Models that are aggressively quantized to these formats will demonstrate significantly better sustained performance and power efficiency compared to those requiring FP16 or FP32, which may incur emulation overhead or utilize fewer, less efficient floating-point units on the NPU.

Real-World Applications

The Snapdragon X2 Elite NPU is engineered to power a new generation of AI-enhanced experiences, particularly within the Copilot+ PC ecosystem. Its capabilities translate directly into several key real-world applications:

- Copilot+ PC Features: The NPU is fundamental to enabling the core AI features of Copilot+ PCs, such as real-time live transcription, gaze correction during video calls, and advanced background effects. Its low-latency execution ensures these features are responsive and seamless.

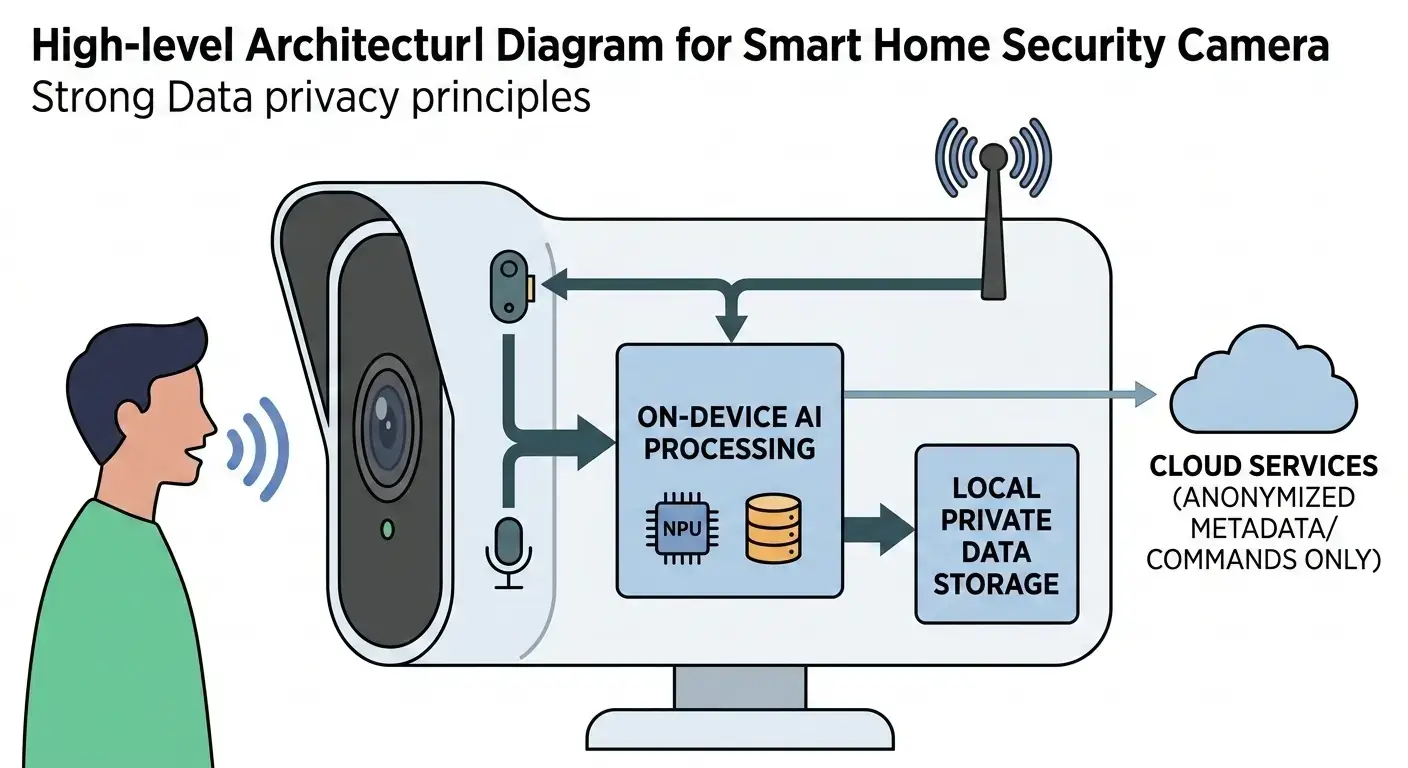

- Local Large Language Model (LLM) Inference: The high INT8 TOPS performance of the Snapdragon X2 Elite NPU allows for efficient execution of smaller, quantized LLMs directly on the device. This enables privacy-preserving AI assistants and content generation without constant cloud connectivity.

- Always-On, Background AI Processing: The power efficiency of the Snapdragon X2 Elite NPU facilitates continuous background AI tasks, such as intelligent photo organization, video analysis, or system optimization, with minimal impact on battery life or foreground application performance. The OS/system scheduler can rapidly activate the NPU from low-power states for quick inference bursts.

- Enhanced Multitasking and System Fluidity: By offloading demanding AI workloads from the CPU and GPU, the Snapdragon X2 Elite NPU ensures that these general-purpose processors remain available for their primary tasks. This leads to a smoother, more fluid overall user experience, allowing concurrent execution of AI-powered applications alongside traditional productivity or entertainment software without noticeable performance degradation.

- Edge AI for Privacy and Security: Processing AI workloads locally on the Snapdragon X2 Elite NPU enhances user privacy by keeping sensitive data on the device. It also reduces reliance on cloud services, improving security and enabling functionality in offline environments.

Limitations

Despite its advanced capabilities, the specialized nature and performance targets of the Snapdragon X2 Elite NPU introduce several inherent limitations and engineering tradeoffs that impact its runtime behavior and adaptability. These limitations include:

- Specialization vs. Future Model Adaptability: The Snapdragon X2 Elite NPU is highly optimized for current, well-understood neural network primitives (e.g., dense matrix multiplication, convolutions, specific activation functions) and low-precision inference. This specialization, while a strength for current models, inherently limits its efficiency for novel AI architectures that may rely heavily on sparse operations, dynamic graph structures, or custom layers not directly mappable to the NPU’s fixed-function units. Such models might necessitate inefficient emulation or offloading to the CPU/GPU, incurring significant performance degradation and latency.

- Thermal Throttling and Sustained Performance: While the Snapdragon X2 Elite NPU boasts 80 TOPS peak performance, its real-world sustained performance is constrained by the device’s thermal design power (TDP) and cooling solution. For long-running, demanding AI workloads, the NPU’s internal DVFS will dynamically throttle its operating frequency and voltage to stay within the thermal envelope, leading to a sustained performance ceiling significantly lower than the advertised peak.

- Software Stack Maturity and Runtime Efficiency: The effective utilization of the Snapdragon X2 Elite NPU is heavily dependent on the maturity and optimization of the software stack, including SDKs, compilers, and runtime APIs. An immature or suboptimal compiler may struggle to efficiently map complex AI graphs onto the NPU’s specialized hardware, leading to inefficient resource utilization, unnecessary data transfers, suboptimal precision mapping, or partial graph offloading, all of which can significantly reduce the NPU’s effective runtime performance and power efficiency. Access to comprehensive resources like the Qualcomm AI Engine SDK documentation is crucial for maximizing NPU utilization.

- Memory Bandwidth Contention: While the Snapdragon X2 Elite NPU leverages high internal bandwidth and LPDDR5X, it shares this external memory with other SoC components like the CPU and GPU. For very large models or large batch sizes, the NPU’s DMA engines can contend for memory access. If the CPU or GPU is also heavily active, the SoC’s memory controller arbitration scheduler might reduce the NPU’s effective memory bandwidth, leading to compute unit stalls and increased inference latency.

- Precision Flexibility: The Snapdragon X2 Elite NPU is primarily optimized for INT8 and INT4. While it may support or emulate higher precision formats like FP16 or FP32, doing so typically results in lower throughput and higher power consumption compared to its integer capabilities, limiting its efficiency for models that cannot be adequately quantized.

Why It Matters

The Snapdragon X2 Elite NPU is a pivotal component in the evolving landscape of computing. Its significance extends beyond raw performance metrics and includes:

- Enabling the Copilot+ PC Era: The Snapdragon X2 Elite NPU is a critical enabler for the Copilot+ PC platform, meeting and exceeding the stringent 40 TOPS minimum requirement. This ensures a guaranteed baseline for on-device AI capabilities, driving a new generation of AI-centric user experiences that are responsive, personal, and efficient.

- Shifting AI Workloads to the Edge: This NPU accelerates the industry trend of moving AI inference from the cloud to the edge device. By performing complex AI tasks locally, it enhances user privacy, reduces latency, decreases reliance on internet connectivity, and lowers operational costs associated with cloud computing.

- Optimizing Heterogeneous Computing: The integration of a powerful, specialized NPU within the SoC exemplifies the benefits of heterogeneous computing. By offloading AI tasks from the CPU and GPU, the Snapdragon X2 Elite NPU allows each processor to focus on its strengths, leading to superior overall system efficiency, improved multitasking, and a more fluid user experience.

- Driving Power Efficiency for Mobile AI: The high TOPS/W efficiency of the Snapdragon X2 Elite NPU is crucial for extending battery life in AI-intensive use cases. This allows for “always-on” AI features in thin-and-light laptop designs without compromising portability or thermal comfort, making AI truly pervasive in mobile computing.

- Setting a Benchmark for Integrated AI Acceleration: The Snapdragon X2 Elite NPU establishes a significant benchmark for integrated AI acceleration in consumer devices, influencing future architectural designs across the industry by demonstrating robust on-device intelligence capabilities and setting a competitive standard against other leading platforms like Intel AI Boost and AMD XDNA.

Key Takeaways

The Snapdragon X2 Elite NPU is a highly specialized, power-efficient AI accelerator designed to be the computational backbone for on-device intelligence in Copilot+ PCs. Its 80 TOPS (INT8) peak performance, coupled with an architecture optimized for low-precision tensor operations, enables responsive, always-on AI features while significantly offloading the CPU and GPU.

While its specialization and reliance on a mature software stack present engineering tradeoffs, the Snapdragon X2 Elite NPU is critical for driving the shift towards pervasive edge AI, enhancing system fluidity, and delivering extended battery life for AI-centric workloads in mobile computing.